# Technical Document Extraction: Memory Comparison Chart

## 1. Document Overview

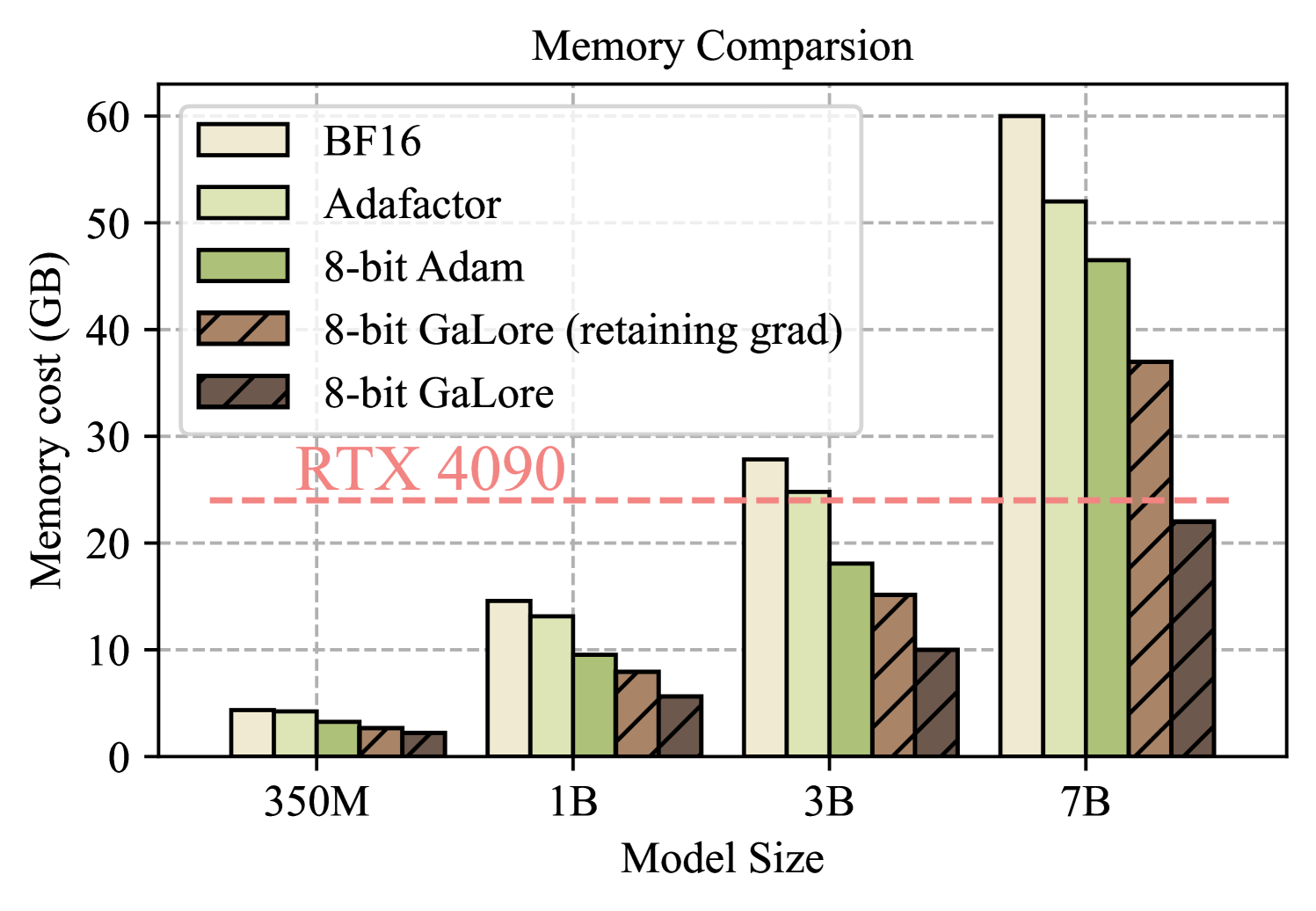

This image is a grouped bar chart titled **"Memory Comparison"** (the title in the original image is misspelled "Comparsion"). It shows the memory cost in gigabytes (GB) of five methods across four large language model (LLM) sizes.

## 2. Component Isolation

### A. Header

* **Title:** Memory Comparison

### B. Main Chart Area

* **Y-Axis Label:** Memory cost (GB)

* **Y-Axis Scale:** 0 to 60, with major gridlines every 10 units (0, 10, 20, 30, 40, 50, 60).

* **X-Axis Label:** Model Size

* **X-Axis Categories:** 350M, 1B, 3B, 7B.

* **Reference Line:** A horizontal dashed red line is positioned at approximately **24 GB**.

* **Reference Label:** "RTX 4090" (written in red text above the dashed line).

### C. Legend

The legend identifies five data series, distinguished by color and texture:

1. **BF16:** Light cream/off-white solid fill.

2. **Adafactor:** Pale lime green solid fill.

3. **8-bit Adam:** Olive green solid fill.

4. **8-bit GaLore (retaining grad):** Light brown fill with diagonal hatching.

5. **8-bit GaLore:** Dark brown fill with diagonal hatching.

---

## 3. Data Extraction and Trend Analysis

### Trend Verification

Across all model sizes (350M to 7B), the memory cost follows a consistent pattern:

* **BF16** always consumes the most memory.

* Memory cost decreases sequentially: BF16 > Adafactor > 8-bit Adam > 8-bit GaLore (retaining grad) > 8-bit GaLore.

* **8-bit GaLore** consistently represents the most memory-efficient method.

* Memory cost grows with model parameter count (Model Size) for every method. On the estimated values the growth is roughly proportional rather than exactly linear.
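
The pattern above can be checked against the estimated values from the data table in this document. The following is a minimal Python sketch, not part of the original chart: the dictionary layout and the helper names `is_strictly_decreasing` and `gb_per_billion` are illustrative assumptions, and the numbers are approximate chart readings rather than measured values.

```python
# Estimated memory cost in GB, read off the chart (approximate values).
# Column order follows the legend: BF16, Adafactor, 8-bit Adam,
# 8-bit GaLore (retaining grad), 8-bit GaLore.
MEMORY_GB = {
    "350M": [4.5, 4.2, 3.5, 2.8, 2.2],
    "1B": [14.5, 13.0, 9.5, 8.0, 5.5],
    "3B": [28.0, 25.0, 18.0, 15.0, 10.0],
    "7B": [60.0, 52.0, 46.5, 37.0, 22.0],
}

# Parameter counts in billions for each x-axis category.
MODEL_PARAMS_B = {"350M": 0.35, "1B": 1.0, "3B": 3.0, "7B": 7.0}


def is_strictly_decreasing(values):
    """Return True if each value is larger than the one that follows it."""
    return all(a > b for a, b in zip(values, values[1:]))


def gb_per_billion(size, method_index=0):
    """Memory cost per billion parameters (default column 0 is BF16)."""
    return MEMORY_GB[size][method_index] / MODEL_PARAMS_B[size]


for size, row in MEMORY_GB.items():
    ordered = is_strictly_decreasing(row)
    ratio = round(gb_per_billion(size), 1)
    print(f"{size}: ordering holds = {ordered}, BF16 GB per 1B params = {ratio}")
```

On these estimates the ordering check passes for every model size, while the BF16 cost per billion parameters ranges from about 8.6 GB (7B) to 14.5 GB (1B), which is why the scaling is best described as roughly proportional.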

### Data Table (Estimated Values in GB)

The following table reconstructs the visual data points. Values are estimated based on the Y-axis scale.

| Model Size | BF16 | Adafactor | 8-bit Adam | 8-bit GaLore (retaining grad) | 8-bit GaLore |
| :--- | :--- | :--- | :--- | :--- | :--- |
| **350M** | ~4.5 | ~4.2 | ~3.5 | ~2.8 | ~2.2 |
| **1B** | ~14.5 | ~13.0 | ~9.5 | ~8.0 | ~5.5 |
| **3B** | ~28.0 | ~25.0 | ~18.0 | ~15.0 | ~10.0 |
| **7B** | ~60.0 | ~52.0 | ~46.5 | ~37.0 | ~22.0 |

---

## 4. Key Technical Insights

* **Hardware Constraint (RTX 4090):** The red dashed line at 24 GB represents the VRAM limit of a consumer-grade NVIDIA RTX 4090 GPU.

* **3B Model Threshold:** For a 3B parameter model, BF16 (~28 GB) and Adafactor (~25 GB) exceed the 24 GB limit of an RTX 4090, the latter only marginally. 8-bit Adam (~18 GB) and both GaLore variants (~15 GB and ~10 GB) stay below this threshold.

* **7B Model Threshold:** For a 7B parameter model, four of the five methods exceed the 24 GB limit by a wide margin (roughly 37 GB to 60 GB). **8-bit GaLore** is the only method shown that brings the memory cost below the threshold (approximately 22 GB), making it the only viable option for training or fine-tuning a 7B model on a single RTX 4090 according to this data.

* **Efficiency Gain:** At the 7B scale, 8-bit GaLore reduces memory consumption by approximately 63% compared to the BF16 baseline (from about 60 GB to about 22 GB).
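
The threshold and efficiency figures in this section follow from simple arithmetic on the estimated table values. The sketch below reproduces them under stated assumptions: the function names `methods_that_fit` and `reduction_vs_baseline` are hypothetical helpers introduced for illustration, and the inputs are approximate chart readings, so the results carry the same uncertainty.

```python
# VRAM capacity of an NVIDIA RTX 4090, the reference line in the chart.
RTX_4090_VRAM_GB = 24.0

# Estimated memory cost in GB for the two largest models (approximate values).
MEMORY_GB = {
    "3B": {
        "BF16": 28.0,
        "Adafactor": 25.0,
        "8-bit Adam": 18.0,
        "8-bit GaLore (retaining grad)": 15.0,
        "8-bit GaLore": 10.0,
    },
    "7B": {
        "BF16": 60.0,
        "Adafactor": 52.0,
        "8-bit Adam": 46.5,
        "8-bit GaLore (retaining grad)": 37.0,
        "8-bit GaLore": 22.0,
    },
}


def methods_that_fit(size, limit_gb=RTX_4090_VRAM_GB):
    """List the methods whose estimated cost is below the VRAM limit."""
    return [name for name, gb in MEMORY_GB[size].items() if gb < limit_gb]


def reduction_vs_baseline(size, method, baseline="BF16"):
    """Percentage reduction in memory cost relative to the baseline method."""
    base = MEMORY_GB[size][baseline]
    return (base - MEMORY_GB[size][method]) / base * 100


for size in MEMORY_GB:
    print(size, "fits under 24 GB:", methods_that_fit(size))

saving = reduction_vs_baseline("7B", "8-bit GaLore")
print(f"7B, 8-bit GaLore vs BF16: {saving:.1f}% less memory")
```

With the estimated inputs, the 7B reduction works out to (60 - 22) / 60, or about 63%. Because the values are read from a chart, a shift of 1 GB in either bar moves this figure by one to two percentage points.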