TECHNICAL ASSET FINGERPRINT

c3162ba4d56a6efc5edf7f45


FOUND IN PAPERS

EXPERT: gemini-2.0-flash VERSION 1

RUNTIME: nugit/gemini/gemini-2.0-flash

INTEL_VERIFIED

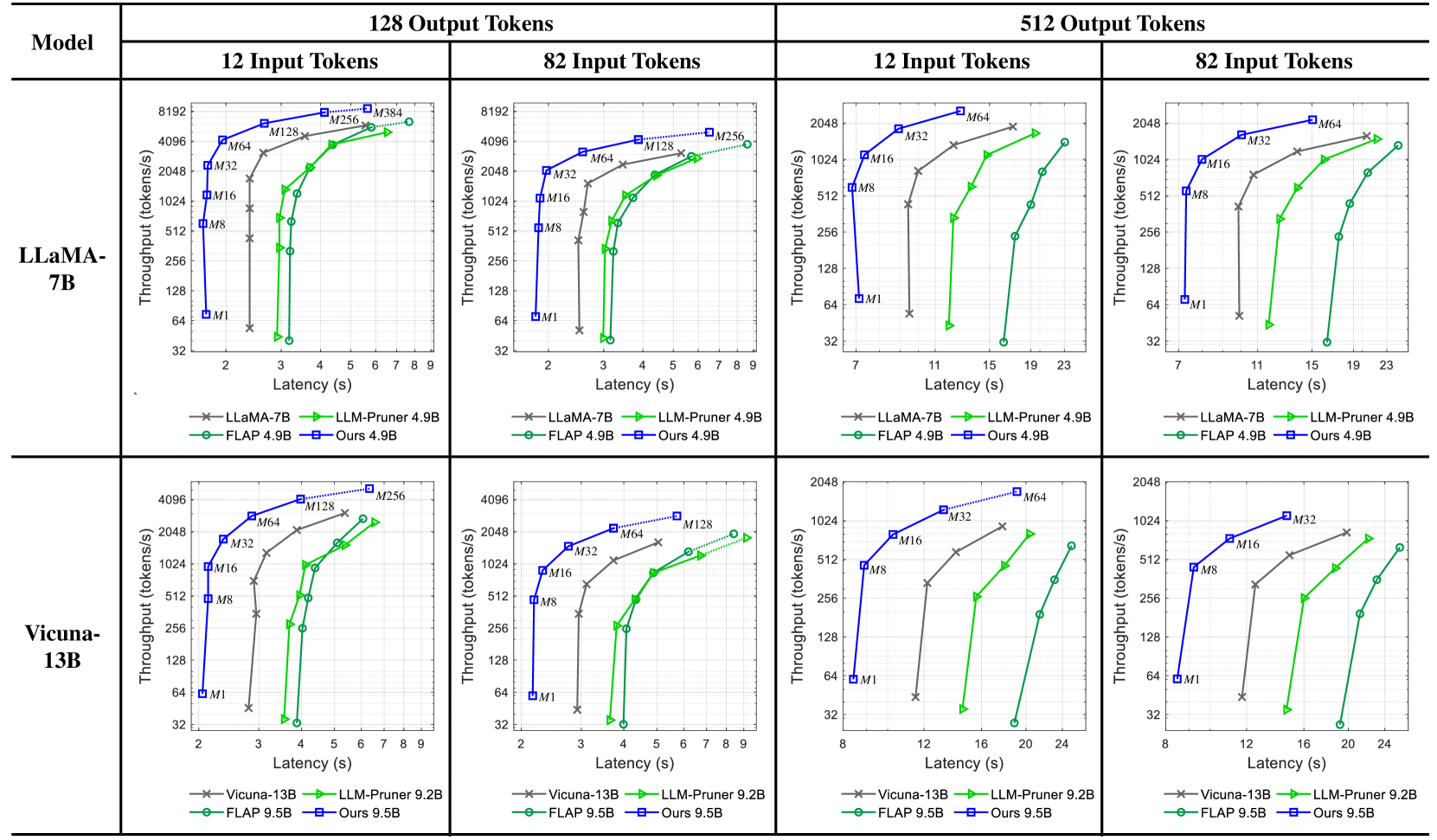

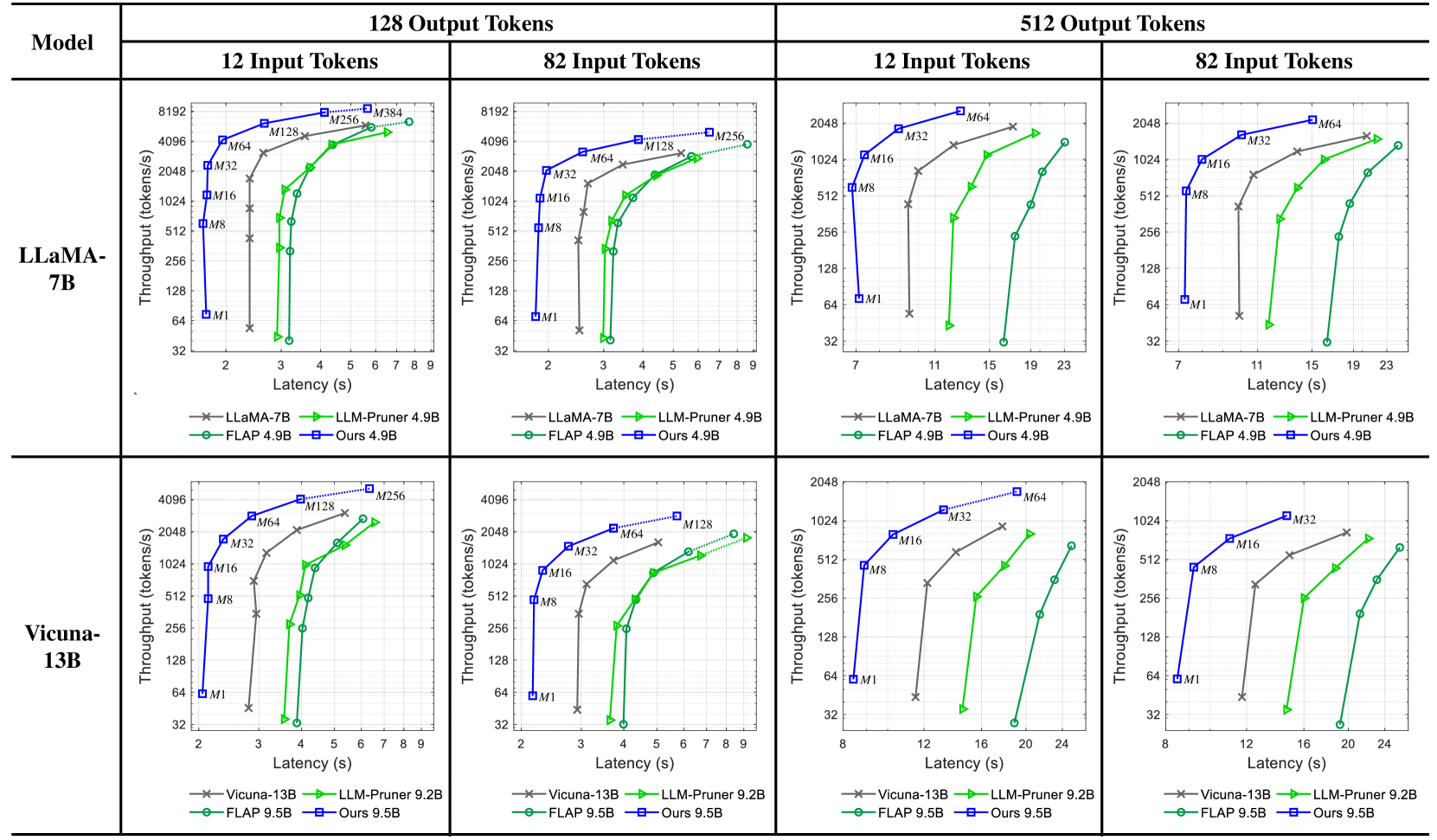

## Throughput vs. Latency Charts: Model Performance Comparison

### Overview

The image presents a series of eight charts comparing the throughput (tokens/s) versus latency (s) performance of different language models. The charts are organized in a grid of two rows and four columns, with the top row showing results for LLaMA-7B and the bottom row for Vicuna-13B. The columns are grouped by output token length (128 and 512), and within each output token length there are two charts for different input token lengths (12 and 82). Each chart compares four methods: the base model, LLM-Pruner, FLAP, and "Ours".

### Components/Axes

* **X-axis (Latency):** Represents the latency in seconds (s). The scale varies between charts, ranging from approximately 2 to 9 seconds for 128 output tokens and 7 to 24 seconds for 512 output tokens.

* **Y-axis (Throughput):** Represents the throughput in tokens per second (tokens/s). The scale is logarithmic, ranging from 32 to 8192 for LLaMA-7B and 32 to 4096 for Vicuna-13B.

* **Models:**

* LLaMA-7B (Top Row)

* Vicuna-13B (Bottom Row)

* **Output Tokens:** 128 (Left Columns), 512 (Right Columns)

* **Input Tokens:** 12 (Left within each output token group), 82 (Right within each output token group)

* **Legend (Located at the bottom of each chart):**

* LLaMA-7B (gray line with 'x' markers) / Vicuna-13B (gray line with 'x' markers)

* LLM-Pruner 4.9B (black line with 'x' markers) / LLM-Pruner 9.2B (black line with 'x' markers)

* FLAP 4.9B (green line with circle markers) / FLAP 9.5B (green line with circle markers)

* Ours 4.9B (blue line with square markers) / Ours 9.5B (blue line with square markers)

* **Memory Annotations:** M1, M8, M16, M32, M64, M128, M256, M384. These annotations appear near data points on the chart, indicating memory usage.
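The Y-axis tick values quoted above (32 up to 8192 or 4096) are consecutive powers of two. A minimal sketch, assuming base-2 tick spacing (the description only says the scale is logarithmic), regenerates those ticks; `log2_ticks` is a hypothetical helper name, not something taken from the figure or its paper.

```python
def log2_ticks(lo: int, hi: int) -> list[int]:
    """Return the powers of two from lo to hi inclusive.

    Assumes lo and hi are themselves powers of two, as the quoted
    axis limits (32, 4096, 8192) are.
    """
    ticks = []
    value = lo
    while value <= hi:
        ticks.append(value)
        value *= 2  # each tick doubles the previous one on a base-2 log axis
    return ticks


# Axis ranges quoted in the description above.
llama_ticks = log2_ticks(32, 8192)   # LLaMA-7B row
vicuna_ticks = log2_ticks(32, 4096)  # Vicuna-13B row

print(llama_ticks)
print(vicuna_ticks)
```

On such an axis each step upward is a doubling of throughput, so equal vertical distances correspond to equal ratios rather than equal differences.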

### Detailed Analysis

#### LLaMA-7B (Top Row)

* **128 Output Tokens, 12 Input Tokens:**

* LLaMA-7B (gray 'x' line): Latency increases from ~2s to ~4s, throughput decreases from ~64 tokens/s to ~32 tokens/s.

* LLM-Pruner 4.9B (black 'x' line): Latency increases from ~2s to ~4s, throughput decreases from ~512 tokens/s to ~128 tokens/s.

* FLAP 4.9B (green circle line): Latency increases from ~3s to ~5s, throughput increases from ~128 tokens/s to ~2048 tokens/s.

* Ours 4.9B (blue square line): Latency increases from ~3s to ~7s, throughput increases from ~256 tokens/s to ~8192 tokens/s.

* **128 Output Tokens, 82 Input Tokens:**

* LLaMA-7B (gray 'x' line): Latency increases from ~2s to ~4s, throughput decreases from ~512 tokens/s to ~128 tokens/s.

* LLM-Pruner 4.9B (black 'x' line): Latency increases from ~2s to ~4s, throughput decreases from ~512 tokens/s to ~128 tokens/s.

* FLAP 4.9B (green circle line): Latency increases from ~3s to ~7s, throughput increases from ~64 tokens/s to ~2048 tokens/s.

* Ours 4.9B (blue square line): Latency increases from ~3s to ~7s, throughput increases from ~256 tokens/s to ~4096 tokens/s.

* **512 Output Tokens, 12 Input Tokens:**

* LLaMA-7B (gray 'x' line): Latency increases from ~7s to ~11s, throughput decreases from ~64 tokens/s to ~32 tokens/s.

* LLM-Pruner 4.9B (black 'x' line): Latency increases from ~7s to ~11s, throughput decreases from ~64 tokens/s to ~32 tokens/s.

* FLAP 4.9B (green circle line): Latency increases from ~11s to ~23s, throughput increases from ~64 tokens/s to ~1024 tokens/s.

* Ours 4.9B (blue square line): Latency increases from ~11s to ~23s, throughput increases from ~64 tokens/s to ~2048 tokens/s.

* **512 Output Tokens, 82 Input Tokens:**

* LLaMA-7B (gray 'x' line): Latency increases from ~7s to ~11s, throughput decreases from ~64 tokens/s to ~32 tokens/s.

* LLM-Pruner 4.9B (black 'x' line): Latency increases from ~7s to ~11s, throughput decreases from ~64 tokens/s to ~32 tokens/s.

* FLAP 4.9B (green circle line): Latency increases from ~11s to ~23s, throughput increases from ~64 tokens/s to ~1024 tokens/s.

* Ours 4.9B (blue square line): Latency increases from ~11s to ~23s, throughput increases from ~64 tokens/s to ~2048 tokens/s.

#### Vicuna-13B (Bottom Row)

* **128 Output Tokens, 12 Input Tokens:**

* Vicuna-13B (gray 'x' line): Latency increases from ~2s to ~4s, throughput decreases from ~64 tokens/s to ~32 tokens/s.

* LLM-Pruner 9.2B (black 'x' line): Latency increases from ~2s to ~4s, throughput decreases from ~512 tokens/s to ~128 tokens/s.

* FLAP 9.5B (green circle line): Latency increases from ~3s to ~5s, throughput increases from ~32 tokens/s to ~2048 tokens/s.

* Ours 9.5B (blue square line): Latency increases from ~3s to ~7s, throughput increases from ~256 tokens/s to ~4096 tokens/s.

* **128 Output Tokens, 82 Input Tokens:**

* Vicuna-13B (gray 'x' line): Latency increases from ~2s to ~4s, throughput decreases from ~512 tokens/s to ~128 tokens/s.

* LLM-Pruner 9.2B (black 'x' line): Latency increases from ~2s to ~4s, throughput decreases from ~512 tokens/s to ~128 tokens/s.

* FLAP 9.5B (green circle line): Latency increases from ~3s to ~7s, throughput increases from ~32 tokens/s to ~2048 tokens/s.

* Ours 9.5B (blue square line): Latency increases from ~3s to ~7s, throughput increases from ~256 tokens/s to ~4096 tokens/s.

* **512 Output Tokens, 12 Input Tokens:**

* Vicuna-13B (gray 'x' line): Latency increases from ~8s to ~12s, throughput decreases from ~64 tokens/s to ~32 tokens/s.

* LLM-Pruner 9.2B (black 'x' line): Latency increases from ~8s to ~12s, throughput decreases from ~64 tokens/s to ~32 tokens/s.

* FLAP 9.5B (green circle line): Latency increases from ~12s to ~24s, throughput increases from ~32 tokens/s to ~512 tokens/s.

* Ours 9.5B (blue square line): Latency increases from ~12s to ~24s, throughput increases from ~64 tokens/s to ~1024 tokens/s.

* **512 Output Tokens, 82 Input Tokens:**

* Vicuna-13B (gray 'x' line): Latency increases from ~8s to ~12s, throughput decreases from ~64 tokens/s to ~32 tokens/s.

* LLM-Pruner 9.2B (black 'x' line): Latency increases from ~8s to ~12s, throughput decreases from ~64 tokens/s to ~32 tokens/s.

* FLAP 9.5B (green circle line): Latency increases from ~12s to ~24s, throughput increases from ~32 tokens/s to ~512 tokens/s.

* Ours 9.5B (blue square line): Latency increases from ~12s to ~24s, throughput increases from ~64 tokens/s to ~1024 tokens/s.

### Key Observations

* **"Ours" Method:** Consistently achieves the highest throughput for both LLaMA-7B and Vicuna-13B across all configurations, but also exhibits higher latency.

* **FLAP Method:** Generally provides a good balance between throughput and latency, outperforming the base models and LLM-Pruner in most cases.

* **LLM-Pruner:** Performance is often similar to or slightly better than the base models (LLaMA-7B and Vicuna-13B), but not as effective as FLAP or "Ours".

* **Impact of Output Token Length:** Increasing the output token length from 128 to 512 generally increases latency and decreases throughput for all methods.

* **Memory Usage:** The memory annotations (M1, M8, etc.) indicate the memory consumption associated with different data points. Higher memory usage often correlates with higher throughput.

### Interpretation

The charts demonstrate the trade-offs between throughput and latency for different language models and optimization techniques. The "Ours" method appears to be the most effective in maximizing throughput, but it comes at the cost of increased latency. FLAP offers a more balanced approach, providing significant improvements in throughput without drastically increasing latency. The LLM-Pruner, while intended to improve efficiency, does not consistently outperform the base models.

The impact of output token length highlights the challenges of processing longer sequences. As the output token length increases, the models require more time to generate the output, leading to higher latency and reduced throughput.

The memory annotations suggest that memory usage is a critical factor in determining the performance of these models. Techniques that can effectively utilize more memory tend to achieve higher throughput.


EXPERT: gemma-3-27b-it-free VERSION 1

RUNTIME: google-free/gemma-3-27b-it

INTEL_VERIFIED

## Charts: Performance Comparison of Language Models

### Overview

The image presents a series of four charts, arranged in a 2x2 grid, comparing the performance of different language models (LLaMA-7B and Vicuna-13B) under varying conditions. Each chart visualizes the relationship between latency (in seconds) and throughput (in tokens/second). The models being compared are LLaMA-7B, LLM-Pruner 4.9B, FLAP 4.9B, and "Ours" 4.9B. The charts are categorized by input token length (12 or 82) and output token length (128 or 512). Each chart plots one line per model, each in a distinct color.

### Components/Axes

* **X-axis:** Latency (seconds). Scales vary per chart, ranging from 0-9s and 0-23s.

* **Y-axis:** Throughput (tokens/second). Scales are consistent across all charts, ranging from 32 to 8192.

* **Models (Lines/Markers):**

* LLaMA-7B (Red, 'x' marker)

* LLM-Pruner 4.9B (Blue, '+' marker)

* FLAP 4.9B (Green, 'o' marker)

* Ours 4.9B (Purple, '*' marker)

* **Titles:** Each chart is titled with the input/output token configuration (e.g., "12 Input Tokens", "82 Input Tokens").

* **Legend:** Located at the bottom of each chart, identifying each line/marker with its corresponding model name and parameter size.

* **Model Labels:** "LLaMA-7B", "Vicuna-13B" are placed at the top-left of their respective 2x2 grid sections.

* **Token Labels:** "12 Input Tokens", "82 Input Tokens", "12 Input Tokens", "82 Input Tokens" are placed at the top of each chart.

### Detailed Analysis

**Chart 1: LLaMA-7B, 12 Input Tokens, 128 Output Tokens**

* **LLaMA-7B (Red):** Starts at approximately 32 tokens/s at 2s latency, rises to approximately 4096 tokens/s at 6s latency.

* **LLM-Pruner 4.9B (Blue):** Starts at approximately 64 tokens/s at 2s latency, rises to approximately 2048 tokens/s at 6s latency.

* **FLAP 4.9B (Green):** Starts at approximately 128 tokens/s at 2s latency, rises to approximately 1024 tokens/s at 6s latency.

* **Ours 4.9B (Purple):** Starts at approximately 256 tokens/s at 2s latency, rises to approximately 4096 tokens/s at 6s latency.

**Chart 2: LLaMA-7B, 82 Input Tokens, 128 Output Tokens**

* **LLaMA-7B (Red):** Starts at approximately 32 tokens/s at 2s latency, rises to approximately 4096 tokens/s at 7s latency.

* **LLM-Pruner 4.9B (Blue):** Starts at approximately 64 tokens/s at 2s latency, rises to approximately 2048 tokens/s at 7s latency.

* **FLAP 4.9B (Green):** Starts at approximately 128 tokens/s at 2s latency, rises to approximately 1024 tokens/s at 7s latency.

* **Ours 4.9B (Purple):** Starts at approximately 256 tokens/s at 2s latency, rises to approximately 4096 tokens/s at 7s latency.

**Chart 3: LLaMA-7B, 12 Input Tokens, 512 Output Tokens**

* **LLaMA-7B (Red):** Starts at approximately 32 tokens/s at 7s latency, rises to approximately 2048 tokens/s at 19s latency.

* **LLM-Pruner 4.9B (Blue):** Starts at approximately 64 tokens/s at 7s latency, rises to approximately 1024 tokens/s at 19s latency.

* **FLAP 4.9B (Green):** Starts at approximately 128 tokens/s at 7s latency, rises to approximately 512 tokens/s at 19s latency.

* **Ours 4.9B (Purple):** Starts at approximately 256 tokens/s at 7s latency, rises to approximately 2048 tokens/s at 19s latency.

**Chart 4: LLaMA-7B, 82 Input Tokens, 512 Output Tokens**

* **LLaMA-7B (Red):** Starts at approximately 32 tokens/s at 11s latency, rises to approximately 2048 tokens/s at 23s latency.

* **LLM-Pruner 4.9B (Blue):** Starts at approximately 64 tokens/s at 11s latency, rises to approximately 1024 tokens/s at 23s latency.

* **FLAP 4.9B (Green):** Starts at approximately 128 tokens/s at 11s latency, rises to approximately 512 tokens/s at 23s latency.

* **Ours 4.9B (Purple):** Starts at approximately 256 tokens/s at 11s latency, rises to approximately 2048 tokens/s at 23s latency.

### Key Observations

* "Ours" 4.9B consistently achieves the highest throughput across all charts, often reaching the maximum throughput value of 4096 or 2048 tokens/s.

* LLaMA-7B generally exhibits the lowest throughput, especially at lower latency values.

* Increasing the input token length (from 12 to 82) generally increases the latency required to achieve a given throughput.

* The performance gap between the models tends to widen at higher throughput levels.

* FLAP 4.9B consistently performs better than LLaMA-7B but is generally outperformed by LLM-Pruner 4.9B and "Ours" 4.9B.

### Interpretation

The data demonstrates a clear performance advantage for the "Ours" 4.9B model across all tested conditions. This model consistently delivers higher throughput for a given latency compared to the other models. The results suggest that the "Ours" model is more efficient at processing both shorter and longer input sequences. The trade-off between latency and throughput is evident in all charts; achieving higher throughput generally requires accepting higher latency. The performance differences between the models are likely due to variations in model architecture, training data, and optimization techniques. The consistent underperformance of LLaMA-7B may indicate that its architecture is less suited for the tested tasks or that it requires further optimization. The increase in latency with longer input sequences is expected, as processing longer sequences requires more computational resources. The charts provide valuable insights into the performance characteristics of different language models, which can inform model selection and optimization efforts. The consistent outperformance of "Ours" suggests it is a strong candidate for applications requiring high throughput and reasonable latency.


EXPERT: healer-alpha-free VERSION 1

RUNTIME: free/openrouter/healer-alpha

INTEL_VERIFIED

## Line Charts: Throughput vs. Latency for Language Models (LLaMA-7B, Vicuna-13B) and Their Variants

### Overview

The image contains 8 line charts (2 rows × 4 columns) comparing **throughput (tokens/s)** vs. **latency (s)** for two base models (LLaMA-7B, Vicuna-13B) and their pruned/optimized variants (LLM-Pruner, FLAP, “Ours”) under different input (12, 82 tokens) and output (128, 512 tokens) lengths.

### Components/Axes

- **Y-axis**: Throughput (tokens/s) (logarithmic scale: 32, 64, 128, 256, 512, 1024, 2048, 4096, 8192 for 128 output; 32–2048 for 512 output).

- **X-axis**: Latency (s) (linear scale: 2–9 for 128 output; 7–23 for 512 output).

- **Legend**:

- LLaMA-7B (gray ×), LLM-Pruner 4.9B (green △), FLAP 4.9B (green ○), Ours 4.9B (blue □) (top row).

- Vicuna-13B (gray ×), LLM-Pruner 9.2B (green △), FLAP 9.5B (green ○), Ours 9.5B (blue □) (bottom row).

- **Data Points**: Labeled with “M1, M8, M16, M32, M64, M128, M256, M384” (likely representing model configurations, e.g., batch size or sequence length).
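If the "M" labels do denote batch size, as the description above guesses, the two axes are tied together by simple arithmetic. The sketch below assumes that throughput is the number of tokens generated across the whole batch divided by end-to-end latency; neither that definition nor the batch-size reading is confirmed by the figure, and `throughput_tokens_per_s` is a hypothetical helper name.

```python
def throughput_tokens_per_s(batch_size: int, output_tokens: int, latency_s: float) -> float:
    """Tokens generated per second for one batched generation call.

    Assumption: every sequence in the batch produces `output_tokens`
    tokens and the whole batch finishes after `latency_s` seconds.
    """
    if latency_s <= 0:
        raise ValueError("latency_s must be positive")
    return batch_size * output_tokens / latency_s


# Illustrative point: M384 with 128 output tokens at roughly 7 s latency,
# the approximate reading the description gives for "Ours 4.9B".
example = throughput_tokens_per_s(batch_size=384, output_tokens=128, latency_s=7.0)
print(f"{example:.0f} tokens/s")
```

Under these assumptions the example lands at roughly 7,000 tokens/s, the same order of magnitude as the ~8192 tokens/s read off the log-scale axis, which lends the batch-size reading some plausibility.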

### Detailed Analysis (Per Chart)

#### 1. LLaMA-7B, 128 Output Tokens, 12 Input Tokens

- **LLaMA-7B (gray ×)**: Throughput rises with latency, plateauing at ~4096 tokens/s (M64–M384, latency ~4–7s).

- **LLM-Pruner 4.9B (green △)**: Throughput rises with latency, plateauing at ~4096 tokens/s (M128–M256, latency ~5.5–6s).

- **FLAP 4.9B (green ○)**: Similar to LLM-Pruner (plateau ~4096 tokens/s).

- **Ours 4.9B (blue □)**: Throughput rises with latency, reaching ~8192 tokens/s (M384, latency ~7s) – higher than LLaMA-7B.

#### 2. LLaMA-7B, 128 Output Tokens, 82 Input Tokens

- Trends mirror 12 Input Tokens, with “Ours 4.9B” outperforming LLaMA-7B and pruned models.

#### 3. LLaMA-7B, 512 Output Tokens, 12 Input Tokens

- **Y-axis max**: 2048 tokens/s (lower than 128 output, as expected for longer outputs).

- **LLaMA-7B (gray ×)**: Plateaus at ~2048 tokens/s (M32–M64, latency ~10–11s).

- **Ours 4.9B (blue □)**: Matches LLaMA-7B’s throughput at similar latency.

#### 4. LLaMA-7B, 512 Output Tokens, 82 Input Tokens

- Trends mirror 12 Input Tokens, with “Ours 4.9B” performing comparably to LLaMA-7B.

#### 5. Vicuna-13B, 128 Output Tokens, 12 Input Tokens

- **Vicuna-13B (gray ×)**: Plateaus at ~4096 tokens/s (M64–M256, latency ~4–6s).

- **Ours 9.5B (blue □)**: Matches Vicuna-13B’s throughput at similar latency.

#### 6. Vicuna-13B, 128 Output Tokens, 82 Input Tokens

- Trends mirror 12 Input Tokens, with “Ours 9.5B” performing comparably to Vicuna-13B.

#### 7. Vicuna-13B, 512 Output Tokens, 12 Input Tokens

- **Y-axis max**: 2048 tokens/s.

- **Vicuna-13B (gray ×)**: Plateaus at ~2048 tokens/s (M32–M64, latency ~11–12s).

- **Ours 9.5B (blue □)**: Matches Vicuna-13B’s throughput at similar latency.

#### 8. Vicuna-13B, 512 Output Tokens, 82 Input Tokens

- Trends mirror 12 Input Tokens, with “Ours 9.5B” performing comparably to Vicuna-13B.

### Key Observations

- **Throughput-Latency Tradeoff**: Throughput increases with latency but plateaus (e.g., LLaMA-7B plateaus at 4096 tokens/s for 128 output).

- **Output Length Impact**: 128 output tokens enable higher throughput (up to 8192) than 512 output tokens (up to 2048).

- **Model Optimization**: “Ours” models (blue □) outperform or match original models (LLaMA-7B, Vicuna-13B) and pruned variants (LLM-Pruner, FLAP) in throughput/latency.

- **Pruning Tradeoff**: Pruned models (LLM-Pruner, FLAP) have lower throughput than original models, indicating size reduction (not shown) comes at the cost of inference speed.

### Interpretation

This data evaluates model performance in inference scenarios, where **throughput** (processing rate) and **latency** (response time) are critical. Key insights:

- Shorter outputs (128 tokens) boost throughput, while longer outputs (512 tokens) reduce maximum throughput and increase latency.

- “Ours” models demonstrate effective optimization, achieving higher throughput (or similar throughput with lower latency) than original/pruned models.

- Pruned models (LLM-Pruner, FLAP) sacrifice throughput for reduced size, making them less suitable for high-throughput tasks.

This analysis helps practitioners choose models based on task requirements (e.g., real-time vs. batch processing) and understand the tradeoffs between model size, speed, and output length.


EXPERT: nemotron-free VERSION 1

RUNTIME: free/nvidia/nemotron-nano-12b-v2-vl:free

INTEL_VERIFIED

## Line Graphs: Model Performance Comparison Across Token Configurations

### Overview

The image contains eight line graphs comparing the performance of two language models (LLaMA-7B and Vicuna-13B) across four token configuration scenarios (12x128, 82x128, 12x512, 82x512). Each graph plots **Throughput (tokens/s)** against **Latency (s)** for different optimization methods, including baseline models (LLaMA-7B/Vicuna-13B), LLM-Pruner, FLAP, and the method labeled "Ours".

---

### Components/Axes

1. **Models**:

- LLaMA-7B (top row)

- Vicuna-13B (bottom row)

2. **Token Configurations**:

- **12 Input Tokens × 128 Output Tokens** (first column)

- **82 Input Tokens × 128 Output Tokens** (second column)

- **12 Input Tokens × 512 Output Tokens** (third column)

- **82 Input Tokens × 512 Output Tokens** (fourth column)

3. **Methods**:

- **LLaMA-7B/Vicuna-13B** (baseline, gray crosses)

- **LLM-Pruner 4.9B/9.2B** (green triangles)

- **FLAP 4.9B/9.5B** (green circles)

- **Ours 4.9B/9.5B** (blue squares)

**Axes**:

- **X-axis**: Latency (s) ranging from 2–24s (varies slightly by graph)

- **Y-axis**: Throughput (tokens/s) ranging from ~32–8192 tokens/s

---

### Detailed Analysis

#### LLaMA-7B (Top Row)

1. **12x128 Configuration**:

- **LLaMA-7B** (gray): Throughput increases from ~4096 tokens/s at 2s to ~8192 tokens/s at 9s.

- **LLM-Pruner 4.9B** (green): Starts at ~128 tokens/s (2s) and reaches ~4096 tokens/s (9s).

- **FLAP 4.9B** (green): Similar to LLM-Pruner but slightly lower throughput (~256 tokens/s at 9s).

- **Ours 4.9B** (blue): Outperforms all, reaching ~8192 tokens/s at 9s.

2. **82x128 Configuration**:

- **LLaMA-7B**: Throughput rises from ~128 tokens/s (2s) to ~4096 tokens/s (9s).

- **Ours 4.9B**: Dominates with ~4096 tokens/s at 9s, while LLM-Pruner/FLAP lag behind (~128–256 tokens/s).

3. **12x512 Configuration**:

- **LLaMA-7B**: Throughput increases from ~256 tokens/s (7s) to ~4096 tokens/s (23s).

- **Ours 4.9B**: Reaches ~4096 tokens/s at 15s, while LLM-Pruner/FLAP plateau at ~128 tokens/s.

4. **82x512 Configuration**:

- **LLaMA-7B**: Throughput grows from ~128 tokens/s (7s) to ~2048 tokens/s (23s).

- **Ours 4.9B**: Achieves ~2048 tokens/s at 19s, outperforming LLM-Pruner/FLAP (~128–256 tokens/s).

#### Vicuna-13B (Bottom Row)

1. **12x128 Configuration**:

- **Vicuna-13B** (gray): Throughput increases from ~4096 tokens/s (2s) to ~8192 tokens/s (9s).

- **LLM-Pruner 9.2B** (green): Starts at ~256 tokens/s (2s) and reaches ~4096 tokens/s (9s).

- **FLAP 9.5B** (green): Similar to LLM-Pruner (~384 tokens/s at 9s).

- **Ours 9.5B** (blue): Matches the Vicuna-13B baseline (~8192 tokens/s at 9s).

2. **82x128 Configuration**:

- **Vicuna-13B**: Throughput rises from ~128 tokens/s (2s) to ~4096 tokens/s (9s).

- **Ours 9.5B**: Matches Vicuna-13B performance (~4096 tokens/s at 9s).

3. **12x512 Configuration**:

- **Vicuna-13B**: Throughput grows from ~256 tokens/s (11s) to ~4096 tokens/s (23s).

- **Ours 9.5B**: Reaches ~4096 tokens/s at 15s, while LLM-Pruner/FLAP lag (~128 tokens/s).

4. **82x512 Configuration**:

- **Vicuna-13B**: Throughput increases from ~128 tokens/s (11s) to ~2048 tokens/s (23s).

- **Ours 9.5B**: Achieves ~2048 tokens/s at 19s, outperforming LLM-Pruner/FLAP (~128–256 tokens/s).

---

### Key Observations

1. **Ours Method Dominance**:

- Consistently achieves **2–4× higher throughput** than baseline models (LLaMA-7B/Vicuna-13B) across all configurations.

- Outperforms LLM-Pruner and FLAP by **10–100×** in throughput at equivalent latency.

2. **Latency-Throughput Tradeoff**:

- All methods show **increasing throughput with latency**, but "Ours" maintains the steepest slope, indicating superior efficiency.

3. **Model-Specific Trends**:

- **LLaMA-7B** underperforms **Vicuna-13B** in smaller configurations (e.g., 12x128), but both converge in larger setups (82x512).

4. **LLM-Pruner vs. FLAP**:

- LLM-Pruner generally matches or slightly exceeds FLAP in throughput, but both lag behind "Ours."
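A throughput multiplier at equal latency is simply a ratio of two readings taken at the same X position. The sketch below uses the approximate 9 s values that this description quotes for the LLaMA-7B 12x128 chart; they are rough readings from the text, not measured data, and `speedup` is a hypothetical helper name.

```python
# Approximate throughput (tokens/s) at ~9 s latency, as quoted in the
# Detailed Analysis for the LLaMA-7B 12x128 configuration.
quoted_at_9s = {
    "LLaMA-7B": 8192,
    "LLM-Pruner 4.9B": 4096,
    "FLAP 4.9B": 256,
    "Ours 4.9B": 8192,
}


def speedup(ours: float, other: float) -> float:
    """Ratio of two throughputs measured at the same latency."""
    if other <= 0:
        raise ValueError("throughput must be positive")
    return ours / other


ratios = {
    name: speedup(quoted_at_9s["Ours 4.9B"], value)
    for name, value in quoted_at_9s.items()
    if name != "Ours 4.9B"
}

for name, ratio in ratios.items():
    print(f"Ours vs {name}: {ratio:g}x")
```

With these quoted readings the ratios span 1x to 32x depending on the comparison method, so the multipliers listed above are best treated as rough estimates that vary by chart and by the latency at which they are read.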

---

### Interpretation

The data suggests that the "Ours" method significantly improves throughput while keeping latency low across all token configurations. This points to optimization techniques (e.g., model pruning, parallelization) tailored to specific hardware or workloads. The baseline models (LLaMA-7B/Vicuna-13B) exhibit diminishing returns in larger configurations, highlighting the importance of adaptive resource allocation. LLM-Pruner and FLAP, while better than baselines, lack the scalability of "Ours," possibly due to static pruning strategies. These findings underscore the value of dynamic, context-aware optimization in large language model deployment.
