
## Bar Chart: Performance Comparison of LLMs Across Medical Specialties

### Overview

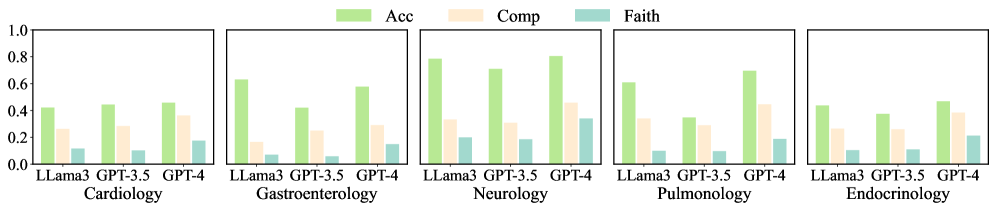

The image presents a bar chart comparing the performance of three Large Language Models (LLama3, GPT-3.5, and GPT-4) across five medical specialties: Cardiology, Gastroenterology, Neurology, Pulmonology, and Endocrinology. Performance is measured by three metrics: Accuracy (Acc), Completeness (Comp), and Faithfulness (Faith). Each specialty has a separate set of bars representing the performance of each model for each metric.

### Components/Axes

* **X-axis:** Medical Specialties (Cardiology, Gastroenterology, Neurology, Pulmonology, Endocrinology)

* **Y-axis:** Performance Score (ranging from 0.0 to 1.0)

* **Legend:**

  * Green: Accuracy (Acc)

  * Yellow: Completeness (Comp)

  * Teal: Faithfulness (Faith)

### Detailed Analysis

The chart consists of five sub-charts, one for each medical specialty. Within each sub-chart, there are three bars per model (LLama3, GPT-3.5, GPT-4), representing Accuracy, Completeness, and Faithfulness.

**Cardiology:**

* LLama3: Acc ≈ 0.44, Comp ≈ 0.32, Faith ≈ 0.08

* GPT-3.5: Acc ≈ 0.46, Comp ≈ 0.40, Faith ≈ 0.12

* GPT-4: Acc ≈ 0.62, Comp ≈ 0.48, Faith ≈ 0.20

**Gastroenterology:**

* LLama3: Acc ≈ 0.62, Comp ≈ 0.48, Faith ≈ 0.20

* GPT-3.5: Acc ≈ 0.64, Comp ≈ 0.52, Faith ≈ 0.24

* GPT-4: Acc ≈ 0.68, Comp ≈ 0.56, Faith ≈ 0.28

**Neurology:**

* LLama3: Acc ≈ 0.76, Comp ≈ 0.56, Faith ≈ 0.24

* GPT-3.5: Acc ≈ 0.82, Comp ≈ 0.60, Faith ≈ 0.32

* GPT-4: Acc ≈ 0.88, Comp ≈ 0.64, Faith ≈ 0.36

**Pulmonology:**

* LLama3: Acc ≈ 0.66, Comp ≈ 0.48, Faith ≈ 0.16

* GPT-3.5: Acc ≈ 0.70, Comp ≈ 0.52, Faith ≈ 0.20

* GPT-4: Acc ≈ 0.74, Comp ≈ 0.56, Faith ≈ 0.24

**Endocrinology:**

* LLama3: Acc ≈ 0.40, Comp ≈ 0.32, Faith ≈ 0.12

* GPT-3.5: Acc ≈ 0.44, Comp ≈ 0.36, Faith ≈ 0.16

* GPT-4: Acc ≈ 0.60, Comp ≈ 0.44, Faith ≈ 0.20

Across all five specialties, GPT-4 scores highest on all three metrics (Accuracy, Completeness, and Faithfulness). In these approximate readings, GPT-3.5 also scores above LLama3 on every metric in every specialty, by margins of 0.02 to 0.08.

### Key Observations

* GPT-4 demonstrates the highest performance across all specialties and metrics.

* Accuracy scores are higher than Completeness scores, which are in turn higher than Faithfulness scores, for every model in every specialty.

* Faithfulness scores are consistently the lowest across all categories.

* Neurology shows the highest overall performance scores for all models.

* Endocrinology and Cardiology show the lowest overall performance scores for all models.
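The observations above can be checked mechanically against the approximate values listed under Detailed Analysis. The following is a minimal sketch, not part of the original figure: the `SCORES` layout and the helper function names are illustrative assumptions, and the numbers are the estimated bar heights, so close comparisons should be treated with caution.

```python
# Approximate scores read off the chart (copied from the Detailed Analysis list).
# Assumed layout: SCORES[specialty][model] = (accuracy, completeness, faithfulness)
SCORES = {
    "Cardiology": {
        "LLama3": (0.44, 0.32, 0.08),
        "GPT-3.5": (0.46, 0.40, 0.12),
        "GPT-4": (0.62, 0.48, 0.20),
    },
    "Gastroenterology": {
        "LLama3": (0.62, 0.48, 0.20),
        "GPT-3.5": (0.64, 0.52, 0.24),
        "GPT-4": (0.68, 0.56, 0.28),
    },
    "Neurology": {
        "LLama3": (0.76, 0.56, 0.24),
        "GPT-3.5": (0.82, 0.60, 0.32),
        "GPT-4": (0.88, 0.64, 0.36),
    },
    "Pulmonology": {
        "LLama3": (0.66, 0.48, 0.16),
        "GPT-3.5": (0.70, 0.52, 0.20),
        "GPT-4": (0.74, 0.56, 0.24),
    },
    "Endocrinology": {
        "LLama3": (0.40, 0.32, 0.12),
        "GPT-3.5": (0.44, 0.36, 0.16),
        "GPT-4": (0.60, 0.44, 0.20),
    },
}


def models_strictly_ordered(scores):
    """True if LLama3 < GPT-3.5 < GPT-4 on every metric in every specialty."""
    return all(
        low < mid < high
        for by_model in scores.values()
        for low, mid, high in zip(
            by_model["LLama3"], by_model["GPT-3.5"], by_model["GPT-4"]
        )
    )


def metrics_strictly_ordered(scores):
    """True if Acc > Comp > Faith for every model in every specialty."""
    return all(
        acc > comp > faith
        for by_model in scores.values()
        for acc, comp, faith in by_model.values()
    )


def specialties_by_mean(scores):
    """Specialties sorted from highest to lowest mean of their nine scores."""
    def mean(by_model):
        values = [v for triple in by_model.values() for v in triple]
        return sum(values) / len(values)

    return sorted(scores, key=lambda name: mean(scores[name]), reverse=True)


if __name__ == "__main__":
    print(models_strictly_ordered(SCORES))
    print(metrics_strictly_ordered(SCORES))
    print(specialties_by_mean(SCORES))
```

With these values, both ordering checks hold, and the mean-score ranking places Neurology first and Cardiology and Endocrinology last. Pulmonology and Gastroenterology differ by well under 0.01 in mean score, which is smaller than the precision of reading bar heights from an image.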

### Interpretation

The data suggest that, of the three models tested, GPT-4 gives the most accurate, complete, and faithful responses in every specialty shown. The low Faithfulness scores across all models point to a possible weakness in keeping responses grounded in factual information and free of hallucinations. Performance also varies by specialty, which suggests that the complexity of each field and the data available for it may influence how well an LLM performs. The chart itself does not establish causes, but the higher scores in Neurology could reflect a larger or more structured knowledge base for that specialty, and the lower scores in Endocrinology and Cardiology might indicate a need for more specialized training data. These results are useful for understanding the strengths and weaknesses of different LLMs in medical contexts and for guiding future development efforts.