\n

## Diagram: Visual Representation of a Multi-Stage Object and Spatial QA Pipeline

### Overview

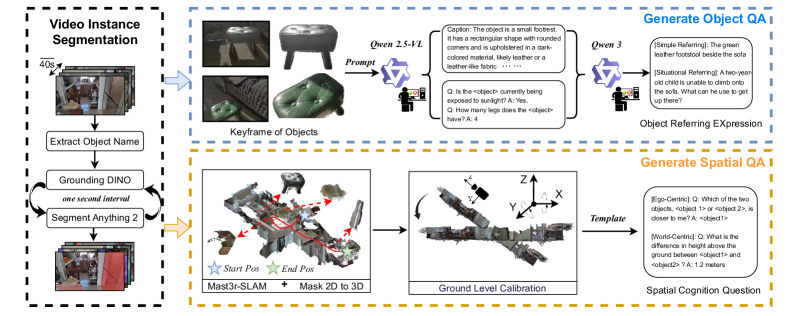

The image depicts a diagram illustrating a pipeline for generating object and spatial Question Answering (QA) systems. The pipeline takes video instance segmentation as input and progresses through keyframe extraction, object description generation, and spatial reasoning to ultimately produce answers to questions about objects and their spatial relationships. The diagram is divided into three main sections: Video Instance Segmentation, Keyframe of Objects, and Generate Spatial QA. Each section is visually separated by colored backgrounds (blue, orange, and teal respectively).

### Components/Axes

The diagram doesn't have traditional axes, but it features several key components and labels:

* **Video Instance Segmentation:** Labeled "40s" indicating a time duration. Shows a series of video frames.

* **Keyframe of Objects:** Contains images of a footstool, labeled "Owen 2.5-VL" and "Owen 3". Includes a "Prompt" box and a text description.

* **Generate Object QA:** Contains images of a child and a sofa. Includes "Object Referring Expression" label.

* **Extract Object Name:** Labeled "Grounding DINO" and "Segment Anything". Shows a series of video frames.

* **Mast3r-SLAM + Mask 2D to 3D:** A 3D point cloud representation with "Start Pos" and "End Pos" markers.

* **Ground Level Calibration:** A 3D representation of the footstool with X, Y, and Z axes labeled.

* **Template:** Labeled "Template"

* **Spatial Cognition Question:** Labeled "Spatial Cognition Question"

* **QA Examples:** Several question-answer pairs are provided within the "Generate Object QA" and "Generate Spatial QA" sections.

### Detailed Analysis or Content Details

**Video Instance Segmentation:**

This section shows a sequence of video frames, suggesting the input to the pipeline is a video stream. The "40s" label indicates the video duration being processed.

**Keyframe of Objects:**

* Two images of a footstool are shown, labeled "Owen 2.5-VL" and "Owen 3".

* A "Prompt" box is present, likely representing the input to a language model.

* The text description associated with "Owen 2.5-VL" reads: "The object is a small footstool. It has a rectangular shape with rounded corners and is upholstered in a dark colored material. Likely leather or leather-like fabric."

* Two QA examples are provided:

* Q: "Is the <object> currently being exposed to sunlight?" A: "Yes"

* Q: "How many legs does the <object> have?" A: "4"

**Extract Object Name:**

* Labeled "Grounding DINO" and "Segment Anything".

* Shows a series of video frames.

* Labeled "one second interval"

**Mast3r-SLAM + Mask 2D to 3D:**

* A 3D point cloud representation of a scene is shown, with red lines indicating the trajectory of a camera or sensor.

* "Start Pos" and "End Pos" markers are visible, indicating the beginning and end points of the trajectory.

**Ground Level Calibration:**

* A 3D representation of the footstool is shown, with X, Y, and Z axes labeled. This suggests a calibration process to align the 3D model with the real world.

**Generate Spatial QA:**

* Two QA examples are provided:

* (Egocentric): Q: "Which of the two objects, object1 or object2, is closer to me?" A: "<object1>"

* (World-Centric): Q: "What is the difference in height above the ground between <object1> and <object2>?" A: "1.2 meters"

### Key Observations

* The pipeline appears to be designed for understanding objects and their spatial relationships within a video scene.

* The use of 3D reconstruction ("Mast3r-SLAM + Mask 2D to 3D") suggests the system aims to create a geometric understanding of the environment.

* The QA examples demonstrate the system's ability to answer both egocentric (relative to the viewer) and world-centric (absolute) spatial questions.

* The "Prompt" box in the "Keyframe of Objects" section suggests the use of a language model to generate object descriptions.

### Interpretation

This diagram illustrates a sophisticated system for visual question answering, going beyond simple object recognition to incorporate spatial reasoning. The pipeline leverages video instance segmentation to identify objects, extracts keyframes for detailed analysis, and uses 3D reconstruction to understand the spatial layout of the scene. The generated object descriptions and spatial QA capabilities suggest the system could be used for applications such as robotic navigation, virtual assistants, and scene understanding. The inclusion of both egocentric and world-centric questions indicates a focus on providing contextually relevant answers. The pipeline appears to be modular, with each stage performing a specific task, allowing for potential improvements and customization. The use of "Grounding DINO" and "Segment Anything" suggests the use of state-of-the-art segmentation models. The overall design suggests a system capable of complex scene understanding and interaction.