TECHNICAL ASSET FINGERPRINT

c48ea801a84caafe39515ebe

EXPERT: gemini-2.0-flash VERSION 1

RUNTIME: nugit/gemini/gemini-2.0-flash

INTEL_VERIFIED

## RAG System Comparison Diagram

### Overview

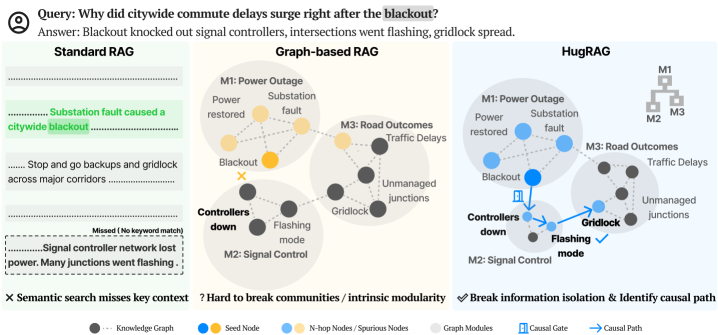

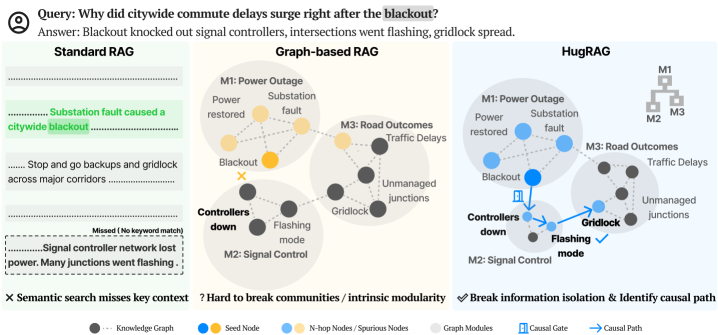

The image compares three Retrieval-Augmented Generation (RAG) systems (Standard RAG, Graph-based RAG, and HugRAG) on the query "Why did citywide commute delays surge right after the blackout?". For each system it shows what is retrieved and how the retrieved concepts connect, contrasting how well each approach recovers the needed context and the causal chain behind the answer.

### Components/Axes

* **Title:** Query: "Why did citywide commute delays surge right after the blackout?" Answer: "Blackout knocked out signal controllers, intersections went flashing, gridlock spread."

* **RAG Systems:**

    * Standard RAG

    * Graph-based RAG

    * HugRAG

* **Nodes:** Represent concepts or entities.

* **Edges:** Represent relationships between concepts.

* **Modules:** M1: Power Outage, M2: Signal Control, M3: Road Outcomes

* **Legend:** Located at the bottom of the image.

    * Knowledge Graph (gray dotted line)

    * Seed Node (blue circle)

    * N-hop Nodes / Spurious Nodes (yellow circle)

    * Graph Modules (light gray shaded area)

    * Causal Gate (blue icon of a gate)

    * Causal Path (blue arrow)

### Detailed Analysis

**1. Standard RAG (Left)**

* **Description:** This system retrieves text snippets based on keyword matching.

* **Text Snippets:**

    * "Substation fault caused a citywide blackout" (highlighted in green)

    * "Stop and go backups and gridlock across major corridors"

    * "Signal controller network lost power. Many junctions went flashing." (marked as "Missed (No keyword match)")

* **Analysis:** The system successfully retrieves information about the blackout but misses the connection between signal controller failure and traffic delays.

* **Semantic Search:** "X Semantic search misses key context"
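The "Missed (No keyword match)" label can be illustrated with a toy keyword-overlap scorer. This is a minimal sketch, not the retriever behind the figure: the stopword list, the tokenizer, and the function names are assumptions made for the example. Under this toy scorer the second snippet also scores zero, so the sketch only shows why the signal-controller snippet is missed, not how the figure's system surfaces the gridlock snippet.

```python
import re

QUERY = "Why did citywide commute delays surge right after the blackout?"

# The three text snippets quoted in the figure description.
SNIPPETS = [
    "Substation fault caused a citywide blackout",
    "Stop and go backups and gridlock across major corridors",
    "Signal controller network lost power. Many junctions went flashing.",
]

# Hypothetical stopword list, chosen only for this example.
STOPWORDS = {"a", "and", "the", "did", "why", "right", "after", "go"}


def keywords(text: str) -> set:
    """Lowercase the text, split on non-letters, and drop stopwords."""
    return {t for t in re.findall(r"[a-z]+", text.lower()) if t not in STOPWORDS}


def keyword_score(query: str, snippet: str) -> int:
    """Count the keywords shared by the query and the snippet."""
    return len(keywords(query) & keywords(snippet))


scores = {s: keyword_score(QUERY, s) for s in SNIPPETS}
retrieved = [s for s, score in scores.items() if score > 0]

for snippet, score in scores.items():
    print(score, snippet)
```

The signal-controller snippet shares no keyword with the query even though it carries the middle step of the answer, which is the gap the figure points at.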

**2. Graph-based RAG (Center)**

* **Description:** This system uses a knowledge graph to represent relationships between concepts.

* **Modules:**

* M1: Power Outage (Power restored, Substation fault, Blackout)

* M2: Signal Control (Controllers down, Flashing mode)

* M3: Road Outcomes (Traffic Delays, Unmanaged junctions, Gridlock)

* **Nodes:**

* Blackout (marked with an "X")

* Controllers down

* Flashing mode

* Gridlock

* Unmanaged junctions

* Traffic Delays

* Power restored

* Substation fault

* **Analysis:** The system represents the relationships between power outage, signal control, and road outcomes. However, it struggles to identify the causal path.

* **Community Breaking:** "? Hard to break communities / intrinsic modularity"
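One way to read the "hard to break communities" label is that retrieval stays inside the module of the seed node. The sketch below assumes exactly that behavior; `retrieve_within_module` is a hypothetical helper, not an API of any graph RAG library, and only the module membership is taken from the description above.

```python
# Module membership as listed in the description above.
MODULES = {
    "M1: Power Outage": ["Power restored", "Substation fault", "Blackout"],
    "M2: Signal Control": ["Controllers down", "Flashing mode"],
    "M3: Road Outcomes": ["Traffic Delays", "Unmanaged junctions", "Gridlock"],
}


def module_of(node: str) -> str:
    """Return the name of the module that contains the node."""
    for name, members in MODULES.items():
        if node in members:
            return name
    raise KeyError(node)


def retrieve_within_module(seed: str) -> list:
    """Hypothetical retrieval that never leaves the seed's module."""
    return list(MODULES[module_of(seed)])


context = retrieve_within_module("Blackout")

# Nodes the answer needs but that live in other modules.
missing = [
    n for n in ("Controllers down", "Flashing mode", "Gridlock")
    if n not in context
]

print(context)
print(missing)
```

Under this assumption the context contains only M1 nodes, so every intermediate step of the answer is left out.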

**3. HugRAG (Right)**

* **Description:** This system combines knowledge graph representation with causal reasoning.

* **Modules:**

* M1: Power Outage (Power restored, Substation fault, Blackout)

* M2: Signal Control (Controllers down, Flashing mode)

* M3: Road Outcomes (Traffic Delays, Unmanaged junctions, Gridlock)

* **Nodes:**

* Blackout

* Controllers down

* Flashing mode

* Gridlock

* Unmanaged junctions

* Traffic Delays

* Power restored

* Substation fault

* **Causal Path:** A blue arrow indicates the causal path from "Blackout" to "Controllers down" to "Flashing mode" to "Gridlock" to "Traffic Delays".

* **Causal Gate:** A blue gate icon is present between "Blackout" and "Controllers down".

* **Analysis:** The system successfully identifies the causal path from the blackout to traffic delays through signal controller failure.

* **Information Isolation:** "✓ Break information isolation & Identify causal path"
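The causal path described above can be reproduced with a breadth-first search over edges that pass a filter. This is a sketch under stated assumptions, not HugRAG's actual algorithm: the edge list, the `is_causal` labels, and the `causal_gate` predicate are all invented for illustration, and the figure does not say how the real gate decides.

```python
from collections import deque
from typing import Optional

# Directed edges (source, target, is_causal). The edge list and the causal
# labels are assumptions made for this sketch.
EDGES = [
    ("Substation fault", "Blackout", True),
    ("Blackout", "Power restored", False),
    ("Blackout", "Controllers down", True),
    ("Controllers down", "Flashing mode", True),
    ("Flashing mode", "Unmanaged junctions", True),
    ("Flashing mode", "Gridlock", True),
    ("Gridlock", "Traffic Delays", True),
]


def causal_gate(is_causal: bool) -> bool:
    """Hypothetical gate: let an edge through only if it is marked causal."""
    return is_causal


def causal_path(start: str, goal: str) -> Optional[list]:
    """Breadth-first search restricted to the edges that pass the gate."""
    successors = {}
    for src, dst, is_causal in EDGES:
        if causal_gate(is_causal):
            successors.setdefault(src, []).append(dst)

    parents = {start: None}  # also serves as the visited set
    queue = deque([start])
    while queue:
        node = queue.popleft()
        if node == goal:
            path = []
            while node is not None:  # walk back to the start
                path.append(node)
                node = parents[node]
            return path[::-1]
        for nxt in successors.get(node, []):
            if nxt not in parents:
                parents[nxt] = node
                queue.append(nxt)
    return None


path = causal_path("Blackout", "Traffic Delays")
print(" -> ".join(path))
```

With these assumed edges the search returns the same chain the blue arrow traces, while "Power restored" is unreachable because its edge does not pass the gate.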

### Key Observations

* Standard RAG relies on keyword matching and misses contextual information.

* Graph-based RAG represents relationships but struggles with causal reasoning.

* HugRAG combines graph representation with causal reasoning to identify the causal path.

### Interpretation

The diagram traces a progression of RAG systems from keyword-based retrieval to approaches that add knowledge graphs and then causal reasoning. In this example, HugRAG is the only system shown recovering the full causal path between the blackout and the traffic delays, which suggests that adding causal reasoning to RAG can help with multi-step "why" questions. The "Causal Gate" likely represents a point where causal inference is applied to decide which cause-and-effect link to follow. Overall, the diagram stresses the role of context and causal reasoning in retrieval and generation.


EXPERT: gemma-3-27b-it-free VERSION 1

RUNTIME: google-free/gemma-3-27b-it

INTEL_VERIFIED


## Diagram: RAG Model Comparison for Blackout Analysis

### Overview

The image presents a comparative diagram illustrating three different Retrieval-Augmented Generation (RAG) models – Standard RAG, Graph-based RAG, and HugRAG – in the context of analyzing the causes of citywide commute delays following a blackout. Each model is represented with a network diagram showing the relationships between events and factors contributing to the delays. The diagram highlights the strengths and weaknesses of each approach in identifying the key causal pathways.

### Components/Axes

The diagram is divided into three main sections, one for each RAG model. Each section includes a network diagram and accompanying text describing the model's output and limitations.

**Common Elements:**

* **Nodes:** Represent concepts or events (e.g., "Blackout", "Traffic Delays", "Power restored").

* **Edges:** Represent relationships between nodes.

* **Color Coding:**

* Green: Knowledge Graph

* Blue: Seed Node

* Gray: N-hop Nodes / Spurious Nodes

* Orange: Graph Modules

* Teal: Causal Gate

* Purple: Causal Path

* **Icons:**

* "X": Semantic search misses key context

* "?": Hard to break communities / intrinsic modularity

* Checkmark: Break information isolation & Identify causal path

**Specific Labels:**

* **Query:** "Why did citywide commute delays surge right after the blackout?"

* **Answer:** "Blackout knocked out signal controllers, intersections went flashing, gridlock spread."

* **M1:** Power Outage

* **M2:** Signal Control

* **M3:** Road Outcomes

* **Substation fault caused a citywide blackout**

* **Stop and go backups and gridlock across major corridors**

* **Missed (No keyword match)**

* **Signal controller network lost power. Many junctions went flashing.**

### Detailed Analysis or Content Details

**1. Standard RAG (Left)**

* The diagram shows a simple linear connection between "Substation fault" and "citywide blackout".

* Further connections lead to "Stop and go backups and gridlock across major corridors".

* A dashed box indicates "Missed (No keyword match)" and a text bubble states "Signal controller network lost power. Many junctions went flashing." indicating a failure to identify this key connection.

* The network is relatively sparse, with limited connections beyond the primary path.

**2. Graph-based RAG (Center)**

* The diagram displays a more complex network with multiple interconnected nodes.

* "Blackout" is central, connected to "Controllers down", "Flashing mode", and "Gridlock".

* "Power restored" is connected to "Substation fault".

* "Traffic Delays" and "Unmanaged junctions" are linked to "Gridlock".

* A question mark icon indicates "Hard to break communities / intrinsic modularity".

* The diagram shows a larger number of nodes and edges compared to Standard RAG.

**3. HugRAG (Right)**

* Similar network structure to Graph-based RAG, but with the addition of a hierarchical structure represented by a tree diagram in the top-right corner (M1, M2, M3).

* A checkmark icon indicates "Break information isolation & Identify causal path".

* A blue arrow highlights the causal path from "Blackout" to "Gridlock" via "Flashing mode".

* The diagram appears to emphasize the causal relationships between events.

### Key Observations

* Standard RAG struggles to identify the full context of the blackout, missing the connection to signal controller failures.

* Graph-based RAG provides a more comprehensive view of the relationships but faces challenges in breaking down complex communities.

* HugRAG appears to be the most effective in identifying the causal path and breaking information isolation.

* The complexity of the network diagrams increases from Standard RAG to Graph-based RAG to HugRAG, reflecting the increasing sophistication of the models.

### Interpretation

The diagram demonstrates the varying capabilities of different RAG models in analyzing complex events like a citywide blackout. Standard RAG, relying on keyword matching, fails to capture the nuanced relationships between events. Graph-based RAG improves upon this by leveraging a knowledge graph, but struggles with modularity. HugRAG, by combining graph-based reasoning with a hierarchical structure and causal path identification, provides the most complete and accurate understanding of the blackout's impact on commute delays. The diagram highlights the importance of considering causal relationships and breaking information isolation when building RAG models for complex problem-solving. The use of color-coding and icons effectively communicates the strengths and weaknesses of each approach. The diagram suggests that HugRAG is the most promising approach for analyzing complex events and providing actionable insights.


EXPERT: healer-alpha-free VERSION 1

RUNTIME: free/openrouter/healer-alpha

INTEL_VERIFIED


## Diagram: Comparative Analysis of RAG Methods for Causal Query Resolution

### Overview

The image is a technical diagram comparing three Retrieval-Augmented Generation (RAG) approaches for answering a causal query about a citywide commute delay. The query is: "Why did citywide commute delays surge right after the blackout?" The provided answer is: "Blackout knocked out signal controllers, intersections went flashing, gridlock spread." The diagram visually contrasts the knowledge representation and reasoning paths of Standard RAG, Graph-based RAG, and a proposed method called HugRAG.

### Components/Axes

The diagram is organized into three vertical columns, each representing a different RAG method. A shared legend is positioned at the bottom.

**Header (Top of Image):**

* **Query:** "Why did citywide commute delays surge right after the blackout?"

* **Answer:** "Blackout knocked out signal controllers, intersections went flashing, gridlock spread."

**Column 1: Standard RAG (Left)**

* **Visual:** A linear sequence of text snippets.

* **Text Blocks:**

1. "Substation fault caused a citywide blackout" (Highlighted in green).

2. "Stop and go backups and gridlock across major corridors"

3. "Signal controller network lost power. Many junctions went flashing." (Preceded by a note: "Missed (No keyword match)").

* **Footer Label:** "✗ Semantic search misses key context"

**Column 2: Graph-based RAG (Center)**

* **Visual:** A knowledge graph with interconnected nodes grouped into modules.

* **Module Labels:**

* **M1: Power Outage** (Top-left cluster)

* **M2: Signal Control** (Bottom-left cluster)

* **M3: Road Outcomes** (Right cluster)

* **Node Labels (within modules):**

* M1: "Power restored", "Substation fault", "Blackout" (Yellow node).

* M2: "Controllers down", "Flashing mode".

* M3: "Traffic Delays", "Gridlock", "Unmanaged junctions".

* **Footer Label:** "? Hard to break communities / intrinsic modularity"

**Column 3: HugRAG (Right)**

* **Visual:** A similar knowledge graph to Graph-based RAG, but with added elements illustrating causal reasoning.

* **Module Labels:** Same as Graph-based RAG (M1, M2, M3).

* **Node Labels:** Same as Graph-based RAG.

* **Additional Elements:**

* A **"Causal Gate"** icon (a blue gate symbol) placed on the connection between "Blackout" and "Controllers down".

* A **"Causal Path"** (a blue arrow) tracing the route: "Blackout" -> "Controllers down" -> "Flashing mode" -> "Gridlock".

* A small **hierarchical tree diagram** in the top-right corner with nodes labeled "M1", "M2", "M3".

* **Footer Label:** "✓ Break information isolation & Identify causal path"

**Legend (Bottom of Image):**

* **Symbols & Colors:**

* Dark Grey Circle: "Knowledge Graph"

* Blue Circle: "Seed Node"

* Light Blue Circle: "N-hop Nodes / Spurious Nodes"

* Light Grey Circle: "Module Graphs"

* Blue Gate Icon: "Causal Gate"

* Blue Arrow: "Causal Path"

### Detailed Analysis

The diagram systematically breaks down the problem-solving process for the given query.

**Standard RAG Analysis:**

* **Process:** Relies on semantic keyword search over text snippets.

* **Failure Mode:** It retrieves the initial cause ("Substation fault caused a citywide blackout") and the final outcome ("Stop and go backups..."), but misses the critical intermediate causal step ("Signal controller network lost power...") because it lacks the keyword "blackout." This creates a gap in the explanatory chain.

**Graph-based RAG Analysis:**

* **Process:** Represents information as a knowledge graph with nodes and edges, grouped into thematic modules (M1, M2, M3).

* **Strength:** Successfully integrates all relevant concepts (Blackout, Controllers down, Flashing mode, Gridlock) into a connected structure.

* **Limitation:** The graph's modular structure (M1, M2, M3) creates "communities" that can isolate information. While the data is present, the system may struggle to automatically identify the specific *causal pathway* through the graph that answers the "why" question, as noted by the label "Hard to break communities."
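The legend's "N-hop Nodes / Spurious Nodes" entry suggests a second reading of the limitation: expanding from a seed by hop count pulls in same-module neighbors that are irrelevant to the answer. The sketch below assumes an undirected adjacency list that is not given in the figure description; the node names are from the source, and `n_hop` is a hypothetical helper.

```python
# Undirected adjacency, assumed for this sketch.
GRAPH = {
    "Blackout": ["Substation fault", "Power restored", "Controllers down"],
    "Substation fault": ["Blackout"],
    "Power restored": ["Blackout"],
    "Controllers down": ["Blackout", "Flashing mode"],
    "Flashing mode": ["Controllers down", "Gridlock"],
    "Gridlock": ["Flashing mode", "Traffic Delays", "Unmanaged junctions"],
    "Traffic Delays": ["Gridlock"],
    "Unmanaged junctions": ["Gridlock"],
}

# The chain named in the provided answer.
ANSWER_CHAIN = ["Blackout", "Controllers down", "Flashing mode", "Gridlock"]


def n_hop(seed: str, hops: int) -> set:
    """Return every node within `hops` edges of the seed, seed included."""
    seen = {seed}
    frontier = {seed}
    for _ in range(hops):
        frontier = {nbr for node in frontier for nbr in GRAPH[node]} - seen
        seen |= frontier
    return seen


one_hop = n_hop("Blackout", 1)
spurious = one_hop - set(ANSWER_CHAIN)
still_missing = [n for n in ANSWER_CHAIN if n not in one_hop]

print(sorted(spurious))
print(still_missing)
```

With these assumed edges, one hop from "Blackout" already includes two nodes off the answer chain while two nodes on the chain are still out of reach; widening the radius fixes coverage only by admitting more unrelated nodes.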

**HugRAG Analysis:**

* **Process:** Builds upon the graph-based approach by adding mechanisms to identify causal relationships.

* **Key Innovations:**

1. **Causal Gate:** Identifies a critical juncture in the graph (the link between "Blackout" and "Controllers down") where a causal relationship is established.

2. **Causal Path:** Explicitly traces and highlights the sequential chain of events: Blackout → Controllers down → Flashing mode → Gridlock. This path directly maps to the provided answer.

3. **Module Hierarchy:** The small tree (M1→M2→M3) suggests an understanding of the flow of causality between modules, from the power outage event, through the signal control failure, to the road traffic outcomes.

* **Outcome:** It "breaks information isolation" between modules and successfully "identifies the causal path," enabling it to generate the correct, stepwise explanation.
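The reading of the module hierarchy as M1→M2→M3 can be checked against the causal path by mapping each node to its module and collapsing consecutive repeats. This is an illustrative sketch: the node-to-module mapping follows the description above, and the helper name is an assumption.

```python
from itertools import groupby

# Node-to-module mapping taken from the module listing above.
NODE_TO_MODULE = {
    "Power restored": "M1", "Substation fault": "M1", "Blackout": "M1",
    "Controllers down": "M2", "Flashing mode": "M2",
    "Traffic Delays": "M3", "Gridlock": "M3", "Unmanaged junctions": "M3",
}

CAUSAL_PATH = ["Blackout", "Controllers down", "Flashing mode", "Gridlock"]


def module_sequence(path: list) -> list:
    """Map nodes to modules and collapse consecutive duplicates."""
    modules = [NODE_TO_MODULE[node] for node in path]
    return [module for module, _ in groupby(modules)]


sequence = module_sequence(CAUSAL_PATH)

# Edges of the path whose endpoints sit in different modules.
crossings = [
    (a, b) for a, b in zip(CAUSAL_PATH, CAUSAL_PATH[1:])
    if NODE_TO_MODULE[a] != NODE_TO_MODULE[b]
]

print(sequence)
print(crossings)
```

The first module crossing is the "Blackout" to "Controllers down" edge, which is where the description places the Causal Gate icon.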

### Key Observations

1. **Color-Coded Semantics:** The legend defines a color scheme (blue for seed nodes, light blue for N-hop / spurious nodes) that is applied in the Graph-based and HugRAG diagrams. The "Blackout" node is yellow in the center diagram but blue in the right diagram, suggesting it may be treated as a "Seed Node" in the HugRAG process.

2. **Spatial Progression:** The three columns show a clear evolution from linear text retrieval (left), to interconnected but static knowledge representation (center), to dynamic causal reasoning over that knowledge (right).

3. **Visual Emphasis on Causality:** HugRAG uses distinct visual elements (gate icon, bold blue arrow) to draw attention to the causal mechanism, which is the core of the query.

4. **Modularity as a Double-Edged Sword:** The diagram posits that while modular knowledge graphs (M1, M2, M3) are useful for organization, they can inherently hinder the discovery of cross-module causal links unless specifically addressed, as HugRAG attempts to do.

### Interpretation

This diagram serves as a conceptual argument for advancing RAG systems beyond simple retrieval and towards **causal reasoning**. It demonstrates that for complex "why" questions, merely finding and connecting relevant facts (Graph-based RAG) is insufficient. The system must also understand the *direction* and *sequence* of influence between those facts.

The progression illustrates a Peircean investigative process:

1. **Standard RAG** represents a **Sign** (the text snippets) but fails to establish a coherent **Interpretant** (the full causal story) due to incomplete information.

2. **Graph-based RAG** establishes a network of **Signs** (the nodes) and their **Relations** (edges), creating a more complete representational field. However, it may lack the interpretive rule to extract the specific **Causal Legisign** (the general law of cause-effect) governing this event.

3. **HugRAG** attempts to apply that interpretive rule. By identifying the "Causal Gate" and tracing the "Causal Path," it actively constructs the **Dynamic Argument**—the chain of reasoning that leads from the initial event to the observed outcome. This moves from representing knowledge to *reasoning with* knowledge.

The notable anomaly is the missing link in the Standard RAG results, which illustrates how brittle pure semantic search can be for multi-step reasoning. The diagram as a whole argues that effective AI question-answering, especially for diagnostic or explanatory tasks, depends on architectures that can explicitly model and traverse causal pathways within structured knowledge.


EXPERT: nemotron-free VERSION 1

RUNTIME: free/nvidia/nemotron-nano-12b-v2-vl:free

INTEL_VERIFIED

## Diagram: Comparison of RAG Methods for Blackout Analysis

### Overview

The image compares three Retrieval-Augmented Generation (RAG) methods—Standard RAG, Graph-based RAG, and HugRAG—using diagrams and text to explain how each handles a query about citywide commute delays caused by a blackout. The diagrams use color-coded nodes and connections to represent knowledge graphs, causal relationships, and modularity.

---

### Components/Axes

1. **Query & Answer Section**

- **Text**:

- Query: *"Why did citywide commute delays surge right after the blackout?"*

- Answer: *"Blackout knocked out signal controllers, intersections went flashing, gridlock spread."*

- **Highlighted Text**:

- *"Substation fault caused a citywide blackout"* (Standard RAG)

- *"Signal controller network lost power. Many junctions went flashing"* (Standard RAG)

2. **Standard RAG Diagram**

- **Text**:

- *"Substation fault caused a citywide blackout"*

- *"Stop and go backups and gridlock across major corridors"*

- *"Signal controller network lost power. Many junctions went flashing"*

- **Color Legend**:

- Black dots: Knowledge Graph

- Blue: Seed Node

- Yellow: N-hop Nodes (Spurious Nodes)

- Gray: Graph Modules

- Blue arrows: Causal Gate

- Blue arrows with checkmark: Causal Path

3. **Graph-based RAG Diagram**

- **Nodes**:

- **M1**: Power Outage (yellow), Substation restored (yellow), Blackout (yellow)

- **M2**: Signal Control (black), Controllers down (black), Flashing mode (black)

- **M3**: Road Outcomes (black), Traffic Delays (black), Unmanaged junctions (black)

- **Connections**:

- M1 → M2 (Power Outage → Signal Control)

- M2 → M3 (Signal Control → Road Outcomes)

- **Text**:

- *"Hard to break communities / intrinsic modularity"*

4. **HugRAG Diagram**

- **Nodes**:

- **M1**: Power Outage (blue), Substation restored (blue), Blackout (blue)

- **M2**: Signal Control (black), Controllers down (black), Flashing mode (black)

- **M3**: Road Outcomes (black), Traffic Delays (black), Unmanaged junctions (black)

- **Connections**:

- M1 → M2 → M3 (Causal Path: Power Outage → Signal Control → Traffic Delays)

- **Text**:

- *"Break information isolation & Identify causal path"*

---

### Detailed Analysis

1. **Standard RAG**

- **Text Content**:

- Highlights "Substation fault caused a citywide blackout" and "Signal controller network lost power."

- Misses key context: *"Semantic search misses key context"* (marked with an X).

- **Diagram**:

- No explicit nodes or connections. Relies on textual summaries.

2. **Graph-based RAG**

- **Nodes**:

- **M1 (Power Outage)**: Yellow (N-hop / Spurious Node)

- **M2 (Signal Control)**: Black (Knowledge Graph)

- **M3 (Road Outcomes)**: Black (Knowledge Graph)

- **Connections**:

- M1 → M2 (Power Outage → Signal Control)

- M2 → M3 (Signal Control → Road Outcomes)

- **Text**:

- *"Hard to break communities / intrinsic modularity"* (indicates limitations in modularity).

3. **HugRAG**

- **Nodes**:

- **M1 (Power Outage)**: Blue (Seed Node)

- **M2 (Signal Control)**: Black (Knowledge Graph)

- **M3 (Road Outcomes)**: Black (Knowledge Graph)

- **Connections**:

- M1 → M2 → M3 (Causal Path: Power Outage → Signal Control → Traffic Delays)

- **Text**:

- *"Break information isolation & Identify causal path"* (emphasizes causal reasoning).

---

### Key Observations

1. **Standard RAG**

- Relies on text summaries but fails to capture causal relationships (e.g., "Semantic search misses key context").

- Highlights critical events (substation fault, signal controller failure) but lacks structural analysis.

2. **Graph-based RAG**

- Uses nodes to represent events (e.g., Power Outage, Signal Control) and connections to show relationships.

- Struggles with modularity ("Hard to break communities"), suggesting difficulty in isolating specific causal chains.

3. **HugRAG**

- Explicitly identifies a **causal path** (M1 → M2 → M3) using blue arrows with checkmarks.

- Combines modularity (graph modules) with causal reasoning (causal gates).

---

### Interpretation

1. **Standard RAG**

- Provides a basic summary but lacks depth in explaining causality. Its failure to capture key context (e.g., "signal controller network lost power") suggests limitations in retrieval precision.

2. **Graph-based RAG**

- Represents events as nodes and relationships as edges but cannot fully disentangle modular components (e.g., "intrinsic modularity" issue). This may lead to incomplete or fragmented explanations.

3. **HugRAG**

- Outperforms others by explicitly tracing the causal chain (Power Outage → Signal Control → Traffic Delays). The use of **causal gates** and **causal paths** indicates a structured approach to linking events, making it more effective for complex queries.

4. **Color Coding Significance**

- **Yellow (N-hop / Spurious Nodes)**: Mark the M1 nodes (e.g., Power Outage) in Graph-based RAG.

- **Blue (Seed Nodes)**: Mark the M1 nodes where HugRAG’s causal path starts.

- **Black (Knowledge Graph)**: Core events (e.g., Signal Control) are consistently marked across methods.

---

### Conclusion

The diagram illustrates how HugRAG’s integration of causal reasoning and modularity surpasses Standard RAG and Graph-based RAG in explaining complex, interconnected events like blackouts. By breaking information isolation and identifying causal paths, HugRAG provides a more accurate and structured analysis of the root causes and consequences of citywide commute delays.
