## Heatmap: MLP and Attention Masks Across Clusters for Llama-3-8B and Qwen-3-8B

### Overview

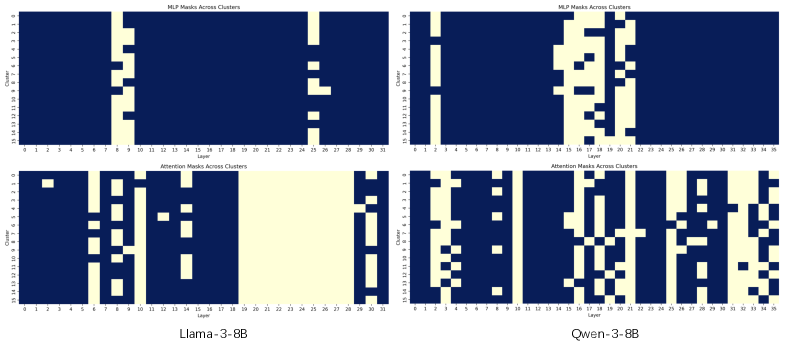

The image displays four heatmaps comparing **MLP masks** and **attention masks** across clusters for two language models: **Llama-3-8B** (bottom-left) and **Qwen-3-8B** (bottom-right). The top row shows MLP masks, while the bottom row shows attention masks. Each heatmap uses a **dark blue** color to indicate mask presence and **light yellow** for absence. Axes are labeled "Layer" (x-axis, 0–35) and "Cluster" (y-axis, 0–35).

---

### Components/Axes

- **X-axis (Layer)**: Ranges from 0 to 35, representing transformer layers.

- **Y-axis (Cluster)**: Ranges from 0 to 35, representing cluster indices.

- **Color Scheme**:

- **Dark Blue**: Mask presence (active).

- **Light Yellow**: Mask absence (inactive).

- **Titles**:

- Top-left: "MLP Masks Across Clusters" (Llama-3-8B).

- Top-right: "MLP Masks Across Clusters" (Qwen-3-8B).

- Bottom-left: "Attention Masks Across Clusters" (Llama-3-8B).

- Bottom-right: "Attention Masks Across Clusters" (Qwen-3-8B).

---

### Detailed Analysis

#### Llama-3-8B (Bottom-Left)

- **MLP Masks**:

- Vertical dark blue blocks dominate layers **7–9** and **20–22**, indicating concentrated mask activity.

- Sparse yellow regions in layers **10–15** and **23–25**.

- **Attention Masks**:

- Scattered dark blue blocks across layers **0–15**, with dense activity in clusters **5–10**.

- Minimal activity in layers **16–35**.

#### Qwen-3-8B (Bottom-Right)

- **MLP Masks**:

- Dense dark blue blocks in layers **0–5** and **25–30**, with sporadic activity in **10–15**.

- Light yellow dominates layers **6–9** and **20–24**.

- **Attention Masks**:

- Highly fragmented dark blue blocks across all layers, with dense clusters in **15–20** and **25–30**.

- Light yellow regions are sparse, especially in layers **0–5** and **20–25**.

---

### Key Observations

1. **Llama-3-8B**:

- MLP masks show **layer-specific clustering** (e.g., layers 7–9, 20–22).

- Attention masks exhibit **early-layer dominance** (layers 0–15) with sparse later-layer activity.

2. **Qwen-3-8B**:

- MLP masks display **broad layer coverage** (layers 0–5, 25–30) with gaps in mid-layers.

- Attention masks show **distributed activity** across all layers, with peaks in mid-layers (15–20, 25–30).

---

### Interpretation

- **MLP Masks**:

- Llama-3-8B’s concentrated blocks suggest **specialized processing** in specific layers, possibly for task-specific features.

- Qwen-3-8B’s broader distribution implies **generalized feature extraction** across multiple layers.

- **Attention Masks**:

- Llama-3-8B’s early-layer focus may indicate **initial token processing** dominates, while Qwen-3-8B’s distributed pattern suggests **dynamic, multi-layer attention**.

- **Model Differences**:

- Qwen-3-8B’s attention masks are more **uniformly active**, potentially enabling better context integration.

- Llama-3-8B’s MLP masks highlight **layer-specific specialization**, which might optimize efficiency for certain tasks.

---

### Notable Anomalies

- **Llama-3-8B Attention Masks**: Minimal activity in layers **16–35** could indicate **reduced relevance** of deeper layers for this model.

- **Qwen-3-8B MLP Masks**: Gaps in layers **6–9** and **20–24** might reflect **architectural trade-offs** for computational efficiency.

---

### Summary

The heatmaps reveal distinct masking strategies between Llama-3-8B and Qwen-3-8B. Llama-3-8B emphasizes **layer-specific specialization**, while Qwen-3-8B adopts a **distributed, multi-layer approach**. These differences likely influence their performance on tasks requiring contextual understanding versus specialized feature extraction.