
## Diagram: Neural Architecture Search Process

### Overview

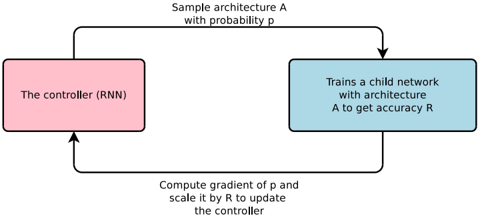

The diagram illustrates a neural architecture search process. It shows a cyclical flow between a controller (RNN) and a child network, with the child network's accuracy fed back to update the controller. Together these steps form a reinforcement learning approach to designing neural network architectures.

### Components/Axes

The diagram consists of two main rectangular blocks connected by directed arrows, representing a feedback loop. Text labels are associated with each block and the arrows, describing the actions performed in each step.

* **Block 1 (Pink):** "The controller (RNN)"

* **Block 2 (Blue):** "Trains a child network with architecture A to get accuracy R"

* **Arrow 1 (Top):** "Sample architecture A with probability p"

* **Arrow 2 (Bottom):** "Compute gradient of p and scale it by R to update the controller"

### Detailed Analysis or Content Details

The diagram illustrates a closed-loop process:

1. The controller, which is a Recurrent Neural Network (RNN), samples a neural network architecture (A) with a certain probability (p).

2. The sampled architecture (A) is used to train a child network.

3. The child network's performance is evaluated, resulting in an accuracy score (R).

4. The gradient of the probability (p) is computed and scaled by the accuracy (R).

5. This scaled gradient is then used to update the controller (RNN).

6. The process repeats, iteratively refining the controller's ability to sample effective architectures.
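The loop above can be sketched in a few lines of Python. This is a toy illustration under stated assumptions, not the method behind the diagram: the controller is reduced to independent softmax distributions rather than an RNN, the search space (`SEARCH_SPACE`) is hypothetical, and `train_child_network` is a stand-in that returns a made-up accuracy instead of training anything. The update uses the gradient of log p, the usual form of this kind of policy-gradient step.

```python
import math
import random

# Hypothetical search space: each decision picks one option.
SEARCH_SPACE = {
    "num_layers": [2, 4, 8],
    "filter_size": [3, 5, 7],
}


def softmax(logits):
    """Convert a list of logits into probabilities."""
    m = max(logits)
    exps = [math.exp(x - m) for x in logits]
    total = sum(exps)
    return [e / total for e in exps]


def sample_architecture(logits, rng):
    """Step 1: sample architecture A, one option index per decision."""
    arch = {}
    for name, decision_logits in logits.items():
        probs = softmax(decision_logits)
        arch[name] = rng.choices(range(len(probs)), weights=probs)[0]
    return arch


def train_child_network(arch):
    """Steps 2-3 stand-in: return a made-up accuracy R in [0.5, 1.0].

    A real system would build and train the child network here.
    """
    layers = SEARCH_SPACE["num_layers"][arch["num_layers"]]
    size = SEARCH_SPACE["filter_size"][arch["filter_size"]]
    return 0.5 + 0.25 * (layers == 8) + 0.25 * (size == 3)


def update_controller(logits, arch, reward, lr):
    """Steps 4-5: scale the gradient of log p(A) by R and apply it.

    For a softmax, d log p(chosen) / d logit_i = 1[i == chosen] - p_i.
    """
    for name, decision_logits in logits.items():
        probs = softmax(decision_logits)
        for i in range(len(decision_logits)):
            grad = (1.0 if i == arch[name] else 0.0) - probs[i]
            decision_logits[i] += lr * reward * grad


def search(steps=500, lr=0.05, seed=0):
    """Step 6: repeat the sample / train / update loop."""
    rng = random.Random(seed)
    logits = {name: [0.0] * len(opts) for name, opts in SEARCH_SPACE.items()}
    for _ in range(steps):
        arch = sample_architecture(logits, rng)
        reward = train_child_network(arch)
        update_controller(logits, arch, reward, lr)
    return {name: softmax(l) for name, l in logits.items()}


if __name__ == "__main__":
    for name, probs in search().items():
        print(name, [round(p, 3) for p in probs])
```

Because the reward here is always positive and no baseline is subtracted, every sampled choice is reinforced to some degree; higher-accuracy choices are simply reinforced more. Practical implementations often subtract a baseline from R to reduce the variance of the update.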

### Key Observations

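The diagram highlights a reinforcement learning paradigm in which the controller learns to generate architectures that yield high accuracy. The accuracy (R) serves as the reward signal. The gradient is taken with respect to the controller's sampling probability (p) and then scaled by R, which is characteristic of a policy-gradient update: the reward acts only as a scalar weight and does not itself need to be differentiable with respect to the controller.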
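The update on the bottom arrow is commonly written as the REINFORCE estimator. The symbols $\theta$ (controller parameters), $J$ (expected reward), and $m$ (number of sampled architectures per update) are assumptions introduced here and do not appear in the diagram. The standard form differentiates $\log p$; the diagram's "gradient of p" is assumed to be shorthand for this.

$$
J(\theta) = \mathbb{E}_{A \sim p(\cdot\,;\theta)}\big[R(A)\big],
\qquad
\nabla_\theta J(\theta)
= \mathbb{E}_{A \sim p(\cdot\,;\theta)}\big[R(A)\,\nabla_\theta \log p(A;\theta)\big]
\approx \frac{1}{m}\sum_{k=1}^{m} R_k\,\nabla_\theta \log p(A_k;\theta)
$$

Here $A_k$ is the $k$-th sampled architecture and $R_k$ is the accuracy of its trained child network. Only $\log p$ is differentiated, so the accuracy enters purely as a weight on each sample's gradient.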

### Interpretation

This diagram represents a method for automating the design of neural network architectures. Instead of relying on manual design, the controller learns to explore the architecture space and identify promising configurations. The feedback loop, driven by the accuracy of the child networks, lets the controller adapt and improve its sampling strategy over time. This approach can potentially discover architectures that outperform manually designed ones, especially for complex tasks.

The process is computationally intensive, because every sampled architecture requires training a child network to obtain R. Using an RNN as the controller implies that an architecture is generated as a sequence of decisions, so each choice can depend on the choices made before it. Scaling the gradient by the accuracy (R) means that architectures with higher accuracy have a greater influence on the controller update, which shifts sampling probability toward promising regions of the architecture space.