EXPERT: healer-alpha-free VERSION 1

RUNTIME: free/openrouter/healer-alpha

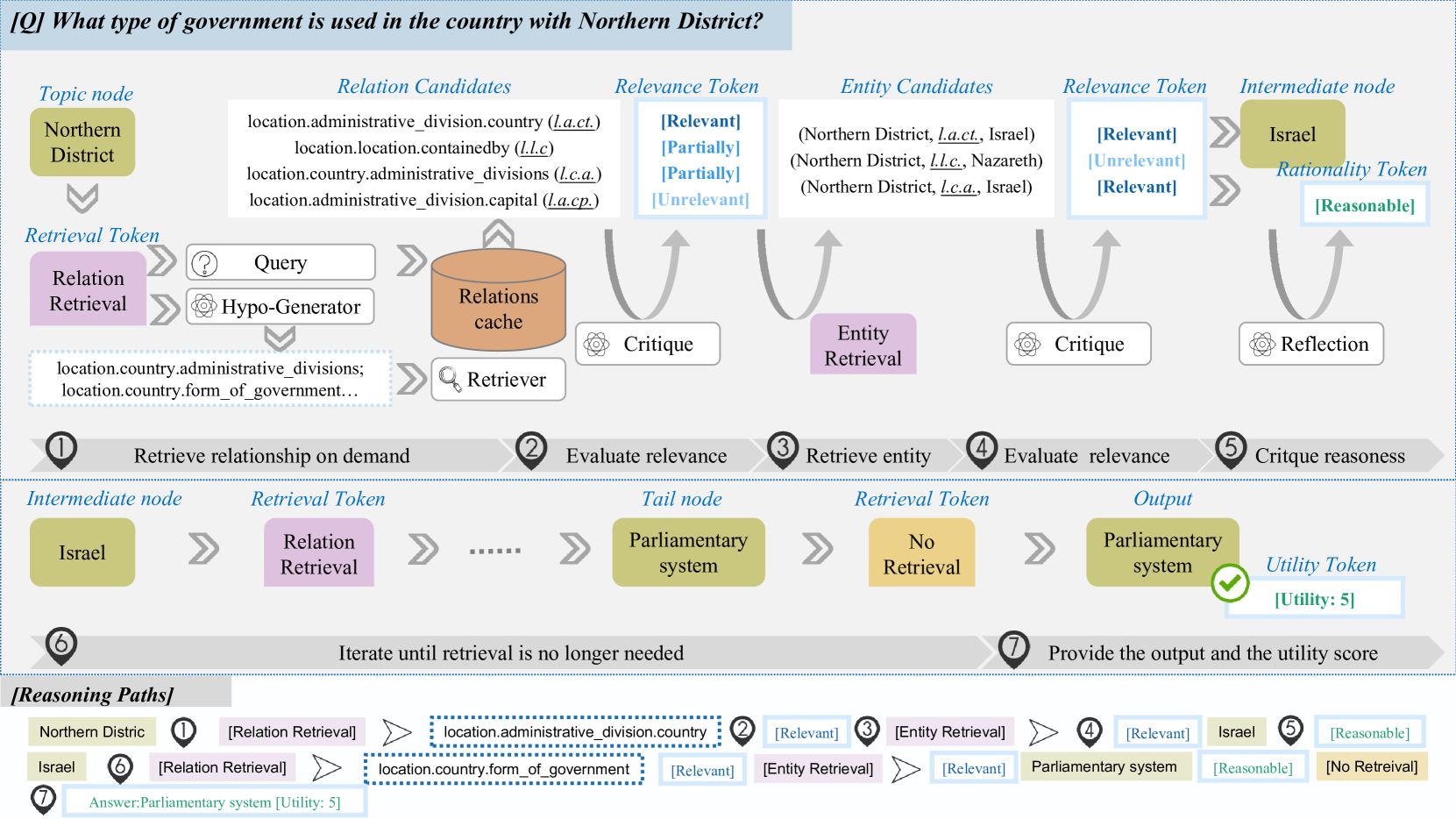

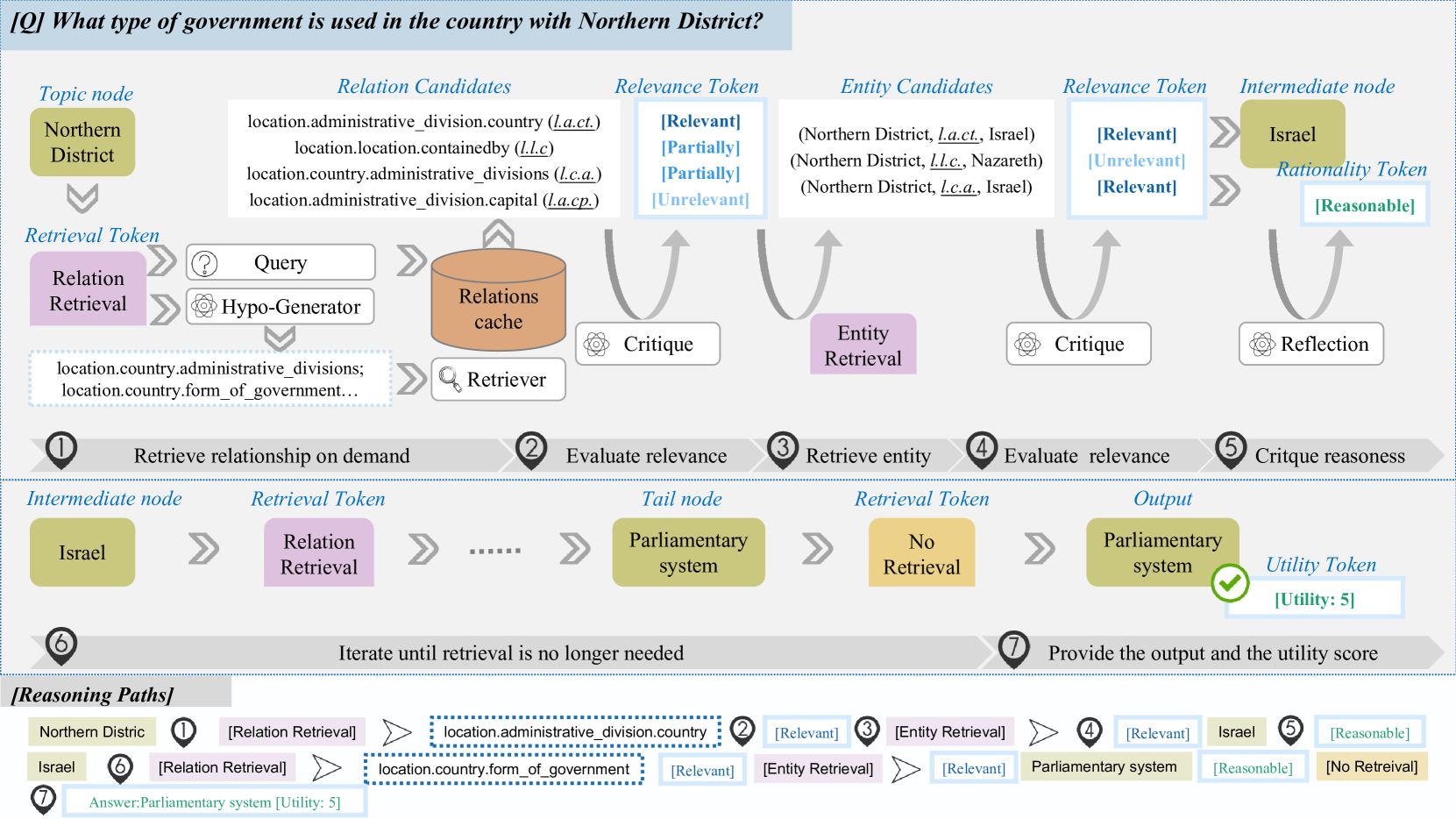

## [Diagram]: Multi-Step Reasoning Process for Question Answering

### Overview

The image is a detailed flowchart illustrating a multi-step reasoning and retrieval process used by an AI system to answer a factual question. The process begins with a question and a topic node, proceeds through iterative cycles of relation and entity retrieval, relevance evaluation, and critique, and concludes with a final answer and a utility score. The diagram is structured horizontally, with a primary flow from left to right across the top half, and a secondary, iterative loop in the bottom half. A "[Reasoning Paths]" section at the very bottom summarizes the successful path taken.

### Components/Axes

The diagram is composed of several distinct visual components and text labels:

**1. Header/Question:**

* **Text:** `[Q] What type of government is used in the country with Northern District?`

* **Position:** Top-left, in a light blue banner.

**2. Primary Flow (Top Half):**

This section depicts the initial reasoning steps to identify a relevant country.

* **Topic node:** A green box labeled `Northern District`.

* **Relation Candidates:** A list of potential database relations:

    * `location.administrative_division.country (l.a.ct.)`

    * `location.location.containedby (l.l.c)`

    * `location.country.administrative_divisions (l.c.a.)`

    * `location.administrative_division.capital (l.a.cp.)`

* **Relevance Token (for Relations):** A vertical list evaluating the above relations: `[Relevant]`, `[Partially]`, `[Partially]`, `[Unrelevant]`.

* **Entity Candidates:** A list of candidate triples retrieved through the relations that were not tagged `[Unrelevant]`:

    * `(Northern District, l.a.ct., Israel)`

    * `(Northern District, l.l.c., Nazareth)`

    * `(Northern District, l.c.a., Israel)`

* **Relevance Token (for Entities):** A vertical list evaluating the above entities: `[Relevant]`, `[Unrelevant]`, `[Relevant]`.

* **Intermediate node:** A green box labeled `Israel`.

* **Rationality Token:** A blue box labeled `[Reasonable]`.

* **Process Blocks (Purple):** `Relation Retrieval` and `Entity Retrieval`.

* **Process Blocks (White):** `Query`, `Hypo-Generator`, `Retriever`, `Critique` (x2), `Reflection`.

* **Data Store:** An orange cylinder labeled `Relations cache`.

* **Numbered Steps (1-5):** Grey circles with numbers, each with a descriptive label:

    1. `Retrieve relationship on demand`

    2. `Evaluate relevance`

    3. `Retrieve entity`

    4. `Evaluate relevance`

    5. `Critique reasoness` [sic; likely "reasonableness" or "reasoning"]
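
The gating role of the relevance tokens in steps 3 and 4 can be sketched in a few lines of Python. This is only an illustration: the triples and tokens are transcribed from the diagram (with the abbreviated relations expanded), while the `ScoredTriple` type, the `keep_relevant` helper, and the rule "keep only `[Relevant]` candidates and deduplicate their tails" are assumptions, since the diagram does not say how the tokens are consumed.

```python
from typing import NamedTuple


class ScoredTriple(NamedTuple):
    """A candidate triple plus the relevance token printed next to it."""
    head: str
    relation: str
    tail: str
    token: str


# Entity candidates and tokens as listed in the diagram.
CANDIDATES = [
    ScoredTriple("Northern District", "location.administrative_division.country",
                 "Israel", "[Relevant]"),
    ScoredTriple("Northern District", "location.location.containedby",
                 "Nazareth", "[Unrelevant]"),
    ScoredTriple("Northern District", "location.country.administrative_divisions",
                 "Israel", "[Relevant]"),
]


def keep_relevant(candidates, accepted=("[Relevant]",)):
    """Return the tail entities of accepted candidates, deduplicated in order.

    Hypothetical filtering rule: the diagram shows the tokens but not the
    exact policy that turns them into the intermediate node.
    """
    tails = []
    for cand in candidates:
        if cand.token in accepted and cand.tail not in tails:
            tails.append(cand.tail)
    return tails


print(keep_relevant(CANDIDATES))  # ['Israel']
```

Under this assumed rule, the two `[Relevant]` triples collapse into the single intermediate node `Israel`, which matches the green box shown in the diagram.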

**3. Iterative Loop (Bottom Half):**

This section shows the process continuing from the intermediate node to find the final answer.

* **Intermediate node:** A green box labeled `Israel`.

* **Tail node:** A green box labeled `Parliamentary system`.

* **Output:** A green box labeled `Parliamentary system` with a green checkmark.

* **Retrieval Token:** A purple box labeled `Relation Retrieval` and a yellow box labeled `No Retrieval`.

* **Utility Token:** A blue box labeled `[Utility: 5]`.

* **Numbered Steps (6-7):**

    6. `Iterate until retrieval is no longer needed`

    7. `Provide the output and the utility score`

**4. Reasoning Paths (Bottom Section):**

A summary box titled `[Reasoning Paths]` visually maps the successful sequence:

* **Path 1:** `Northern Distric` [sic] -> `[Relation Retrieval]` -> `location.administrative_division.country` -> `[Relevant]` -> `[Entity Retrieval]` -> `[Relevant]` -> `Israel` -> `[Reasonable]`

* **Path 2:** `Israel` -> `[Relation Retrieval]` -> `location.country.form_of_government` -> `[Relevant]` -> `[Entity Retrieval]` -> `[Relevant]` -> `Parliamentary system` -> `[Reasonable]` -> `[No Retrieval]`

* **Final Output:** `Answer:Parliamentary system [Utility: 5]`
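
The token sequences in the summary box follow a fixed pattern per hop, which the short Python sketch below reproduces. The function names `format_hop` and `format_output` are hypothetical, and the tokens are hard-coded to the successful case shown in the diagram; a real system would presumably emit them from the model rather than from a template.

```python
ARROW = " -> "


def format_hop(head, relation, tail, final=False):
    """Serialize one successful hop in the token order shown in the summary box."""
    parts = [
        head, "[Relation Retrieval]", relation, "[Relevant]",
        "[Entity Retrieval]", "[Relevant]", tail, "[Reasonable]",
    ]
    if final:
        # The last hop ends with the token that stops further retrieval.
        parts.append("[No Retrieval]")
    return ARROW.join(parts)


def format_output(answer, utility):
    """Mirror the final-output string printed in the diagram."""
    return f"Answer:{answer} [Utility: {utility}]"


path_1 = format_hop("Northern District",
                    "location.administrative_division.country", "Israel")
path_2 = format_hop("Israel", "location.country.form_of_government",
                    "Parliamentary system", final=True)

print(path_1)
print(path_2)
print(format_output("Parliamentary system", 5))
```

The only structural difference between the two paths is the trailing `[No Retrieval]` token on the final hop.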

### Detailed Analysis

The diagram meticulously breaks down the question-answering process into discrete, numbered stages:

1. **Stage 1 (Retrieve relationship):** Starting with the topic "Northern District," the system generates a query and uses a "Hypo-Generator" to propose candidate relations from a "Relations cache."

2. **Stage 2 (Evaluate relevance):** Each candidate relation is critiqued and tagged with a relevance token. `location.administrative_division.country` is tagged `[Relevant]`, two candidates are tagged `[Partially]`, and one is tagged `[Unrelevant]`.

3. **Stage 3 (Retrieve entity):** Using the relations that were not rejected, the system retrieves entity candidates as triples, for example `(Northern District, l.a.ct., Israel)`.

4. **Stage 4 (Evaluate relevance):** Each candidate triple is critiqued. The two triples ending in Israel are tagged `[Relevant]`, and `(Northern District, l.l.c., Nazareth)` is tagged `[Unrelevant]`.

5. **Stage 5 (Critique reasoness):** The intermediate node "Israel" is generated and its rationality is assessed as `[Reasonable]`.

6. **Stage 6 (Iterate):** The process repeats from the new intermediate node "Israel." A new relation, `location.country.form_of_government`, is retrieved and deemed `[Relevant]`. This leads to the tail node "Parliamentary system." The system then determines `[No Retrieval]` is needed further.

7. **Stage 7 (Provide output):** The final answer "Parliamentary system" is output with a `[Utility: 5]` score, indicating high confidence or usefulness.
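
The seven stages can be condensed into a small Python loop over a toy knowledge graph. This is a minimal sketch under stated assumptions, not the system's implementation: the `KG` dictionary holds only facts visible in the diagram, `RELEVANT_RELATIONS` is a hypothetical stand-in for the model's relevance critique, the first surviving candidate is accepted as `[Reasonable]` without a real check, and `[No Retrieval]` is approximated by "no relevant relation leaves the current node." The utility score is not modeled.

```python
# Toy knowledge graph: (head, relation) -> tail entities.
# Only facts visible in the diagram are included.
KG = {
    ("Northern District", "location.administrative_division.country"): ["Israel"],
    ("Northern District", "location.location.containedby"): ["Nazareth"],
    ("Israel", "location.country.form_of_government"): ["Parliamentary system"],
}

# Hypothetical stand-in for the relevance critique (stages 2 and 4).
RELEVANT_RELATIONS = {
    "location.administrative_division.country",
    "location.country.form_of_government",
}


def retrieve_relations(node):
    """Stage 1: list the relations that leave `node` in the toy graph."""
    return [rel for (head, rel) in KG if head == node]


def answer(topic, max_hops=4):
    """Follow relevant relations hop by hop until no retrieval is needed."""
    node, path = topic, []
    for _ in range(max_hops):
        # Stages 1-2: retrieve relations, keep those judged relevant.
        relations = [r for r in retrieve_relations(node) if r in RELEVANT_RELATIONS]
        if not relations:
            break  # stage 6 exit: plays the role of [No Retrieval]
        relation = relations[0]
        # Stages 3-5: retrieve entities and accept the first candidate.
        next_node = KG[(node, relation)][0]
        path.append((node, relation, next_node))
        node = next_node
    # Stage 7: the last node reached is returned as the answer.
    return node, path


final_answer, hops = answer("Northern District")
print(final_answer)
for hop in hops:
    print(hop)
```

With the facts above, the loop takes two hops (Northern District to Israel, then Israel to Parliamentary system) and stops on the third iteration because no relation leaves the tail node.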

### Key Observations

* **Color Coding:** Green boxes represent knowledge nodes (Topic, Intermediate, Tail, Output). Purple boxes denote active retrieval processes. Blue boxes are evaluation tokens (Relevance, Rationality, Utility). Orange represents a data store.

* **Flow Direction:** The primary flow is linear (Steps 1-5). Step 6 then repeats the same cycle from the intermediate node, and Step 7 emits the output. Arrows indicate the direction of data and control flow.

* **Textual Detail:** All candidate relations and entities are explicitly listed with their abbreviations (e.g., `l.a.ct.`). The relevance evaluations are shown for each candidate.

* **Error/Typo:** The word "reasoness" in Step 5 is likely a typo for "reasonableness" (the step produces the `[Reasonable]` token) or "reasoning." The name "Northern Distric" in the Reasoning Paths section is missing its final "t."

* **Utility Scoring:** The process concludes with a quantified utility score (`5`), suggesting a metric for answer quality.

### Interpretation

This diagram is a technical schematic of a **neuro-symbolic or retrieval-augmented generation (RAG) reasoning system**. It demonstrates how such a system can decompose a multi-hop factual question ("What type of government...") into a series of simpler, verifiable single-hop lookups.

* **What it demonstrates:** The system doesn't just know the answer; it *derives* it through a transparent, auditable process. It first grounds the ambiguous term "Northern District" by finding its containing country (Israel) using a knowledge base relation. It then queries the knowledge base again for that country's form of government.

* **Relationships between elements:** The "Relations cache" appears to supply the candidate relations for each hop. The "Critique" and "Reflection" modules act as quality control gates, checking each step before the process continues. The "Utility Token" attaches a final score to the answer, which the diagram does not define further.

* **Notable patterns:** The process is **iterative and conditional**. Step 6 explicitly states "Iterate until retrieval is no longer needed," showing the system can chain multiple reasoning steps. The separation of "Relation Retrieval" and "Entity Retrieval" highlights a structured approach to querying knowledge graphs.

* **Underlying purpose:** The diagram argues for the robustness and interpretability of this AI architecture. By making each inference step explicit, from candidate generation to relevance scoring, it contrasts with "black box" models and provides a pathway for debugging and trust. The utility score of 5 attached to the correct answer ("Parliamentary system") illustrates the intended behavior of the pipeline on this one example; it is not by itself evidence of general effectiveness.
