
## Diagram: Neural Network Representation

### Overview

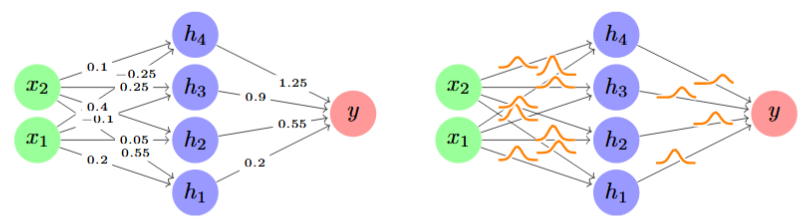

The image shows two diagrams of the same neural network. The left diagram labels each connection between nodes with its numerical weight. The right diagram draws the same connections as curved lines without weight labels, so it conveys the topology only.

### Components

The diagrams consist of the following components:

* **Input Nodes:** `x1`, `x2` (light green)

* **Hidden Nodes:** `h1`, `h2`, `h3`, `h4` (blue)

* **Output Node:** `y` (red)

* **Weights:** Numerical values associated with the connections between nodes.

### Detailed Analysis

**Left Diagram (Explicit Weights):**

* **x1 to h1:** Weight = 0.2

* **x1 to h2:** Weight = 0.05

* **x1 to h3:** Weight = 0.4

* **x2 to h1:** Weight = -0.1

* **x2 to h2:** Weight = 0.25

* **x2 to h3:** Weight = 0.25

* **x2 to h4:** Weight = 0.1

* **h1 to y:** Weight = 0.55

* **h2 to y:** Weight = 0.2

* **h3 to y:** Weight = 1.25

* **h4 to y:** Weight = 0.9

**Right Diagram (Curved Connections):**

This diagram shows the same connections as the left diagram, drawn as curved orange lines. The weights are not labeled.

### Key Observations

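* The network has two input nodes, four hidden nodes, and one output node. Eleven weights are listed; none is given for `x1` to `h4`.

* Only one weight is negative (`x2` to `h1`, -0.1); the other ten are positive. In the usual reading, a negative weight is an inhibitory connection and a positive weight an excitatory one.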

* The weight values range from -0.1 to 1.25.

* The right diagram provides a more abstract representation of the network, focusing on connectivity rather than specific weight values.
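The observations above can be checked directly against the listed weights. The following is a minimal sketch, assuming the weights were transcribed correctly from the left diagram; the names `EDGES` and `summarize` are illustrative and do not come from the figure.

```python
# Edge weights transcribed from the left diagram: (source, target) -> weight.
# No x1 -> h4 weight appears in the list, so that edge is omitted here.
EDGES = {
    ("x1", "h1"): 0.2,
    ("x1", "h2"): 0.05,
    ("x1", "h3"): 0.4,
    ("x2", "h1"): -0.1,
    ("x2", "h2"): 0.25,
    ("x2", "h3"): 0.25,
    ("x2", "h4"): 0.1,
    ("h1", "y"): 0.55,
    ("h2", "y"): 0.2,
    ("h3", "y"): 1.25,
    ("h4", "y"): 0.9,
}


def summarize(edges):
    """Return the nodes per layer, the weight range, and the negative edges."""
    nodes = {name for pair in edges for name in pair}
    return {
        "inputs": sorted(n for n in nodes if n.startswith("x")),
        "hidden": sorted(n for n in nodes if n.startswith("h")),
        "outputs": sorted(n for n in nodes if n.startswith("y")),
        "min_weight": min(edges.values()),
        "max_weight": max(edges.values()),
        "negative_edges": [pair for pair, w in edges.items() if w < 0],
    }


summary = summarize(EDGES)
print(summary)
```

Storing the network as an edge dictionary rather than dense matrices keeps the missing `x1` to `h4` connection explicit instead of silently filling it in.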

### Interpretation

The diagrams illustrate a simple feedforward neural network with one hidden layer. The left diagram gives the weight on each connection; the right diagram shows only the architecture. Weights are the network's parameters, typically learned during training, and each one scales the signal passed along its connection.

The single negative weight (`x2` to `h1`) means that a positive `x2` lowers the input to `h1`, while every other listed connection raises the input to its target node. All four hidden-to-output weights are positive, and the largest, `h3` to `y` at 1.25, gives `h3` the strongest direct influence on the output. The diagrams do not show activation functions or biases, so the exact output for a given input cannot be determined from the figure alone. Depending on those choices and on the training data, a network of this shape could be used for classification or regression.
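To make the role of the weights concrete, here is a hedged sketch of a forward pass through the network. Several things are assumptions rather than facts from the figure: the missing `x1` to `h4` weight is taken as 0.0, biases are taken as zero, and the activation function (identity or ReLU) is chosen purely for illustration. The names `W_HIDDEN`, `W_OUT`, and `forward` are hypothetical.

```python
# Weights transcribed from the left diagram. Rows are hidden nodes h1..h4,
# columns are the inputs (x1, x2). The x1 -> h4 entry is ASSUMED to be 0.0
# because no such weight is listed.
W_HIDDEN = [
    [0.2, -0.1],   # h1
    [0.05, 0.25],  # h2
    [0.4, 0.25],   # h3
    [0.0, 0.1],    # h4 (x1 weight assumed)
]
W_OUT = [0.55, 0.2, 1.25, 0.9]  # h1..h4 -> y


def identity(z):
    """Linear activation: pass the weighted sum through unchanged."""
    return z


def relu(z):
    """Rectified linear activation: clip negative weighted sums to zero."""
    return max(0.0, z)


def forward(x, activation=identity):
    """Compute y for input x = (x1, x2); biases are ASSUMED to be zero."""
    hidden = [
        activation(sum(w * xi for w, xi in zip(row, x)))
        for row in W_HIDDEN
    ]
    return sum(w * h for w, h in zip(W_OUT, hidden))


y_linear = forward((0.0, 1.0))
y_relu = forward((0.0, 1.0), activation=relu)
print(y_linear, y_relu)
```

With input `(0, 1)`, the negative `x2` to `h1` weight makes `h1` equal to -0.1. Under the identity activation that pulls the output down to roughly 0.3975; under ReLU, `h1` is clipped to zero and the output is roughly 0.4525. The difference shows how the choice of activation changes the effect of the inhibitory connection.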