## Diagram: Memory-Augmented Neural Network Architecture

### Overview

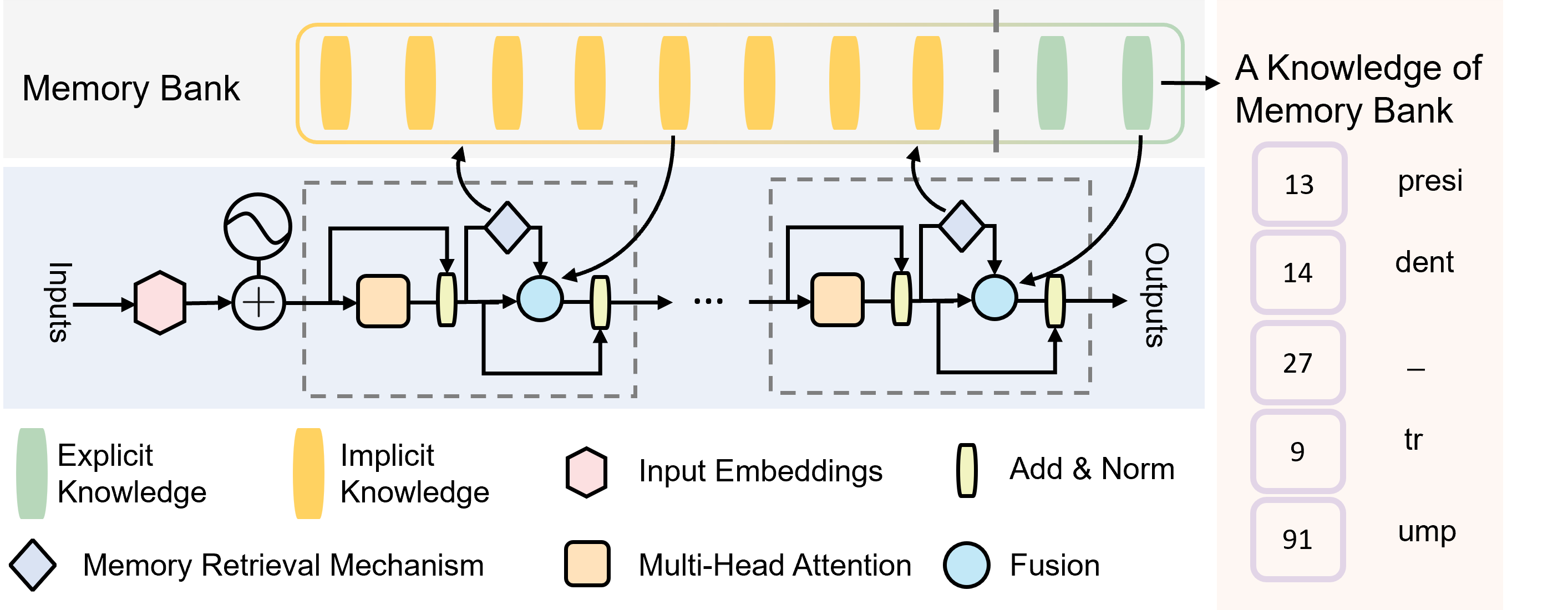

The image is a technical diagram of a neural network architecture that uses a "Memory Bank" to store and retrieve knowledge. Inputs pass through a series of layers that retrieve relevant information from memory before outputs are produced. The diagram has four regions: a "Memory Bank" at the top, a processing pipeline in the center, a legend at the bottom, and a panel of textual annotations on the right.

### Components/Axes

**Top Region: Memory Bank**

* **Label:** "Memory Bank" (top-left).

* **Structure:** A horizontal array of vertical bars, divided by a vertical dashed line.

    * **Left Section (Yellow Bars):** 8 yellow bars. According to the legend, these represent "Implicit Knowledge".

    * **Right Section (Green Bars):** 2 green bars. According to the legend, these represent "Explicit Knowledge".

* **Output Arrow:** An arrow points from the rightmost green bar to the text "A Knowledge of Memory Bank" on the far right.
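The bar layout described above can be mirrored in a small data structure. This is a minimal sketch only: the class names, the `vector` field, and the slot dimension are hypothetical, since the diagram shows bars and colors but not what each bar contains.

```python
from dataclasses import dataclass
from enum import Enum


class KnowledgeKind(Enum):
    IMPLICIT = "Implicit Knowledge"  # yellow bars in the diagram
    EXPLICIT = "Explicit Knowledge"  # green bars in the diagram


@dataclass
class MemorySlot:
    kind: KnowledgeKind
    vector: list[float]  # assumed contents; the diagram does not specify them


def build_memory_bank(n_implicit=8, n_explicit=2, dim=4):
    """Lay out slots left to right: implicit slots first, then explicit ones."""
    implicit = [MemorySlot(KnowledgeKind.IMPLICIT, [0.0] * dim) for _ in range(n_implicit)]
    explicit = [MemorySlot(KnowledgeKind.EXPLICIT, [0.0] * dim) for _ in range(n_explicit)]
    return implicit + explicit


bank = build_memory_bank()
print(len(bank), bank[0].kind.value, bank[-1].kind.value)
```

The defaults of 8 and 2 slots simply copy the bar counts in the figure; a real bank would presumably be much larger.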

**Central Region: Processing Pipeline**

* **Flow Direction:** Left to right.

* **Input:** Labeled "Inputs" on the far left.

* **Output:** Labeled "Outputs" on the far right.

* **Components (in order from left to right):**

    1. A pink hexagon: "Input Embeddings" (per legend).

    2. A circle with a plus sign: An addition operation.

    3. A dashed box containing a repeating block. The block includes:

        * An orange rounded rectangle: "Multi-Head Attention" (per legend).

        * A vertical yellow rectangle: "Add & Norm" (per legend).

        * A light blue circle: "Fusion" (per legend).

        * A diamond shape: "Memory Retrieval Mechanism" (per legend). This diamond has a bidirectional arrow connecting it to the Memory Bank above.

    4. An ellipsis ("...") indicating the repeating block occurs multiple times.

    5. A final instance of the repeating block before the "Outputs".

* **Connections:** Arrows show data flow between components. The "Memory Retrieval Mechanism" (diamond) has arrows pointing both to and from the Memory Bank, indicating a two-way interaction.
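One possible reading of the two-way arrows is sketched below. The diagram does not say what travels in each direction, so this example assumes that a query goes to the bank and a matching slot comes back, and additionally that slots can be overwritten. The `MemoryBank` class and its `read` and `write` methods are hypothetical names, and dot-product matching is an assumption.

```python
class MemoryBank:
    """Toy slot store; the layout and method names are illustrative only."""

    def __init__(self, slots):
        self.slots = [list(s) for s in slots]

    def read(self, query):
        """Return the index and contents of the slot with the highest dot product."""
        scores = [sum(q * s for q, s in zip(query, slot)) for slot in self.slots]
        best = max(range(len(scores)), key=scores.__getitem__)
        return best, self.slots[best]

    def write(self, index, vector):
        """Overwrite one slot (assumes the bank is updatable, which the diagram does not confirm)."""
        self.slots[index] = list(vector)


bank = MemoryBank([[1.0, 0.0], [0.0, 1.0]])
index, hit = bank.read([0.2, 0.9])   # the query is closest to the second slot
bank.write(index, [0.0, 2.0])        # replace that slot with new contents
```

If the bank is read-only in the actual architecture, only `read` applies and the upward arrow would carry the query alone.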

**Bottom Region: Legend**

* A key explaining the symbols used in the diagram.

* **Symbols and Labels:**

    * Green vertical bar: "Explicit Knowledge"

    * Yellow vertical bar: "Implicit Knowledge"

    * Pink hexagon: "Input Embeddings"

    * Vertical yellow rectangle: "Add & Norm"

    * Diamond: "Memory Retrieval Mechanism"

    * Orange rounded rectangle: "Multi-Head Attention"

    * Light blue circle: "Fusion"

**Right Panel: Text Annotations**

* **Header:** "A Knowledge of Memory Bank"

* **List:** A vertical list of five items, each with a number in a purple box and associated text.

    1. `13` - "presi"

    2. `14` - "dent"

    3. `27` - "—"

    4. `9` - "tr"

    5. `91` - "ump"

### Detailed Analysis

The diagram depicts a structured process:

1. **Input Processing:** Raw "Inputs" are first converted into "Input Embeddings", which then pass through an addition operation (the diagram does not label what is added).

2. **Core Processing Loop:** The embeddings enter a sequence of identical processing blocks. Each block performs:

    * Multi-Head Attention on the input.

    * An "Add & Norm" step (likely a residual connection followed by layer normalization).

    * A "Fusion" operation.

    * A "Memory Retrieval Mechanism" that queries the external "Memory Bank" and integrates retrieved information back into the processing stream.

3. **Memory Bank Interaction:** The Memory Bank is a repository split into "Implicit Knowledge" (majority, yellow; 8 bars) and "Explicit Knowledge" (minority, green; 2 bars). The retrieval mechanism accesses the bank from inside each block. The bidirectional arrow leaves open whether the bank is only read or also updated during processing.

4. **Output Generation:** After passing through multiple blocks, the processed data is emitted as "Outputs".
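The per-block steps listed above can be sketched as a toy forward pass. This is a minimal pure-Python illustration, not the architecture's actual implementation. It assumes a single attention head with no learned projections, assumes retrieval is itself an attention read over the bank, and assumes Fusion is a fixed 50/50 blend. The diagram specifies none of these details, nor the exact wiring between Fusion and Memory Retrieval, and all function names are hypothetical.

```python
import math


def dot(a, b):
    return sum(x * y for x, y in zip(a, b))


def softmax(scores):
    peak = max(scores)  # subtract the max for numerical stability
    exps = [math.exp(s - peak) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]


def attend(query, keys_values):
    """Scaled dot-product attention where keys and values are the same vectors."""
    scale = math.sqrt(len(query))
    weights = softmax([dot(query, kv) / scale for kv in keys_values])
    dim = len(keys_values[0])
    return [sum(w * kv[i] for w, kv in zip(weights, keys_values)) for i in range(dim)]


def add_and_norm(x, sublayer_out, eps=1e-5):
    """Residual connection followed by layer normalization (no learned gain or bias)."""
    summed = [a + b for a, b in zip(x, sublayer_out)]
    mean = sum(summed) / len(summed)
    var = sum((s - mean) ** 2 for s in summed) / len(summed)
    return [(s - mean) / math.sqrt(var + eps) for s in summed]


def fuse(hidden, retrieved, gate=0.5):
    """Assumed fusion rule: fixed convex combination of hidden state and retrieved memory."""
    return [(1 - gate) * h + gate * r for h, r in zip(hidden, retrieved)]


def block(sequence, memory_bank):
    out = []
    for token in sequence:
        h = add_and_norm(token, attend(token, sequence))  # attention (one head here) + Add & Norm
        retrieved = attend(h, memory_bank)                # Memory Retrieval Mechanism: read from the bank
        out.append(fuse(h, retrieved))                    # Fusion
    return out


def forward(sequence, memory_bank, n_blocks=3):
    """Apply the same block repeatedly, as the ellipsis in the diagram suggests."""
    for _ in range(n_blocks):
        sequence = block(sequence, memory_bank)
    return sequence


inputs = [[1.0, 0.0, 0.0, 0.0], [0.0, 1.0, 0.0, 0.0]]
memory = [[0.5, 0.5, 0.0, 0.0], [0.0, 0.0, 1.0, 1.0]]
outputs = forward(inputs, memory)
print(len(outputs), len(outputs[0]))  # 2 4
```

The sketch reuses one `block` function for every layer for brevity. Whether the real blocks share parameters is not shown in the diagram.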

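The text panel on the right is linked to the Memory Bank by the arrow from the rightmost green bar, so it most plausibly shows the contents of one "Explicit Knowledge" entry. Read top to bottom, the fragments concatenate to "president" ("presi" + "dent") and "trump" ("tr" + "ump"), separated by a dash, which suggests subword pieces of a short phrase. The numbers may be identifiers for those pieces, but the diagram does not say. The numbers (13, 14, 27, 9, 91) and the dash ("—") are transcribed exactly as shown.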
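To make the subword reading concrete, the sketch below joins the transcribed fragments in order. Treating each number as a token ID in a vocabulary is an assumption made for illustration; the `vocab` mapping and the `decode` helper are hypothetical and are not part of the diagram.

```python
# (number, fragment) pairs transcribed from the right-hand panel, top to bottom.
entries = [(13, "presi"), (14, "dent"), (27, "—"), (9, "tr"), (91, "ump")]

# Assumed vocabulary: each number is treated as a token ID for its fragment.
vocab = {token_id: piece for token_id, piece in entries}


def decode(token_ids, vocab):
    """Concatenate fragments in order, as a subword detokenizer would."""
    return "".join(vocab[t] for t in token_ids)


text = decode([13, 14, 27, 9, 91], vocab)
print(text)  # president—trump
```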

### Key Observations

* **Knowledge Dichotomy:** The architecture explicitly separates knowledge into "Implicit" and "Explicit" types within its memory, suggesting a design choice for different retrieval or usage paradigms.

* **Modular and Repetitive Design:** The core processing is built from repeated, identical blocks, a common pattern in deep learning architectures like Transformers.

* **Dynamic Memory Access:** The Memory Bank is not passive storage that is consulted once. The "Memory Retrieval Mechanism" is an active component that interacts with it bidirectionally during processing.

* **Ambiguous Annotations:** The text on the right panel is fragmentary. An arrow ties the panel to the rightmost green bar, but the diagram does not state what the numbers denote or whether the list is a key, an example, or an encoding.

### Interpretation

This diagram illustrates a **memory-augmented neural network** designed to leverage both implicit (likely learned, distributed representations) and explicit (possibly structured, factual) knowledge. The core innovation highlighted is the integrated **Memory Retrieval Mechanism**, which allows the model to dynamically consult an external knowledge store during its forward pass, rather than relying solely on knowledge encoded in its static weights.

The separation of knowledge types in the Memory Bank suggests the system may handle different kinds of information differently, perhaps using implicit knowledge for pattern completion and explicit knowledge for factual recall. The repeating block structure suggests that retrieval and fusion happen at multiple stages of processing, so retrieved knowledge can influence each repeated block rather than only the input or the output.
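One way the two knowledge types might be read differently is sketched below. Both retrieval rules are guesses made for illustration: a soft, softmax-weighted blend stands in for "pattern completion" over implicit slots, and a hard nearest-match lookup stands in for "factual recall" over explicit slots. The diagram does not confirm either rule, and the function names are hypothetical.

```python
import math


def dot(a, b):
    return sum(x * y for x, y in zip(a, b))


def soft_read(query, slots):
    """Blend all slots with softmax weights (a guess at implicit retrieval)."""
    scores = [dot(query, s) for s in slots]
    peak = max(scores)
    exps = [math.exp(s - peak) for s in scores]
    total = sum(exps)
    dim = len(slots[0])
    return [sum(e / total * s[i] for e, s in zip(exps, slots)) for i in range(dim)]


def hard_read(query, slots):
    """Return the single best-matching slot unchanged (a guess at explicit retrieval)."""
    return max(slots, key=lambda s: dot(query, s))


implicit_slots = [[1.0, 0.0], [0.0, 1.0]]
explicit_slots = [[2.0, 0.0], [0.0, 2.0]]
query = [1.0, 0.0]

blended = soft_read(query, implicit_slots)  # mixture leaning toward the first slot
exact = hard_read(query, explicit_slots)    # the first explicit slot, returned as stored
```

The soft read always returns a mixture, while the hard read returns a stored entry verbatim, which is the property one would want if explicit entries hold facts that must not be averaged together.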

The arrow from the rightmost green bar to the right-hand panel suggests that the fragmentary text ("presi", "dent", etc.) illustrates the contents of a single explicit memory entry, possibly as tokens, keys, or values, though the diagram does not label what the numbers mean. Overall, the diagram shows an architecture that supplements the knowledge held in a neural network's weights with a structured, retrievable memory.