## Bar Chart: KGoT Performance Improvement vs. HF Agents

### Overview

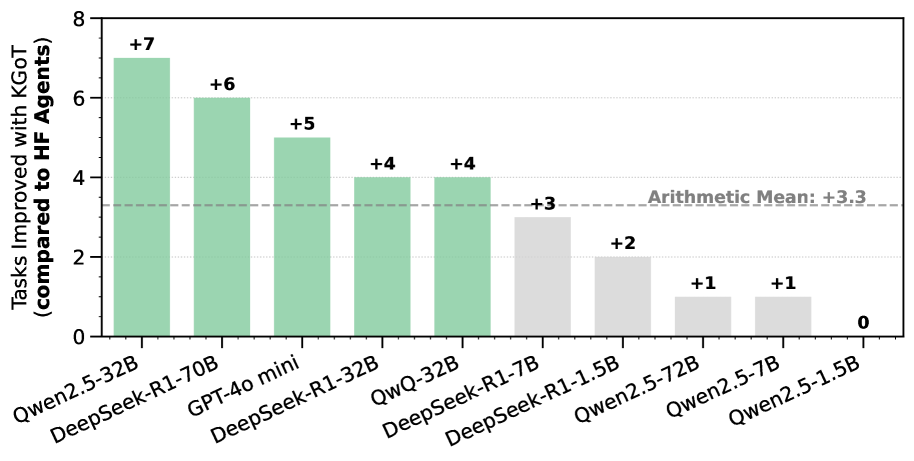

This vertical bar chart compares ten large language models (LLMs) run with "KGoT" (likely a method or framework) against the same models run with "HF Agents" (likely Hugging Face Agents). Each bar shows how many more tasks a model completes with KGoT than with HF Agents. The bars are sorted in descending order of improvement.

### Components/Axes

* **Y-Axis (Vertical):** Labeled "Tasks Improved with KGoT (compared to HF Agents)". The scale runs from 0 to 8, with major tick marks at intervals of 2 (0, 2, 4, 6, 8).

* **X-Axis (Horizontal):** Lists the names of 10 different AI models. The labels are rotated approximately 45 degrees for readability.

* **Data Series:** A single series of bars representing the improvement score for each model.

* **Reference Line:** A horizontal dashed gray line labeled "Arithmetic Mean: +3.3" is positioned at the y-value of 3.3.

* **Bar Labels:** Each bar has a numerical value label directly above it (e.g., "+7", "+6").

* **Color Coding:** Bars are colored in two distinct shades. The first five bars (from left) are a light green/teal color. The remaining five bars are a light gray color. The color change appears to correspond to whether the value is above (green) or below (gray) the arithmetic mean line; no bar sits exactly at the mean of 3.3.

### Detailed Analysis

The chart displays the following data points, from left to right:

1. **Qwen2.5-32B:** Green bar. Value: **+7**. This is the highest improvement shown.

2. **DeepSeek-R1-70B:** Green bar. Value: **+6**.

3. **GPT-4o mini:** Green bar. Value: **+5**.

4. **DeepSeek-R1-32B:** Green bar. Value: **+4**.

5. **QwQ-32B:** Green bar. Value: **+4**.

6. **DeepSeek-R1-7B:** Gray bar. Value: **+3**. This is the first bar below the mean line.

7. **DeepSeek-R1-1.5B:** Gray bar. Value: **+2**.

8. **Qwen2.5-72B:** Gray bar. Value: **+1**.

9. **Qwen2.5-7B:** Gray bar. Value: **+1**.

10. **Qwen2.5-1.5B:** Gray bar. Value: **0**. This model shows no improvement.

**Trend Verification:** The bars form a descending staircase from left to right: heights never increase from one bar to the next. The tallest bar (+7) is on the far left, and the final bar on the far right has zero height. Two pairs of adjacent bars are tied: "DeepSeek-R1-32B" and "QwQ-32B" at +4, and "Qwen2.5-72B" and "Qwen2.5-7B" at +1.
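The values listed above can be cross-checked against the chart's reference line and color split. The following is a minimal sketch that assumes the bar labels were transcribed correctly; the variable names are illustrative and not taken from the chart's source.

```python
from statistics import mean

# Improvement values transcribed from the bar labels, left to right.
improvements = {
    "Qwen2.5-32B": 7,
    "DeepSeek-R1-70B": 6,
    "GPT-4o mini": 5,
    "DeepSeek-R1-32B": 4,
    "QwQ-32B": 4,
    "DeepSeek-R1-7B": 3,
    "DeepSeek-R1-1.5B": 2,
    "Qwen2.5-72B": 1,
    "Qwen2.5-7B": 1,
    "Qwen2.5-1.5B": 0,
}

values = list(improvements.values())
avg = mean(values)  # 33 tasks over 10 models

# The chart is sorted: no bar is taller than the one to its left.
is_descending = all(a >= b for a, b in zip(values, values[1:]))

# Assumed color rule: green if above the mean, gray if below.
above_mean = [name for name, v in improvements.items() if v > avg]
below_mean = [name for name, v in improvements.items() if v < avg]

print(f"mean = {avg:+.1f}")
print(f"descending = {is_descending}")
print(f"above mean: {len(above_mean)}, below mean: {len(below_mean)}")
```

The ten labels sum to 33, which reproduces the "+3.3" reference line, and the above/below split matches the five green and five gray bars.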

### Key Observations

* **Performance Spread:** There is a significant range in KGoT's effectiveness, from a high of +7 additional tasks to a low of 0.

* **Model Size vs. Improvement:** Improvement does not track parameter count consistently across models. For example, the 70B DeepSeek model shows +6 improvement, while the 72B Qwen model shows only +1, and the 32B Qwen model shows the highest improvement (+7). Within the DeepSeek-R1 family alone, however, the gain does rise with size (+2, +3, +4, +6 for 1.5B, 7B, 32B, 70B).

* **Clustering:** The top five performers (all above the mean) are a mix of models from different families (Qwen, DeepSeek, GPT, QwQ). The bottom five performers (all below the mean) are exclusively from the DeepSeek-R1 and Qwen2.5 families, but include both small and large variants (e.g., 72B and 1.5B).

* **Mean Benchmark:** The arithmetic mean improvement across all listed models is +3.3 tasks. Five models perform above this average, and five perform below it; none lands exactly on the mean.
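The size observation above can be checked per model family. This sketch parses family and parameter count from the x-axis labels, which is an assumption about how the names are structured; `parse_label` is a hypothetical helper, and "GPT-4o mini" is skipped because its label states no size.

```python
import re
from collections import defaultdict

# Improvement values transcribed from the bar labels.
improvements = {
    "Qwen2.5-32B": 7, "DeepSeek-R1-70B": 6, "GPT-4o mini": 5,
    "DeepSeek-R1-32B": 4, "QwQ-32B": 4, "DeepSeek-R1-7B": 3,
    "DeepSeek-R1-1.5B": 2, "Qwen2.5-72B": 1, "Qwen2.5-7B": 1,
    "Qwen2.5-1.5B": 0,
}

def parse_label(name):
    """Split '<family>-<size>B' into (family, size in billions).

    Returns None when the label carries no parameter count.
    """
    m = re.fullmatch(r"(.+)-(\d+(?:\.\d+)?)B", name)
    return (m.group(1), float(m.group(2))) if m else None

# Collect (size, gain) pairs per family, sorted by size.
by_family = defaultdict(list)
for name, gain in improvements.items():
    parsed = parse_label(name)
    if parsed is not None:
        family, size = parsed
        by_family[family].append((size, gain))
for pairs in by_family.values():
    pairs.sort()

# A family is "monotonic" if the gain never drops as size grows.
# Families with a single model are left out of this check.
monotonic = {
    family: all(g1 <= g2 for (_, g1), (_, g2) in zip(pairs, pairs[1:]))
    for family, pairs in by_family.items()
    if len(pairs) > 1
}

for family, pairs in by_family.items():
    print(family, pairs)
print(monotonic)
```

Under these assumptions the DeepSeek-R1 gains increase with size, while the Qwen2.5 gains peak at 32B and fall back at 72B. With only four models per family, this is a descriptive check rather than a statistical result.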

### Interpretation

The data suggests that KGoT provides a measurable performance boost over HF Agents for nine of the ten tested models, with an average gain of 3.3 tasks. However, its efficacy is highly model-dependent.

The lack of a consistent size-to-benefit relationship across model families implies that KGoT's advantages may stem from architectural compatibility, training data alignment, or specific capabilities of the base model rather than raw scale. The chart does not test any of these explanations directly. The largest model with a stated parameter count (Qwen2.5-72B) shows minimal gain (+1), while a mid-sized model from the same family (Qwen2.5-32B) shows the maximum gain (+7). This indicates that simply scaling up a model does not guarantee better utilization of the KGoT framework.

The zero improvement for Qwen2.5-1.5B might suggest a lower bound on model capability below which KGoT offers no advantage. Size alone cannot be that bound, though, because DeepSeek-R1-1.5B has the same parameter count and still gains +2. For a technical audience deciding which models to pair with KGoT, the chart shows that model selection matters beyond simply choosing the largest available model.