
## Text Block: Case Study of Question Answering with Large Language Models

### Overview

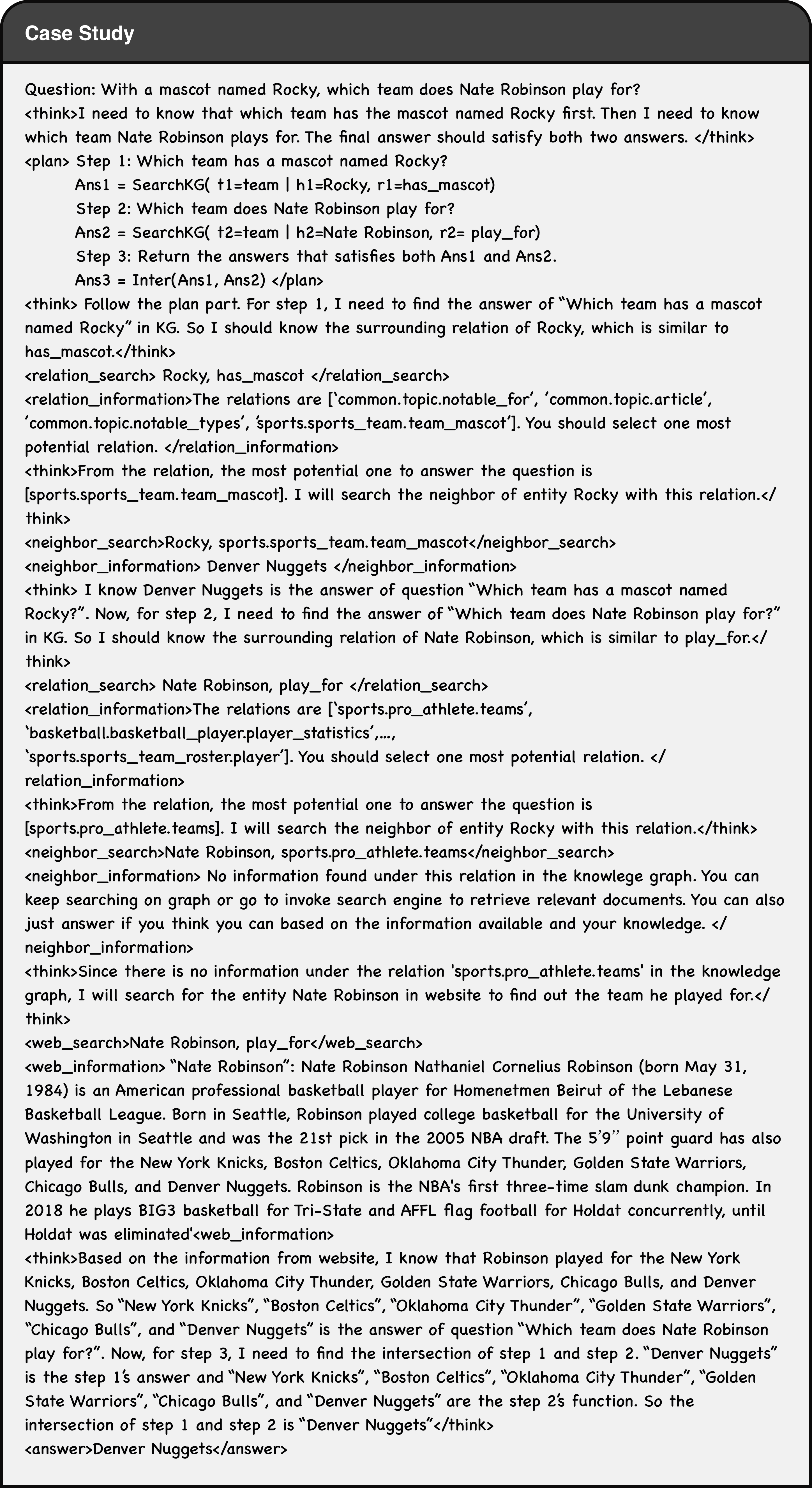

The image presents a case study of a large language model (LLM) answering a multi-hop question with the help of a knowledge graph (KG). The question is: "With a mascot named Rocky, which team does Nate Robinson play for?". The figure shows the LLM's reasoning broken into numbered steps, the KG queries it issues at each step, and the results those queries return. Together these illustrate a chain-of-thought approach interleaved with retrieval.

### Components

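The trace interleaves the LLM's reasoning, enclosed in `<think>` tags, with search calls to a knowledge graph and the information those calls return. It includes the following components, followed by the calls and results in the order they appear: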

* **Question:** The initial query.

* **Steps:** Numbered steps outlining the LLM's reasoning.

* **Ans1 & Ans2:** Intermediate answers generated by the LLM.

* **Ans:** The final answer.

* `<relation_search>Rocky, has_mascot</relation_search>`

* `<relation_information>The relations are ['common.topic.notable_for', 'common.topic.article', 'common.topic.notable_types', 'sports.sports_team.team_mascot'] You should select one most potential relation.</relation_information>`

* `<neighbor_search>Rocky, sports.sports_team.team_mascot</neighbor_search>`

* `<neighbor_information>Denver Nuggets</neighbor_information>`

* `<relation_search>Nate Robinson, play_for</relation_search>`

* `<relation_information>The relations are ['sports.pro_athlete.teams', 'basketball.basketball_player.player_statistics', 'sports.sports_team.roster.player'] You should select one most potential relation.</relation_information>`

* `<neighbor_search>Nate Robinson, sports.pro_athlete.teams</neighbor_search>`

* `<neighbor_information>No information found under this relation available in knowledge graph. Can not answer question on Nate Robinson to determine the teammate. I will ignore this step and will skip to the next step.</neighbor_information>`

* Ans = Denver Nuggets
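The tagged calls above follow a simple pattern: each opening tag is matched by a closing tag, and each search body holds an entity and a relation separated by a comma. A minimal Python sketch for pulling such a trace apart might look like this. The `TRACE` string is a shortened, hypothetical excerpt of the figure's trace, and `parse_trace` and `split_call` are illustrative helper names, not part of the system in the case study.

```python
import re

# Hypothetical, shortened excerpt of a tagged trace, mirroring the tags in the figure.
TRACE = (
    "<relation_search>Rocky, has_mascot</relation_search>"
    "<relation_information>The relations are ['common.topic.notable_for', "
    "'sports.sports_team.team_mascot']</relation_information>"
    "<neighbor_search>Rocky, sports.sports_team.team_mascot</neighbor_search>"
    "<neighbor_information>Denver Nuggets</neighbor_information>"
)

# Matches <tag>body</tag>; the backreference \1 forces the closing tag to match the opening one.
# Nested tags (e.g. calls wrapped in <think>) are not handled: the outermost pair would be returned.
TAG_PATTERN = re.compile(r"<(\w+)>(.*?)</\1>", re.DOTALL)


def parse_trace(trace: str) -> list[tuple[str, str]]:
    """Return (tag, body) pairs in the order they appear in the trace."""
    return [(m.group(1), m.group(2).strip()) for m in TAG_PATTERN.finditer(trace)]


def split_call(body: str) -> tuple[str, str]:
    """Split a search body such as 'Rocky, has_mascot' into (entity, relation)."""
    entity, relation = (part.strip() for part in body.split(",", 1))
    return entity, relation


if __name__ == "__main__":
    for tag, body in parse_trace(TRACE):
        if tag.endswith("_search"):
            print(tag, split_call(body))
        else:
            print(tag, body)
```

Splitting on the first comma only keeps dotted relation names such as `sports.sports_team.team_mascot` intact.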

### Key Observations

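* The LLM retrieves facts from a knowledge graph (KG) through two kinds of calls: `relation_search` lists candidate relations for an entity, and `neighbor_search` follows a chosen relation to neighboring entities.

* The LLM breaks the multi-hop question into smaller sub-questions: which team has the mascot Rocky, and which team Nate Robinson plays for.

* For each sub-question, the LLM selects one relation from the candidates the KG returns (for example, `sports.sports_team.team_mascot` for Rocky and `sports.pro_athlete.teams` for Nate Robinson).

* When a lookup returns nothing (Nate Robinson's team affiliation), the LLM skips that step instead of halting.

* The final answer, Denver Nuggets, comes from the mascot lookup alone; the trace never confirms it against Nate Robinson's team record.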
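The retrieve-then-fall-back behavior described in these observations can be sketched over a toy in-memory graph. This is a minimal sketch, not the system from the case study. The dictionary-based `KG`, the keyword-matching `select_relation` (a stand-in for the LLM's own relation choice), and the set-intersection step in `answer` are all hypothetical. Relation names are copied from the trace, but the neighbor lists are illustrative except for the Rocky to Denver Nuggets edge and the empty `sports.pro_athlete.teams` entry.

```python
from typing import Optional

# Toy knowledge graph: entity -> relation -> neighbor entities.
# Relation names come from the figure's trace; neighbor lists are illustrative.
# Only the Rocky -> Denver Nuggets edge and the empty sports.pro_athlete.teams
# entry reflect what the trace reports.
KG: dict[str, dict[str, list[str]]] = {
    "Rocky": {
        "common.topic.notable_for": [],
        "common.topic.article": [],
        "common.topic.notable_types": [],
        "sports.sports_team.team_mascot": ["Denver Nuggets"],
    },
    "Nate Robinson": {
        "sports.pro_athlete.teams": [],  # "No information found under this relation"
        "basketball.basketball_player.player_statistics": [],
        "sports.sports_team.roster.player": [],
    },
}


def relation_search(entity: str) -> list[str]:
    """Candidate relations for an entity (cf. <relation_information>)."""
    return list(KG.get(entity, {}))


def neighbor_search(entity: str, relation: str) -> list[str]:
    """Entities reached by following one relation (cf. <neighbor_information>)."""
    return KG.get(entity, {}).get(relation, [])


def select_relation(candidates: list[str], keyword: str) -> Optional[str]:
    """Hypothetical stand-in for the LLM's choice: first relation containing keyword."""
    for relation in candidates:
        if keyword in relation:
            return relation
    return None


def answer(steps: list[tuple[str, str]]) -> list[str]:
    """Run each (entity, keyword) step, skip empty lookups, intersect what remains."""
    candidate_sets = []
    for entity, keyword in steps:
        relation = select_relation(relation_search(entity), keyword)
        neighbors = neighbor_search(entity, relation) if relation else []
        if not neighbors:
            continue  # mirrors the trace: skip a step whose lookup returns nothing
        candidate_sets.append(set(neighbors))
    if not candidate_sets:
        return []
    # With several non-empty steps, only entities satisfying every constraint survive.
    return sorted(set.intersection(*candidate_sets))


if __name__ == "__main__":
    steps = [("Rocky", "mascot"), ("Nate Robinson", "teams")]
    print(answer(steps))  # ['Denver Nuggets'], backed by the mascot step only
```

Because the player step is skipped, the intersection has only one set to work with, so the result is exactly as strong as the mascot lookup. If the KG did return Nate Robinson's teams, the same intersection would enforce both constraints of the question.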

### Interpretation

This case study demonstrates question answering that combines an LLM with a knowledge graph. The LLM does not simply retrieve information; it *reasons* through the problem, decomposing the question, choosing which relation to follow at each step, and recording those decisions in `<think>` tags that make its decision-making inspectable. Pairing explicit graph queries with free-form language reasoning amounts to a form of symbolic reasoning combined with statistical language modeling, and the reliance on relation and neighbor searches indicates that a graph-based knowledge representation is central to the approach. The trace also exposes a limitation: the KG has no team information for Nate Robinson, so the LLM skips that step and answers from the mascot lookup alone. The fallback keeps the process from stalling and yields a plausible answer, but that answer satisfies only the mascot constraint and is not verified against the player. With that caveat, the approach points toward more reliable question-answering systems.