
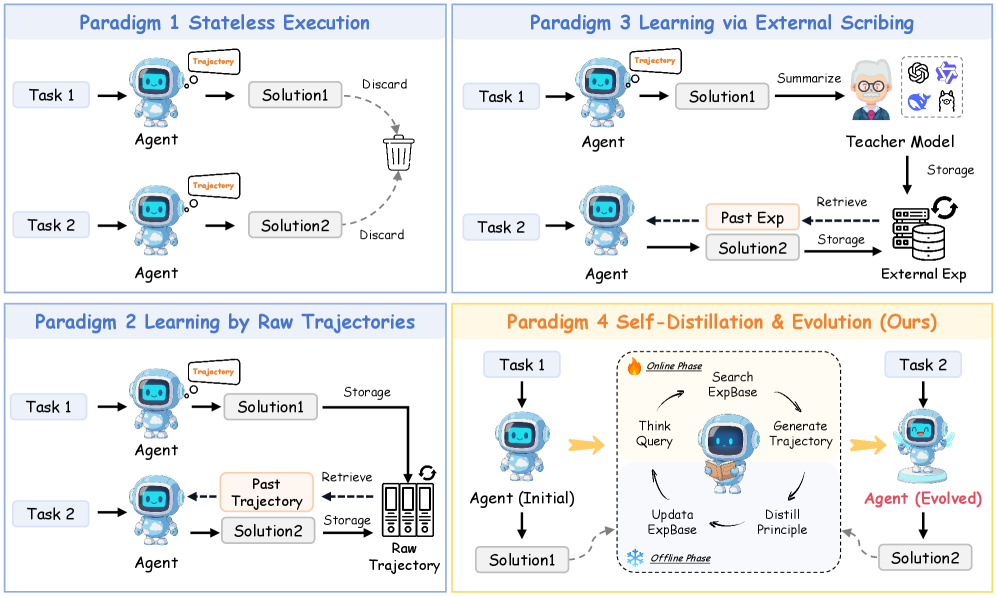

## Diagram: Comparison of Four Agent Learning Paradigms

### Overview

The image is a technical diagram comparing four distinct paradigms for training or operating an AI agent. It is divided into four rectangular panels, each illustrating a different approach. The top-left panel (Paradigm 1) shows a stateless, discard-based method. The bottom-left panel (Paradigm 2) introduces learning from stored raw trajectories. The top-right panel (Paradigm 3) depicts learning via an external "Teacher Model" and curated experience. The bottom-right panel (Paradigm 4), highlighted with a yellow border and labeled "(Ours)", presents a novel "Self-Distillation & Evolution" method with distinct online and offline phases.

### Components/Axes

The diagram is structured as a 2x2 grid of panels. Each panel contains:

* **Title:** A blue-text heading at the top (e.g., "Paradigm 1 Stateless Execution").

* **Core Components:** Icons representing an "Agent" (a blue robot), "Task" inputs, "Solution" outputs, and various storage or processing elements.

* **Flow Arrows:** Solid black arrows indicating the primary process flow. Dashed arrows indicate secondary flows like storage, retrieval, or feedback.

* **Text Labels:** Precise labels for every component and process step.

### Detailed Analysis

#### **Paradigm 1: Stateless Execution (Top-Left Panel)**

* **Flow:** Linear and non-persistent.

* **Process for Task 1:** `Task 1` → `Agent` (with a thought bubble labeled "Trajectory") → `Solution1` → A dashed arrow labeled "Discard" points to a trash can icon.

* **Process for Task 2:** Identical flow: `Task 2` → `Agent` (with "Trajectory") → `Solution2` → "Discard" to trash can.

* **Key Text:** "Paradigm 1 Stateless Execution", "Task 1", "Task 2", "Agent", "Trajectory", "Solution1", "Solution2", "Discard".
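
The stateless flow in this panel can be sketched in a few lines of Python. This is an illustrative sketch only: `toy_agent`, `run_stateless`, and the `(trajectory, solution)` return shape are hypothetical names chosen for the example, not anything shown in the diagram.

```python
from typing import Callable, List, Tuple

# Hypothetical agent signature: task -> (trajectory, solution).
Agent = Callable[[str], Tuple[List[str], str]]


def toy_agent(task: str) -> Tuple[List[str], str]:
    """Stand-in agent: records two steps and returns a placeholder solution."""
    trajectory = [f"read {task}", f"answer {task}"]
    return trajectory, f"solution for {task}"


def run_stateless(agent: Agent, tasks: List[str]) -> List[str]:
    """Solve each task independently; nothing is carried over between tasks."""
    solutions = []
    for task in tasks:
        # The trajectory is never stored, mirroring the "Discard" arrow.
        _trajectory, solution = agent(task)
        solutions.append(solution)
    return solutions


solutions = run_stateless(toy_agent, ["Task 1", "Task 2"])
```

Because the agent receives only the current task, handling Task 2 cannot benefit from anything that happened during Task 1.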

#### **Paradigm 2: Learning by Raw Trajectories (Bottom-Left Panel)**

* **Flow:** Introduces persistent storage of complete interaction histories.

* **Process for Task 1:** `Task 1` → `Agent` ("Trajectory") → `Solution1`. A solid arrow labeled "Storage" leads from `Solution1` to a server rack icon labeled "Raw Trajectory".

* **Process for Task 2:** `Task 2` → `Agent`. A dashed arrow labeled "Retrieve" points from the "Raw Trajectory" storage to the Agent, with a label "Past Trajectory" in a beige box. The Agent then produces `Solution2`, which is also stored.

* **Key Text:** "Paradigm 2 Learning by Raw Trajectories", "Storage", "Retrieve", "Past Trajectory", "Raw Trajectory".
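
A minimal sketch of the store-and-retrieve loop, under stated assumptions: `RawTrajectoryStore` and `toy_agent_with_memory` are hypothetical names, and retrieval here simply returns every stored trajectory, since the diagram does not specify how past trajectories are selected.

```python
from typing import List, Tuple


class RawTrajectoryStore:
    """Hypothetical store that keeps complete, unprocessed trajectories."""

    def __init__(self) -> None:
        self.trajectories: List[List[str]] = []

    def store(self, trajectory: List[str]) -> None:
        self.trajectories.append(list(trajectory))

    def retrieve(self) -> List[List[str]]:
        # Assumption: return everything; a real system would rank or filter.
        return [list(t) for t in self.trajectories]


def toy_agent_with_memory(
    task: str, past: List[List[str]]
) -> Tuple[List[str], str]:
    """Stand-in agent that notes how many past trajectories it was given."""
    trajectory = [f"recalled {len(past)} past trajectories", f"answer {task}"]
    return trajectory, f"solution for {task}"


store = RawTrajectoryStore()
first_steps = []
for task in ["Task 1", "Task 2"]:
    past = store.retrieve()                      # "Retrieve" arrow
    trajectory, solution = toy_agent_with_memory(task, past)
    store.store(trajectory)                      # "Storage" arrow
    first_steps.append(trajectory[0])
```

The stored items are the raw trajectories themselves, so whatever the agent retrieves for Task 2 is as long and as unfiltered as the original run.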

#### **Paradigm 3: Learning via External Scribing (Top-Right Panel)**

* **Flow:** Involves an external "Teacher Model" to curate experiences.

* **Process for Task 1:** `Task 1` → `Agent` ("Trajectory") → `Solution1`. A solid arrow labeled "Summarize" leads to a "Teacher Model" icon (a person with glasses). The Teacher Model is associated with logos for ChatGPT, Claude, and others.

* **Process for Task 2:** The Teacher Model has a "Storage" arrow pointing to a database icon labeled "External Exp". For `Task 2`, a dashed "Retrieve" arrow brings "Past Exp" (beige box) from this database to the Agent, which then produces `Solution2`. The solution is also sent back for "Storage".

* **Key Text:** "Paradigm 3 Learning via External Scribing", "Summarize", "Teacher Model", "Storage", "Retrieve", "Past Exp", "External Exp".
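
The same loop with an external summarizer in the middle might look like the sketch below. All names are hypothetical, and `toy_teacher_summarize` is a plain string function standing in for the "Teacher Model"; in the setting the diagram depicts, that step would be a call to a separate model.

```python
from typing import List, Tuple


def toy_teacher_summarize(trajectory: List[str]) -> str:
    """Stand-in for the external teacher; condenses a trajectory to one line."""
    return f"lesson from {len(trajectory)} steps ending with '{trajectory[-1]}'"


def toy_agent_with_exp(task: str, past_exp: List[str]) -> Tuple[List[str], str]:
    """Stand-in agent that notes how many curated lessons it was given."""
    trajectory = [f"consulted {len(past_exp)} lessons", f"answer {task}"]
    return trajectory, f"solution for {task}"


external_exp: List[str] = []  # the "External Exp" database
first_steps = []
for task in ["Task 1", "Task 2"]:
    past_exp = list(external_exp)                    # "Retrieve" -> "Past Exp"
    trajectory, solution = toy_agent_with_exp(task, past_exp)
    lesson = toy_teacher_summarize(trajectory)       # "Summarize"
    external_exp.append(lesson)                      # "Storage"
    first_steps.append(trajectory[0])
```

The only structural change from the raw-trajectory sketch is that what gets stored is the teacher's summary rather than the trajectory itself.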

#### **Paradigm 4: Self-Distillation & Evolution (Ours) (Bottom-Right Panel)**

* **Flow:** A cyclical, self-improving process split into "Online Phase" and "Offline Phase".

* **Initial State:** `Task 1` → `Agent (Initial)` → `Solution1`.

* **Online Phase (Dashed Box):** The core loop. The Agent performs: `Think Query` → `Search ExpBase` → `Generate Trajectory` → `Distill Principle`. This loop is labeled with a flame icon and "Online Phase".

* **Offline Phase:** The "Distill Principle" step feeds into `Update ExpBase`, labeled with a snowflake icon and "Offline Phase".

* **Evolved State:** After the process, the agent is labeled `Agent (Evolved)` (in red text). It then handles `Task 2` to produce `Solution2`.

* **Key Text:** "Paradigm 4 Self-Distillation & Evolution (Ours)", "Task 1", "Agent (Initial)", "Solution1", "Online Phase", "Think Query", "Search ExpBase", "Generate Trajectory", "Distill Principle", "Offline Phase", "Update ExpBase", "Agent (Evolved)", "Task 2", "Solution2".

### Key Observations

1. **Progression of Complexity:** The paradigms progress from simple, forgetful execution (1), to memory of raw trajectories (2), to learning curated by an external teacher (3), and finally to autonomous self-improvement (4).

2. **Storage Evolution:** Storage changes from a trash can (1), to raw data servers (2), to a curated external database (3), to an internal "ExpBase" that is updated via self-distilled principles (4).

3. **Agent State:** The agent is static in Paradigms 1-3. Only in Paradigm 4 does it explicitly transform from an "Initial" to an "Evolved" state.

4. **Visual Emphasis:** Paradigm 4 is visually set apart by a yellow border, a more complex internal loop diagram, and the red "Evolved" label, marking it as the authors' proposed method.

5. **Direction of Learning:** Learning is absent in Paradigm 1, reactive in Paradigms 2 and 3 (both reuse stored past data), and self-initiated in Paradigm 4.

### Interpretation

This diagram argues for a shift in agent design. It implies that each earlier paradigm is limited: Paradigm 1 retains nothing, Paradigm 2 reuses raw, unfiltered trajectories, and Paradigm 3 depends on an external teacher model. The proposed Paradigm 4, "Self-Distillation & Evolution," is presented as the answer to these limits. It suggests that for an agent to improve, it must run a continuous internal cycle of experience ("Generate Trajectory"), reflection ("Distill Principle"), and knowledge base refinement ("Update ExpBase"). The "Online/Offline" distinction implies a separation between active task engagement and background consolidation of what was learned. The end state, visualized as "Agent (Evolved)", is an agent that becomes more capable over time through its own operation, with less reliance on external supervision or static datasets. Read this way, the diagram is a conceptual blueprint for self-improving agents rather than a specification of any one implementation.