## Diagram: Pruning Method for Large Transformer-Based Model

### Overview

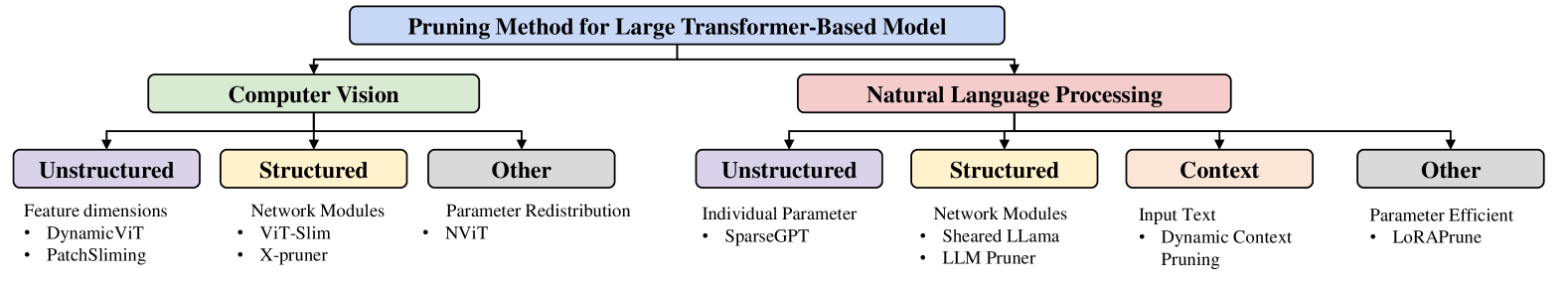

This is a hierarchical diagram outlining different pruning methods for large transformer-based models. The diagram branches into two main application areas: Computer Vision and Natural Language Processing. Each of these areas is further categorized into sub-types of pruning, with specific examples listed under each sub-type.

### Components/Axes

The diagram consists of rectangular nodes with rounded corners, connected by arrows indicating a hierarchical relationship. The nodes are color-coded to distinguish different levels and categories.

* **Top Level (Blue):** "Pruning Method for Large Transformer-Based Model" - This is the root node.
* **Second Level (Green and Pink):**
    * "Computer Vision" (Green)
    * "Natural Language Processing" (Pink)
* **Third Level (Purple, Yellow, Grey, Peach):** These nodes represent categories of pruning methods within each application area.
    * **Under Computer Vision:**
        * "Unstructured" (Purple)
        * "Structured" (Yellow)
        * "Other" (Grey)
    * **Under Natural Language Processing:**
        * "Unstructured" (Purple)
        * "Structured" (Yellow)
        * "Context" (Peach)
        * "Other" (Grey)
* **Fourth Level (Textual Lists):** These are lists of specific pruning techniques or characteristics associated with the third-level categories.

### Content Details

**Under "Computer Vision":**

* **Unstructured (Purple):**
    * Feature dimensions
    * DynamicViT
    * PatchSliming
* **Structured (Yellow):**
    * Network Modules
    * ViT-Slim
    * X-pruner
* **Other (Grey):**
    * Parameter Redistribution
    * NViT

**Under "Natural Language Processing":**

* **Unstructured (Purple):**
    * Individual Parameter
    * SparseGPT
* **Structured (Yellow):**
    * Network Modules
    * Sheared Llama
    * LLM Pruner
* **Context (Peach):**
    * Input Text
    * Dynamic Context Pruning
* **Other (Grey):**
    * Parameter Efficient
    * LoRAPrune
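
For readers who want to query the hierarchy programmatically, the lists above can be transcribed into a nested mapping. The sketch below is one possible encoding, not part of the diagram: the names `PRUNING_TAXONOMY`, `find_entry`, and `categories_unique_to` are hypothetical, and the entries are copied verbatim from the lists without distinguishing pruning targets from method names.

```python
# Taxonomy transcribed from the diagram: domain -> category -> listed entries.
# Entries are copied as they appear in the lists above (spelling unchanged).
PRUNING_TAXONOMY = {
    "Computer Vision": {
        "Unstructured": ["Feature dimensions", "DynamicViT", "PatchSliming"],
        "Structured": ["Network Modules", "ViT-Slim", "X-pruner"],
        "Other": ["Parameter Redistribution", "NViT"],
    },
    "Natural Language Processing": {
        "Unstructured": ["Individual Parameter", "SparseGPT"],
        "Structured": ["Network Modules", "Sheared Llama", "LLM Pruner"],
        "Context": ["Input Text", "Dynamic Context Pruning"],
        "Other": ["Parameter Efficient", "LoRAPrune"],
    },
}


def find_entry(name):
    """Return every (domain, category) pair whose list contains `name`."""
    return [
        (domain, category)
        for domain, categories in PRUNING_TAXONOMY.items()
        for category, entries in categories.items()
        if name in entries
    ]


def categories_unique_to(domain):
    """Return the categories that appear under `domain` and no other domain."""
    others = set()
    for other_domain, categories in PRUNING_TAXONOMY.items():
        if other_domain != domain:
            others.update(categories)
    return sorted(set(PRUNING_TAXONOMY[domain]) - others)
```

With this encoding, `find_entry("Network Modules")` returns a pair for each domain, since that entry is listed under "Structured" in both branches, and `categories_unique_to("Natural Language Processing")` isolates the "Context" category.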

### Key Observations

The diagram clearly separates pruning methods based on their application domain (Computer Vision vs. Natural Language Processing). Within each domain, pruning is further classified into common categories like "Unstructured," "Structured," and "Other." The "Natural Language Processing" domain also includes a specific "Context" category, suggesting a distinct approach for handling contextual information in NLP models. The diagram provides concrete examples of pruning techniques for each sub-category.

### Interpretation

This diagram serves as a taxonomy of pruning methods for large transformer-based models, organized first by application domain and then by pruning category. The distinction between "Unstructured" and "Structured" pruning is fundamental: "Unstructured" typically refers to removing individual weights or parameters, while "Structured" refers to removing entire structures such as neurons, channels, or layers. The "Other" categories indicate that some methods do not fit neatly into the primary classifications. The "Context" category appears only under NLP and lists "Input Text" as its target, which suggests that these methods prune the input context rather than model parameters.

The specific examples (e.g., DynamicViT, SparseGPT, LoRAPrune) give researchers and practitioners concrete starting points for implementing or comparing pruning techniques. Overall, the structure reflects a systematic approach to categorizing the methods available for compressing large transformer architectures.
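
To make the unstructured/structured distinction concrete, here is a minimal sketch in plain Python. It assumes a simple magnitude criterion (smallest absolute value, or smallest row L1 norm), which is a common baseline chosen here for illustration; it does not implement any of the methods named in the diagram, and the function names are hypothetical.

```python
def prune_unstructured(weights, sparsity):
    """Zero out the smallest-magnitude individual weights.

    The matrix keeps its shape; only single entries are set to zero.
    """
    positions = [
        (r, c) for r in range(len(weights)) for c in range(len(weights[r]))
    ]
    # Rank every individual weight by absolute value, smallest first.
    positions.sort(key=lambda rc: abs(weights[rc[0]][rc[1]]))
    k = int(len(positions) * sparsity)  # number of weights to zero
    pruned = [row[:] for row in weights]
    for r, c in positions[:k]:
        pruned[r][c] = 0.0
    return pruned


def prune_structured(weights, num_rows):
    """Remove the `num_rows` rows with the smallest L1 norm.

    Each row stands in for a whole structure (e.g., a neuron), so the
    returned matrix is physically smaller.
    """
    order = sorted(
        range(len(weights)), key=lambda r: sum(abs(w) for w in weights[r])
    )
    dropped = set(order[:num_rows])
    return [row[:] for r, row in enumerate(weights) if r not in dropped]


W = [
    [0.9, -0.1, 0.4],
    [0.05, 0.02, -0.03],
    [-0.7, 0.6, 0.2],
]

sparse_W = prune_unstructured(W, sparsity=0.5)  # same 3x3 shape, 4 zeros
small_W = prune_structured(W, num_rows=1)       # 2x3: the middle row is gone
```

The unstructured result keeps the original dimensions and typically needs sparse storage or kernels to yield a speedup, whereas the structured result is a smaller dense matrix.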