## Line Chart: Model Accuracy Comparison

### Overview

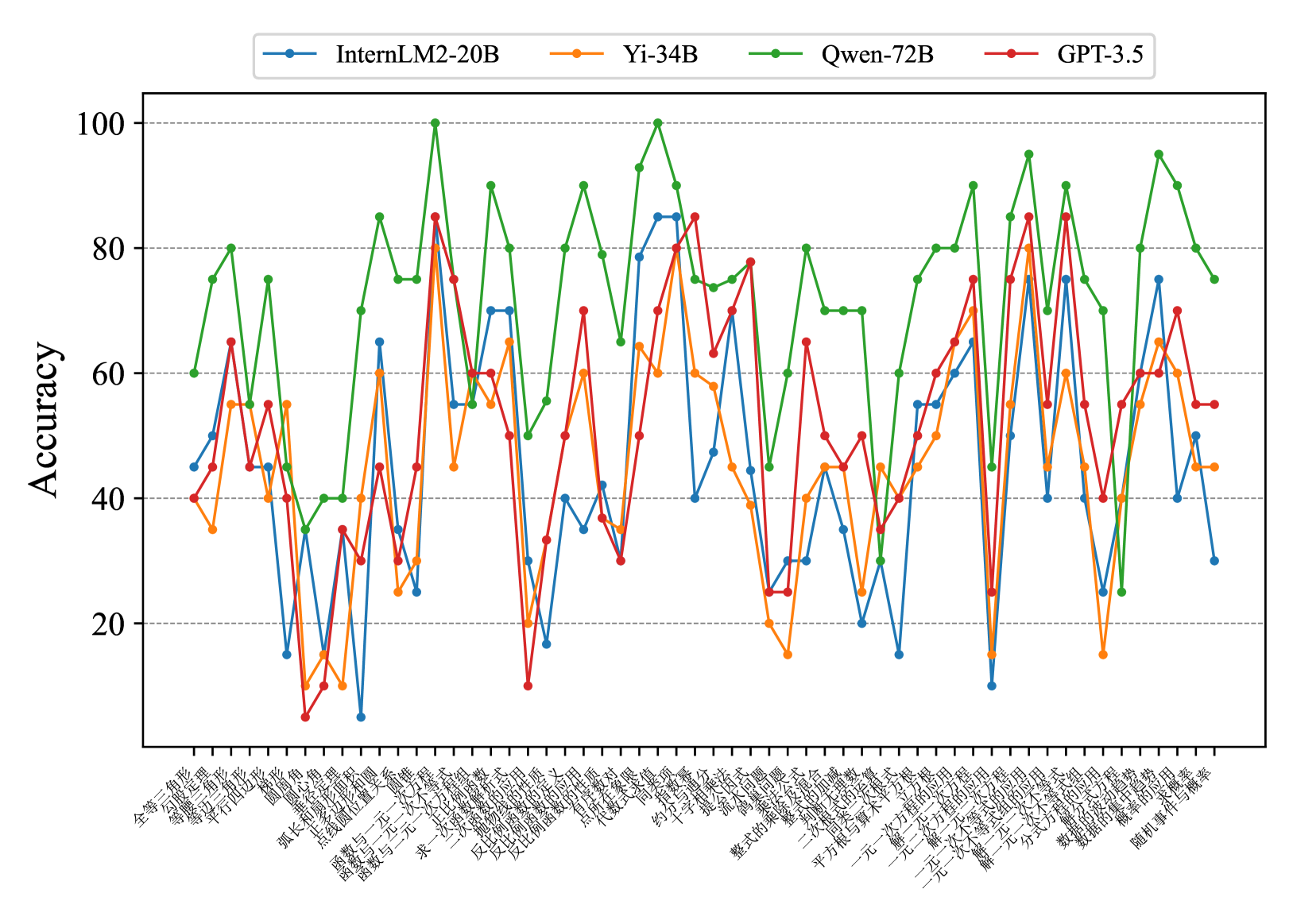

The image is a line chart comparing the accuracy of four language models (InternLM2-20B, Yi-34B, Qwen-72B, and GPT-3.5) across 20 mathematical problem types. The y-axis shows accuracy on a scale from 0 to 100. The x-axis lists the problem types, labeled in Chinese.

### Components/Axes

* **Title:** There is no explicit title on the chart.

* **X-axis:** Represents different mathematical problem types, labeled in Chinese. The labels are densely packed and rotated to fit.

* **Y-axis:** Represents "Accuracy," ranging from 0 to 100 in increments of 20. Horizontal gridlines are present at each increment.

* **Legend:** Located at the top of the chart.

    * Blue line: InternLM2-20B

    * Orange line: Yi-34B

    * Green line: Qwen-72B

    * Red line: GPT-3.5

### Detailed Analysis

**X-Axis Labels (Chinese with approximate English Translation):**

Each label is transcribed below with its pinyin and an approximate English translation:

1. 全等三角形 (Quán děng sānjiǎoxíng): Congruent triangles

2. 三角形判定定理 (Sānjiǎoxíng pàndìng dìnglǐ): Triangle determination theorem

3. 等腰三角形 (Děng yāo sānjiǎoxíng): Isosceles triangle

4. 平行四边形 (Píngxíng sìbiānxíng): Parallelogram

5. 圆 (Yuán): Circle

6. 弧长与扇形面积 (Hú cháng yǔ shànxíng miànjī): Arc length and sector area

7. 函数与一次函数 (Hánshù yǔ yīcì hánshù): Function and linear function

8. 反比例函数 (Fǎn bǐlì hánshù): Inverse proportion function

9. 一次函数与方程 (Yīcì hánshù yǔ fāngchéng): Linear function and equation

10. 求一次函数解析式 (Qiú yīcì hánshù jiěxī shì): Finding the analytical expression of a linear function

11. 代数式 (Dàishùshì): Algebraic expression

12. 整式的乘法 (Zhěng shì de chéngfǎ): Multiplication of polynomials

13. 约分 (Yuē fēn): Reduction of fractions

14. 平方根 (Píngfāng gēn): Square root

15. 一元一次不等式 (Yī yuán yīcì bù děngshì): Linear inequality in one variable

16. 一元一次方程 (Yī yuán yīcì fāngchéng): Linear equation in one variable

17. 二元一次方程组 (Èr yuán yīcì fāngchéng zǔ): System of linear equations in two variables

18. 分式的运算 (Fēnshì de yùnsuàn): Operations with algebraic fractions

19. 数据的整理 (Shùjù de zhěnglǐ): Data organization

20. 随机事件与概率 (Suíjī shìjiàn yǔ gàilǜ): Random events and probability

**Data Series Analysis:**

* **InternLM2-20B (Blue):** The accuracy fluctuates significantly across different problem types, ranging from approximately 10 to 70. There are several sharp drops and rises.

* **Yi-34B (Orange):** Similar to InternLM2-20B, the accuracy varies considerably. The range is approximately 30 to 75.

* **Qwen-72B (Green):** Generally shows higher accuracy compared to the other models, with values ranging from approximately 40 to 100. The fluctuations are still present, but the overall performance is better.

* **GPT-3.5 (Red):** The accuracy fluctuates, with values ranging from approximately 5 to 85.
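The ranges above can be compared by their width. The following is a minimal sketch that takes the approximate minimum and maximum values quoted in this list (read off the chart, not exact data) and computes each model's spread. The names `ranges` and `spread` are illustrative only.

```python
# Approximate (min, max) accuracy per model, as quoted in the list above.
# These are visual estimates from the chart, not measured values.
ranges = {
    "InternLM2-20B": (10, 70),
    "Yi-34B": (30, 75),
    "Qwen-72B": (40, 100),
    "GPT-3.5": (5, 85),
}


def spread(bounds):
    """Return the width of a (low, high) accuracy range."""
    low, high = bounds
    return high - low


spreads = {model: spread(bounds) for model, bounds in ranges.items()}
widest = max(spreads, key=spreads.get)
narrowest = min(spreads, key=spreads.get)

# Print models from the narrowest to the widest range.
for model, width in sorted(spreads.items(), key=lambda item: item[1]):
    print(f"{model}: spread of about {width} points")
```

Under these estimates, GPT-3.5 has the widest range and Yi-34B the narrowest, while Qwen-72B's range is as wide as InternLM2-20B's but sits 30 points higher.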

**Specific Data Points (Approximate):**

Precise values are hard to read off the chart because of its resolution and density. Approximate values for the first and last problem types are:

* **Problem 1 (全等三角形):**

    * InternLM2-20B: ~52

    * Yi-34B: ~40

    * Qwen-72B: ~60

    * GPT-3.5: ~45

* **Problem 20 (随机事件与概率):**

    * InternLM2-20B: ~40

    * Yi-34B: ~45

    * Qwen-72B: ~80

    * GPT-3.5: ~65
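As a quick check on these two problem types, the sketch below ranks the models and reports the leader's margin over the runner-up. It uses only the approximate values listed above. The `points` dictionary and the `leader` helper are hypothetical names introduced for illustration.

```python
# Approximate accuracies for the first and last problem types,
# keyed by problem number (1 = 全等三角形, 20 = 随机事件与概率).
# Values are visual estimates from the chart, not exact data.
points = {
    1: {"InternLM2-20B": 52, "Yi-34B": 40, "Qwen-72B": 60, "GPT-3.5": 45},
    20: {"InternLM2-20B": 40, "Yi-34B": 45, "Qwen-72B": 80, "GPT-3.5": 65},
}


def leader(scores):
    """Return (best_model, margin over the second-best model)."""
    ranked = sorted(scores, key=scores.get, reverse=True)
    best, runner_up = ranked[0], ranked[1]
    return best, scores[best] - scores[runner_up]


for problem, scores in points.items():
    best, margin = leader(scores)
    print(f"Problem {problem}: {best} leads by about {margin} points")
```

With these estimates, Qwen-72B leads on both problem types, though the runner-up differs: InternLM2-20B on problem 1 and GPT-3.5 on problem 20.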

### Key Observations

* Qwen-72B generally outperforms the other models across most problem types.

* All models exhibit significant fluctuations in accuracy depending on the problem type.

* Certain models perform particularly poorly on specific problem types (e.g., GPT-3.5 on problem 16, 一元一次方程, linear equation in one variable).

* The performance of InternLM2-20B and Yi-34B is relatively similar.

### Interpretation

The chart shows that model performance varies considerably across mathematical problem types, and the fluctuations point to each model's strengths and weaknesses in specific areas of mathematical reasoning. Qwen-72B's generally higher accuracy suggests it is the most robust of the four models on these problems. Even so, every model's accuracy drops on some problem types, so none of them is uniformly strong; specialized models or ensemble approaches may be needed for good performance across diverse mathematical tasks. Understanding exactly why particular problem types are hard for the models would require a closer look at the underlying problems.