## Diagram: Learnable Dimension Pruning with Rank Loss

### Overview

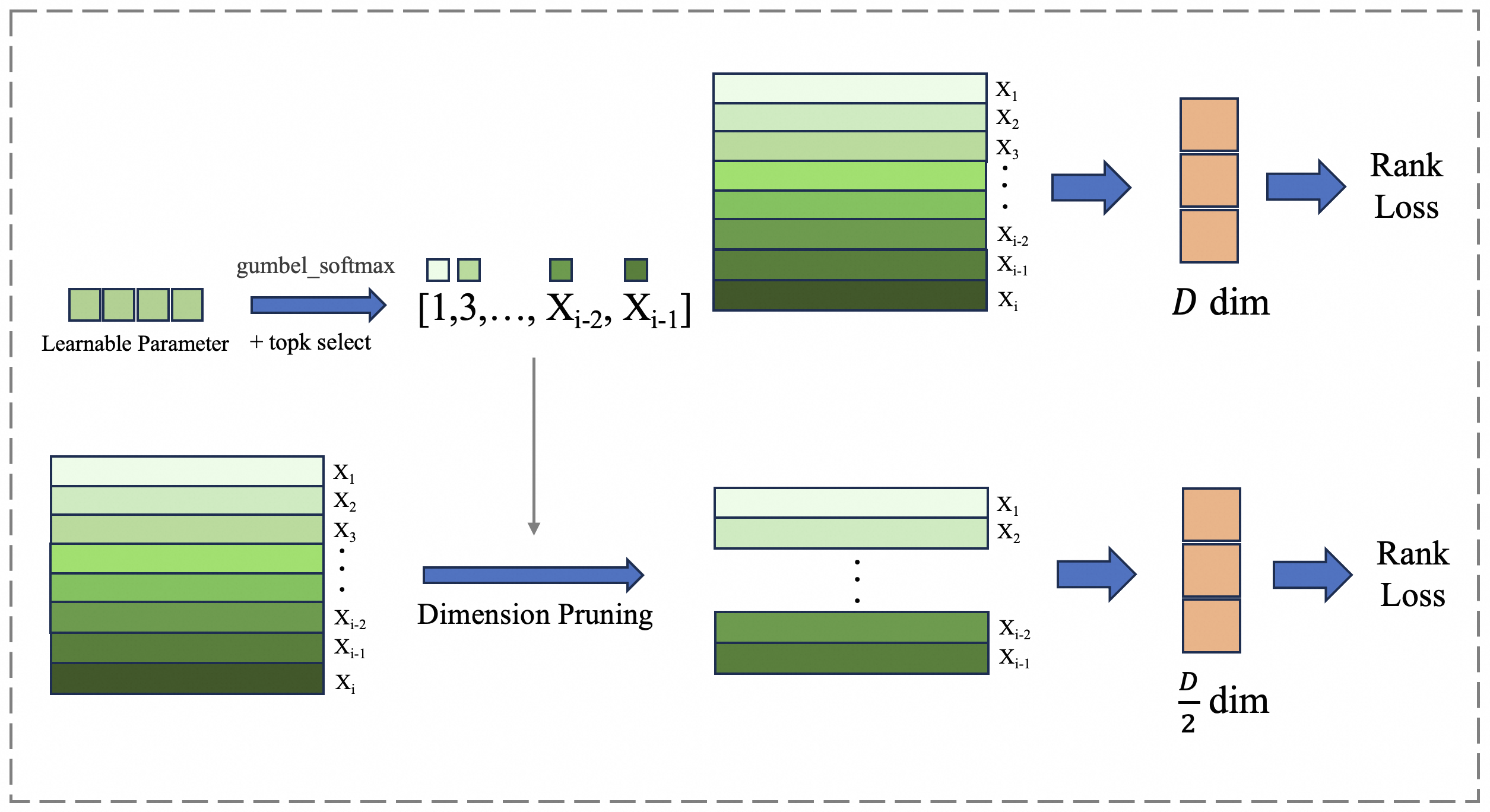

The image is a technical flowchart of a machine learning process for dimensionality reduction or feature selection. It shows a two-pathway system: a learnable parameter selects specific dimensions (features) of an input data stack, and a pruning step then removes the unselected ones. A "Rank Loss" is computed at the end of each pathway.

### Components/Axes

The diagram is organized into two main horizontal pathways (top and bottom) within a dashed-line border. All text is in English.

**Key Components & Labels:**

1. **Learnable Parameter:** A block of four light-green squares in the top-left.

2. **Selection Mechanism:** An arrow labeled `gumbel_softmax + topk select` points from the "Learnable Parameter" to a list.

3. **Selected Indices:** The list is denoted as `[1,3,..., X_{i-2}, X_{i-1}]`. Small colored squares (light green, medium green, dark green) are shown above this list.

4. **Input Data Stacks:** Two identical vertical stacks of colored rectangles representing data dimensions (features), one in the top-center and the other in the bottom-left. Each is labeled from top to bottom:

   * `X_1` (lightest green)
   * `X_2`
   * `X_3`
   * `...` (ellipsis)
   * `X_{i-2}`
   * `X_{i-1}`
   * `X_i` (darkest green)

5. **Dimension Pruning:** A blue arrow labeled `Dimension Pruning` points from the selected indices list to the bottom data stack.

6. **Output Dimension Blocks:** Two orange vertical blocks, one per pathway:

   * Top pathway: labeled `D dim`.
   * Bottom pathway: labeled `D/2 dim`.

7. **Loss Function:** The text `Rank Loss` appears twice, at the end of both the top and bottom pathways.

8. **Flow Arrows:** Blue arrows indicate the direction of data/process flow throughout the diagram.
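The `gumbel_softmax + topk select` arrow can be sketched with the Gumbel-top-k trick: adding independent Gumbel(0, 1) noise to a vector of logits and keeping the `k` largest entries samples `k` distinct indices without replacement, with the logits controlling which ones are likely. The minimal NumPy sketch below assumes the Learnable Parameter is a length-`D` logit vector and that `k = D // 2`; `gumbel_topk_indices` is a hypothetical helper, not code from the figure's source, and it returns 0-based indices whereas the figure's list starts at 1.

```python
import numpy as np


def gumbel_topk_indices(logits: np.ndarray, k: int, rng: np.random.Generator) -> np.ndarray:
    """Sample k distinct dimension indices with the Gumbel-top-k trick.

    Hypothetical helper: `logits` stands in for the diagram's Learnable Parameter.
    Returns sorted 0-based indices (the figure's list is 1-based).
    """
    if not 1 <= k <= logits.shape[0]:
        raise ValueError("k must be between 1 and the number of dimensions")
    # Gumbel(0, 1) noise via the inverse CDF; the tiny lower bound avoids log(0).
    u = rng.uniform(np.finfo(float).tiny, 1.0, size=logits.shape)
    gumbel = -np.log(-np.log(u))
    perturbed = logits + gumbel
    # argpartition on the negated scores puts the k largest entries first (unordered).
    top_k = np.argpartition(-perturbed, k - 1)[:k]
    return np.sort(top_k)


rng = np.random.default_rng(0)
D = 8
logits = np.zeros(D)  # untrained parameter: every dimension equally likely
print(gumbel_topk_indices(logits, D // 2, rng))
```

As training pushes some logits well above the others, the same call returns those dimensions almost every time, which is how the learnable parameter comes to encode "important" dimensions.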

### Detailed Analysis

**Spatial Layout and Flow:**

* **Top Pathway (Full Dimension):** Starts with the "Learnable Parameter" (top-left). The selection mechanism (`gumbel_softmax + topk select`) produces a list of selected indices, which points to the top "Input Data Stack" (top-center) to mark which rows (`X` features) are chosen. A blue arrow then carries the stack to the `D dim` block (top-right). Because this block keeps the full dimension `D`, the top pathway presumably represents the unpruned data, which flows into the first `Rank Loss` calculation.

* **Bottom Pathway (Pruned Dimension):** Starts with the second "Input Data Stack" (bottom-left). The same list of selected indices from the top pathway points down to this stack via the `Dimension Pruning` arrow. This results in a reduced stack (bottom-center) containing only the selected rows (e.g., `X_1`, `X_2`, `...`, `X_{i-2}`, `X_{i-1}`). This pruned data flows into the `D/2 dim` block (bottom-right), which then flows to a second `Rank Loss` calculation.
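The `Dimension Pruning` step amounts to indexing: the same selected indices keep a subset of coordinates from every vector. The minimal NumPy sketch below treats each `X` row of the stack as one coordinate of an embedding and assumes a batch stored as an `(N, D)` array; `prune_dimensions` and `mask_dimensions` are hypothetical helpers. It also checks a useful property: zeroing the unselected coordinates (a mask, shape stays `D`) and dropping them (shape becomes `D/2`) give identical dot products, so a similarity-based loss sees the same scores either way.

```python
import numpy as np


def prune_dimensions(embeddings: np.ndarray, keep_idx: np.ndarray) -> np.ndarray:
    """Drop unselected dimensions: (N, D) -> (N, len(keep_idx))."""
    return embeddings[:, np.asarray(keep_idx)]


def mask_dimensions(embeddings: np.ndarray, keep_idx: np.ndarray) -> np.ndarray:
    """Zero out unselected dimensions while keeping shape (N, D)."""
    mask = np.zeros(embeddings.shape[1])
    mask[np.asarray(keep_idx)] = 1.0
    return embeddings * mask  # the mask broadcasts across the N rows


rng = np.random.default_rng(0)
N, D = 3, 8
x = rng.normal(size=(N, D))
keep = np.array([0, 2, 5, 6])       # e.g. indices produced by the selection step

pruned = prune_dimensions(x, keep)  # shape (3, 4), i.e. D/2 columns
masked = mask_dimensions(x, keep)   # shape (3, 8), unselected columns set to 0

# Pairwise dot products agree, because zeroed coordinates contribute nothing.
print(np.allclose(pruned @ pruned.T, masked @ masked.T))  # prints True
```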

**Component Relationships:**

* Together, the "Learnable Parameter" and the `gumbel_softmax + topk select` mechanism act as the control unit that decides which dimensions (`X` features) to keep.

* The two "Input Data Stacks" represent the same original high-dimensional data.

* "Dimension Pruning" is the step that actually removes the unselected dimensions, producing a lower-dimensional representation of the same data.

* The `D dim` and `D/2 dim` blocks represent the data before pruning (all `D` dimensions) and after pruning (a reduced set, presumably half of the original dimension `D`), respectively.

* `Rank Loss` is the objective function applied to both the selected full-dimension representation and the pruned representation, likely to ensure the pruning preserves the relative ranking or structural information of the data.
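The figure does not say which rank loss is used. One common choice, shown here purely as an assumption, is a pairwise logistic (RankNet-style) loss: for every pair of candidates where a target ordering ranks `j` above `k`, it adds `log(1 + exp(-(s_j - s_k)))` on the predicted scores. In this sketch `target` holds hypothetical graded relevance labels, and the same function is applied once to scores from the full `D`-dimensional embeddings and once to scores from the pruned ones, mirroring the two `Rank Loss` boxes; `pairwise_rank_loss`, the labels, and the summed total are all illustrative.

```python
import numpy as np


def pairwise_rank_loss(target, scores) -> float:
    """Pairwise logistic (RankNet-style) loss, averaged over ordered pairs.

    Hypothetical stand-in for the figure's "Rank Loss": for every pair (j, k)
    with target[j] > target[k], add log(1 + exp(-(scores[j] - scores[k]))).
    """
    target = np.asarray(target, dtype=float)
    scores = np.asarray(scores, dtype=float)
    should_rank_higher = target[:, None] > target[None, :]  # (n, n) boolean
    margins = scores[:, None] - scores[None, :]              # s_j - s_k
    pair_losses = np.logaddexp(0.0, -margins)                # stable log(1 + e^-m)
    n_pairs = int(should_rank_higher.sum())
    if n_pairs == 0:
        return 0.0  # all targets tied: no ordering to enforce
    return float(pair_losses[should_rank_higher].sum() / n_pairs)


rng = np.random.default_rng(0)
D = 8
query = rng.normal(size=D)
docs = rng.normal(size=(5, D))
relevance = np.array([3, 2, 2, 1, 0])  # hypothetical graded labels
keep = np.array([0, 2, 5, 6])          # hypothetical selected dimensions

full_scores = docs @ query                    # top pathway: D dims
pruned_scores = docs[:, keep] @ query[keep]   # bottom pathway: D/2 dims
total = pairwise_rank_loss(relevance, full_scores) + pairwise_rank_loss(relevance, pruned_scores)
print(total)
```

Scores that respect the target order give a per-pair loss below `log 2`, and reversed scores push it above `log 2`, so minimizing the bottom-pathway term favors index choices that keep the ranking intact.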

### Key Observations

1. **Color Coding:** A consistent green gradient is used for data dimensions (`X_1` light to `X_i` dark). The selection list uses matching colored squares. Orange is used for dimensionality blocks (`D dim`, `D/2 dim`). Blue is used for process arrows.

2. **Dimension Reduction:** The bottom pathway explicitly reduces the data from a stack of `i` dimensions (presumably `i = D`) to a smaller stack. The label `D/2 dim` suggests the pruned output keeps half of the original `D` dimensions.

3. **Differentiable Selection:** The use of `gumbel_softmax` indicates that the discrete top-k selection is relaxed into a differentiable approximation, so the "Learnable Parameter" can be trained via backpropagation.

4. **Dual Evaluation:** `Rank Loss` is computed at two points: on the full-dimension representation (top) and on the pruned representation (bottom). This suggests the loss trains the learnable parameters to select the dimensions that matter most for preserving the data's rank structure.
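Hard top-k selection is piecewise constant in the logits, so its gradient is zero almost everywhere; Gumbel noise alone does not make it trainable, and a continuous relaxation is needed for backpropagation. The figure does not specify which relaxation is used. The sketch below assumes one published option, the successive-softmax relaxation of Xie and Ermon (2019): it applies `k` temperature-scaled softmaxes to Gumbel-perturbed logits, down-weighting each pick before the next, and sums them into a soft k-hot mask. `relaxed_topk_mask`, `tau`, and the straight-through combination described in the comments are illustrative assumptions; NumPy only shows the forward values, and an autodiff framework would supply the gradients.

```python
import numpy as np


def softmax(x: np.ndarray) -> np.ndarray:
    z = np.exp(x - x.max())  # shift for numerical stability
    return z / z.sum()


def relaxed_topk_mask(logits: np.ndarray, k: int, tau: float,
                      rng: np.random.Generator, eps: float = 1e-20):
    """Soft k-hot mask via successive softmax (assumed relaxation, not from the figure).

    Returns (soft, hard): `soft` sums to k and would be differentiable in the
    logits under autodiff; `hard` is the exact k-hot mask of the top-k of `soft`.
    A straight-through estimator would use hard + soft - stop_gradient(soft):
    the hard mask in the forward pass, the soft mask's gradient in the backward pass.
    """
    u = rng.uniform(np.finfo(float).tiny, 1.0, size=logits.shape)
    alpha = logits - np.log(-np.log(u))  # Gumbel-perturbed logits
    soft = np.zeros_like(alpha)
    for _ in range(k):
        p = softmax(alpha / tau)          # one relaxed "pick"
        soft += p
        # Down-weight what was just picked: alpha <- alpha + log(1 - p).
        alpha = alpha + np.log(np.maximum(1.0 - p, eps))
    hard = np.zeros_like(soft)
    hard[np.argpartition(-soft, k - 1)[:k]] = 1.0
    return soft, hard


rng = np.random.default_rng(0)
logits = np.array([50.0, 50.0, 0.0, 0.0, 0.0, 0.0])
soft, hard = relaxed_topk_mask(logits, k=2, tau=0.1, rng=rng)
print(soft.round(3), hard)
```

Lower `tau` makes each softmax sharper, so `soft` approaches the hard k-hot mask at the cost of noisier gradients; higher `tau` gives smoother gradients but a blurrier selection.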

### Interpretation

This diagram illustrates a **learnable feature selection or dimension pruning technique** for machine learning models. The core idea is to use a small set of learnable parameters, optimized via a Gumbel-Softmax trick, to automatically identify and select the most important input dimensions (`X` features). The selected dimensions are then used to create a pruned, lower-dimensional representation of the data (`D/2 dim`).

The use of **Rank Loss** is central. It implies the goal is not merely to reconstruct the input data, but to preserve the *relative ordering* or *similarity structure* within the data after dimensionality reduction. This is common in tasks like retrieval, ranking, or metric learning. Applying the loss to both the full and the pruned representations likely encourages the selection to keep the dimensions that matter most for the final, compressed output.

The process flow suggests an end-to-end trainable system in which the selection mechanism and the downstream task (modeled by the Rank Loss) are optimized jointly. The "Dimension Pruning" step is the practical payoff: a more compact representation with `D/2` instead of `D` dimensions, while the dual loss encourages fidelity to the original data's structure.
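As a rough illustration of the efficiency gain, assume $N$ stored vectors, $b$ bytes per value, and a plain dot product as the similarity (none of which the figure states). Halving the dimension then halves both storage and per-comparison cost:

```latex
\begin{aligned}
\text{storage}_{D}   &= N \cdot D \cdot b, &
\text{storage}_{D/2} &= N \cdot \tfrac{D}{2} \cdot b = \tfrac{1}{2}\,\text{storage}_{D}, \\
\text{cost}_{D}      &= D \ \text{multiply-adds per pair}, &
\text{cost}_{D/2}    &= \tfrac{D}{2} \ \text{multiply-adds per pair}.
\end{aligned}
```

For example, with an assumed $D = 768$ and float32 values ($b = 4$), one million vectors take about 3.07 GB at full dimension and about 1.54 GB after pruning.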