
## Diagram: AI Reasoning Process - Decision Making, Programming, and Reasoning

### Overview

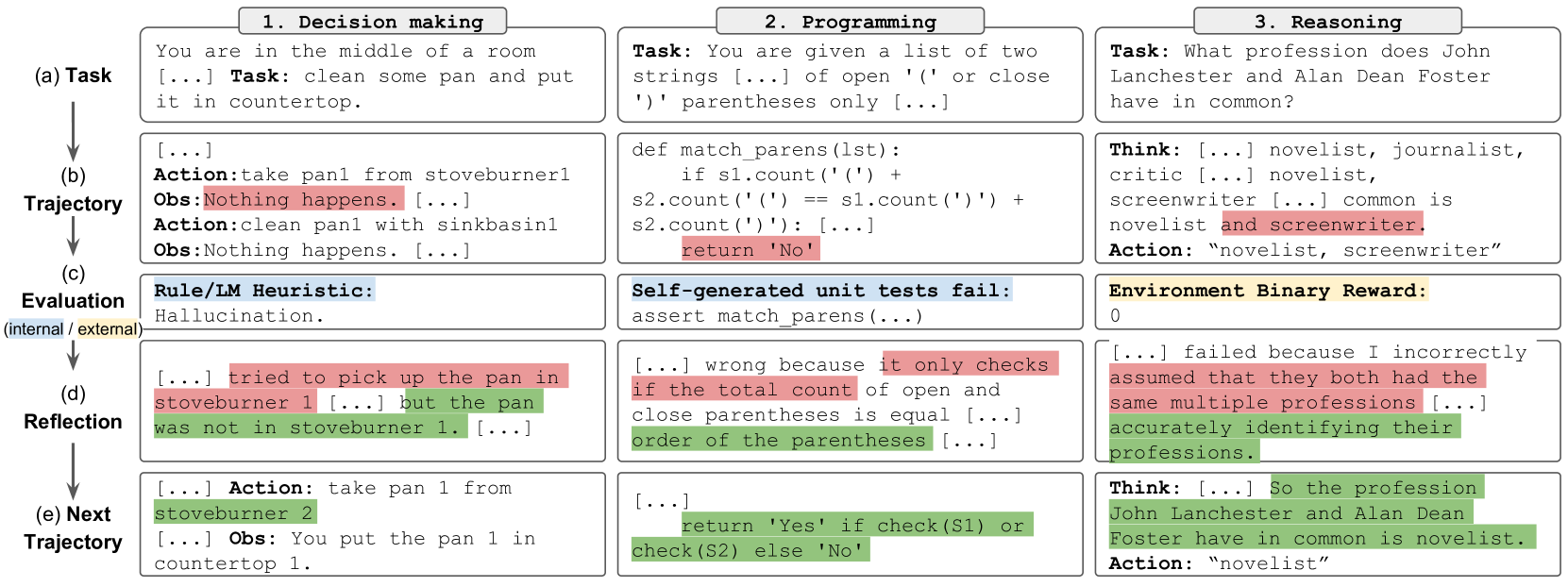

The diagram compares an AI's reasoning process across three tasks: Decision Making, Programming, and Reasoning. Each task is broken down into five stages: (a) Task, (b) Trajectory, (c) Evaluation, (d) Reflection, and (e) Next Trajectory. For each task, the diagram shows the AI's thoughts, actions, observations, and self-evaluation at each stage.

### Components/Axes

The diagram is structured into three columns, each representing a different task:

1. **Decision Making:** Focuses on a simple room cleaning task.

2. **Programming:** Focuses on a parenthesis matching function.

3. **Reasoning:** Focuses on identifying common professions between two individuals.

Each column is further divided into five rows, representing the stages of the AI's process:

* **(a) Task:** Describes the initial problem presented to the AI.

* **(b) Trajectory:** Shows the AI's initial actions and observations.

* **(c) Evaluation:** Displays the AI's internal evaluation of its performance, including any heuristics used.

* **(d) Reflection:** Shows the AI's analysis of its mistakes and understanding of why they occurred.

* **(e) Next Trajectory:** Presents the AI's revised actions and observations based on its reflection.

Arrows indicate the flow from one stage to the next. The Evaluation row differs by task: the Decision Making column shows a "Rule/IM Heuristic" labeled "(internal) hallucination", the Programming column relies on self-generated unit tests, and the Reasoning column shows an "Environment Binary Reward".
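The five-stage flow can be sketched as a retry loop. This is a minimal illustration, not the diagram's actual implementation: the function `reflect_and_retry`, its callback parameters, the `max_trials` budget, and the toy stubs are all hypothetical names introduced here.

```python
from typing import Callable, List, Tuple


def reflect_and_retry(
    act: Callable[[str, List[str]], str],
    evaluate: Callable[[str], Tuple[bool, str]],
    reflect: Callable[[str, str], str],
    task: str,
    max_trials: int = 3,  # assumed budget; the diagram shows only one retry
) -> Tuple[str, List[str]]:
    """Run trajectory -> evaluation -> reflection until success or budget ends."""
    reflections: List[str] = []
    trajectory = ""
    for _ in range(max_trials):
        trajectory = act(task, reflections)                 # (b) / (e)
        success, feedback = evaluate(trajectory)            # (c)
        if success:
            break
        reflections.append(reflect(trajectory, feedback))   # (d)
    return trajectory, reflections


# Toy stubs loosely modeled on the Reasoning column (hypothetical behavior).
def toy_act(task: str, reflections: List[str]) -> str:
    return "novelist" if reflections else "novelist, screenwriter"


def toy_evaluate(trajectory: str) -> Tuple[bool, str]:
    ok = trajectory == "novelist"
    return ok, ("reward=1" if ok else "reward=0")


def toy_reflect(trajectory: str, feedback: str) -> str:
    return f"'{trajectory}' got {feedback}; answer only with shared professions."


final, notes = reflect_and_retry(
    toy_act, toy_evaluate, toy_reflect, "common profession?"
)
print(final)       # novelist
print(len(notes))  # 1
```

Passing earlier reflections back into `act` is the design choice that matters here: the second trajectory is conditioned on what went wrong in the first.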

### Detailed Analysis

**1. Decision Making:**

* **(a) Task:** "You are in the middle of a room [...] Task: clean some pan and put it in countertop."

* **(b) Trajectory:** "Action: take pan from stoveburner1 Obs: Nothing happens. [...] Action: clean pan with sinkbasin1 Obs: Nothing happens."

* **(c) Evaluation:** "Rule/IM Heuristic: hallucination."

* **(d) Reflection:** "[...] tried to pick up the pan in stoveburner 1 [...] but the pan was not in stoveburner 1. [...]"

* **(e) Next Trajectory:** "Action: take pan 1 from stoveburner 2 [...] Obs: You put the pan 1 in countertop 1."
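The diagram does not show how the rule-based heuristic decides that a trajectory is a hallucination. One plausible rule, sketched here purely as an assumption, is to flag a run of consecutive "Nothing happens." observations. The function `looks_stuck` and its `limit` parameter are hypothetical, and the two failing steps are treated as adjacent only for illustration (the excerpt elides what lies between them).

```python
from typing import List, Tuple


def looks_stuck(steps: List[Tuple[str, str]], limit: int = 2) -> bool:
    """Return True if `limit` consecutive observations are 'Nothing happens.'"""
    streak = 0
    for _action, obs in steps:
        if obs.strip().rstrip(".").lower() == "nothing happens":
            streak += 1
            if streak >= limit:
                return True
        else:
            streak = 0  # any informative observation resets the run
    return False


# The two failing steps quoted in (b), treated as adjacent for illustration.
first_try = [
    ("take pan from stoveburner1", "Nothing happens."),
    ("clean pan with sinkbasin1", "Nothing happens."),
]
# The final observation quoted in (e); the action that produced it is elided.
second_try = [("(elided action)", "You put the pan 1 in countertop 1.")]

print(looks_stuck(first_try))   # True
print(looks_stuck(second_try))  # False
```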

**2. Programming:**

* **(a) Task:** "You are given a list of two strings [...] of open '(' or close ')' parentheses only [...]"

* **(b) Trajectory:** `def match_parens(lst): if s1.count('(') + s2.count('(') == s1.count(')') + s2.count(')'): return 'No' `

* **(c) Evaluation:** "Self-generated unit tests fail: assert match_parens(...) "

* **(d) Reflection:** "[...] wrong because it only checks if the total count of open and close parentheses is equal [...] order of the parentheses [...]"

* **(e) Next Trajectory:** `return 'Yes' if check(S1) or check(S2) else 'No'`
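The reflection's point, that equal counts do not imply a valid ordering, can be made concrete with a short sketch. It assumes that `S1` and `S2` in the revised code stand for the two concatenation orders of the input strings and that `check` tests whether a string is balanced; neither definition is shown in the excerpt. `count_only` is a hypothetical stand-in for a count-based check of the kind the reflection criticizes, not a reproduction of the diagram's first attempt.

```python
from typing import List


def count_only(lst: List[str]) -> str:
    """Count-based check: ignores the order of the parentheses."""
    s1, s2 = lst
    opens = s1.count("(") + s2.count("(")
    closes = s1.count(")") + s2.count(")")
    return "Yes" if opens == closes else "No"


def check(s: str) -> bool:
    """Return True if `s` is balanced: depth never negative, ends at zero."""
    depth = 0
    for ch in s:
        depth += 1 if ch == "(" else -1
        if depth < 0:  # a ')' appeared before its matching '('
            return False
    return depth == 0


def match_parens(lst: List[str]) -> str:
    """Order-aware check over both concatenation orders (assumed meaning)."""
    s1 = lst[0] + lst[1]
    s2 = lst[1] + lst[0]
    return "Yes" if check(s1) or check(s2) else "No"


print(count_only([")(", ")("]))    # Yes (counts match, order ignored)
print(match_parens([")(", ")("]))  # No
print(match_parens([")", "("]))    # Yes
```

The input `[")(", ")("]` is the kind of case a self-generated unit test could use to expose the count-only approach: the totals agree, yet neither concatenation is balanced.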

**3. Reasoning:**

* **(a) Task:** "What profession does John Lanchester and Alan Dean Foster have in common?"

* **(b) Trajectory:** "Think: [...] novelist, journalist, critic [...] novelist, screenwriter [...] common is novelist and screenwriter. Action: 'novelist, screenwriter'"

* **(c) Evaluation:** "Environment Binary Reward: 0"

* **(d) Reflection:** "[...] failed because I incorrectly assumed that they both had the same multiple professions [...] accurately identifying their professions."

* **(e) Next Trajectory:** "Think: [...] So the profession John Lanchester and Alan Dean Foster have in common is novelist. Action: 'novelist'"
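A binary reward of this kind can be sketched as a normalized exact-match comparison. This is an assumption about how the environment scores answers; the diagram shows only the reward value. The helper names `normalize` and `binary_reward` are hypothetical, and the reference answer "novelist" is inferred from the second attempt, which the description treats as correct.

```python
def normalize(text: str) -> str:
    """Lowercase, drop surrounding quotes, and collapse whitespace."""
    return " ".join(text.lower().strip().strip("'\"").split())


def binary_reward(answer: str, gold: str) -> int:
    """Return 1 on a normalized exact match, otherwise 0."""
    return int(normalize(answer) == normalize(gold))


gold = "novelist"  # assumed reference answer
print(binary_reward("'novelist, screenwriter'", gold))  # 0
print(binary_reward("'novelist'", gold))                # 1
```

Under exact matching, an answer that contains the right profession plus an extra one still scores 0, which is consistent with the first attempt's reward.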

### Key Observations

* The AI initially makes mistakes in all three tasks, demonstrating the need for reflection and correction.

* The "hallucination" label in the Decision Making task marks a trajectory built on an ungrounded assumption: the AI tried to take the pan from stoveburner 1, but the pan was not there.

* The Programming task highlights the importance of checking the order of the parentheses, not just the total counts of open and close parentheses.

* In the Reasoning task, the AI initially answers "novelist, screenwriter", wrongly assuming that the two authors share multiple professions, before narrowing it down to the correct answer, "novelist".

* The Environment Binary Reward of 0 in the Reasoning task indicates the initial attempt was incorrect.

### Interpretation

This diagram illustrates the iterative nature of AI reasoning. The AI does not arrive at the correct answer immediately; it gets there through trial and error, self-evaluation, and refinement. The initial failures in all three columns also show how hard it is for such systems to reason reliably without feedback. In the diagram, "hallucination" is the outcome of the evaluation step rather than a heuristic in its own right: it flags a trajectory that rested on something untrue about the environment, which suggests that current AI models can generate actions or responses that are not grounded in fact. The diagram therefore makes the case for building reflection and correction mechanisms into AI systems to improve their accuracy and reliability. Because the same five-stage loop is applied to decision making, programming, and reasoning, the approach appears to carry across domains, although the evaluation signal differs by task: a "Rule/IM Heuristic", self-generated unit tests, and a binary reward from the environment.