## Line Chart: MathVista Accuracy vs. Number of Solutions per Problem

### Overview

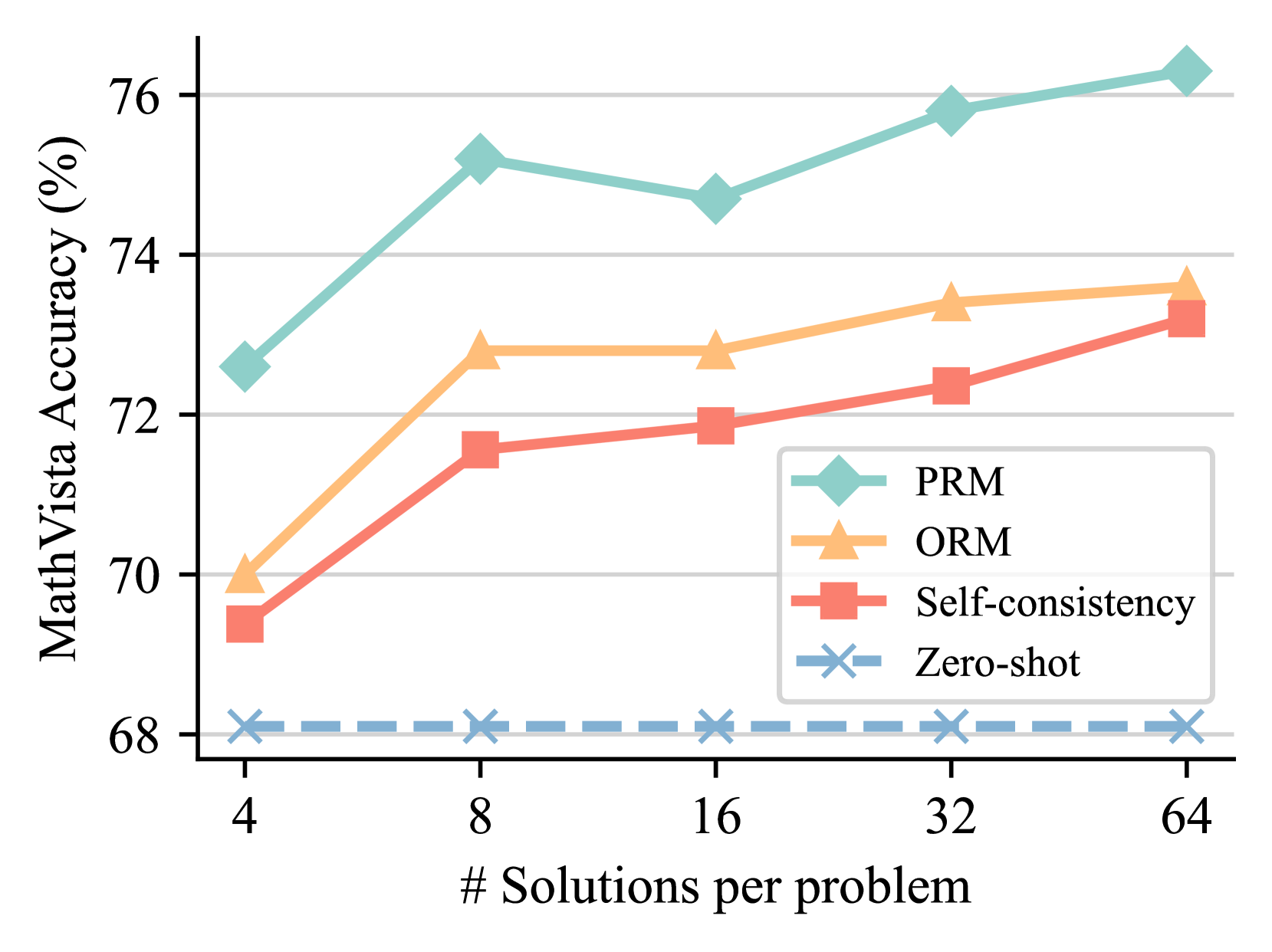

The image is a line chart comparing the performance of four methods on the MathVista benchmark. The chart plots accuracy (as a percentage) against the number of solutions generated per problem. Accuracy rises with the number of solutions for the three multi-solution methods (PRM, ORM, and Self-consistency), with diminishing returns, while the Zero-shot baseline stays flat.

### Components/Axes

* **Chart Type:** Multi-series line chart.
* **Y-Axis (Vertical):**
    * **Label:** `MathVista Accuracy (%)`
    * **Scale:** Linear, ranging from approximately 68% to 76%.
    * **Major Ticks:** 68, 70, 72, 74, 76.
* **X-Axis (Horizontal):**
    * **Label:** `# Solutions per problem`
    * **Scale:** Logarithmic (base 2), with discrete points.
    * **Data Points (Categories):** 4, 8, 16, 32, 64.
* **Legend:**
    * **Position:** Bottom-right corner of the plot area.
    * **Series & Markers:**
        1. **PRM:** Teal line with diamond markers (◆).
        2. **ORM:** Orange line with upward-pointing triangle markers (▲).
        3. **Self-consistency:** Red line with square markers (■).
        4. **Zero-shot:** Blue dashed line with 'X' markers (✕).

### Detailed Analysis

**Trend Verification & Data Points (Approximate Values):**

1. **PRM (Teal, Diamond ◆):**
    * **Trend:** Shows the highest overall accuracy. It increases sharply from 4 to 8 solutions, dips slightly at 16, then rises steadily to its peak at 64 solutions.
    * **Data Points:**
        * 4 solutions: ~72.5%
        * 8 solutions: ~75.2%
        * 16 solutions: ~74.7%
        * 32 solutions: ~75.8%
        * 64 solutions: ~76.3%

2. **ORM (Orange, Triangle ▲):**
    * **Trend:** Starts lower than PRM but follows a similar upward trajectory. It jumps from 4 to 8 solutions, plateaus between 8 and 16, then increases gradually.
    * **Data Points:**
        * 4 solutions: ~70.0%
        * 8 solutions: ~72.8%
        * 16 solutions: ~72.8%
        * 32 solutions: ~73.4%
        * 64 solutions: ~73.7%

3. **Self-consistency (Red, Square ■):**
    * **Trend:** Rises at every step, but not linearly: most of the gain (~2.2 points) comes between 4 and 8 solutions, followed by smaller increments. It is the lowest of the three multi-solution methods at every data point and ends ~0.5 points below ORM at 64 solutions.
    * **Data Points:**
        * 4 solutions: ~69.4%
        * 8 solutions: ~71.6%
        * 16 solutions: ~71.9%
        * 32 solutions: ~72.3%
        * 64 solutions: ~73.2%

4. **Zero-shot (Blue Dashed, Cross ✕):**
    * **Trend:** This is the baseline method. Its performance is flat at approximately 68.1% across all x-axis values, showing no improvement as the number of solutions per problem increases.

### Key Observations

* **Performance Hierarchy:** PRM is consistently the top-performing method at every data point. Zero-shot is consistently the lowest.

* **Impact of Scaling:** For PRM, ORM, and Self-consistency, increasing the number of solutions from 4 to 8 yields the largest accuracy gains (roughly 2.2 to 2.8 percentage points). Each later doubling adds about 1 point or less.

* **ORM vs. Self-consistency Gap:** The gap between ORM and Self-consistency does not narrow steadily. ORM leads by ~0.6 percentage points at 4 solutions, by ~0.9 to ~1.2 points between 8 and 32 solutions, and by ~0.5 points at 64 solutions, so the two methods end about as close as they started.

* **Anomaly:** The PRM method shows a slight performance dip at 16 solutions compared to 8, which is not observed in the other improving methods.

* **Baseline Behavior:** The Zero-shot line serves as a single-attempt reference. Every multi-solution method sits above it at every data point, so the improvements come from sampling several solutions and then aggregating or ranking them (voting, ORM, or PRM).
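The gains and gaps discussed in these observations can be recomputed from the approximate data points listed under Detailed Analysis. The following is a minimal sketch; the values are visual estimates from the chart rather than exact results, and the names `ACCURACY`, `gains_per_doubling`, and `gap` are introduced here for illustration only.

```python
# Approximate values read off the chart (percent accuracy); not exact figures.
N_SOLUTIONS = [4, 8, 16, 32, 64]
ACCURACY = {
    "PRM": [72.5, 75.2, 74.7, 75.8, 76.3],
    "ORM": [70.0, 72.8, 72.8, 73.4, 73.7],
    "Self-consistency": [69.4, 71.6, 71.9, 72.3, 73.2],
}


def gains_per_doubling(values):
    """Change in accuracy (percentage points) between consecutive x-axis points."""
    return [round(b - a, 1) for a, b in zip(values, values[1:])]


def gap(method_a, method_b):
    """Accuracy of method_a minus method_b at each x-axis point (percentage points)."""
    return [round(a - b, 1) for a, b in zip(ACCURACY[method_a], ACCURACY[method_b])]


if __name__ == "__main__":
    for name, values in ACCURACY.items():
        print(f"{name:>16} gains per doubling: {gains_per_doubling(values)}")
    print("ORM - Self-consistency:", gap("ORM", "Self-consistency"))
    print("PRM - ORM:", gap("PRM", "ORM"))
```

Because the inputs are rounded estimates, differences of a few tenths of a point should be read as indicative only.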

### Interpretation

This chart demonstrates the effectiveness of "generate-and-rank" strategies for improving mathematical reasoning in AI models. The key takeaways are:

1. **Multi-Solution Generation is Beneficial:** Generating multiple candidate solutions and taking the most common final answer (Self-consistency) improves accuracy over a single (zero-shot) attempt, by ~1.3 points at 4 solutions and ~5.1 points at 64.

2. **Advanced Ranking Models Add Value:** Using a dedicated Process Reward Model (PRM) or Outcome Reward Model (ORM) to rank the generated solutions improves on simple self-consistency voting. The size of the boost differs: PRM adds ~2.8 to ~3.6 points over Self-consistency, while ORM adds ~0.5 to ~1.2 points. PRM is the most effective ranking method shown.

3. **Diminishing Returns:** There is a clear point of diminishing returns. The most cost-effective gains are achieved by moving from 4 to 8 solutions per problem. Scaling to 64 solutions provides further improvement but at a much lower rate, which must be weighed against the increased computational cost.

4. **The Value of Process vs. Outcome:** The consistent lead of PRM over ORM (~1.9 to ~2.6 points at every sample count) suggests that evaluating the reasoning *process* step-by-step may be more reliable for mathematical problems than evaluating only the final *outcome*. The lead stays roughly constant rather than growing with the number of candidate solutions.
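To make the difference between voting and reward-model ranking concrete, here is a minimal sketch of the two selection rules. It is illustrative only: the chart does not specify how candidates are scored or aggregated, so the per-candidate `scores` below are hypothetical stand-ins for whatever scalar an ORM or PRM would assign, and the function names are made up for this example.

```python
from collections import Counter


def majority_vote(answers):
    """Self-consistency: return the most frequent final answer among the candidates."""
    return Counter(answers).most_common(1)[0][0]


def best_of_n(answers, scores):
    """Reward-model reranking: return the answer of the highest-scored candidate."""
    best_index = max(range(len(answers)), key=lambda i: scores[i])
    return answers[best_index]


if __name__ == "__main__":
    # Hypothetical final answers from 8 sampled solutions to one problem.
    answers = ["12", "15", "12", "15", "15", "12", "12", "9"]
    # Hypothetical reward-model scores, one scalar per candidate (assumption).
    scores = [0.41, 0.93, 0.38, 0.77, 0.80, 0.35, 0.44, 0.10]

    print("Self-consistency picks:", majority_vote(answers))
    print("Reward model picks:   ", best_of_n(answers, scores))
```

In this toy example the most common answer and the highest-scored answer differ, which is the situation where a ranking model can beat voting (or lose to it, if its scores are unreliable). A process reward model would typically produce per-step scores that have to be combined into one number per candidate; that aggregation step is left out here because the source does not describe it.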

In summary, the data favors pairing multi-solution sampling with a process-based reward model. PRM with 64 solutions gives the highest accuracy on the chart (~76.3%), while PRM with 8 solutions (~75.2%) already captures most of that gain with one-eighth as many samples.
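One way to quantify this trade-off is to express each method's gain over the Zero-shot baseline as a share of its own peak gain. The sketch below uses the approximate values read from the chart; `share_of_peak_gain` is a helper name introduced for this example, and treating cost as proportional to the number of sampled solutions is an assumption.

```python
# Approximate values read off the chart (percent accuracy); not exact figures.
ZERO_SHOT = 68.1
N_SOLUTIONS = [4, 8, 16, 32, 64]
ACCURACY = {
    "PRM": [72.5, 75.2, 74.7, 75.8, 76.3],
    "ORM": [70.0, 72.8, 72.8, 73.4, 73.7],
    "Self-consistency": [69.4, 71.6, 71.9, 72.3, 73.2],
}


def share_of_peak_gain(values, baseline):
    """Gain over the baseline at each point, as a rounded percent of the largest gain."""
    peak_gain = max(values) - baseline
    return [round(100 * (v - baseline) / peak_gain) for v in values]


if __name__ == "__main__":
    for name, values in ACCURACY.items():
        shares = share_of_peak_gain(values, ZERO_SHOT)
        summary = ", ".join(f"N={n}: {s}%" for n, s in zip(N_SOLUTIONS, shares))
        print(f"{name:>16}  {summary}")
```

On these estimates, PRM at 8 solutions reaches roughly 87% of the gain it achieves at 64 solutions.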