## Diagram: Neural Turing Machine Addressing Mechanism

### Overview

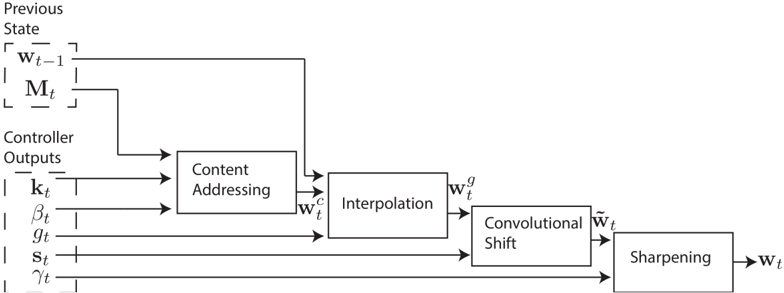

The image is a block diagram illustrating the addressing mechanism within a Neural Turing Machine (NTM). It shows the flow of information from previous states and controller outputs through several processing stages to determine the current weight vector.

### Components/Axes

* **Previous State (Top-Left)**:
    * `w_{t-1}` (Weight vector at time t-1) - enclosed in a dashed box.
    * `M_t` (Memory matrix at time t) - enclosed in a dashed box.

* **Controller Outputs (Left)**:
    * `k_t` (Key vector at time t)
    * `β_t` (Key strength at time t)
    * `g_t` (Interpolation gate at time t)
    * `s_t` (Shift weighting at time t)
    * `γ_t` (Sharpening factor at time t)

* **Processing Blocks (Center)**:
    * Content Addressing
    * Interpolation
    * Convolutional Shift
    * Sharpening

* **Intermediate Weight Vectors**:
    * `w_t^c` (Content-based weight vector)
    * `w_t^g` (Gated weight vector)
    * `\tilde{w}_t` (Shifted weight vector)

* **Output (Right)**:
    * `w_t` (Weight vector at time t)

### Detailed Analysis

1. **Previous State**: The memory matrix (`M_t`) is an input to the "Content Addressing" block, and the weight vector from the previous time step (`w_{t-1}`) is an input to the "Interpolation" block.

2. **Controller Outputs**: Each controller output (`k_t`, `β_t`, `g_t`, `s_t`, `γ_t`) parameterizes one stage of the addressing process.

3. **Content Addressing**: The `k_t` and `β_t` controller outputs, along with `M_t`, are inputs to the "Content Addressing" block, which outputs `w_t^c`.

4. **Interpolation**: The `g_t` controller output, `w_t^c`, and `w_{t-1}` are inputs to the "Interpolation" block, which outputs `w_t^g`.

5. **Convolutional Shift**: The `s_t` controller output and `w_t^g` are inputs to the "Convolutional Shift" block, which outputs `\tilde{w}_t`.

6. **Sharpening**: The `γ_t` controller output and `\tilde{w}_t` are inputs to the "Sharpening" block, which outputs `w_t`.

7. **Recurrence**: Within a single time step the flow is strictly feedforward; `w_t^c` simply passes from the "Content Addressing" block into the "Interpolation" block. The recurrence is across time steps: the output `w_t` becomes `w_{t-1}` at the next step.
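For reference, the four stages are usually written as below in the original NTM formulation (Graves, Wayne, and Danihelka, 2014). The diagram itself shows no equations, so the cosine-similarity notation `K[u, v]` and the symbol `N` for the number of memory rows are assumptions brought in from that formulation rather than read off the image.

```latex
\begin{align}
w_t^c(i) &= \frac{\exp\big(\beta_t\, K[k_t, M_t(i)]\big)}{\sum_{j} \exp\big(\beta_t\, K[k_t, M_t(j)]\big)},
  \qquad K[u, v] = \frac{u \cdot v}{\lVert u \rVert\, \lVert v \rVert} \\
w_t^g &= g_t\, w_t^c + (1 - g_t)\, w_{t-1} \\
\tilde{w}_t(i) &= \sum_{j=0}^{N-1} w_t^g(j)\, s_t(i - j)
  \quad \text{(indices taken modulo } N\text{)} \\
w_t(i) &= \frac{\tilde{w}_t(i)^{\gamma_t}}{\sum_{j} \tilde{w}_t(j)^{\gamma_t}}
\end{align}
```

In that formulation the parameters are constrained so that `β_t > 0`, `g_t` lies in (0, 1), `s_t` is a normalized distribution over the allowed shifts, and `γ_t ≥ 1`.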

### Key Observations

* The diagram illustrates a sequential process where the weight vector is refined through multiple stages.

* Each stage is modulated by its own controller output: `k_t` and `β_t` drive content addressing, `g_t` the interpolation, `s_t` the shift, and `γ_t` the sharpening.

* The previous state (`w_{t-1}` and `M_t`) influences the current weight vector (`w_t`).

### Interpretation

The diagram represents the addressing mechanism of a Neural Turing Machine, which lets the NTM selectively read from and write to its external memory. Content addressing first compares the key `k_t` against the rows of `M_t`, scaled by `β_t`, to produce a content-based weight vector (`w_t^c`). The gate `g_t` then interpolates between `w_t^c` and the previous weighting `w_{t-1}`, and the result is shifted by `s_t` and sharpened by `γ_t` to produce the final weight vector (`w_t`), which determines where attention is focused on the memory. The connection from the "Content Addressing" block to the "Interpolation" block is a feedforward one; the only recurrence is that `w_t` serves as `w_{t-1}` at the next time step. Together, the controller outputs (`k_t`, `β_t`, `g_t`, `s_t`, `γ_t`) let the NTM move between content-based and location-based addressing as the task requires.
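The pipeline described above can be sketched in a few lines of plain Python. This is a minimal illustration under stated assumptions, not the reference implementation: the function names, the representation of `s_t` as a dictionary from integer offset to probability, and the use of cosine similarity for content addressing are all choices made here for illustration.

```python
import math


def cosine_similarity(u, v):
    """Cosine similarity with a small epsilon to avoid division by zero."""
    dot = sum(a * b for a, b in zip(u, v))
    norm = math.sqrt(sum(a * a for a in u)) * math.sqrt(sum(b * b for b in v))
    return dot / (norm + 1e-8)


def content_addressing(memory, key, beta):
    """Softmax over beta-scaled similarities between the key and each memory row."""
    scores = [beta * cosine_similarity(key, row) for row in memory]
    top = max(scores)  # subtract the max for numerical stability
    exps = [math.exp(s - top) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]


def interpolate(w_content, w_prev, gate):
    """Blend the content weighting with the previous weighting."""
    return [gate * c + (1.0 - gate) * p for c, p in zip(w_content, w_prev)]


def circular_shift(w_gated, shift):
    """Circular convolution; `shift` maps integer offset -> probability (assumed format)."""
    n = len(w_gated)
    return [
        sum(w_gated[(i - offset) % n] * prob for offset, prob in shift.items())
        for i in range(n)
    ]


def sharpen(w_shifted, gamma):
    """Raise each weight to the power gamma and renormalize."""
    powered = [w ** gamma for w in w_shifted]
    total = sum(powered)
    return [p / total for p in powered]


def address(memory, w_prev, key, beta, gate, shift, gamma):
    """Run the four stages in the order shown in the diagram."""
    w_c = content_addressing(memory, key, beta)
    w_g = interpolate(w_c, w_prev, gate)
    w_s = circular_shift(w_g, shift)
    return sharpen(w_s, gamma)


# Toy memory with 4 rows of width 2 (values are arbitrary).
memory = [[1.0, 0.0], [0.0, 1.0], [1.0, 1.0], [0.5, 0.2]]

# Gate closed (g = 0) with a pure +1 shift: the content weighting is ignored
# and the previous focus simply moves one slot forward.
print(address(memory, [1.0, 0.0, 0.0, 0.0], [1.0, 0.0], 5.0, 0.0, {1: 1.0}, 1.0))
# prints [0.0, 1.0, 0.0, 0.0]
```

With the gate fully open (`gate=1.0`) and an identity shift (`{0: 1.0}`), the same function reduces to pure content-based lookup, so the two extremes of the gate correspond to the two addressing modes.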