## Block Diagram: Control System Processing Pipeline

### Overview

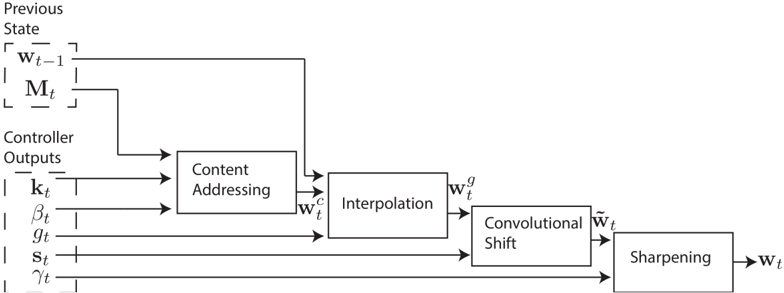

The diagram illustrates a sequential data processing pipeline for a control system. It begins with inputs from a "Previous State" and "Controller Outputs," which are processed through four stages: Content Addressing, Interpolation, Convolutional Shift, and Sharpening. The final output is labeled **w_t**, the result of the pipeline at time **t**.

---

### Components/Axes

1. **Previous State**

- Contains two variables:

- **w_t-1**: Previous weight vector (input to Content Addressing).

- **M_t**: Matrix grouped with the previous state in the diagram, although its subscript is **t** rather than **t-1** (input to Content Addressing).

2. **Controller Outputs**

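- Five variables feeding into the pipeline. The roles below assume the labels follow Neural Turing Machine (NTM) notation, which they match symbol for symbol:

- **k_t**: Key vector that is compared against the rows of **M_t**.

- **β_t**: Key strength, a positive scalar that scales the similarity scores.

- **g_t**: Interpolation gate, a scalar between 0 and 1 (the Latin letter g, distinct from **γ_t**).

- **s_t**: Shift weighting, a distribution over the allowed shifts.

- **γ_t**: Sharpening exponent (gamma), a scalar of at least 1.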

3. **Content Addressing**

- Inputs: **w_t-1**, **M_t**, **k_t**, **β_t**, **g_t**, **s_t**, **γ_t**.

- Output: **w_c** (content-addressed vector).

4. **Interpolation**

- Input: **w_c**.

- Output: **w_g** (interpolated vector).

5. **Convolutional Shift**

- Input: **w_g**.

- Output: **w_t~** (convolutionally shifted vector).

6. **Sharpening**

- Input: **w_t~**.

- Output: **w_t** (final processed state).
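The four blocks can also be written as equations. The following is a sketch that assumes the diagram follows the standard Neural Turing Machine addressing mechanism, which its labels match; the diagram itself shows no formulas. Here $w^c_t$ and $w^g_t$ correspond to **w_c** and **w_g**, $\tilde{w}_t$ to **w_t~**, $K[\cdot,\cdot]$ is cosine similarity, and $N$ is the number of rows in $M_t$.

```latex
\begin{align}
w^c_t(i) &= \frac{\exp\!\big(\beta_t \, K[k_t, M_t(i)]\big)}
                 {\sum_{j} \exp\!\big(\beta_t \, K[k_t, M_t(j)]\big)}
          && \text{(Content Addressing)} \\
w^g_t &= g_t \, w^c_t + (1 - g_t)\, w_{t-1}
          && \text{(Interpolation)} \\
\tilde{w}_t(i) &= \sum_{j=0}^{N-1} w^g_t(j)\, s_t(i - j)
          && \text{(Convolutional Shift, indices modulo } N\text{)} \\
w_t(i) &= \frac{\tilde{w}_t(i)^{\gamma_t}}{\sum_{j} \tilde{w}_t(j)^{\gamma_t}}
          && \text{(Sharpening)}
\end{align}
```

Under this assumption, **w_t-1** enters at the Interpolation step and each controller output enters at the block that uses it.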

---

### Flow and Relationships

- **Data Flow**:

1. **Previous State** and **Controller Outputs** are combined as inputs to **Content Addressing**.

2. **Content Addressing** processes these inputs to produce **w_c**, which is passed to **Interpolation**.

3. **Interpolation** generates **w_g**, which is fed into **Convolutional Shift**.

4. **Convolutional Shift** outputs **w_t~**, which is refined by **Sharpening** to produce **w_t**.

- **Temporal Dependency**:

Because **w_t-1** (the previous output) is an input, each **w_t** depends on the result of the preceding time step, which makes the pipeline recurrent.
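The data flow above can be sketched in NumPy. This is a minimal sketch that assumes the blocks follow the Neural Turing Machine addressing equations. Under that assumption each controller output enters at its own stage (**k_t** and **β_t** at Content Addressing, **g_t** at Interpolation, **s_t** at Convolutional Shift, **γ_t** at Sharpening) and **w_t-1** enters at Interpolation, which is narrower than the input list given for Content Addressing above. The function names, the three-offset shift window, and the toy values are illustrative and not taken from the diagram.

```python
import numpy as np


def content_addressing(M, k, beta):
    """Softmax over beta-scaled cosine similarity between key k and each row of M."""
    eps = 1e-8  # guards against division by zero for all-zero rows
    sim = (M @ k) / (np.linalg.norm(M, axis=1) * np.linalg.norm(k) + eps)
    z = beta * sim
    e = np.exp(z - z.max())  # subtract the max for numerical stability
    return e / e.sum()


def interpolate(w_c, w_prev, g):
    """Blend the content weighting with the previous weighting using gate g in [0, 1]."""
    return g * w_c + (1.0 - g) * w_prev


def convolutional_shift(w_g, s, offsets=(-1, 0, 1)):
    """Circular convolution: s[n] is the weight given to a shift of offsets[n] positions."""
    w_tilde = np.zeros_like(w_g)
    for weight, offset in zip(s, offsets):
        w_tilde += weight * np.roll(w_g, offset)
    return w_tilde


def sharpen(w_tilde, gamma):
    """Raise each entry to the power gamma (>= 1) and renormalize."""
    p = w_tilde ** gamma
    return p / p.sum()


def address(M, w_prev, k, beta, g, s, gamma):
    """Run the four stages in the order shown in the diagram."""
    w_c = content_addressing(M, k, beta)
    w_g = interpolate(w_c, w_prev, g)
    w_tilde = convolutional_shift(w_g, s)
    return sharpen(w_tilde, gamma)


# Toy example: 4 memory rows, key matches row 1, then shift forward by one position.
M = np.eye(4)
w_prev = np.array([1.0, 0.0, 0.0, 0.0])
k = np.array([0.0, 1.0, 0.0, 0.0])
w_t = address(M, w_prev, k, beta=10.0, g=1.0,
              s=np.array([0.0, 0.0, 1.0]), gamma=1.0)
print(np.argmax(w_t))  # index of the location with the largest weight
```

Feeding the returned `w_t` back in as `w_prev` on the next call reproduces the temporal dependency described above.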

---

### Key Observations

1. **Modular Design**: Each block performs a distinct transformation (e.g., interpolation, convolutional operations).

2. **Controller Integration**: Controller outputs (**k_t**, **β_t**, etc.) directly influence the Content Addressing stage, enabling real-time adjustments.

3. **Temporal Context**: The system retains memory of the previous state (**w_t-1**, **M_t**) to inform current processing.

4. **Final Output**: **w_t** represents the culmination of all transformations, likely used for system control or feedback.

---

### Interpretation

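This pipeline appears to model an adaptive, memory-based system. Its block names and symbols match the addressing mechanism of a **Neural Turing Machine (NTM)**, in which case the output is an attention weighting over memory locations rather than a generic control signal. Under that reading:

- **Content Addressing** scores each row of the memory matrix **M_t** by its similarity to the key **k_t**, scaled by **β_t**.

- **Interpolation** and **Convolutional Shift** blend the content-based weighting with the previous weighting **w_t-1** and then rotate the result across locations.

- **Sharpening** concentrates the output (**w_t**) on its largest entries, counteracting the blur that the shift can introduce.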

The system’s reliance on **w_t-1** and **M_t** implies it operates in a dynamic environment requiring memory of past states. The inclusion of multiple controller parameters (**k_t**, **β_t**, etc.) suggests fine-grained tunability for optimization or stability.
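As one illustration of that tunability, the snippet below shows how a single parameter changes the Sharpening output. It is a sketch that assumes Sharpening raises each entry to the power **γ_t** and renormalizes, as in the Neural Turing Machine formulation; the function name and the sample vector are made up for the example.

```python
import numpy as np


def sharpen(w, gamma):
    """Raise each entry to the power gamma (assumed >= 1) and renormalize to sum to 1."""
    p = w ** gamma
    return p / p.sum()


# A blurred weighting, e.g. what a soft shift might produce (illustrative values).
w_blurred = np.array([0.1, 0.6, 0.3])

for gamma in (1.0, 2.0, 5.0):
    # Larger gamma moves more of the mass onto the largest entry.
    print(gamma, np.round(sharpen(w_blurred, gamma), 3))
```

With `gamma = 1.0` the vector is returned unchanged; larger values move the weighting closer to a one-hot vector on its peak.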

No numerical data or trends are present in the diagram; it focuses on architectural relationships and data flow.