## Diagram: LLM Text and Entity Prediction with Knowledge Graph

### Overview

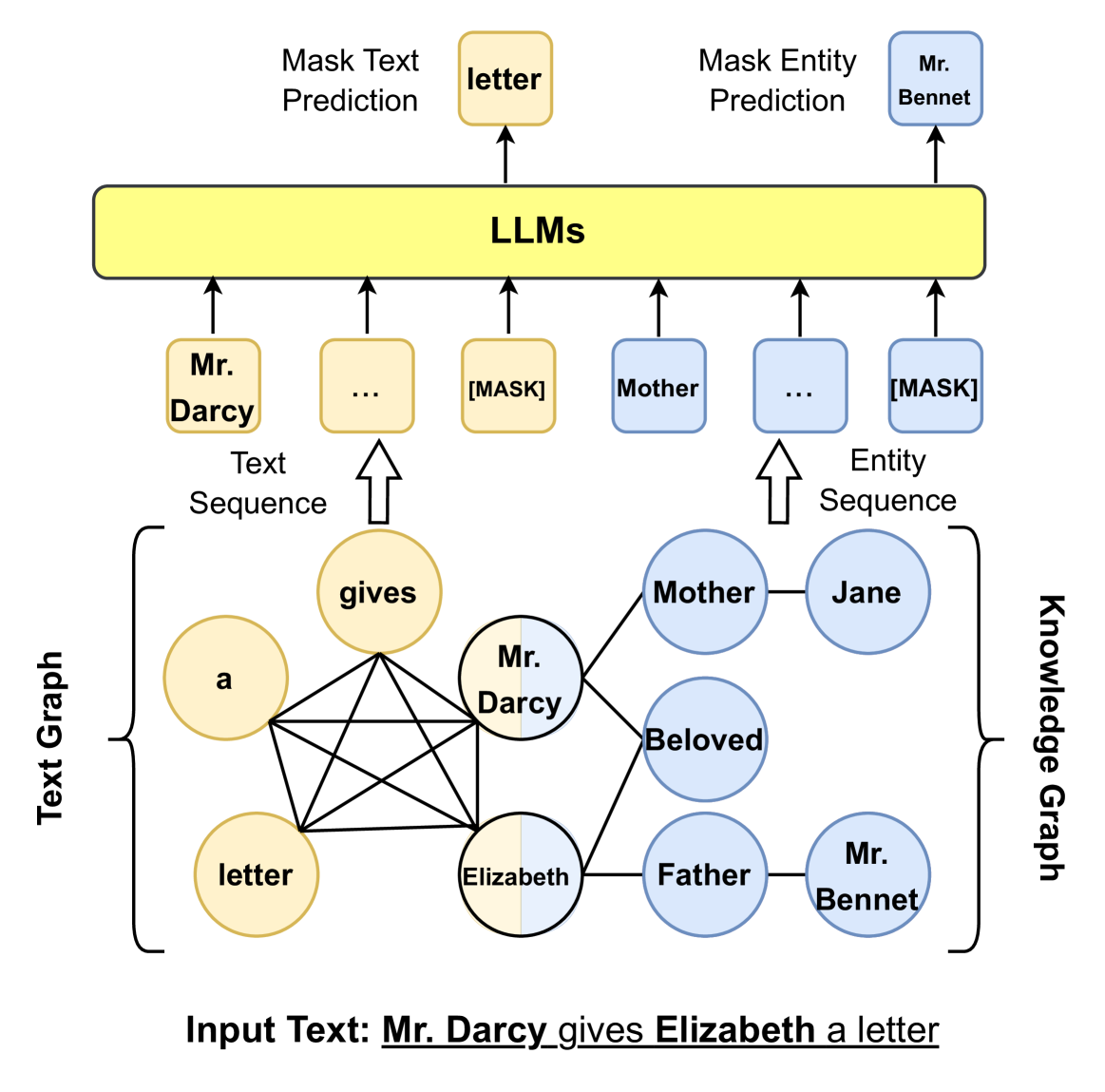

The diagram shows how Large Language Models (LLMs) can be used for both text and entity prediction, leveraging a knowledge graph derived from the input text. Information flows from the input text, through a text graph and a knowledge graph, into the LLMs, and finally out to the predicted word and the predicted entity.

### Components

* **Input Text:** "Mr. Darcy gives Elizabeth a letter"

* **Text Graph:** A network of nodes representing words from the input text, with edges indicating relationships between them. Nodes are colored yellow, except for "Elizabeth", which is half yellow and half blue.

* **Knowledge Graph:** A network of nodes representing entities and their relationships. Nodes are colored blue.

* **LLMs:** A yellow rectangular block representing Large Language Models.

* **Text Sequence:** A sequence of nodes derived from the text graph, feeding into the LLMs. Nodes are colored yellow.

* **Entity Sequence:** A sequence of nodes derived from the knowledge graph, feeding into the LLMs. Nodes are colored blue.

* **Mask Text Prediction:** The output of the LLMs, predicting masked words in the text sequence. The predicted word is "letter" (yellow).

* **Mask Entity Prediction:** The output of the LLMs, predicting masked entities in the entity sequence. The predicted entity is "Mr. Bennet" (blue).

### Detailed Analysis

* **Input Text:** The input text is "Mr. Darcy gives Elizabeth a letter".

* **Text Graph:**

    * Nodes: "a", "gives", "Mr. Darcy", "letter", "Elizabeth"

    * Edges: All nodes are interconnected.

* **Knowledge Graph:**

    * Nodes: "Mother", "Jane", "Beloved", "Father", "Mr. Bennet", "Mr. Darcy", "Elizabeth"

    * Edges:

        * "Mr. Darcy" is connected to "Mother" and "Beloved".

        * "Elizabeth" is connected to "Father" and "Mr. Darcy".

        * "Mother" is connected to "Jane".

        * "Father" is connected to "Mr. Bennet".

* **Text Sequence:**

    * Nodes: "Mr. Darcy" (yellow), "..." (yellow), "[MASK]" (yellow)

* **Entity Sequence:**

    * Nodes: "Mother" (blue), "..." (blue), "[MASK]" (blue)

* **Mask Text Prediction:** Predicts "letter" (yellow).

* **Mask Entity Prediction:** Predicts "Mr. Bennet" (blue).
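The knowledge graph edges listed above can be written down as a small data structure. The following is a minimal sketch, not the method behind the diagram: it assumes the edges are undirected (the description gives no directions), and the names `EDGES`, `build_adjacency`, and `shortest_path` are hypothetical helpers introduced only for illustration. It shows how the predicted entity "Mr. Bennet" is reachable from "Elizabeth" through the "Father" node.

```python
from collections import deque

# Edges copied from the description above. Treating them as undirected is an
# assumption, since the description does not state edge directions.
EDGES = [
    ("Mr. Darcy", "Mother"),
    ("Mr. Darcy", "Beloved"),
    ("Elizabeth", "Father"),
    ("Elizabeth", "Mr. Darcy"),
    ("Mother", "Jane"),
    ("Father", "Mr. Bennet"),
]


def build_adjacency(edges):
    """Build an undirected adjacency list from (node, node) pairs."""
    adjacency = {}
    for a, b in edges:
        adjacency.setdefault(a, []).append(b)
        adjacency.setdefault(b, []).append(a)
    return adjacency


def shortest_path(adjacency, start, goal):
    """Breadth-first search; returns the shortest node path or None."""
    queue = deque([[start]])
    visited = {start}
    while queue:
        path = queue.popleft()
        node = path[-1]
        if node == goal:
            return path
        for neighbor in adjacency.get(node, []):
            if neighbor not in visited:
                visited.add(neighbor)
                queue.append(path + [neighbor])
    return None


graph = build_adjacency(EDGES)
path = shortest_path(graph, "Elizabeth", "Mr. Bennet")
print(path)  # ['Elizabeth', 'Father', 'Mr. Bennet']
```

An adjacency list keeps the sketch close to the node-and-edge wording of the description; a triple store of (head, relation, tail) would be an alternative if "Mother", "Father", and "Beloved" are read as relation labels instead of nodes.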

### Key Observations

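* Information flows in one direction: from the input text, through the two graphs and their derived sequences, into the LLMs, and out to the predictions.

* The text graph captures the words of the input text, while the knowledge graph captures entities and the relationships among them.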

* The LLMs are used to predict both text and entities.

* The color coding (yellow for text, blue for entities) helps to distinguish between the different types of information.

* "Elizabeth" is represented as half yellow and half blue, suggesting it is both a word in the text graph and an entity in the knowledge graph.

### Interpretation

The diagram illustrates a system in which an input text is processed to create both a text graph and a knowledge graph. Each graph is then used to generate a sequence (a text sequence and an entity sequence), and both sequences are fed into the LLMs. The LLMs predict the masked word ("letter") and the masked entity ("Mr. Bennet"), so the same model block handles textual and knowledge-graph inputs.

The connection between the text graph and the knowledge graph allows the LLMs to draw on both textual context and entity relationships when making predictions. The use of masking suggests a pre-training or fine-tuning task in which the LLM learns to fill in missing information based on context. Overall, the diagram highlights the potential of LLMs to integrate textual and knowledge-based information for natural language processing tasks.
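The masking step can be sketched as building an (input, target) pair for each sequence. This is an illustrative sketch under stated assumptions, not the training code behind the diagram: `MASK` and `mask_position` are hypothetical names, the masked position is assumed to be the last item (as drawn), and the `"..."` entries are kept as literal placeholders because the diagram elides the middle of both sequences.

```python
MASK = "[MASK]"


def mask_position(sequence, index):
    """Return (masked copy of sequence, original item at index)."""
    masked = list(sequence)  # copy so the caller's sequence is not modified
    target = masked[index]
    masked[index] = MASK
    return masked, target


# "..." stands for the items the diagram elides; they are not filled in here.
text_sequence = ["Mr. Darcy", "...", "letter"]
entity_sequence = ["Mother", "...", "Mr. Bennet"]

masked_text, text_target = mask_position(text_sequence, -1)
masked_entities, entity_target = mask_position(entity_sequence, -1)

print(masked_text, "->", text_target)
print(masked_entities, "->", entity_target)
```

In a real setup, the masked sequences would be tokenized and passed to the model, and the targets would supply the labels for the two prediction heads; those parts are outside what the diagram specifies.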