## Diagram: Causal Inference with Language Models

### Overview

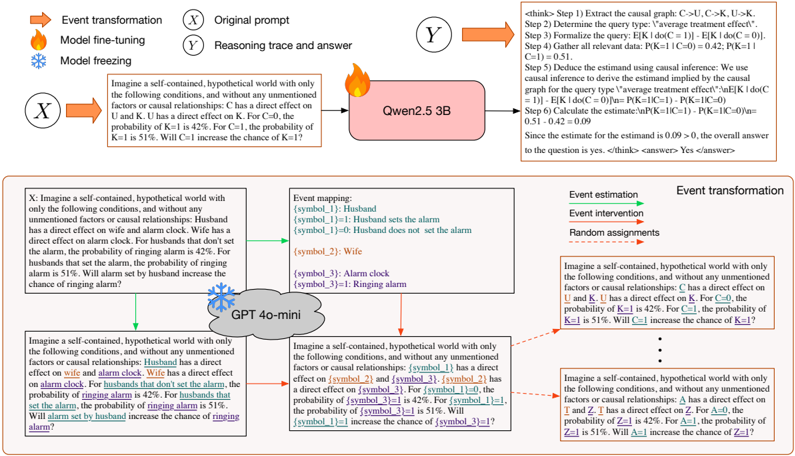

The image illustrates a causal inference process using language models. It shows how an original prompt is transformed, processed by different language models (Qwen2.5 3B and GPT 4o-mini), and how the reasoning trace and answer are generated. The diagram also includes event mapping and estimation steps.

### Components/Axes

* **Top-Left:**

* **Legend:**

* Orange Arrow: Event transformation

* Flame Icon: Model fine-tuning

* Snowflake Icon: Model freezing

* **X (in circle):** Original prompt

* Text: Imagine a self-contained, hypothetical world with only the following conditions, and without any unmentioned factors or causal relationships: C has a direct effect on U and K. U has a direct effect on K. For C=0, the probability of K=1 is 42%. For C=1, the probability of K=1 is 51%. Will C=1 increase the chance of K=1?

* **Top-Right:**

* **Y (in circle):** Reasoning trace and answer

* Text: `<answer> Yes </answer>`

* **Center:**

* Pink Rectangle: Qwen2.5 3B

* Flame Icon above the rectangle

* **Bottom-Left:**

* **X:** Imagine a self-contained, hypothetical world with only the following conditions, and without any unmentioned factors or causal relationships: Husband has a direct effect on wife and alarm clock. Wife has a direct effect on alarm clock. For husbands that don't set the alarm, the probability of ringing alarm is 42%. For husbands that set the alarm, the probability of ringing alarm is 51%. Will alarm set by husband increase the chance of ringing alarm?

* **Bottom-Center:**

* **Event mapping:**

* {symbol\_1}: Husband

* {symbol\_1}=1: Husband sets the alarm

* {symbol\_1}=0: Husband does not set the alarm

* {symbol\_2}: Wife

* {symbol\_3}: Alarm clock

* {symbol\_3}=1: Ringing alarm

* Cloud Shape: GPT 4o-mini

* Snowflake Icon above the cloud

* Text: Imagine a self-contained, hypothetical world with only the following conditions, and without any unmentioned factors or causal relationships: {symbol\_1} has a direct effect on {symbol\_2} and {symbol\_3}. {symbol\_2} has a direct effect on {symbol\_3}. For {symbol\_1}=0, the probability of {symbol\_3}=1 is 42%. For {symbol\_1}=1, the probability of {symbol\_3}=1 is 51%. Will {symbol\_1}=1 increase the chance of {symbol\_3}=1?

* **Bottom-Right:**

* **Event estimation**

* Event intervention

* Random assignments

* **Event transformation**

* Text: Imagine a self-contained, hypothetical world with only the following conditions, and without any unmentioned factors or causal relationships: C has a direct effect on U and K. U has a direct effect on K. For C=0, the probability of K=1 is 42%. For C=1, the probability of K=1 is 51%. Will C=1 increase the chance of K=1?

* Text: Imagine a self-contained, hypothetical world with only the following conditions, and without any unmentioned factors or causal relationships: A has a direct effect on T and Z. T has a direct effect on Z. For A=0, the probability of Z=1 is 42%. For A=1, the probability of Z=1 is 51%. Will A=1 increase the chance of Z=1?
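
The symbol-only prompts listed above differ from the `{symbol_N}` template only in which names fill the placeholders. A minimal sketch of that substitution follows. It assumes that "Random assignments" means drawing distinct capital letters at random, which the diagram does not confirm. The names `fill_template` and `random_symbols` are hypothetical and do not come from the diagram.

```python
import random
import string

# Template text as shown in the diagram, with one placeholder per event.
TEMPLATE = (
    "Imagine a self-contained, hypothetical world with only the following "
    "conditions, and without any unmentioned factors or causal relationships: "
    "{symbol_1} has a direct effect on {symbol_2} and {symbol_3}. "
    "{symbol_2} has a direct effect on {symbol_3}. "
    "For {symbol_1}=0, the probability of {symbol_3}=1 is 42%. "
    "For {symbol_1}=1, the probability of {symbol_3}=1 is 51%. "
    "Will {symbol_1}=1 increase the chance of {symbol_3}=1?"
)


def fill_template(template: str, symbols: list[str]) -> str:
    """Replace {symbol_1}, {symbol_2}, ... with the given names, in order."""
    mapping = {f"symbol_{i}": s for i, s in enumerate(symbols, start=1)}
    return template.format(**mapping)


def random_symbols(n: int, rng: random.Random) -> list[str]:
    """Draw n distinct capital letters (an assumed reading of 'random assignments')."""
    return rng.sample(string.ascii_uppercase, n)


# Reproduces the original prompt from the diagram.
original = fill_template(TEMPLATE, ["C", "U", "K"])

# A new surface form of the same problem with randomly chosen letters.
variant = fill_template(TEMPLATE, random_symbols(3, random.Random(0)))

print(original)
print(variant)
```

Passing `["A", "T", "Z"]` yields the second symbolic prompt shown in the diagram. The causal structure and the probabilities are unchanged in every variant.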

### Detailed Analysis

* **Original Prompt (Top-Left):** The prompt describes a hypothetical world where variable C affects U and K, and U affects K. It provides probabilities for K=1 given C=0 (42%) and C=1 (51%), asking if C=1 increases the chance of K=1.

* **Qwen2.5 3B (Center):** This language model, marked with the flame icon (fine-tuning), takes the original prompt as input and produces the reasoning trace and answer.

* **Reasoning Trace and Answer (Top-Right):** The model extracts the causal graph, determines the query type as "average treatment effect," formalizes the query, gathers relevant data (P(K=1|C=0)=0.42, P(K=1|C=1)=0.51), and deduces the estimand using causal inference. It calculates the estimate as 0.09, concluding that the answer is yes.

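* **GPT 4o-mini (Bottom-Center):** This model, marked with the snowflake icon (frozen), is associated with the natural-language prompt about a husband, a wife, and an alarm clock. The event mapping assigns each event to a placeholder ({symbol\_1}: Husband, {symbol\_2}: Wife, {symbol\_3}: Alarm clock), which yields a symbolic template of the prompt with the same causal structure and the same probabilities (42% and 51%).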

* **Event Transformation (Arrows):** Orange arrows indicate event transformation. Green arrows indicate event estimation.

* **Model States:** The flame icon represents model fine-tuning, while the snowflake icon represents model freezing.
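
The arithmetic behind the reasoning trace can be sketched as follows. In the stated graph, C has no parents, so nothing confounds C and K, and the average treatment effect reduces to the difference of the two conditional probabilities given in the prompt. This is an illustrative sketch of that calculation, not the model's actual procedure. The function names are hypothetical.

```python
def average_treatment_effect(p_treated: float, p_control: float) -> float:
    """ATE = P(K=1 | C=1) - P(K=1 | C=0), valid here because C is unconfounded."""
    return p_treated - p_control


def yes_no(ate: float) -> str:
    """Answer 'Will C=1 increase the chance of K=1?' from the sign of the ATE."""
    return "Yes" if ate > 0 else "No"


# Probabilities taken from the prompt: P(K=1|C=1)=0.51 and P(K=1|C=0)=0.42.
ate = average_treatment_effect(p_treated=0.51, p_control=0.42)
final_answer = f"<answer> {yes_no(ate)} </answer>"

# Round for display, since the float difference is not exactly 0.09.
print(round(ate, 2), final_answer)
```

A positive estimate gives "Yes", which matches the answer shown in the top-right of the diagram.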

### Key Observations

* The diagram showcases the use of language models for causal inference.

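* Two language models appear in different roles: Qwen2.5 3B is fine-tuned (flame icon) and produces the reasoning trace and answer, while GPT 4o-mini is frozen (snowflake icon) and is associated with the event mapping.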

* The reasoning trace provides a step-by-step explanation of how the language model arrives at the answer.

* The event mapping and estimation steps are crucial for translating the natural language prompt into a formal causal inference problem.

### Interpretation

The diagram demonstrates how language models can be used to perform causal inference by extracting causal relationships from text, formalizing queries, and calculating estimates. The reasoning trace makes each step behind the model's answer inspectable, which matters for building trust in AI systems. The same probabilities (42% and 51%) appear in every scenario (C affecting K, the husband setting the alarm, A affecting Z) because the prompts are rewritings of one underlying problem with the event names swapped. That consistency is a property of how the prompts are constructed, not evidence of a pattern-recognition capability in the models. The pairing of a frozen GPT 4o-mini with a fine-tuned Qwen2.5 3B suggests that the transformed prompts are meant to expose the smaller model to the same causal question under different surface wordings.