## Heatmap Grid: Heads Importance by Task and Layer

### Overview

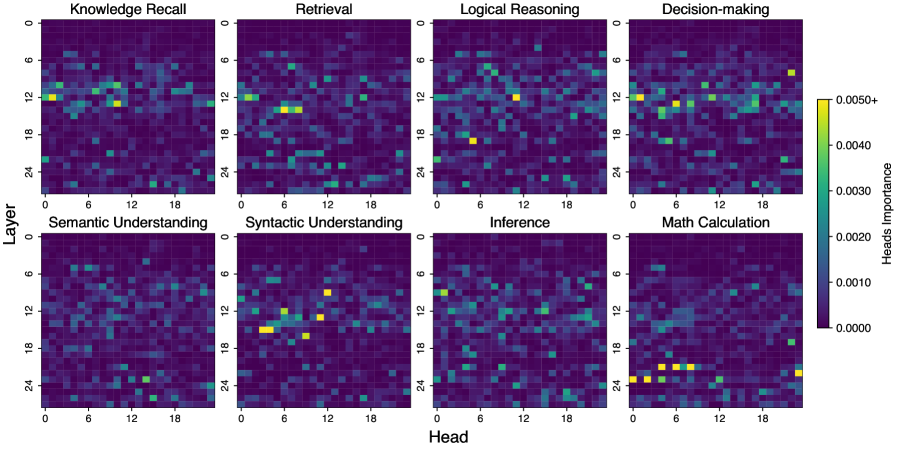

The image shows eight heatmaps in a 2x4 grid, one per task. Each heatmap visualizes the importance of individual "heads" (likely attention heads in a neural network) across the layers of the model. The y-axis gives the layer index (ticks from 0 to 24), the x-axis gives the head index (ticks from 0 to 18), and the color intensity of each cell encodes the importance of that head in that layer for the given task.

### Components/Axes

* **Titles:** The heatmaps are titled with the following tasks: "Knowledge Recall", "Retrieval", "Logical Reasoning", "Decision-making", "Semantic Understanding", "Syntactic Understanding", "Inference", and "Math Calculation".

* **X-axis:** Labeled "Head", with ticks at 0, 6, 12, and 18. Represents the index of the attention head.

* **Y-axis:** Labeled "Layer", with ticks at 0, 6, 12, 18, and 24. Represents the layer number in the neural network.

* **Colorbar (Heads Importance):** Located on the right side of the "Decision-making" heatmap. It ranges from 0.0000 (dark purple) to 0.0050+ (yellow). Intermediate values are 0.0010, 0.0020, 0.0030, and 0.0040, with corresponding color gradations.
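The "0.0050+" label at the top of the colorbar suggests that scores above 0.0050 are drawn in the same top color rather than extending the scale. A minimal sketch of that clipping step, assuming the scores are stored as a nested list indexed `[layer][head]`; the function name and the sample values are hypothetical and are not read from the figure:

```python
def clip_for_colorbar(importance, vmin=0.0, vmax=0.005):
    """Clamp every score into [vmin, vmax] so larger values share the top color."""
    return [[min(max(v, vmin), vmax) for v in row] for row in importance]


# Hypothetical 2-layer x 3-head grid (illustrative values only).
example = [
    [0.0002, 0.0071, 0.0030],
    [0.0049, 0.0000, 0.0125],
]
clipped = clip_for_colorbar(example)
```

With this clamping, the two scores above 0.0050 would both render as yellow, which is why the top tick carries a "+".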

### Detailed Analysis

Brighter cells mark more important heads. The pattern for each task is as follows:

* **Knowledge Recall:** Shows some concentration of importance around layer 12, with a few heads showing higher importance.

* **Retrieval:** Similar to Knowledge Recall, with some heads showing slightly higher importance around layer 12.

* **Logical Reasoning:** Shows a more dispersed pattern of importance, with a few heads showing higher importance across different layers.

* **Decision-making:** Shows some concentration of importance in the early-to-middle layers (around layers 6 and 12), with a few heads showing higher importance.

* **Semantic Understanding:** Shows a dispersed pattern of importance, with a few heads showing higher importance across different layers.

* **Syntactic Understanding:** Shows a concentration of importance around layer 12, with a few heads showing significantly higher importance.

* **Inference:** Shows a dispersed pattern of importance, with a few heads showing higher importance across different layers.

* **Math Calculation:** Shows a concentration of importance in the later layers (around layers 18 to 24), with a few heads showing significantly higher importance.

### Key Observations

* **Layer Specificity:** Some tasks, like "Syntactic Understanding" and "Math Calculation", show a clear concentration of importance in specific layers. "Syntactic Understanding" is concentrated around layer 12, while "Math Calculation" is concentrated in the later layers (18-24).

* **Head Importance:** Within each task, only a subset of heads appears to be highly important.

* **Color Scale:** The color scale is consistent across all heatmaps, allowing for direct comparison of head importance across different tasks and layers.
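The observations above are read off the figure by eye. If the underlying scores were available, they could be checked numerically. The following is a minimal sketch, assuming each task's scores are stored as a nested list indexed `[layer][head]`; the helper names, the threshold, and the toy values are all hypothetical and do not come from the figure:

```python
def layer_profile(importance):
    """Total importance per layer, summed over all heads in that layer."""
    return [sum(row) for row in importance]


def peak_layer(importance):
    """Index of the layer with the largest total importance."""
    profile = layer_profile(importance)
    return max(range(len(profile)), key=profile.__getitem__)


def fraction_important(importance, threshold):
    """Share of (layer, head) cells whose score is at or above the threshold."""
    scores = [v for row in importance for v in row]
    return sum(v >= threshold for v in scores) / len(scores)


# Toy 4-layer x 3-head grid (illustrative values only).
toy = [
    [0.0001, 0.0002, 0.0001],
    [0.0003, 0.0040, 0.0002],
    [0.0001, 0.0001, 0.0055],
    [0.0002, 0.0001, 0.0001],
]

print(peak_layer(toy))                 # layer carrying the most importance
print(fraction_important(toy, 0.003))  # how sparse the important heads are
```

A sharply peaked layer profile would correspond to the "Layer Specificity" observation, and a small fraction of cells above the threshold would correspond to the "Head Importance" observation.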

### Interpretation

The heatmaps indicate which attention heads matter most for each task and at which depth of the network. The concentration of importance in specific layers for certain tasks suggests that the network may be learning hierarchical representations, with different layers specializing in different aspects of a task. For example, the concentration around layer 12 for "Syntactic Understanding" might indicate that mid-depth layers handle structural analysis, while the concentration in layers 18-24 for "Math Calculation" might indicate that this task relies on later processing stages. The fact that only a subset of heads is highly important for each task suggests sparse, specialized use of heads. This information could be used to optimize the network architecture or to better understand how the network solves each task.