## Diagram: Benchmark Generation Pipeline

### Overview

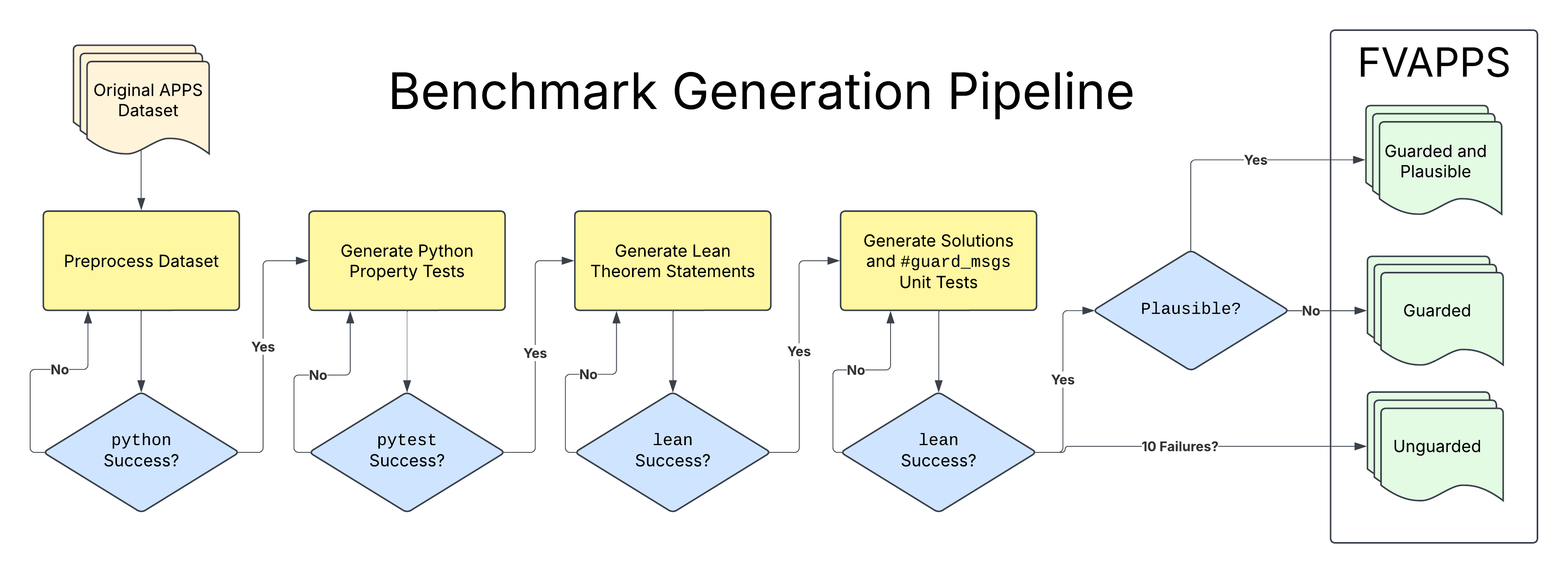

This diagram shows the benchmark generation pipeline for the FVAPPS project. Starting from the original APPS dataset, the pipeline runs through preprocessing, test generation, and verification stages, each followed by a decision point. Every path ends in one of three output states: "Guarded and Plausible", "Guarded", or "Unguarded".

### Components/Axes

The diagram consists of rectangular process blocks, diamond-shaped decision points, and rounded rectangle output states. Arrows indicate the flow of the pipeline. The key components are:

* **Original APPS Dataset:** The starting point of the pipeline.

* **Preprocess Dataset:** A processing step.

* **Generate Python Property Tests:** A processing step.

* **Generate Lean Theorem Statements:** A processing step.

* **Generate Solutions and #guard_msgs Unit Tests:** A processing step.

* **Plausible?:** A decision point.

* **python Success?:** A decision point.

* **pytest Success?:** A decision point.

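* **lean Success?:** A decision point following "Generate Lean Theorem Statements".

* **lean Success?:** A second decision point, following "Generate Solutions and #guard_msgs Unit Tests".

* **10 Failures?:** A check reached when "Plausible?" answers "No".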

* **Guarded and Plausible:** An output state.

* **Guarded:** An output state.

* **Unguarded:** An output state.

* **FVAPPS:** A label in the top-right corner.

### Detailed Analysis or Content Details

The pipeline flow is as follows:

1. The process begins with the "Original APPS Dataset".

2. The dataset is fed into the "Preprocess Dataset" block.

3. From "Preprocess Dataset", the flow splits based on the "python Success?" decision point.

   * If "Yes", the flow proceeds to "Generate Python Property Tests".

   * If "No", the flow returns to "Preprocess Dataset".

4. From "Generate Python Property Tests", the flow splits based on the "pytest Success?" decision point.

   * If "Yes", the flow proceeds to "Generate Lean Theorem Statements".

   * If "No", the flow returns to "Preprocess Dataset".

5. From "Generate Lean Theorem Statements", the flow splits based on the first "lean Success?" decision point.

   * If "Yes", the flow proceeds to "Generate Solutions and #guard_msgs Unit Tests".

   * If "No", the flow returns to "Preprocess Dataset".

6. From "Generate Solutions and #guard_msgs Unit Tests", the flow splits based on the second "lean Success?" decision point.

   * If "Yes", the flow proceeds to the "Plausible?" decision point.

   * If "No", the flow returns to "Preprocess Dataset".

7. From "Plausible?", the flow splits:

   * If "Yes", the flow proceeds to "Guarded and Plausible".

   * If "No", the flow proceeds to the "10 Failures?" check.

8. From "10 Failures?", the flow splits:

   * If the check is true, the flow proceeds to "Unguarded".

   * If the check is false, the flow proceeds to "Guarded".
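The flow above can be sketched as a transition table in Python. This is only an illustration of the arrows as described here, not code from the FVAPPS project: the `TRANSITIONS` table, the `walk` function, and the "(theorems)" and "(solutions)" suffixes that tell the two "lean Success?" nodes apart are assumptions made for the sketch.

```python
from typing import Dict, Iterable, List, Optional, Tuple

# (current node, answer) -> next node. An answer of None marks an
# unconditional arrow; True/False mark the two branches of a decision.
# The "(theorems)"/"(solutions)" suffixes are hypothetical labels.
TRANSITIONS: Dict[Tuple[str, Optional[bool]], str] = {
    ("Original APPS Dataset", None): "Preprocess Dataset",
    ("Preprocess Dataset", None): "python Success?",
    ("python Success?", True): "Generate Python Property Tests",
    ("python Success?", False): "Preprocess Dataset",
    ("Generate Python Property Tests", None): "pytest Success?",
    ("pytest Success?", True): "Generate Lean Theorem Statements",
    ("pytest Success?", False): "Preprocess Dataset",
    ("Generate Lean Theorem Statements", None): "lean Success? (theorems)",
    ("lean Success? (theorems)", True): "Generate Solutions and #guard_msgs Unit Tests",
    ("lean Success? (theorems)", False): "Preprocess Dataset",
    ("Generate Solutions and #guard_msgs Unit Tests", None): "lean Success? (solutions)",
    ("lean Success? (solutions)", True): "Plausible?",
    ("lean Success? (solutions)", False): "Preprocess Dataset",
    ("Plausible?", True): "Guarded and Plausible",
    ("Plausible?", False): "10 Failures?",
    ("10 Failures?", True): "Unguarded",
    ("10 Failures?", False): "Guarded",
}

OUTPUT_STATES = {"Guarded and Plausible", "Guarded", "Unguarded"}


def walk(answers: Iterable[bool], start: str = "Original APPS Dataset") -> List[str]:
    """Follow the arrows from `start`, consuming one answer per decision node.

    Stops at an output state, or at a decision node once the answers run out.
    Returns the list of visited nodes in order.
    """
    remaining = iter(answers)
    node = start
    path = [node]
    while node not in OUTPUT_STATES:
        if (node, None) in TRANSITIONS:
            # Processing block: a single outgoing arrow.
            node = TRANSITIONS[(node, None)]
        else:
            # Decision node: needs a yes/no answer to pick a branch.
            try:
                answer = next(remaining)
            except StopIteration:
                break
            node = TRANSITIONS[(node, answer)]
        path.append(node)
    return path
```

For example, `walk([True] * 5)` ends in "Guarded and Plausible", while `walk([True] * 4 + [False, True])` passes every success check, fails "Plausible?", and ends in "Unguarded". A leading `False` sends the walk back through "Preprocess Dataset" a second time before it continues.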

### Key Observations

The pipeline is cyclical: every failed success check returns the flow to the "Preprocess Dataset" block, which indicates iterative retrying rather than a single pass. The decision points ("python Success?", "pytest Success?", the two "lean Success?" checks, "Plausible?", and "10 Failures?") gate progress from one stage to the next. The three output states ("Guarded and Plausible", "Guarded", "Unguarded") grade each generated benchmark by which checks it passed.

### Interpretation

The diagram describes a staged, gated process for building FVAPPS benchmarks from APPS problems. Each generation stage is followed by a check with a specific tool (Python, pytest, or Lean), and a failed check sends the flow back to "Preprocess Dataset" rather than discarding the sample. The "10 Failures?" check suggests a retry budget of ten attempts, although the diagram does not say which failures are counted.

The names of the output states appear to come from the Lean tooling rather than from general notions of safety or realism. `#guard_msgs` is a Lean 4 command that checks whether the messages produced by a command match an expected doc comment, so "Guarded" most likely means that the generated solution passed its `#guard_msgs` unit tests. "Plausible" most likely refers to Plausible, a property-based testing library for Lean 4, so "Guarded and Plausible" would mean that the theorem statements also survived randomized testing without a counterexample. "Unguarded" benchmarks are still retained as an output, presumably with weaker guarantees.
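For readers unfamiliar with the command, here is a minimal sketch of what a `#guard_msgs` unit test looks like in Lean 4. The function `double` and its expected value are hypothetical and are not taken from FVAPPS; the snippet only illustrates the mechanism.

```lean
-- Hypothetical solution function, used only for illustration.
def double (n : Nat) : Nat := n + n

-- `#guard_msgs` compares the messages emitted by the next command
-- with the doc comment above it and reports an error on a mismatch.
/--
info: 6
-/
#guard_msgs in
#eval double 3
```

If `double` were changed so that `#eval double 3` printed a different value, the file would fail to check, which is what makes this usable as a unit test inside a Lean source file.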