## Diagram: Benchmark Generation Pipeline

### Overview

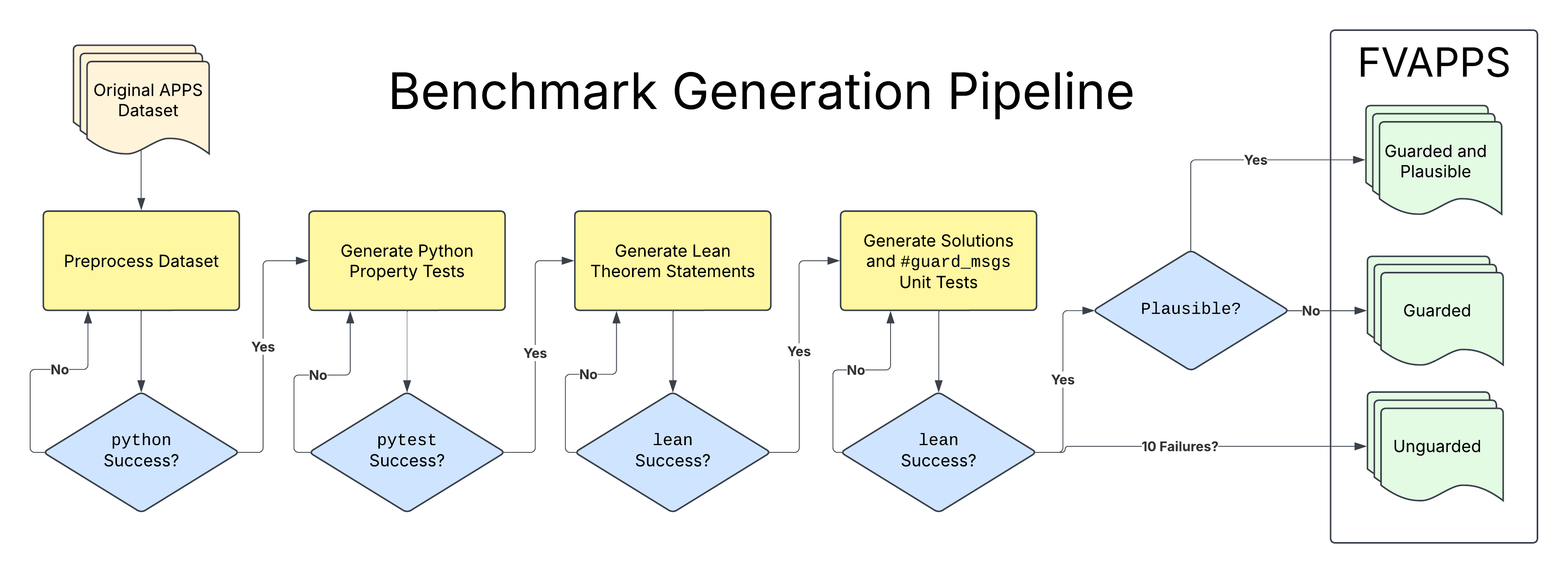

This flowchart illustrates a multi-stage pipeline that generates a benchmark dataset called "FVAPPS" from an initial source, the "Original APPS Dataset." Each generation step is followed by a validation check. The final output falls into one of three categories, determined by the last two checks (the second "lean Success?" and "Plausible?").

### Components

The diagram is structured as a left-to-right flowchart with the following key components:

1. **Title:** "Benchmark Generation Pipeline" (centered at the top).

2. **Input:** A document stack icon labeled "Original APPS Dataset" (top-left).

3. **Process Steps (Yellow Rectangles):**

* Preprocess Dataset

* Generate Python Property Tests

* Generate Lean Theorem Statements

* Generate Solutions and #guard_msgs Unit Tests

4. **Decision Points (Blue Diamonds):**

* python Success?

* pytest Success?

* lean Success? (appears twice)

* Plausible?

5. **Output Section (Right Side):** A large container labeled "FVAPPS" containing three document stack icons representing the final output categories:

* Guarded and Plausible (top)

* Guarded (middle)

* Unguarded (bottom)

6. **Flow Control:** Arrows connect the components, labeled with "Yes" or "No" to indicate the path taken based on the outcome of a decision point. One arrow is labeled "10 Failures?".
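The components listed above can be written down as a small directed graph, which makes it easy to check that every path ends in one of the three FVAPPS categories. This is only a sketch of the diagram as described here: the edge list is transcribed from the labels above, the `[statements]` and `[solutions]` suffixes are hypothetical names used to tell the two "lean Success?" diamonds apart, and `terminal_nodes` is an illustrative helper, not part of any real tool.

```python
from collections import deque

# Edges as (source, arrow label, target). Labels are the arrow text described
# above; an empty label means the arrow is unlabeled.
# The "[statements]" / "[solutions]" suffixes are hypothetical disambiguators.
EDGES = [
    ("Original APPS Dataset", "", "Preprocess Dataset"),
    ("Preprocess Dataset", "", "python Success?"),
    ("python Success?", "No", "Preprocess Dataset"),
    ("python Success?", "Yes", "Generate Python Property Tests"),
    ("Generate Python Property Tests", "", "pytest Success?"),
    ("pytest Success?", "No", "Generate Python Property Tests"),
    ("pytest Success?", "Yes", "Generate Lean Theorem Statements"),
    ("Generate Lean Theorem Statements", "", "lean Success? [statements]"),
    ("lean Success? [statements]", "No", "Generate Lean Theorem Statements"),
    ("lean Success? [statements]", "Yes",
     "Generate Solutions and #guard_msgs Unit Tests"),
    ("Generate Solutions and #guard_msgs Unit Tests", "",
     "lean Success? [solutions]"),
    ("lean Success? [solutions]", "10 Failures?", "Unguarded"),
    ("lean Success? [solutions]", "Yes", "Plausible?"),
    ("Plausible?", "Yes", "Guarded and Plausible"),
    ("Plausible?", "No", "Guarded"),
]


def terminal_nodes(edges, start):
    """Return the nodes reachable from `start` that have no outgoing edges."""
    successors = {}
    for src, _label, dst in edges:
        successors.setdefault(src, []).append(dst)

    seen = {start}
    queue = deque([start])
    while queue:  # plain breadth-first traversal
        node = queue.popleft()
        for nxt in successors.get(node, []):
            if nxt not in seen:
                seen.add(nxt)
                queue.append(nxt)
    return {node for node in seen if node not in successors}
```

Calling `terminal_nodes(EDGES, "Original APPS Dataset")` returns exactly the three output categories, which matches the "FVAPPS" container in the diagram.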

### Detailed Analysis

The pipeline proceeds through the following sequential stages:

1. **Input:** The process begins with the "Original APPS Dataset."

2. **Stage 1 - Preprocessing:**

* **Process:** "Preprocess Dataset."

* **Decision:** "python Success?"

* **Flow:** If "No," the process loops back to "Preprocess Dataset." If "Yes," it proceeds to the next stage.

3. **Stage 2 - Python Test Generation:**

* **Process:** "Generate Python Property Tests."

* **Decision:** "pytest Success?"

* **Flow:** If "No," the process loops back to "Generate Python Property Tests." If "Yes," it proceeds.

4. **Stage 3 - Theorem Generation:**

* **Process:** "Generate Lean Theorem Statements."

* **Decision:** "lean Success?"

* **Flow:** If "No," the process loops back to "Generate Lean Theorem Statements." If "Yes," it proceeds.

5. **Stage 4 - Solution & Guard Generation:**

* **Process:** "Generate Solutions and #guard_msgs Unit Tests."

* **Decision:** "lean Success?"

* **Flow:** This decision has two exit paths:

  * **Path A (Failure):** An arrow labeled "10 Failures?" leads directly to the "Unguarded" output category.

  * **Path B (Success):** An arrow labeled "Yes" leads to the next decision point, "Plausible?".

6. **Final Classification:**

* **Decision:** "Plausible?"

* **Flow:** This decision classifies the successfully generated item into one of two final categories:

  * If "Yes," the output is categorized as "Guarded and Plausible."

  * If "No," the output is categorized as "Guarded."
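The stages above can be sketched as plain control flow. This is a minimal illustration under stated assumptions, not the pipeline's actual implementation: every generator and validator is a hypothetical callable supplied by the caller, the first three loops are unbounded because the diagram shows no exit from them, and the "10 Failures?" arrow is read as "give up after ten failed attempts at Stage 4" (the diagram does not define the threshold).

```python
# Assumption: "10 Failures?" means ten failed Stage 4 attempts.
MAX_STAGE4_FAILURES = 10


def retry(generate, validate, source, max_attempts=None):
    """Regenerate from `source` until `validate` accepts the candidate.

    Returns the accepted candidate, or None once `max_attempts` is exhausted.
    With max_attempts=None the loop is unbounded, as the diagram draws it.
    """
    attempts = 0
    while max_attempts is None or attempts < max_attempts:
        candidate = generate(source)
        attempts += 1
        if validate(candidate):
            return candidate
    return None


def run_pipeline(sample, looped_stages, solution_stage, is_plausible):
    """Classify one sample into an FVAPPS output category.

    looped_stages:  (generate, validate) pairs for Stages 1-3 (hypothetical).
    solution_stage: (generate, validate) pair for Stage 4 (hypothetical).
    is_plausible:   predicate standing in for the "Plausible?" diamond.
    """
    artifact = sample
    for generate, validate in looped_stages:
        artifact = retry(generate, validate, artifact)

    generate, validate = solution_stage
    solved = retry(generate, validate, artifact,
                   max_attempts=MAX_STAGE4_FAILURES)
    if solved is None:
        return "Unguarded"  # the "10 Failures?" exit
    return "Guarded and Plausible" if is_plausible(solved) else "Guarded"
```

With stub stages that always pass, for example `ok = (lambda x: x, lambda x: True)`, the call `run_pipeline("sample", [ok, ok, ok], ok, lambda x: False)` returns `"Guarded"`. A real pipeline would likely also cap the first three loops, but the diagram does not show such a limit.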

### Key Observations

* **Iterative Refinement:** The first three stages (Preprocessing, Python Test Generation, Theorem Generation) feature feedback loops. Failure at the validation step ("No") causes the process to retry the same generation step, suggesting an iterative or corrective approach.

* **Critical Failure Path:** The "Generate Solutions and #guard_msgs Unit Tests" stage has a distinct failure mode. The failure exit from its "lean Success?" check is labeled "10 Failures?" rather than "No." The diagram does not define this threshold; the most natural reading is ten failed attempts. Items that take this exit are classified as "Unguarded" and never reach the "Plausible?" check.

* **Two-Tiered Success:** Passing all technical validations (python, pytest, lean) does not guarantee the highest-quality output. A final "Plausible?" check separates "Guarded and Plausible" from merely "Guarded" outputs.

* **Output Hierarchy:** The three output categories in the "FVAPPS" box imply a quality or reliability hierarchy: "Guarded and Plausible" (highest), "Guarded" (middle), and "Unguarded" (lowest).
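If the hierarchy reading above is adopted, the categories can be modeled as an ordered enumeration so that consumers of the benchmark can select samples at or above a given level. The ordering is this description's interpretation rather than something the diagram states, and `Tier` and `at_least` are hypothetical names used only for illustration.

```python
from enum import IntEnum


class Tier(IntEnum):
    """Output categories, ordered by the hierarchy inferred above (an assumption)."""
    UNGUARDED = 0
    GUARDED = 1
    GUARDED_AND_PLAUSIBLE = 2


def at_least(samples, minimum):
    """Return the names of samples whose tier is `minimum` or higher.

    `samples` is an iterable of (name, Tier) pairs.
    """
    return [name for name, tier in samples if tier >= minimum]
```

For example, given the pairs `("a", Tier.GUARDED)`, `("b", Tier.UNGUARDED)`, and `("c", Tier.GUARDED_AND_PLAUSIBLE)`, calling `at_least(samples, Tier.GUARDED)` keeps `"a"` and `"c"`.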

### Interpretation

This diagram details a technical pipeline for creating a validated benchmark (FVAPPS) from a source dataset (APPS). The process is designed to automatically generate and verify code-related artifacts: Python property tests, Lean theorem statements, and solutions with #guard_msgs unit tests.

The pipeline's structure reveals its core purpose: **to classify programming problems or solutions based on their verifiable correctness and plausibility.** The multiple "Success?" checks act as gates on technical soundness (the code runs, the tests pass, and Lean accepts the generated artifacts). The final "Plausible?" gate adds a further check beyond successful execution; the diagram does not specify how plausibility is assessed.

The "Unguarded" category for items that take the "10 Failures?" exit suggests that the pipeline keeps and labels failure cases rather than discarding them. The result is a benchmark with graded levels of verification, which is valuable for evaluating AI systems: some items pass both the Lean check and the plausibility check ("Guarded and Plausible"), some pass only the Lean check ("Guarded"), and some have solutions and unit tests that did not pass the Lean check ("Unguarded"). The name "FVAPPS" likely stands for "Formally Verified APPS" or a similar concept, emphasizing the formal methods (Lean theorems) used in the generation process.