
## Chart: Gradient Size and Variance vs. Epochs

### Overview

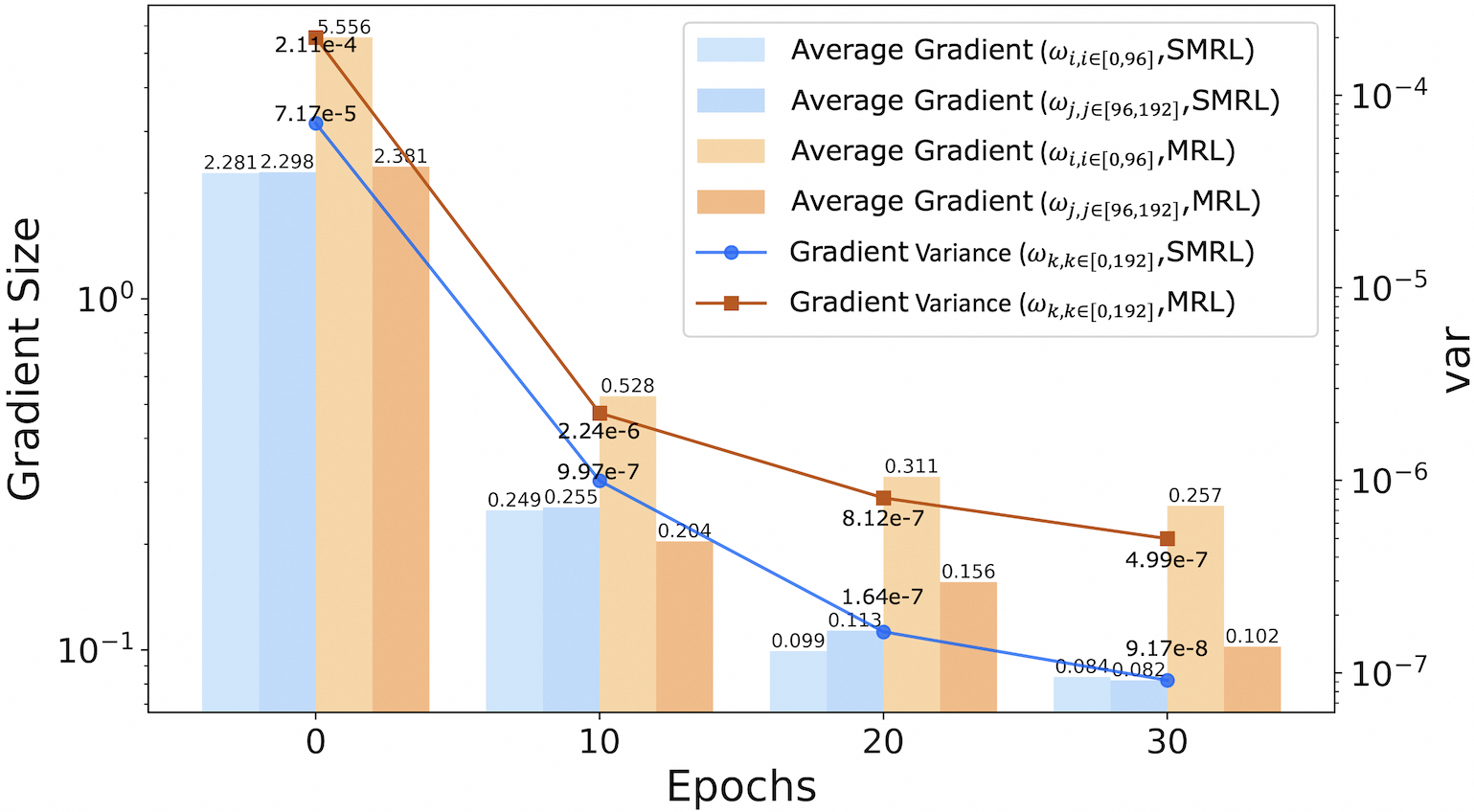

The image shows a dual-axis chart of Gradient Size and Variance against Epochs. Four lines show the Average Gradient for different configurations, and two marker series show the Gradient Variance for different configurations. Gradient Size (left y-axis) is plotted on a logarithmic scale and Variance (right y-axis) on a linear scale.

### Components/Axes

* **X-axis:** Epochs, ranging from approximately -2 to 35.

* **Left Y-axis:** Gradient Size, on a logarithmic scale (base 10). Scale ranges from approximately 10<sup>-8</sup> to 10<sup>-4</sup>. Label: "Gradient Size".

* **Right Y-axis:** Variance (var), on a linear scale. Scale ranges from approximately 1x10<sup>-7</sup> to 1x10<sup>-4</sup>. Label: "var".

* **Legend:** Located in the top-right corner. Contains the following entries:

  * Average Gradient (ω<sub>i,j,e</sub>[0.96],SMRL) - Blue line

  * Average Gradient (ω<sub>i,j,e</sub>[96,192],SMRL) - Orange line

  * Average Gradient (ω<sub>i,j,e</sub>[0.96],MRL) - Teal line

  * Average Gradient (ω<sub>i,j,e</sub>[96,192],MRL) - Yellow line

  * Gradient Variance (ω<sub>k,k,e</sub>[0.192],SMRL) - Blue circles

  * Gradient Variance (ω<sub>k,k,e</sub>[0.192],MRL) - Orange squares
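To see why the two axes read so differently, the minimal Python sketch below maps a value to its vertical position (0 = bottom, 1 = top) on a logarithmic and on a linear axis, using the approximate ranges listed above. The function name `axis_fraction` is hypothetical; it illustrates the scale arithmetic only, not any plotting library's API.

```python
import math


def axis_fraction(value, lo, hi, log=False):
    """Return where `value` sits between `lo` and `hi` as a fraction of axis height.

    0 means the bottom of the axis and 1 the top. With log=True the position is
    computed on log10 values, as a base-10 logarithmic axis would place it.
    """
    if log:
        return (math.log10(value) - math.log10(lo)) / (math.log10(hi) - math.log10(lo))
    return (value - lo) / (hi - lo)


# Approximate axis ranges as described for the chart.
LEFT = (1e-8, 1e-4)   # Gradient Size, logarithmic
RIGHT = (1e-7, 1e-4)  # var, described as linear

if __name__ == "__main__":
    for v in (1e-7, 1e-6, 1e-5):
        print(f"{v:.0e}: log axis {axis_fraction(v, *LEFT, log=True):.2f}, "
              f"linear axis {axis_fraction(v, *RIGHT):.3f}")
```

With these ranges, 1e-7, 1e-6, and 1e-5 sit at one quarter, one half, and three quarters of the log-scaled Gradient Size axis, but all three fall within the bottom 10% of a linear axis spanning 1e-7 to 1e-4.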

### Detailed Analysis

**Average Gradient Lines:**

* **Blue Line (ω<sub>i,j,e</sub>[0.96],SMRL):** The line slopes sharply downward from approximately 2.11e-4 at Epoch -2 to approximately 9.17e-8 at Epoch 30. Data points: (-2, 2.11e-4), (0, 7.17e-5), (10, 2.24e-6), (20, 1.64e-7), (30, 9.17e-8).

* **Orange Line (ω<sub>i,j,e</sub>[96,192],SMRL):** The line slopes downward, but less steeply than the blue line, starting at approximately 2.381 at Epoch -2 and ending at approximately 0.102 at Epoch 30. Data points: (-2, 2.381), (0, 2.381), (10, 0.249), (20, 0.156), (30, 0.102).

* **Teal Line (ω<sub>i,j,e</sub>[0.96],MRL):** The line slopes downward, starting at approximately 2.98 at Epoch -2 and ending at approximately 0.082 at Epoch 30. Data points: (-2, 2.98), (0, 2.812), (10, 0.528), (20, 0.099), (30, 0.082).

* **Yellow Line (ω<sub>i,j,e</sub>[96,192],MRL):** The line decreases overall, from approximately 5.556 at Epoch -2 to approximately 0.257 at Epoch 30, but not monotonically: it drops to about 2.11e-4 at Epoch 0, then rises to about 0.204 at Epoch 10 and 0.311 at Epoch 20. Data points: (-2, 5.556), (0, 2.11e-4), (10, 0.204), (20, 0.311), (30, 0.257).
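The steepness comparisons in this section can be checked against the listed data points. The Python sketch below copies those approximate values, computes each line's end-to-end reduction factor (first value divided by last), and checks whether each line is non-increasing. The names `SERIES`, `reduction_factor`, and `is_non_increasing` are hypothetical, and because the values are approximate readings, the results are only as precise as those readings.

```python
# Data points as listed for the Average Gradient lines: (epoch, value).
SERIES = {
    "blue (SMRL, [0.96])": [(-2, 2.11e-4), (0, 7.17e-5), (10, 2.24e-6), (20, 1.64e-7), (30, 9.17e-8)],
    "orange (SMRL, [96,192])": [(-2, 2.381), (0, 2.381), (10, 0.249), (20, 0.156), (30, 0.102)],
    "teal (MRL, [0.96])": [(-2, 2.98), (0, 2.812), (10, 0.528), (20, 0.099), (30, 0.082)],
    "yellow (MRL, [96,192])": [(-2, 5.556), (0, 2.11e-4), (10, 0.204), (20, 0.311), (30, 0.257)],
}


def reduction_factor(points):
    """How many times smaller the last listed value is than the first."""
    return points[0][1] / points[-1][1]


def is_non_increasing(points):
    """True if no listed value is larger than the one before it."""
    values = [v for _, v in points]
    return all(b <= a for a, b in zip(values, values[1:]))


if __name__ == "__main__":
    # Print from largest overall reduction to smallest.
    ranked = sorted(SERIES.items(), key=lambda kv: reduction_factor(kv[1]), reverse=True)
    for name, pts in ranked:
        print(f"{name}: {reduction_factor(pts):,.1f}x smaller, "
              f"non-increasing={is_non_increasing(pts)}")
```

On these values, the blue line ranks first (roughly 2,300 times smaller at Epoch 30), the yellow line ranks last, and the yellow line is the only Average Gradient series that rises between any two listed points.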

**Gradient Variance Lines:**

* **Blue Circles (ω<sub>k,k,e</sub>[0.192],SMRL):** The series decreases overall, from approximately 2.98 at Epoch -2 to approximately 4.99e-7 at Epoch 30, with a slight rise between Epoch 20 (about 1.64e-7) and Epoch 30. Data points: (-2, 2.98), (0, 7.17e-5), (10, 9.97e-7), (20, 1.64e-7), (30, 4.99e-7).

* **Orange Squares (ω<sub>k,k,e</sub>[0.192],MRL):** The series decreases overall, starting at approximately 2.381 at Epoch -2 and ending at approximately 0.082 at Epoch 30. Data points: (-2, 2.381), (0, 2.381), (10, 0.249), (20, 0.156), (30, 0.082).
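An end-to-end ratio ignores the intermediate points. A complementary way to compare the Gradient Variance series is to fit a straight line to log10(value) against epoch, which estimates an average decay rate in orders of magnitude (decades) per epoch, assuming roughly exponential decay. This least-squares fit is a swapped-in analysis technique, not something the chart itself reports, and `VARIANCE` and `log_decay_slope` are hypothetical names.

```python
import math

# Data points as listed for the Gradient Variance series: (epoch, value).
VARIANCE = {
    "blue circles (SMRL)": [(-2, 2.98), (0, 7.17e-5), (10, 9.97e-7), (20, 1.64e-7), (30, 4.99e-7)],
    "orange squares (MRL)": [(-2, 2.381), (0, 2.381), (10, 0.249), (20, 0.156), (30, 0.082)],
}


def log_decay_slope(points):
    """Ordinary least-squares slope of log10(value) against epoch.

    A slope of -0.1 means the value shrinks by one order of magnitude every
    10 epochs on average. This assumes roughly exponential decay, which the
    listed points follow only approximately.
    """
    xs = [float(e) for e, _ in points]
    ys = [math.log10(v) for _, v in points]
    n = len(xs)
    mx, my = sum(xs) / n, sum(ys) / n
    sxx = sum((x - mx) ** 2 for x in xs)
    sxy = sum((x - mx) * (y - my) for x, y in zip(xs, ys))
    return sxy / sxx


if __name__ == "__main__":
    for name, pts in VARIANCE.items():
        print(f"{name}: {log_decay_slope(pts):+.3f} decades per epoch")
```

Both fitted slopes come out negative, and the blue circles' slope is steeper than the orange squares', consistent with the listed values showing the SMRL variance falling faster.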

### Key Observations

* All four Average Gradient lines end lower than they start, indicating that gradient size shrinks over training; the yellow line is the only one that does not decrease monotonically.

* The blue line (ω<sub>i,j,e</sub>[0.96],SMRL) shows the largest decrease in gradient size, falling by more than three orders of magnitude.

* The orange (ω<sub>i,j,e</sub>[96,192],SMRL) and yellow (ω<sub>i,j,e</sub>[96,192],MRL) lines show the smallest overall decreases, each ending roughly 22 to 23 times below its starting value.

* The Gradient Variance series also decrease overall, but at very different rates: the blue circles (SMRL) fall by more than six orders of magnitude, while the orange squares (MRL) fall by a factor of roughly 30.

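* Because the two series types are read against different axes, their magnitudes are not directly comparable from the axis ranges alone, and the listed values do not show variance uniformly below gradient size (for example, the blue circles start at about 2.98 while the blue line starts at about 2.11e-4).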

### Interpretation

The chart shows how gradient size and variance evolve over training, measured in Epochs. Shrinking gradient size and variance are consistent with the model converging as training progresses. The lines compare configurations (SMRL vs. MRL, and the [0.96] vs. [96,192] parameter settings within each), and their different rates of decrease suggest different convergence behavior. The SMRL configuration with [0.96] parameters appears to converge most rapidly, while the MRL configuration with [96,192] parameters shows the smallest overall reduction and the least regular trajectory. Because the two y-axes use different scales, their ranges alone do not show whether gradient size is larger than gradient variance; that comparison requires reading the individual values. The logarithmic Gradient Size axis makes reductions spanning several orders of magnitude visible on a single plot. Tracking these curves during training can help compare configurations and judge when training has converged.
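One simple, hypothetical way to monitor convergence from such curves is to declare convergence once the average gradient size has stayed below a threshold for several consecutive checks. The class name `GradientConvergenceMonitor` and its `threshold` and `patience` parameters are illustrative assumptions, not anything taken from the chart, and a small gradient on its own does not guarantee a good solution.

```python
from collections import deque


class GradientConvergenceMonitor:
    """Hypothetical helper: reports convergence once the average gradient size
    has stayed below `threshold` for `patience` consecutive updates.

    `threshold` and `patience` are illustrative defaults, not values from the chart.
    """

    def __init__(self, threshold=1e-6, patience=3):
        self.threshold = threshold
        self.patience = patience
        # Keeps only the most recent `patience` readings.
        self.recent = deque(maxlen=patience)

    def update(self, avg_grad_size):
        """Record one reading; return True once the last `patience` readings are all below threshold."""
        self.recent.append(avg_grad_size)
        return len(self.recent) == self.patience and all(
            g < self.threshold for g in self.recent
        )


if __name__ == "__main__":
    monitor = GradientConvergenceMonitor(threshold=1e-6, patience=2)
    # Blue line (SMRL, [0.96]) values as listed; these are sampled epochs, not every epoch.
    for epoch, g in [(-2, 2.11e-4), (0, 7.17e-5), (10, 2.24e-6), (20, 1.64e-7), (30, 9.17e-8)]:
        if monitor.update(g):
            print(f"converged by epoch {epoch}")
            break
```

Fed the blue line's listed values with `patience=2`, the monitor first reports convergence at the Epoch 30 reading, because only the Epoch 20 and Epoch 30 values are below 1e-6.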