## Bar Chart: KV Cache Length Comparison

### Overview

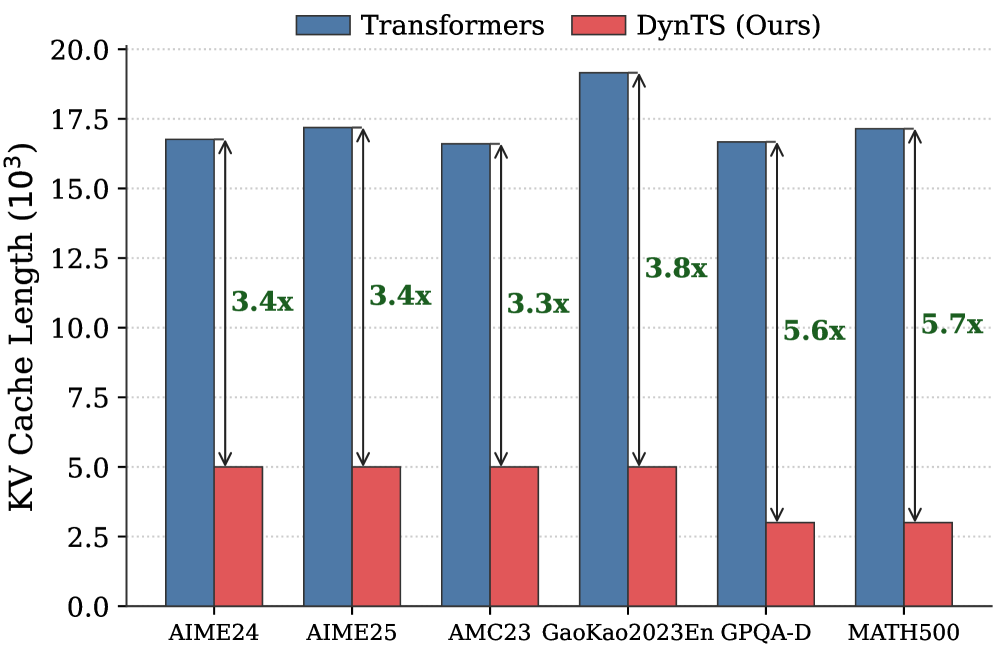

The image is a bar chart comparing the KV Cache Length (in thousands) of "Transformers" and "DynTS (Ours)" across six different datasets: AIME24, AIME25, AMC23, GaoKao2023En, GPQA-D, and MATH500. The chart highlights the reduction in KV Cache Length achieved by DynTS compared to Transformers, with numerical factors indicating the reduction magnitude above each pair of bars.

### Components/Axes

* **Title:** KV Cache Length (10^3)

* **X-axis:** Datasets (AIME24, AIME25, AMC23, GaoKao2023En, GPQA-D, MATH500)

* **Y-axis:** KV Cache Length (10^3), with a scale from 0.0 to 20.0, incrementing by 2.5.

* **Legend:** Located at the top of the chart.

  * Blue bars: Transformers

  * Red bars: DynTS (Ours)

### Detailed Analysis

The chart presents a side-by-side comparison of KV Cache Length for Transformers and DynTS across the six datasets.

* **AIME24:**

  * Transformers: Approximately 16.8 x 10^3

  * DynTS: Approximately 5.0 x 10^3

  * Reduction factor: 3.4x

* **AIME25:**

  * Transformers: Approximately 17.2 x 10^3

  * DynTS: Approximately 5.0 x 10^3

  * Reduction factor: 3.4x

* **AMC23:**

  * Transformers: Approximately 16.7 x 10^3

  * DynTS: Approximately 5.0 x 10^3

  * Reduction factor: 3.3x

* **GaoKao2023En:**

  * Transformers: Approximately 19.2 x 10^3

  * DynTS: Approximately 5.0 x 10^3

  * Reduction factor: 3.8x

* **GPQA-D:**

  * Transformers: Approximately 16.7 x 10^3

  * DynTS: Approximately 3.0 x 10^3

  * Reduction factor: 5.6x

* **MATH500:**

  * Transformers: Approximately 17.2 x 10^3

  * DynTS: Approximately 3.0 x 10^3

  * Reduction factor: 5.7x
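As a sanity check on the readings above, the reduction factor for each dataset should be roughly the Transformers value divided by the DynTS value. The sketch below recomputes those ratios from the approximate bar heights. The variable and function names are illustrative only, and the inputs are visual estimates from the chart, not exact measurements.

```python
# Approximate bar heights read off the chart, in units of 10^3.
# Each entry is (Transformers, DynTS). These are visual estimates.
kv_cache_length = {
    "AIME24": (16.8, 5.0),
    "AIME25": (17.2, 5.0),
    "AMC23": (16.7, 5.0),
    "GaoKao2023En": (19.2, 5.0),
    "GPQA-D": (16.7, 3.0),
    "MATH500": (17.2, 3.0),
}

# Reduction factors printed above each pair of bars in the chart.
reported_factor = {
    "AIME24": 3.4,
    "AIME25": 3.4,
    "AMC23": 3.3,
    "GaoKao2023En": 3.8,
    "GPQA-D": 5.6,
    "MATH500": 5.7,
}


def reduction_factor(baseline: float, reduced: float) -> float:
    """Return how many times smaller `reduced` is than `baseline`."""
    return baseline / reduced


computed_factor = {
    name: reduction_factor(baseline, reduced)
    for name, (baseline, reduced) in kv_cache_length.items()
}

for name, factor in computed_factor.items():
    print(f"{name}: computed {factor:.2f}x, chart label {reported_factor[name]}x")
```

Each recomputed ratio rounds to the factor shown on the chart, so the approximate bar heights and the printed labels are mutually consistent.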

### Key Observations

* DynTS achieves a lower KV Cache Length than Transformers on all six datasets.

* The reduction factor varies across datasets, ranging from 3.3x to 5.7x.

* The largest reduction is observed in the MATH500 dataset (5.7x), followed by GPQA-D (5.6x).

* The KV Cache Length for Transformers is relatively consistent across all datasets, hovering around 17 x 10^3, with GaoKao2023En being a slight outlier at 19.2 x 10^3.

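* The KV Cache Length for DynTS takes only two values: approximately 5.0 x 10^3 on AIME24, AIME25, AMC23, and GaoKao2023En, and approximately 3.0 x 10^3 on GPQA-D and MATH500. Its absolute spread (2.0 x 10^3) is slightly smaller than that of Transformers (2.5 x 10^3), but much larger relative to its mean.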
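The comparison of variability between the two methods depends on whether spread is measured in absolute terms or relative to the mean. The following sketch computes both from the approximate chart readings. The `spread` helper and its field names are hypothetical, introduced here only for illustration.

```python
# Approximate chart readings in units of 10^3, ordered as
# AIME24, AIME25, AMC23, GaoKao2023En, GPQA-D, MATH500.
transformers = [16.8, 17.2, 16.7, 19.2, 16.7, 17.2]
dynts = [5.0, 5.0, 5.0, 5.0, 3.0, 3.0]


def spread(values: list[float]) -> dict[str, float]:
    """Summarize the spread of a list of values (illustrative helper)."""
    lowest, highest = min(values), max(values)
    mean = sum(values) / len(values)
    return {
        "min": lowest,
        "max": highest,
        "mean": mean,
        "range": highest - lowest,                    # absolute spread
        "relative_range": (highest - lowest) / mean,  # spread relative to the mean
    }


transformers_spread = spread(transformers)
dynts_spread = spread(dynts)

for label, stats in (("Transformers", transformers_spread), ("DynTS", dynts_spread)):
    print(
        f"{label}: range {stats['range']:.1f}, "
        f"mean {stats['mean']:.2f}, "
        f"relative range {stats['relative_range']:.0%}"
    )
```

Under these readings, Transformers has the larger absolute range while DynTS has the larger range relative to its mean, which is why a statement about which method "varies more" needs to say which measure it uses.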

### Interpretation

The bar chart shows DynTS reducing KV Cache Length by a factor of 3.3x to 5.7x relative to Transformers on every dataset. The largest factors, on MATH500 and GPQA-D, come from DynTS's shorter cache on those two datasets (approximately 3.0 x 10^3 versus 5.0 x 10^3 elsewhere), not from a larger Transformers baseline, which stays near 17 x 10^3 throughout. Because the DynTS cache length takes only two distinct values, the difference between datasets may reflect a configured cache budget rather than sensitivity to the dataset, although the chart alone does not establish this. A shorter KV cache implies lower memory use during decoding. The chart reports neither accuracy nor throughput, so it supports no conclusion about those.