
## Diagram: Neural Network Layer Architecture

### Overview

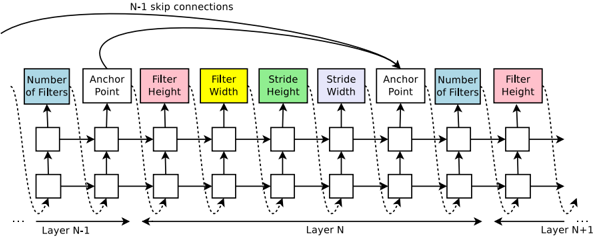

The diagram shows the architecture of three consecutive layers (N-1, N, and N+1) in a neural network, likely a convolutional neural network (CNN). It highlights the flow of information and the parameters associated with each layer: number of filters, anchor point, filter height, filter width, stride height, and stride width. Skip connections are also shown.

### Components/Axes

The diagram consists of three main sections representing Layer N-1, Layer N, and Layer N+1, arranged horizontally. Each layer contains several blocks representing different parameters. The parameters are:

* **Number of Filters:** Represented by light blue blocks.

* **Anchor Point:** Represented by green blocks.

* **Filter Height:** Represented by grey blocks, with one in red for Layer N+1.

* **Filter Width:** Represented by yellow blocks.

* **Stride Height:** Represented by teal blocks.

* **Stride Width:** Represented by purple blocks.

Dotted arrows indicate the flow of data between blocks within each layer. Curved arrows labeled "N-1 skip connections" show connections between Layer N-1 and Layer N+1. The horizontal axis represents the progression through layers.
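The per-layer parameter blocks can be pictured as a small configuration record. The following is a minimal sketch only: the class name `LayerParams`, the field names, and every numeric value are hypothetical, since the diagram gives no numbers. Treating the anchor point as a list of earlier layer indices that feed a layer through skip connections is likewise an assumption, not something the diagram states.

```python
from dataclasses import dataclass, field
from typing import List


@dataclass
class LayerParams:
    """Hypothetical container for the per-layer parameters named in the diagram."""

    num_filters: int
    filter_height: int
    filter_width: int
    stride_height: int
    stride_width: int
    # Assumption: the anchor point lists earlier layers (by index) whose
    # outputs reach this layer through skip connections.
    anchor_point: List[int] = field(default_factory=list)


# Illustrative values only; index 0 stands for Layer N-1, 1 for N, 2 for N+1.
layers = [
    LayerParams(num_filters=32, filter_height=3, filter_width=3,
                stride_height=1, stride_width=1),
    LayerParams(num_filters=64, filter_height=3, filter_width=3,
                stride_height=1, stride_width=1),
    LayerParams(num_filters=64, filter_height=5, filter_width=5,
                stride_height=2, stride_width=2, anchor_point=[0]),
]
```

Under this reading, the last entry mirrors Layer N+1 receiving a skip connection from Layer N-1.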

### Detailed Analysis

The diagram shows a consistent structure across the three layers. Each layer receives input from the previous layer and passes output to the next. The parameters within each layer appear to be processed sequentially.

* **Layer N-1:** The leftmost layer shows the flow of data through its parameter blocks.

* **Layer N:** The central layer repeats the same flow through the same set of parameter blocks.

* **Layer N+1:** The rightmost layer repeats the same flow, except that its "Filter Height" block is colored red, potentially marking a specific or highlighted parameter.

The skip connections bypass Layer N, directly connecting Layer N-1 to Layer N+1. This suggests a residual connection, a common technique in deep learning to mitigate the vanishing gradient problem.
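A skip connection of this kind can be sketched in a few lines. This is an illustration under stated assumptions, not the network in the diagram: the function names are hypothetical, the layers are stand-in functions on plain lists, and combining the two paths by element-wise addition (as in residual networks) is an assumption, since the diagram does not say how the skipped signal is merged.

```python
from typing import Callable, List

Vector = List[float]
Layer = Callable[[Vector], Vector]


def add(a: Vector, b: Vector) -> Vector:
    """Element-wise sum; both paths must have the same shape."""
    if len(a) != len(b):
        raise ValueError("skip connection requires matching shapes")
    return [x + y for x, y in zip(a, b)]


def forward_with_skip(x_prev: Vector, layer_n: Layer, layer_n_plus_1: Layer) -> Vector:
    """Feed Layer N+1 the sum of Layer N's output and Layer N-1's output."""
    h = layer_n(x_prev)          # regular path through Layer N
    merged = add(h, x_prev)      # skip path bypasses Layer N (assumed: addition)
    return layer_n_plus_1(merged)


# Stand-in layers, purely for illustration.
def double(v: Vector) -> Vector:
    return [2.0 * x for x in v]


def negate(v: Vector) -> Vector:
    return [-x for x in v]


out = forward_with_skip([1.0, 2.0], double, negate)
```

Because the output of Layer N-1 reaches Layer N+1 unchanged, a gradient can reach Layer N-1 along that path without passing through Layer N, which is the property the vanishing-gradient argument relies on.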

The diagram does not provide specific numerical values for any of the parameters. It only illustrates the conceptual flow and the types of parameters involved.

### Key Observations

* The diagram emphasizes the modularity of neural network layers.

* The skip connections suggest a residual network architecture.

* The consistent structure across layers indicates a repeating pattern of processing.

* The red "Filter Height" block in Layer N+1 might signify a specific configuration or a point of interest.

### Interpretation

This diagram illustrates a common architectural pattern in deep convolutional neural networks. The parameters (number of filters, anchor point, filter height and width, stride height and width) define the convolutional operation performed in each layer. The skip connections are a key feature of residual networks: they let gradients flow more easily through the network, which enables the training of deeper models. The diagram is a conceptual, high-level schematic. It contains no numerical data and gives no details about the network's functionality or performance; it serves as a visual aid for understanding the overall structure and data flow.
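To make the role of filter size and stride concrete, the sketch below computes the output size of a convolution along one spatial dimension using the standard relation `floor((input + 2 * padding - filter) / stride) + 1`. The function name, the `padding` argument, and the input size of 32 are assumptions for illustration; the diagram shows neither padding nor any numerical values.

```python
def conv_output_size(input_size: int, filter_size: int, stride: int, padding: int = 0) -> int:
    """Output length along one dimension of a convolution (no dilation)."""
    if stride < 1:
        raise ValueError("stride must be at least 1")
    if filter_size > input_size + 2 * padding:
        raise ValueError("filter does not fit inside the padded input")
    return (input_size + 2 * padding - filter_size) // stride + 1


# Height and width are computed independently, each from its own
# filter size and stride (values are illustrative only).
out_height = conv_output_size(32, filter_size=3, stride=2)
out_width = conv_output_size(32, filter_size=5, stride=1, padding=2)
```

Filter height pairs with stride height and filter width with stride width, which is why the diagram lists them as separate blocks. The number of filters does not affect the spatial size; it sets the number of output channels.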