## Diagram: Chain-of-Thought (CoT) vs. Chain of Continuous Thought (COCONUT)

### Overview

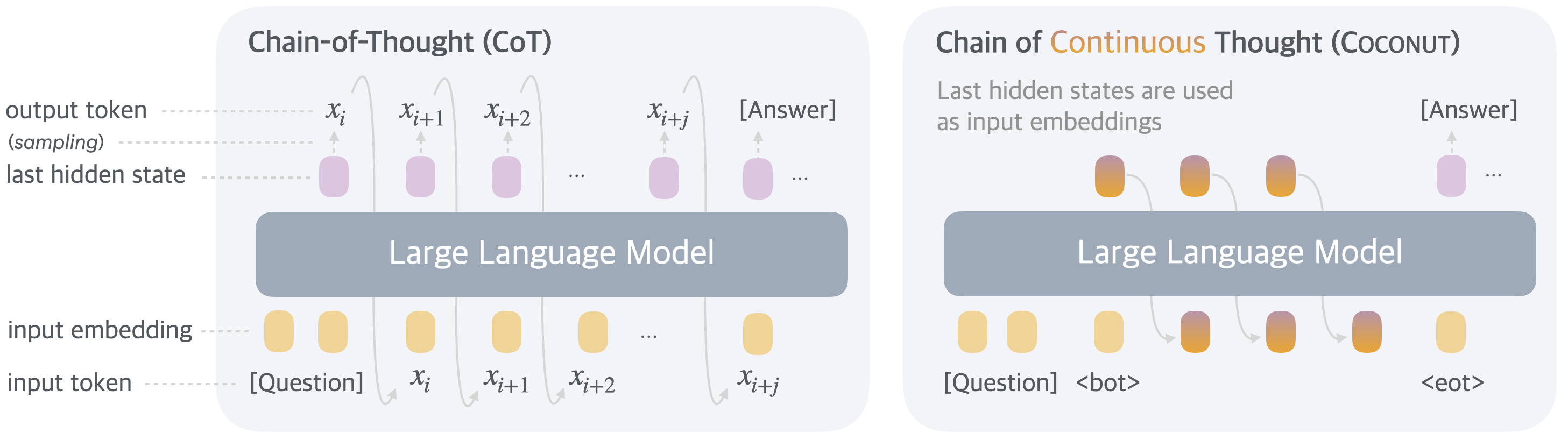

The image presents two diagrams contrasting Chain-of-Thought (CoT) with Chain of Continuous Thought (COCONUT) reasoning in language models. Each traces the flow of information through a Large Language Model, labeling the input tokens, input embeddings, last hidden states, and output tokens.

### Components/Axes

**Left Diagram: Chain-of-Thought (CoT)**

* **Title:** Chain-of-Thought (CoT)

* **Components:**

    * Input Token: \[Question], x<sub>i</sub>, x<sub>i+1</sub>, x<sub>i+2</sub>, x<sub>i+j</sub>

    * Input Embedding: Represented by yellow rounded rectangles corresponding to each input token.

    * Large Language Model: A central gray rounded rectangle.

    * Last Hidden State: Represented by purple rounded rectangles above the Large Language Model, corresponding to each input token.

    * Output Token (sampling): \[Answer]

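* **Flow:** The input tokens are fed into the Large Language Model, which produces a last hidden state at each position. Each last hidden state is used to sample the next output token, and in standard autoregressive decoding that token becomes the next input; the chain ends with \[Answer].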
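The CoT flow can be sketched as a greedy decoding loop. This is a minimal toy, not the paper's implementation: the three-entry vocabulary, the 2-dimensional embeddings, the `W_OUT` projection, and `toy_llm` (which simply sums its input embeddings in place of a transformer) are all hypothetical. What it illustrates is the data path: each last hidden state is collapsed into one token, and only that token's embedding is fed back as the next input.

```python
# Toy sketch of CoT decoding. VOCAB, EMBED, W_OUT and toy_llm are
# hypothetical stand-ins, not a real model.
VOCAB = ["[Question]", "x", "[Answer]"]
EMBED = {"[Question]": [1.0, 0.0], "x": [0.0, 1.0], "[Answer]": [0.0, 0.0]}
W_OUT = [[0.0, 0.0],   # logit row for "[Question]"
         [1.0, 0.0],   # logit row for "x"
         [0.0, 0.6]]   # logit row for "[Answer]"


def toy_llm(embeddings):
    """Hypothetical LLM stand-in: the 'last hidden state' is the element-wise sum of the inputs."""
    return [sum(col) for col in zip(*embeddings)]


def next_token(hidden):
    """Project the last hidden state to vocabulary logits and take the argmax (greedy)."""
    logits = [sum(w * h for w, h in zip(row, hidden)) for row in W_OUT]
    return VOCAB[max(range(len(VOCAB)), key=lambda i: logits[i])]


def cot_generate(prompt, max_steps=8):
    tokens = list(prompt)
    for _ in range(max_steps):
        hidden = toy_llm([EMBED[t] for t in tokens])  # embed every token, run the model
        token = next_token(hidden)                    # hidden state collapses to one token
        tokens.append(token)                          # ...which is fed back as the next input
        if token == "[Answer]":
            break
    return tokens


print(cot_generate(["[Question]"]))
# ['[Question]', 'x', 'x', '[Answer]']
```

Greedy argmax is used here only to keep the trace deterministic; a real decoder may sample instead.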

**Right Diagram: Chain of Continuous Thought (COCONUT)**

* **Title:** Chain of Continuous Thought (COCONUT)

* **Text Description:** "Last hidden states are used as input embeddings"

* **Components:**

    * Input Token: \[Question], \<bot>, \<eot>

    * Input Embedding: Represented by gradient (yellow to purple) rounded rectangles corresponding to each input token.

    * Large Language Model: A central gray rounded rectangle.

    * Last Hidden State: Represented by gradient (yellow to purple) rounded rectangles above the Large Language Model, corresponding to each input token.

    * Output Token (sampling): \[Answer]

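* **Flow:** The input tokens are fed into the Large Language Model, which produces last hidden states. Between \<bot> and \<eot>, each last hidden state is fed back directly as the next input embedding instead of being decoded into a token; the output token \[Answer] is sampled only at the end.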
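A matching toy sketch of COCONUT's continuous-thought step, under similar assumptions: `toy_llm` is a hypothetical stand-in (here `tanh` of the summed inputs, which keeps the vectors bounded), and `continuous_thoughts` and `num_thoughts` are illustrative names, not taken from the source. The key line is the append: the hidden state enters the input sequence as-is, with no projection to the vocabulary and no sampling.

```python
import math


def toy_llm(embeddings):
    """Hypothetical LLM stand-in: returns a 'last hidden state' computed
    as tanh of the element-wise sum of all input embeddings."""
    return [math.tanh(sum(col)) for col in zip(*embeddings)]


def continuous_thoughts(input_embeddings, num_thoughts):
    """Run num_thoughts latent steps, feeding each last hidden state back in."""
    seq = list(input_embeddings)
    thoughts = []
    for _ in range(num_thoughts):
        hidden = toy_llm(seq)   # last hidden state at the current position
        seq.append(hidden)      # reused directly as the next input embedding
        thoughts.append(hidden)
    return seq, thoughts


# Hypothetical embeddings for [Question] and <bot>, followed by three thoughts.
seq, thoughts = continuous_thoughts([[1.0, 0.0], [0.0, 1.0]], num_thoughts=3)
print(len(seq))  # 5: two token embeddings plus three continuous thoughts
```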

### Detailed Analysis or Content Details

**Chain-of-Thought (CoT):**

* The input tokens \[Question], x<sub>i</sub>, x<sub>i+1</sub>, x<sub>i+2</sub>, and x<sub>i+j</sub> are converted into input embeddings (yellow rounded rectangles).

* These embeddings are fed into the Large Language Model (gray rounded rectangle).

* The Large Language Model generates last hidden states (purple rounded rectangles) for each input token.

* At each step, the last hidden state is used to sample the next output token, which is fed back as the next input token; the chain ends with the output token \[Answer].
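The "Output Token (sampling)" label refers to turning a last hidden state into a token. A common way to do this, sketched here with hypothetical weights, is to project the hidden state to one logit per vocabulary entry, apply a temperature-scaled softmax, and draw from the resulting distribution; greedy decoding is the low-temperature limit. `sample_token`, `VOCAB`, and `W_OUT` are illustrative, not taken from the source.

```python
import math
import random

VOCAB = ["[Question]", "x", "[Answer]"]        # hypothetical vocabulary
W_OUT = [[0.0, 0.0], [1.0, 0.0], [0.0, 0.6]]   # hypothetical output projection


def sample_token(hidden, temperature=1.0, rng=random):
    """Sample one token from the softmax over temperature-scaled logits."""
    logits = [sum(w * h for w, h in zip(row, hidden)) / temperature for row in W_OUT]
    top = max(logits)
    exps = [math.exp(z - top) for z in logits]  # subtract the max for numerical stability
    total = sum(exps)
    probs = [e / total for e in exps]
    token = rng.choices(VOCAB, weights=probs, k=1)[0]
    return token, probs


rng = random.Random(0)  # seeded for reproducibility
token, probs = sample_token([1.0, 2.0], temperature=1.0, rng=rng)
print(token, [round(p, 3) for p in probs])
```

Lowering `temperature` sharpens the distribution toward the highest-logit token.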

**Chain of Continuous Thought (COCONUT):**

* The input tokens \[Question], \<bot>, and \<eot> are initially converted into input embeddings (yellow rounded rectangles).

* These embeddings are fed into the Large Language Model (gray rounded rectangle).

* The Large Language Model generates last hidden states (gradient rounded rectangles) for each input token.

* The last hidden states are then used as input embeddings for the next step, creating a continuous chain of thought.

* The final output token is \[Answer].
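Putting the COCONUT steps together, a toy end-to-end pass might look like the sketch below. It assumes, consistent with the figure's labels, that the model reads `[Question]` and `<bot>` as ordinary tokens, runs a fixed number of continuous thoughts, reads `<eot>`, and then decodes `[Answer]` as a normal token. The embeddings, weights, `toy_llm`, `coconut_answer`, and `num_thoughts` are all hypothetical.

```python
import math

# Hypothetical embeddings for the question and special tokens.
EMBED = {"[Question]": [1.0, 0.0], "<bot>": [0.0, 1.0], "<eot>": [0.0, -1.0]}
VOCAB = ["x", "[Answer]"]                 # hypothetical output vocabulary
W_OUT = [[-1.0, 0.0], [1.0, 1.0]]         # hypothetical output projection


def toy_llm(embeddings):
    """Hypothetical LLM stand-in: tanh of the element-wise sum of the inputs."""
    return [math.tanh(sum(col)) for col in zip(*embeddings)]


def greedy_token(hidden):
    """Language mode: project the hidden state to logits and take the argmax."""
    logits = [sum(w * h for w, h in zip(row, hidden)) for row in W_OUT]
    return VOCAB[max(range(len(VOCAB)), key=lambda i: logits[i])]


def coconut_answer(num_thoughts):
    layout = ["[Question]", "<bot>"]                 # ordinary tokens up to <bot>
    inputs = [EMBED[t] for t in layout]
    for _ in range(num_thoughts):                    # continuous-thought segment
        inputs.append(toy_llm(inputs))               # hidden state -> next input embedding
        layout.append("latent")
    inputs.append(EMBED["<eot>"])                    # close the continuous-thought segment
    layout.append("<eot>")
    return layout, greedy_token(toy_llm(inputs))     # decode the answer as a token


layout, answer = coconut_answer(num_thoughts=2)
print(layout, answer)
# ['[Question]', '<bot>', 'latent', 'latent', '<eot>'] [Answer]
```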

### Key Observations

* **CoT:** Decodes each last hidden state into a discrete output token; only that token is passed on to the next step.

* **COCONUT:** Feeds each last hidden state back as the next input embedding, so the reasoning chain stays in continuous space.

* The COCONUT diagram explicitly states that "Last hidden states are used as input embeddings."

* The input embeddings in COCONUT are drawn as a yellow-to-purple gradient, combining the input-embedding color (yellow) with the hidden-state color (purple) to show that hidden states are reused as embeddings.
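The contrast in these observations can be made concrete. In this hypothetical two-token example, two different hidden states both decode to the same token, so CoT hands the model identical next inputs, while COCONUT keeps them distinct. All vectors, weights, and function names are made up for illustration; the sketch shows only the information bottleneck of the discrete step, not any measured effect.

```python
VOCAB = ["x", "y"]                               # hypothetical two-token vocabulary
EMBED = {"x": [1.0, 0.0], "y": [0.0, 1.0]}       # hypothetical token embeddings
W_OUT = [[1.0, 0.0], [0.0, 1.0]]                 # hypothetical output projection


def decode(hidden):
    """Greedy decoding: keep only the index of the largest logit."""
    logits = [sum(w * h for w, h in zip(row, hidden)) for row in W_OUT]
    return VOCAB[max(range(len(VOCAB)), key=lambda i: logits[i])]


def cot_next_input(hidden):
    """CoT: the next input is the embedding of the decoded token."""
    return EMBED[decode(hidden)]


def coconut_next_input(hidden):
    """COCONUT: the next input is the hidden state itself."""
    return list(hidden)


h_a, h_b = [0.9, 0.1], [0.6, 0.4]                # two different hidden states
print(cot_next_input(h_a) == cot_next_input(h_b))          # True: both collapse to "x"
print(coconut_next_input(h_a) == coconut_next_input(h_b))  # False: the difference survives
```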

### Interpretation

The diagrams illustrate the key difference between Chain-of-Thought (CoT) and Chain of Continuous Thought (COCONUT). Both generate step by step, but they differ in what is passed from one step to the next. In CoT, each last hidden state is decoded into a single discrete token, and only that token's embedding is fed back, so every reasoning step must be expressed in language. In COCONUT, the last hidden state itself is fed back as the next input embedding, so the reasoning between \<bot> and \<eot> stays in the model's continuous representation space instead of being collapsed to one vocabulary item at each step. The gradient colors in the COCONUT diagram emphasize this reuse of hidden states as input embeddings. The \<bot> and \<eot> tokens mark the beginning and end of this continuous-thought segment, after which the model returns to producing ordinary tokens such as \[Answer].