
## Diagram: Distributed Training Data Flow

### Overview

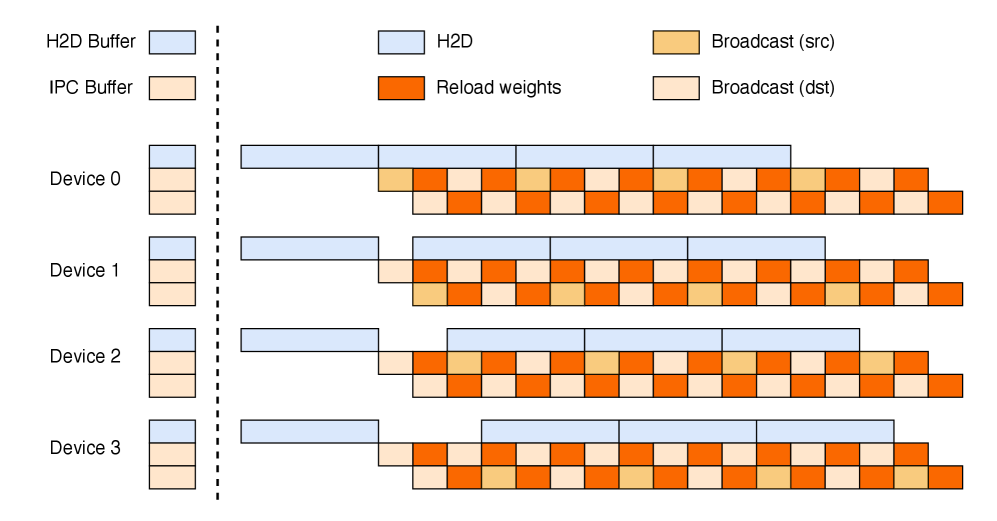

The image is a diagram of the data flow in a distributed training scenario, likely involving four devices (Device 0-3), host-to-device (H2D) data transfers, and inter-device communication. It shows a sequence of operations: H2D data transfer, weight reloading, and broadcast operations (source and destination).

### Components/Axes

The diagram is structured with the following components:

* **Vertical Axis:** Represents the devices involved in the distributed training process (Device 0, Device 1, Device 2, and Device 3), along with the H2D Buffer and the IPC Buffer.

* **Horizontal Axis:** Represents the sequence of operations or time steps.

* **Legend (Top-Right):**

    * White: H2D (Host to Device)

    * Yellow: Broadcast (src), the broadcast from the source

    * Orange: Reload weights

    * Light Yellow: Broadcast (dst), the broadcast to the destination

* **Vertical Dashed Line:** Separates the H2D Buffer and IPC Buffer from the Devices.

### Detailed Analysis

The diagram shows the following sequence of operations for each device:

* **H2D Buffer:** A white block representing data transfer from the host to the device. The length of the block is approximately the same for all devices.

* **IPC Buffer:** A white block, the same color the legend assigns to H2D. The name suggests an inter-process communication buffer rather than a host-to-device transfer. The length of the block is approximately the same for all devices.

* **Device 0:**

    * H2D: A white block, approximately 2 units long.

    * Reload weights: An orange block, approximately 1 unit long.

    * Broadcast (src): A yellow block, approximately 2 units long.

    * Broadcast (dst): A light yellow block, approximately 2 units long.

* **Device 1:**

    * H2D: A white block, approximately 2 units long.

    * Reload weights: An orange block, approximately 1 unit long.

    * Broadcast (src): A yellow block, approximately 2 units long.

    * Broadcast (dst): A light yellow block, approximately 2 units long.

* **Device 2:**

    * H2D: A white block, approximately 2 units long.

    * Reload weights: An orange block, approximately 1 unit long.

    * Broadcast (src): A yellow block, approximately 2 units long.

    * Broadcast (dst): A light yellow block, approximately 2 units long.

* **Device 3:**

    * H2D: A white block, approximately 2 units long.

    * Reload weights: An orange block, approximately 1 unit long.

    * Broadcast (src): A yellow block, approximately 2 units long.

    * Broadcast (dst): A light yellow block, approximately 2 units long.

The sequence of operations (H2D, Reload weights, Broadcast (src), Broadcast (dst)) is consistent across all devices. The relative lengths of the blocks representing each operation are also consistent across all devices.
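The per-device sequence described above can be written down as a small data structure. The following is a minimal Python sketch, not taken from the diagram's source: the names `OPS`, `Block`, and `build_timeline` are hypothetical, the lengths are the approximate relative units listed above, and it assumes the four operations run back to back starting at time 0 on every device, which the diagram may not guarantee.

```python
from dataclasses import dataclass
from typing import List

# Approximate relative block lengths read off the diagram.
# These are arbitrary units, not measured durations.
OPS = [
    ("H2D", 2),
    ("Reload weights", 1),
    ("Broadcast (src)", 2),
    ("Broadcast (dst)", 2),
]


@dataclass(frozen=True)
class Block:
    device: int
    op: str
    start: int
    end: int


def build_timeline(num_devices: int = 4) -> List[Block]:
    """Lay the four operations end to end for every device (assumed ordering)."""
    blocks = []
    for device in range(num_devices):
        t = 0
        for op, length in OPS:
            blocks.append(Block(device, op, t, t + length))
            t += length
    return blocks


timeline = build_timeline()
for block in timeline:
    print(f"Device {block.device}: {block.op:<16} [{block.start}, {block.end})")
```

Under these assumptions every device ends at the same relative time, which mirrors the observation that the block lengths are consistent across devices.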

### Key Observations

* The diagram illustrates a synchronous or semi-synchronous distributed training process, where all devices perform the same sequence of operations.

* The H2D transfer appears to be a necessary initial step for each device.

* The "Reload weights" operation likely refers to updating the model weights on each device.

* The "Broadcast (src)" and "Broadcast (dst)" operations suggest a communication pattern where weights or gradients are broadcast from one or more source devices to other destination devices.

* The diagram gives no absolute data sizes or durations; the block lengths indicate only the relative extent of each operation.

### Interpretation

This diagram likely represents one step of a distributed deep learning training process. The H2D transfer brings the model or data onto the device, the reload weights step updates the model parameters, and the broadcast operations synchronize the model across devices so that training can proceed in parallel. The identical sequence of operations on every device suggests a coordinated process. The diagram conveys the flow of data and operations visually rather than quantitatively, and it highlights the communication overhead of distributed training, specifically the data transfers and broadcasts. The IPC Buffer suggests that some inter-process communication is also involved.
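One possible reading of a device showing both a Broadcast (src) and a Broadcast (dst) block is that each device acts as the source for its own portion of the weights and as a destination for everyone else's. The pure-Python simulation below sketches that reading only; the sharding scheme and the helper names `h2d` and `broadcast` are assumptions for illustration and are not confirmed by the diagram.

```python
def h2d(host_shards):
    """Hypothetical H2D step: device i receives shard i from the host."""
    return [{rank: list(shard)} for rank, shard in enumerate(host_shards)]


def broadcast(devices, src):
    """Device `src` sends its own shard; every other device stores a copy."""
    payload = devices[src][src]
    for rank, dev in enumerate(devices):
        if rank != src:
            dev[src] = list(payload)


# Four made-up weight shards held on the host, one per device.
host_shards = [[0.0, 0.1], [1.0, 1.1], [2.0, 2.1], [3.0, 3.1]]

devices = h2d(host_shards)

# Each device takes one turn as the broadcast source and is a
# destination during the other three turns.
for src in range(len(devices)):
    broadcast(devices, src)

# After all broadcasts, every device holds every shard.
for rank, dev in enumerate(devices):
    print(f"Device {rank} holds shards {sorted(dev)}")
```

In a real system the copies would go through a collective communication library rather than Python lists, but the source and destination roles would be the same under this interpretation.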