
## Pie Charts: Error Analysis of LLM Responses

### Overview

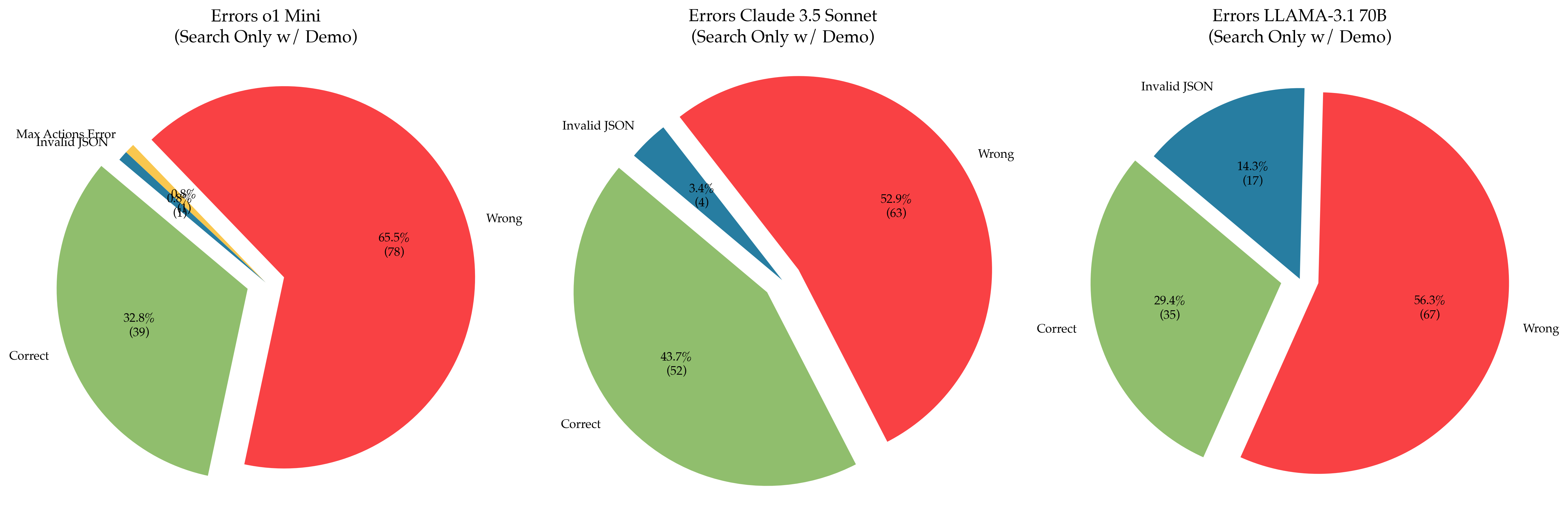

The image presents three pie charts, each showing the outcome distribution for a different large language model (LLM): `o1 Mini`, `Claude 3.5 Sonnet`, and `LLAMA-3.1 70B`. Each chart is titled "Errors [Model Name] (Search Only w/ Demo)". The charts sort responses into "Correct", "Wrong", "Invalid JSON", and "Max Actions Error" (present only in the first chart). The title suffix indicates that the data comes from a search-only configuration run with a demonstration ("w/ Demo").

### Components/Axes

Each chart is a pie divided into segments, one per outcome category. Each segment is labeled with its percentage and its count. There are no axes; a segment's size represents that category's share of the model's total responses.

* **Chart 1 (o1 Mini):** Correct, Wrong, Invalid JSON, Max Actions Error

* **Chart 2 (Claude 3.5 Sonnet):** Correct, Wrong, Invalid JSON

* **Chart 3 (LLAMA-3.1 70B):** Correct, Wrong, Invalid JSON

### Detailed Analysis

**Chart 1: Errors o1 Mini (Search Only w/ Demo)**

* **Correct:** 32.8% (39) - Light Green segment, positioned at the bottom-left.

* **Wrong:** 65.5% (78) - Red segment, occupying the majority of the chart, positioned at the top-right.

* **Invalid JSON:** 1.0% (1) - Dark Blue segment, small segment at the top.

* **Max Actions Error:** 0.6% (1) - Yellow segment, small segment at the bottom-right.

**Chart 2: Errors Claude 3.5 Sonnet (Search Only w/ Demo)**

* **Correct:** 43.7% (52) - Light Green segment, positioned at the bottom.

* **Wrong:** 52.9% (63) - Red segment, occupying the majority of the chart, positioned at the top.

* **Invalid JSON:** 3.4% (4) - Dark Blue segment, small segment at the top-left.

**Chart 3: Errors LLAMA-3.1 70B (Search Only w/ Demo)**

* **Correct:** 29.4% (35) - Light Green segment, positioned at the bottom-left.

* **Wrong:** 56.3% (67) - Red segment, occupying the majority of the chart, positioned at the top-right.

* **Invalid JSON:** 14.3% (17) - Dark Blue segment, positioned at the top.
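The percentages can be recomputed from the counts as a sanity check. The following is a minimal Python sketch that uses the counts transcribed from the bullets above; the dictionary layout and the helper name `shares` are illustrative choices, not something taken from the figure.

```python
# Counts transcribed from the three pie charts (category -> number of responses).
counts = {
    "o1 Mini": {"Correct": 39, "Wrong": 78, "Invalid JSON": 1, "Max Actions Error": 1},
    "Claude 3.5 Sonnet": {"Correct": 52, "Wrong": 63, "Invalid JSON": 4},
    "LLAMA-3.1 70B": {"Correct": 35, "Wrong": 67, "Invalid JSON": 17},
}


def shares(category_counts):
    """Return (total, {category: percentage rounded to one decimal place})."""
    total = sum(category_counts.values())
    percentages = {
        category: round(100 * count / total, 1)
        for category, count in category_counts.items()
    }
    return total, percentages


for model, category_counts in counts.items():
    total, percentages = shares(category_counts)
    print(f"{model}: n={total}, {percentages}")
```

With these counts every chart totals 119 responses. The recomputed shares match the labels except for the two single-response segments in the first chart, which both come out at about 0.8% rather than the 1.0% and 0.6% read from the image.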

### Key Observations

* All three models exhibit a higher percentage of "Wrong" responses than "Correct" responses.

* `o1 Mini` has the highest percentage of "Wrong" responses (65.5%).

* `Claude 3.5 Sonnet` has the highest percentage of "Correct" responses (43.7%).

* `LLAMA-3.1 70B` has the highest percentage of "Invalid JSON" responses (14.3%).

* `o1 Mini` is the only model with a "Max Actions Error" (a single occurrence).

### Interpretation

The data suggests that, in this search-only setting, none of the LLMs answers correctly in a majority of cases. "Wrong" is the largest category for every model, ranging from 52.9% to 65.5% of responses. The "Invalid JSON" errors, most frequent for `LLAMA-3.1 70B` (14.3%), suggest that this model has trouble formatting its output correctly. The single "Max Actions Error" for `o1 Mini` might indicate a limitation in handling complex search queries or action sequences, although one occurrence is too few to draw a conclusion.

The higher "Correct" rate of `Claude 3.5 Sonnet` (43.7%) suggests it performs better than the other two models in this specific scenario. Even so, it still produces more "Wrong" responses (52.9%) than "Correct" ones.

All charts are labeled "(Search Only w/ Demo)", which matters for interpretation. The results are specific to one use case (search) and one demonstration setting, and may not generalize to other tasks or real-world applications. The demo setting may also introduce biases or limitations that affect the error rates. The counts shown alongside the percentages are per-category tallies; within each chart they sum to 119, which is the sample size per model and is what matters when assessing the statistical significance of the observed differences.
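As a rough illustration of such a significance check, the sketch below runs a pooled two-proportion z-test on the "Correct" counts of `Claude 3.5 Sonnet` (52 of 119) and `o1 Mini` (39 of 119). It assumes the two sets of responses are independent samples. If both models were evaluated on the same 119 questions, which the figure does not state, a paired test such as McNemar's would be the more appropriate choice. The function name is hypothetical.

```python
import math


def two_proportion_z_test(x1, n1, x2, n2):
    """Two-sided pooled z-test for a difference between two proportions.

    Assumes independent samples; returns (z statistic, p-value).
    """
    p1, p2 = x1 / n1, x2 / n2
    pooled = (x1 + x2) / (n1 + n2)
    standard_error = math.sqrt(pooled * (1 - pooled) * (1 / n1 + 1 / n2))
    z = (p1 - p2) / standard_error
    # Two-sided p-value from the standard normal distribution, via erfc.
    p_value = math.erfc(abs(z) / math.sqrt(2))
    return z, p_value


# "Correct" counts: Claude 3.5 Sonnet (52/119) vs. o1 Mini (39/119).
z, p = two_proportion_z_test(52, 119, 39, 119)
print(f"z = {z:.2f}, p = {p:.3f}")
```

Under these assumptions the z statistic stays below 1.96, so the gap in "Correct" rates between these two models does not reach the conventional 5% significance level at this sample size.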