## Bar Chart: Comparison of Overall Accuracy for Three Methods

### Overview

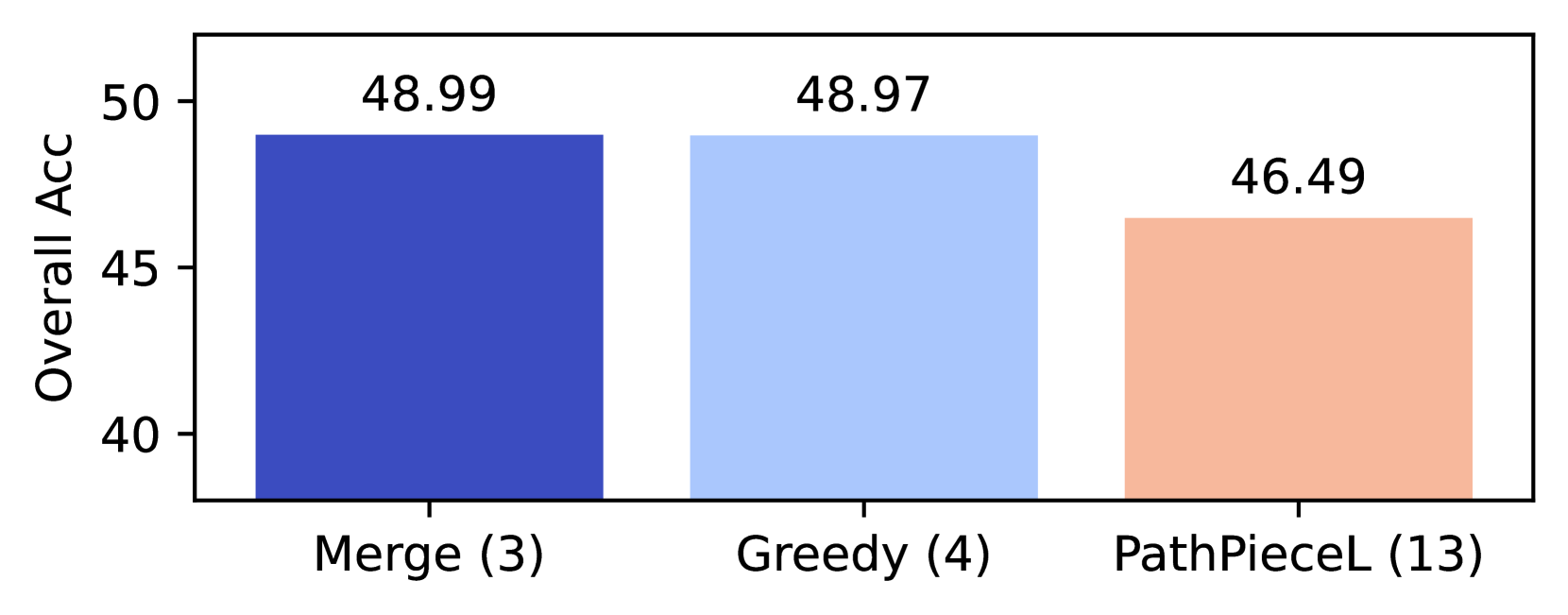

The image displays a vertical bar chart comparing the "Overall Acc" (Overall Accuracy) of three distinct methods or algorithms. The chart is presented on a white background with a simple black border. The visual design uses color-coded bars to differentiate between the methods, with the exact accuracy value annotated above each bar.

### Components/Axes

* **Y-Axis (Vertical):**

    * **Label:** "Overall Acc"

    * **Scale:** Linear scale ranging from 40 to 50.

    * **Major Tick Marks:** At 40, 45, and 50.

* **X-Axis (Horizontal):**

    * **Categories:** Three distinct methods are listed.

    * **Labels (from left to right):**

        1. "Merge (3)"

        2. "Greedy (4)"

        3. "PathPieceL (13)"

    * The numbers in parentheses (3, 4, 13) are part of the category labels but their specific meaning (e.g., parameter count, iteration number, variant ID) is not defined within the chart itself.

* **Data Series (Bars):**

    * **Bar 1 (Left):** Dark blue bar corresponding to "Merge (3)".

    * **Bar 2 (Center):** Light blue bar corresponding to "Greedy (4)".

    * **Bar 3 (Right):** Peach/light orange bar corresponding to "PathPieceL (13)".

* **Data Labels:** The precise numerical value of "Overall Acc" is displayed directly above each bar.
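For readers who want to recreate the figure, a minimal matplotlib sketch follows. The labels, values, axis range, and tick positions come from the chart; the hex colors, figure size, function name, and output filename are assumptions chosen only to approximate the description, not the settings of the original plot.

```python
# Data read from the chart's category labels and bar annotations.
LABELS = ["Merge (3)", "Greedy (4)", "PathPieceL (13)"]
VALUES = [48.99, 48.97, 46.49]
# Assumed hex codes approximating "dark blue", "light blue", and "peach".
COLORS = ["#1f3b73", "#9ecae1", "#fdd0a2"]


def plot_overall_acc(path="overall_acc.png"):
    """Draw an approximation of the bar chart and save it to `path`."""
    # Imported lazily so the data above is usable without matplotlib installed.
    import matplotlib
    matplotlib.use("Agg")  # render to a file; no display required
    import matplotlib.pyplot as plt

    fig, ax = plt.subplots(figsize=(4, 3))
    bars = ax.bar(LABELS, VALUES, color=COLORS)
    ax.bar_label(bars, fmt="%.2f")   # value annotation above each bar
    ax.set_ylabel("Overall Acc")
    ax.set_ylim(40, 50)              # the axis starts at 40, not 0
    ax.set_yticks([40, 45, 50])
    fig.tight_layout()
    fig.savefig(path)
    plt.close(fig)
    return path
```

Calling `plot_overall_acc()` writes a PNG to the working directory. `Axes.bar_label` requires matplotlib 3.4 or later.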

### Detailed Analysis

The chart presents a direct comparison of a single performance metric across three items.

1. **Merge (3):**

    * **Color:** Dark blue.

    * **Reported Value:** 48.99

    * **Visual Position:** The tallest bar, located on the far left. Its top aligns just below the 50 mark on the y-axis.

2. **Greedy (4):**

    * **Color:** Light blue.

    * **Reported Value:** 48.97

    * **Visual Position:** The middle bar. Its height is visually almost identical to the "Merge (3)" bar, with a negligible difference of 0.02 in the reported value.

3. **PathPieceL (13):**

    * **Color:** Peach/light orange.

    * **Reported Value:** 46.49

    * **Visual Position:** The shortest bar, located on the far right. It is noticeably shorter than the other two bars, sitting between the 45 and 50 marks on the y-axis.

### Key Observations

* **Performance Cluster:** The "Merge (3)" and "Greedy (4)" methods form a top-performing cluster with nearly indistinguishable accuracy (48.99 vs. 48.97). The difference is 0.02 points, or two hundredths of a point.

* **Performance Drop:** The "PathPieceL (13)" method scores clearly lower than the other two. At 46.49, it trails "Merge (3)" by 2.50 points and "Greedy (4)" by 2.48 points.

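* **Label Notation:** All category labels include a number in parentheses. The numbers increase from left to right (3, 4, 13) while accuracy decreases (48.99, 48.97, 46.49), so the two move in opposite directions. This pattern is consistent with the numbers being a rank within a larger set of methods, but the chart itself does not confirm that reading.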
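The gaps quoted in these observations can be checked with a few lines of arithmetic. The sketch below uses only the three values annotated on the chart; the dictionary and function names are illustrative, and the unit is assumed to be accuracy points.

```python
from itertools import combinations

# Values annotated above the bars in the chart.
ACC = {"Merge (3)": 48.99, "Greedy (4)": 48.97, "PathPieceL (13)": 46.49}


def pairwise_gaps(acc):
    """Return {(a, b): acc[a] - acc[b]} for every pair, rounded to 2 decimals."""
    return {(a, b): round(acc[a] - acc[b], 2) for a, b in combinations(acc, 2)}


gaps = pairwise_gaps(ACC)
for (a, b), gap in gaps.items():
    print(f"{a} vs. {b}: {gap:+.2f} points")
```

Rounding to two decimals matches the precision of the chart's labels and hides floating-point noise in the subtraction.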

### Interpretation

This chart likely comes from a technical or research context, comparing the effectiveness of different algorithms, models, or strategies on a common task where "Overall Accuracy" is the primary success metric.

* **What the data suggests:** The "Merge" and "Greedy" approaches are essentially equivalent in terms of final accuracy for this specific evaluation. Choosing between them would depend on factors not shown here, such as computational cost, speed, memory usage, or performance on secondary metrics. The "PathPieceL" method may offer advantages that this chart does not capture, but it is less effective according to this accuracy measure.

* **How elements relate:** The chart is designed for quick, at-a-glance comparison. The use of distinct colors and direct value labeling eliminates ambiguity in reading the results. The close pairing of the first two bars visually emphasizes their similarity, while the gap to the third bar highlights its inferior performance on this metric.

* **Notable anomalies:** The most striking feature is how small the gap between the first two methods is. A difference of 0.02 points is likely to fall within run-to-run noise in many experimental settings, but the chart reports no variance or significance test, so it cannot confirm that the two methods are functionally identical for this task. The meaning of the parenthetical numbers is the largest open question; without a caption or accompanying text, any reading is speculative (e.g., they could denote a rank, model size, number of training epochs, or a hyperparameter setting).
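One caveat when reading the gap to the third bar: the y-axis runs from 40 to 50, so bar heights on the page are not proportional to the values themselves. The sketch below quantifies this, assuming each bar is drawn from the axis minimum of 40 (the usual behavior when axis limits are truncated, but not something the image description states explicitly). The helper name is illustrative.

```python
Y_MIN = 40.0  # lower bound of the y-axis as described for this chart


def drawn_height(value, baseline=Y_MIN):
    """Visible bar height when the axis starts at `baseline` instead of 0."""
    return value - baseline


merge, pathpiece = 48.99, 46.49

# Ratio of the underlying values (what a zero-based axis would show).
true_ratio = merge / pathpiece
# Ratio of the visible bar heights on the truncated axis.
drawn_ratio = drawn_height(merge) / drawn_height(pathpiece)

print(f"value ratio:  {true_ratio:.3f}")
print(f"height ratio: {drawn_ratio:.3f}")
```

Under that assumption the "Merge (3)" bar appears roughly 39% taller than the "PathPieceL (13)" bar, while the underlying value is only about 5% higher.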