## Diagram: AI Navigation and Perception Enhancement System

### Overview

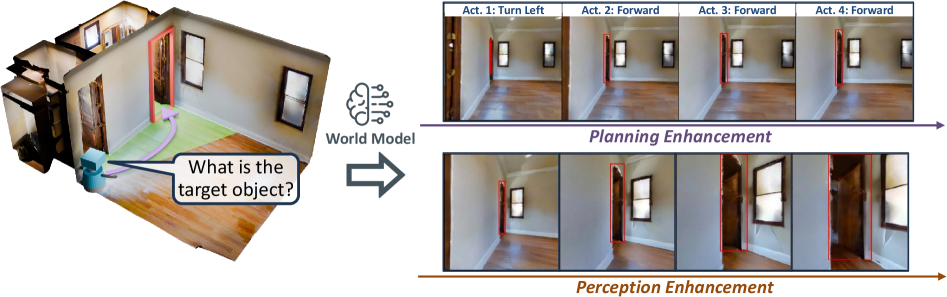

The image is a technical diagram illustrating a robotic or AI agent's process for navigating an indoor environment. It shows a system that uses a "World Model" to enhance both planning (action sequence generation) and perception (visual understanding) in response to a query about a target object. The diagram flows from left to right, starting with an initial state and query, processing through a central model, and outputting two enhanced sequences.

### Components

The diagram is segmented into three main regions:

1. **Left Region (Initial State & Query):**

   * A 3D isometric floor plan of a room with wooden flooring, white walls, and multiple doorways/windows.

   * A small, blue, wheeled robot is positioned near the bottom-left corner.

   * A light green, semi-transparent path with a purple arrow originates from the robot, curves through the room, and points towards a doorway outlined in red.

   * A speech bubble emanates from the robot containing the text: **"What is the target object?"**

2. **Central Region (Processing Unit):**

   * An icon depicting a brain with circuit-like connections, labeled **"World Model"**.

   * A large, gray, right-pointing arrow connects the left region to the right region, passing through the "World Model" icon.

3. **Right Region (Enhanced Outputs):**

   * This region is divided into two horizontal sequences, each containing four image frames.

   * **Top Sequence (Planning Enhancement):**

     * A purple arrow runs beneath the frames, pointing right, labeled **"Planning Enhancement"**.

     * Each frame is labeled with an action:

       * Frame 1: **"Act. 1: Turn Left"**

       * Frame 2: **"Act. 2: Forward"**

       * Frame 3: **"Act. 3: Forward"**

       * Frame 4: **"Act. 4: Forward"**

     * The frames show the robot's first-person view as it progresses through the room. In each step, a red outline highlights the doorway or door frame the robot is approaching or moving through.

   * **Bottom Sequence (Perception Enhancement):**

     * A brown/orange arrow runs beneath the frames, pointing right, labeled **"Perception Enhancement"**.

     * The four frames also show the robot's first-person view, but captured at different moments from those in the top sequence.

     * Red outlines highlight different architectural features (door frames, window frames) in each frame, suggesting that the system is identifying and segmenting key environmental structures.
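The diagram does not say how the red outlines are produced or how the top sequence keeps one outline attached to the same doorway from frame to frame. One common way to do that is greedy matching by intersection-over-union (IoU). The sketch below assumes each outline is an axis-aligned box with a text label; the label set `STRUCTURAL`, the threshold `min_iou`, and all coordinates are hypothetical.

```python
from typing import Optional

Box = tuple[float, float, float, float]  # (x_min, y_min, x_max, y_max) in pixels
Outline = tuple[str, Box]                # (label, box)

# Assumed label set for navigation-relevant structure (illustrative only).
STRUCTURAL = {"doorway", "door_frame", "window_frame"}

def iou(a: Box, b: Box) -> float:
    """Intersection-over-union of two axis-aligned boxes."""
    ix0, iy0 = max(a[0], b[0]), max(a[1], b[1])
    ix1, iy1 = min(a[2], b[2]), min(a[3], b[3])
    inter = max(0.0, ix1 - ix0) * max(0.0, iy1 - iy0)
    area_a = (a[2] - a[0]) * (a[3] - a[1])
    area_b = (b[2] - b[0]) * (b[3] - b[1])
    union = area_a + area_b - inter
    return inter / union if union > 0 else 0.0

def track(prev: Box, outlines: list[Outline], min_iou: float = 0.3) -> Optional[Outline]:
    """Return the structural outline that best overlaps the previous target box, if any."""
    candidates = [o for o in outlines if o[0] in STRUCTURAL]
    best = max(candidates, key=lambda o: iou(prev, o[1]), default=None)
    if best is None or iou(prev, best[1]) < min_iou:
        return None  # no structural outline overlaps enough to be the same target
    return best

# Made-up outlines for two consecutive frames; the doorway box grows as the agent approaches.
target: Box = (100, 50, 200, 250)
next_frame = [("window_frame", (10, 20, 60, 100)), ("doorway", (90, 40, 210, 260))]
print(track(target, next_frame))
# → ('doorway', (90, 40, 210, 260))
```

Greedy IoU matching is a deliberately simple choice; a real system might instead use learned instance embeddings or the world model's own predicted geometry.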

### Detailed Analysis

* **Flow and Relationship:** The diagram depicts a left-to-right input-output flow. The agent's query ("What is the target object?") and its initial state (position and path in the 3D map) are fed into the "World Model," which then generates two parallel enhancements:

  1. **Planning Enhancement:** A discrete, four-step action plan (Turn Left, then Forward three times) that moves the agent toward the area of interest (the red-outlined doorway the initial path points to).

  2. **Perception Enhancement:** A sequence of visual frames in which relevant environmental features (doorways, windows) are highlighted with red outlines, indicating improved scene understanding for navigation.

* **Spatial Grounding:** The red outline in the initial 3D map (left region) corresponds to the target doorway. In the "Planning Enhancement" sequence, the red outline consistently marks the doorway the agent is navigating through. In the "Perception Enhancement" sequence, the red outlines shift to highlight various structural elements in the agent's field of view.

* **Sequence Progression:** The planning sequence follows a coherent spatial progression: one turn followed by three forward moves. The perception sequence shows the agent's viewpoint advancing through the room, with structural boundaries outlined in every frame.
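The diagram does not show how the World Model produces its plan. As one illustrative possibility, a world model can act as a transition function that a planner queries to "imagine" the outcome of candidate actions before taking any of them. The sketch below runs breadth-first search over such imagined rollouts on a grid. The grid, the wall cells, the goal cell, the extra "Turn Right" action, and the `predict` function are all assumptions, chosen so that the shortest plan matches the diagram's four actions.

```python
from collections import deque
from typing import Optional

State = tuple[int, int, int]  # (x, y, heading index)
ACTIONS = ("Turn Left", "Turn Right", "Forward")
HEADINGS = ((0, 1), (-1, 0), (0, -1), (1, 0))  # N, W, S, E: "Turn Left" advances the index

def predict(state: State, action: str, walls: set[tuple[int, int]]) -> State:
    """Hypothetical world-model transition: imagine the next state without acting."""
    x, y, h = state
    if action == "Turn Left":
        return (x, y, (h + 1) % 4)
    if action == "Turn Right":
        return (x, y, (h - 1) % 4)
    dx, dy = HEADINGS[h]
    if (x + dx, y + dy) in walls:
        return state  # a blocked move leaves the agent where it was
    return (x + dx, y + dy, h)

def plan(start: State, goal: tuple[int, int], walls: set[tuple[int, int]],
         max_depth: int = 10) -> Optional[list[str]]:
    """Breadth-first search over imagined rollouts; returns a shortest action list or None."""
    frontier = deque([(start, [])])
    seen = {start}
    while frontier:
        state, actions = frontier.popleft()
        if state[:2] == goal:
            return actions
        if len(actions) >= max_depth:
            continue
        for action in ACTIONS:
            nxt = predict(state, action, walls)
            if nxt not in seen:
                seen.add(nxt)
                frontier.append((nxt, actions + [action]))
    return None

# Made-up layout: agent at the origin facing north; the target doorway is 3 cells west.
walls = {(0, 1), (1, 0)}
print(plan((0, 0, 0), (-3, 0), walls))
# → ['Turn Left', 'Forward', 'Forward', 'Forward']
```

Breadth-first search is used here only because it is the simplest planner that returns a shortest discrete plan; learned world models are more often paired with sampling-based or gradient-based planners.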

### Key Observations

1. **Dual Enhancement Pathways:** The core concept is that a single "World Model" simultaneously improves both high-level action planning and low-level visual perception.

2. **Action-Perception Coupling:** The actions in the top sequence (e.g., "Forward") appear to correspond to the changing viewpoints in the bottom sequence, suggesting a link between planned movement and perceptual input.

3. **Focus on Structural Navigation:** The system's perception enhancement appears focused on identifying navigable portals (doors) and boundaries (windows), which are critical for indoor robot navigation.

4. **Query-Driven Process:** The entire process is initiated by a specific, goal-oriented question from the agent.
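The diagram pairs each action with a new viewpoint but gives no geometry. A toy illustration of that action-perception coupling, assuming a 2D grid pose, 90-degree turns, unit forward steps, a 90-degree field of view, a 5-unit sensing range, and made-up landmark positions, rolls out the diagram's four actions and lists which labeled structures fall inside the view cone after each one.

```python
import math

Pose = tuple[float, float, int]  # (x, y, heading index)
HEADINGS = ((0.0, 1.0), (-1.0, 0.0), (0.0, -1.0), (1.0, 0.0))  # N, W, S, E

# Made-up landmark positions; the diagram gives no coordinates.
LANDMARKS = {
    "door_frame": (0.5, 3.0),
    "doorway": (-4.0, 0.0),
    "window_frame": (-5.0, 1.5),
}

def step(pose: Pose, action: str) -> Pose:
    """Apply one discrete action: a 90-degree left turn or a unit forward step."""
    x, y, h = pose
    if action == "Turn Left":
        return (x, y, (h + 1) % 4)
    if action == "Forward":
        dx, dy = HEADINGS[h]
        return (x + dx, y + dy, h)
    raise ValueError(f"unsupported action: {action}")

def visible(pose: Pose, landmarks: dict[str, tuple[float, float]],
            half_fov_deg: float = 45.0, max_range: float = 5.0) -> list[str]:
    """Names of landmarks within half_fov_deg of the heading and within max_range."""
    x, y, h = pose
    hx, hy = HEADINGS[h]
    cos_limit = math.cos(math.radians(half_fov_deg))
    seen = []
    for name, (lx, ly) in landmarks.items():
        vx, vy = lx - x, ly - y
        dist = math.hypot(vx, vy)
        if dist == 0 or dist > max_range:
            continue
        # Cosine of the angle between the heading and the direction to the landmark.
        if (vx * hx + vy * hy) / dist >= cos_limit:
            seen.append(name)
    return sorted(seen)

def rollout(start: Pose, actions: list[str]) -> list[tuple[str, Pose, list[str]]]:
    """Pair each action with the pose it produces and what the agent would see there."""
    pose = start
    log = [("start", pose, visible(pose, LANDMARKS))]
    for action in actions:
        pose = step(pose, action)
        log.append((action, pose, visible(pose, LANDMARKS)))
    return log

for action, pose, seen in rollout((0.0, 0.0, 0), ["Turn Left", "Forward", "Forward", "Forward"]):
    print(f"{action:<9} pose={pose} sees={seen}")
```

In this toy setup the turn swaps the visible door frame for the target doorway, and the forward steps bring the window frame into range, which mirrors in miniature how each planned action changes what the agent can perceive.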

### Interpretation

This diagram illustrates a world-model-based architecture for embodied agents (such as robots). It suggests that effective navigation in complex environments requires more than path planning alone; it requires a **world model** that integrates:

* **Spatial Reasoning:** To generate a feasible action sequence (Planning Enhancement).

* **Semantic Scene Understanding:** To parse the visual input into meaningful, navigable components like doors and windows (Perception Enhancement).

The "World Model" acts as a central cognitive unit that turns an open-ended query ("What is the target object?") into concrete, executable steps while sharpening the agent's "vision" to recognize the features needed to carry out those steps. The red outlines are central to this reading: they represent the model's internal representation of important environmental features, bridging the gap between raw pixels and actionable knowledge. Although the diagram draws the two enhancements as parallel outputs rather than as an explicit feedback loop, it implies a coupled system in which planning and perception inform each other, a property often considered essential for autonomous agents that must operate reliably in human spaces.