## Pie Charts: Error Distribution Comparison for Three AI Models

### Overview

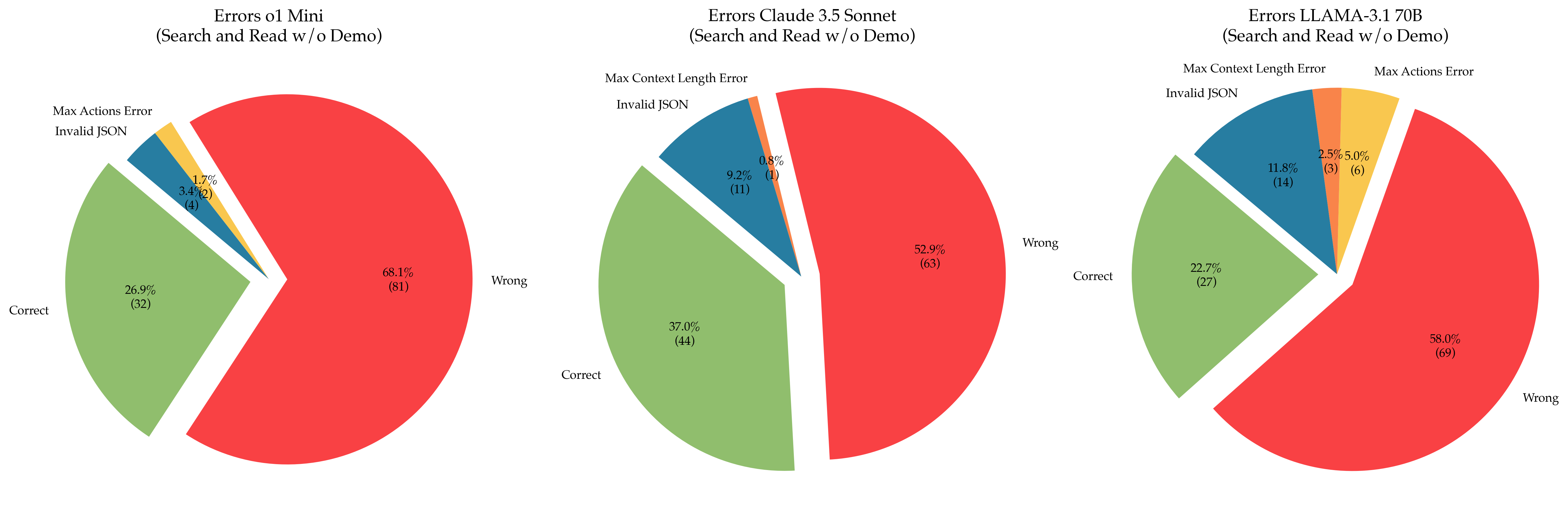

The image displays three pie charts arranged horizontally, each illustrating the error distribution for a different AI model when performing a "Search and Read" task without a demonstration ("w/o Demo"). The charts compare the performance of "o1 Mini," "Claude 3.5 Sonnet," and "LLAMA-3.1 70B." Each chart breaks down outcomes into "Correct," "Wrong," and specific error types.

### Components/Axes

* **Chart Titles (Top Center of each chart):**

    * Left: `Errors o1 Mini (Search and Read w/o Demo)`

    * Center: `Errors Claude 3.5 Sonnet (Search and Read w/o Demo)`

    * Right: `Errors LLAMA-3.1 70B (Search and Read w/o Demo)`

* **Categories (Legend Labels):** The following categories are used across the charts, each associated with a specific color:

    * **Wrong** (Red)

    * **Correct** (Green)

    * **Invalid JSON** (Blue)

    * **Max Context Length Error** (Orange)

    * **Max Actions Error** (Yellow)

* **Data Labels:** Each pie slice is labeled with its category name, a percentage, and a raw count in parentheses (e.g., `68.1% (81)`).

### Detailed Analysis

**1. Errors o1 Mini (Left Chart)**

* **Wrong (Red):** The largest slice, positioned on the right side. **68.1% (81)**.

* **Correct (Green):** The second-largest slice, positioned on the left side. **26.9% (32)**.

* **Invalid JSON (Blue):** A small slice adjacent to the "Correct" slice. **3.4% (4)**.

* **Max Actions Error (Yellow):** A very small slice adjacent to the "Invalid JSON" slice. **1.7% (2)**.

* **Max Context Length Error (Orange):** **Not present** in this chart.

* **Total Count:** 81 + 32 + 4 + 2 = 119.

**2. Errors Claude 3.5 Sonnet (Center Chart)**

* **Wrong (Red):** The largest slice, positioned on the right side. **52.9% (63)**.

* **Correct (Green):** The second-largest slice, positioned on the left side. **37.0% (44)**.

* **Invalid JSON (Blue):** A moderate slice adjacent to the "Correct" slice. **9.2% (11)**.

* **Max Context Length Error (Orange):** A very small slice adjacent to the "Invalid JSON" slice. **0.8% (1)**.

* **Max Actions Error (Yellow):** **Not present** in this chart.

* **Total Count:** 63 + 44 + 11 + 1 = 119.

**3. Errors LLAMA-3.1 70B (Right Chart)**

* **Wrong (Red):** The largest slice, positioned on the right side. **58.0% (69)**.

* **Correct (Green):** The second-largest slice, positioned on the left side. **22.7% (27)**.

* **Invalid JSON (Blue):** A moderate slice adjacent to the "Correct" slice. **11.8% (14)**.

* **Max Actions Error (Yellow):** A small slice adjacent to the "Invalid JSON" slice. **5.0% (6)**.

* **Max Context Length Error (Orange):** A small slice adjacent to the "Max Actions Error" slice. **2.5% (3)**.

* **Total Count:** 69 + 27 + 14 + 6 + 3 = 119.
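The percentages and totals listed above can be cross-checked against the raw counts. The following is a minimal sketch: the counts are transcribed from the data labels, while the `COUNTS` dictionary and the `percentages` helper are illustrative names rather than part of the original figure.

```python
# Raw counts transcribed from the data labels of the three pie charts.
COUNTS = {
    "o1 Mini": {
        "Wrong": 81, "Correct": 32, "Invalid JSON": 4, "Max Actions Error": 2,
    },
    "Claude 3.5 Sonnet": {
        "Wrong": 63, "Correct": 44, "Invalid JSON": 11,
        "Max Context Length Error": 1,
    },
    "LLAMA-3.1 70B": {
        "Wrong": 69, "Correct": 27, "Invalid JSON": 14,
        "Max Actions Error": 6, "Max Context Length Error": 3,
    },
}


def percentages(counts):
    """Return each category's share of the total, rounded to one decimal."""
    total = sum(counts.values())
    return {name: round(100 * n / total, 1) for name, n in counts.items()}


for model, counts in COUNTS.items():
    # Print the total number of runs and the per-category percentages.
    print(model, sum(counts.values()), percentages(counts))
```

Each model's counts sum to 119, and the recomputed shares match the one-decimal percentages printed on the slices.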

### Key Observations

1. **Dominance of "Wrong" Outcomes:** In all three models, the "Wrong" category constitutes the majority of outcomes, ranging from 52.9% to 68.1%.

2. **Model Performance Ranking (by Correct %):** Claude 3.5 Sonnet (37.0%) > o1 Mini (26.9%) > LLAMA-3.1 70B (22.7%).

3. **Error Profile Diversity:** LLAMA-3.1 70B is the only model whose chart contains all five outcome categories, including all three specific error types. o1 Mini lacks "Max Context Length Error," and Claude 3.5 Sonnet lacks "Max Actions Error."

4. **"Invalid JSON" Prevalence:** This is the most common specific error type across all models, increasing from o1 Mini (3.4%) to Claude 3.5 Sonnet (9.2%) to LLAMA-3.1 70B (11.8%).

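5. **Rare Errors:** "Max Context Length Error" and "Max Actions Error" are relatively rare. Each appears in exactly two of the three models ("Max Actions Error" in o1 Mini and LLAMA-3.1 70B, "Max Context Length Error" in Claude 3.5 Sonnet and LLAMA-3.1 70B), and neither exceeds 5.0% in any single chart.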
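The ranking and the combined share of specific errors can be derived from the same counts. This is a sketch under one assumption: "specific error" here means every category other than "Correct" and "Wrong," a grouping chosen for illustration rather than one defined in the figure. The function and variable names are likewise hypothetical.

```python
# Raw counts transcribed from the data labels of the three pie charts.
COUNTS = {
    "o1 Mini": {
        "Wrong": 81, "Correct": 32, "Invalid JSON": 4, "Max Actions Error": 2,
    },
    "Claude 3.5 Sonnet": {
        "Wrong": 63, "Correct": 44, "Invalid JSON": 11,
        "Max Context Length Error": 1,
    },
    "LLAMA-3.1 70B": {
        "Wrong": 69, "Correct": 27, "Invalid JSON": 14,
        "Max Actions Error": 6, "Max Context Length Error": 3,
    },
}

# Assumption: everything except these two categories is a "specific error".
ANSWER_CATEGORIES = ("Correct", "Wrong")


def correct_rate(counts):
    """Percentage of runs labeled "Correct"."""
    return 100 * counts["Correct"] / sum(counts.values())


def specific_error_rate(counts):
    """Percentage of runs that ended in a specific error type."""
    errors = sum(n for name, n in counts.items() if name not in ANSWER_CATEGORIES)
    return 100 * errors / sum(counts.values())


# Rank models from highest to lowest "Correct" share.
ranking = sorted(COUNTS, key=lambda m: correct_rate(COUNTS[m]), reverse=True)

for model in ranking:
    c = COUNTS[model]
    print(f"{model}: correct {correct_rate(c):.1f}%, "
          f"specific errors {specific_error_rate(c):.1f}%")
```

Under this grouping, specific errors account for 6, 12, and 23 of the 119 runs for o1 Mini, Claude 3.5 Sonnet, and LLAMA-3.1 70B respectively.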

### Interpretation

This comparative visualization suggests significant differences in how these AI models fail on a standardized "Search and Read" task.

* **Claude 3.5 Sonnet** demonstrates the highest reliability, with the lowest "Wrong" rate (52.9%) and the highest "Correct" rate (37.0%). It records no "Max Actions Error," although its "Invalid JSON" rate (9.2%) is well above o1 Mini's.

* **o1 Mini** has the highest outright failure rate ("Wrong," 68.1%) but a moderate "Correct" rate (26.9%). Its specific errors are few (6 of 119 runs, 5.0%), and its "Invalid JSON" rate (3.4%) is the lowest of the three models, so its failures are dominated by wrong answers rather than by formatting or limit errors.

* **LLAMA-3.1 70B** has the lowest "Correct" rate and the most diverse error profile. The presence of all error types, including the highest rates of "Max Actions Error" and "Max Context Length Error," indicates it may struggle with task constraints (action limits, context windows) more than the other models, in addition to general correctness and formatting issues.

The consistent total count of 119 across all charts implies a controlled experiment where each model was evaluated on the same number of tasks. The data highlights that model evaluation should look beyond a simple "correct/incorrect" binary, as the specific failure modes (e.g., JSON formatting vs. exceeding action limits) provide crucial insights for debugging and improvement. The absence of certain error types in some models could be due to model-specific safeguards, different underlying architectures, or simply chance given the sample size.
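The point about chance and sample size can be made concrete with a confidence interval for a proportion. The sketch below uses the Wilson score interval, a standard method that is not mentioned in the figure. The 95% level (`z = 1.96`) and the function name `wilson_interval` are assumptions made for illustration.

```python
import math


def wilson_interval(k, n, z=1.96):
    """Wilson score interval for k successes in n trials.

    z = 1.96 corresponds to an approximate 95% confidence level
    (an assumption chosen for this illustration).
    """
    p = k / n
    denom = 1 + z * z / n
    center = (p + z * z / (2 * n)) / denom
    half = z * math.sqrt(p * (1 - p) / n + z * z / (4 * n * n)) / denom
    # Clamp to [0, 1] to absorb floating-point noise at the boundaries.
    return max(0.0, center - half), min(1.0, center + half)


N = 119  # runs per model, from the chart totals

# An error type that never appears (0 of 119 runs), e.g. an absent slice.
lo_absent, hi_absent = wilson_interval(0, N)
print(f"0/{N}: {100 * lo_absent:.1f}% to {100 * hi_absent:.1f}%")

# "Correct" counts for two models, to see how wide the intervals are.
for model, correct in [("Claude 3.5 Sonnet", 44), ("o1 Mini", 32)]:
    lo, hi = wilson_interval(correct, N)
    print(f"{model}: {100 * lo:.1f}% to {100 * hi:.1f}%")
```

Under these assumptions, observing zero occurrences in 119 runs is still compatible with a true rate of roughly 3%, so a missing slice alone is weak evidence that a model cannot produce that error.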