## Diagram: Large Language Model Processing of a Math Word Problem

### Overview

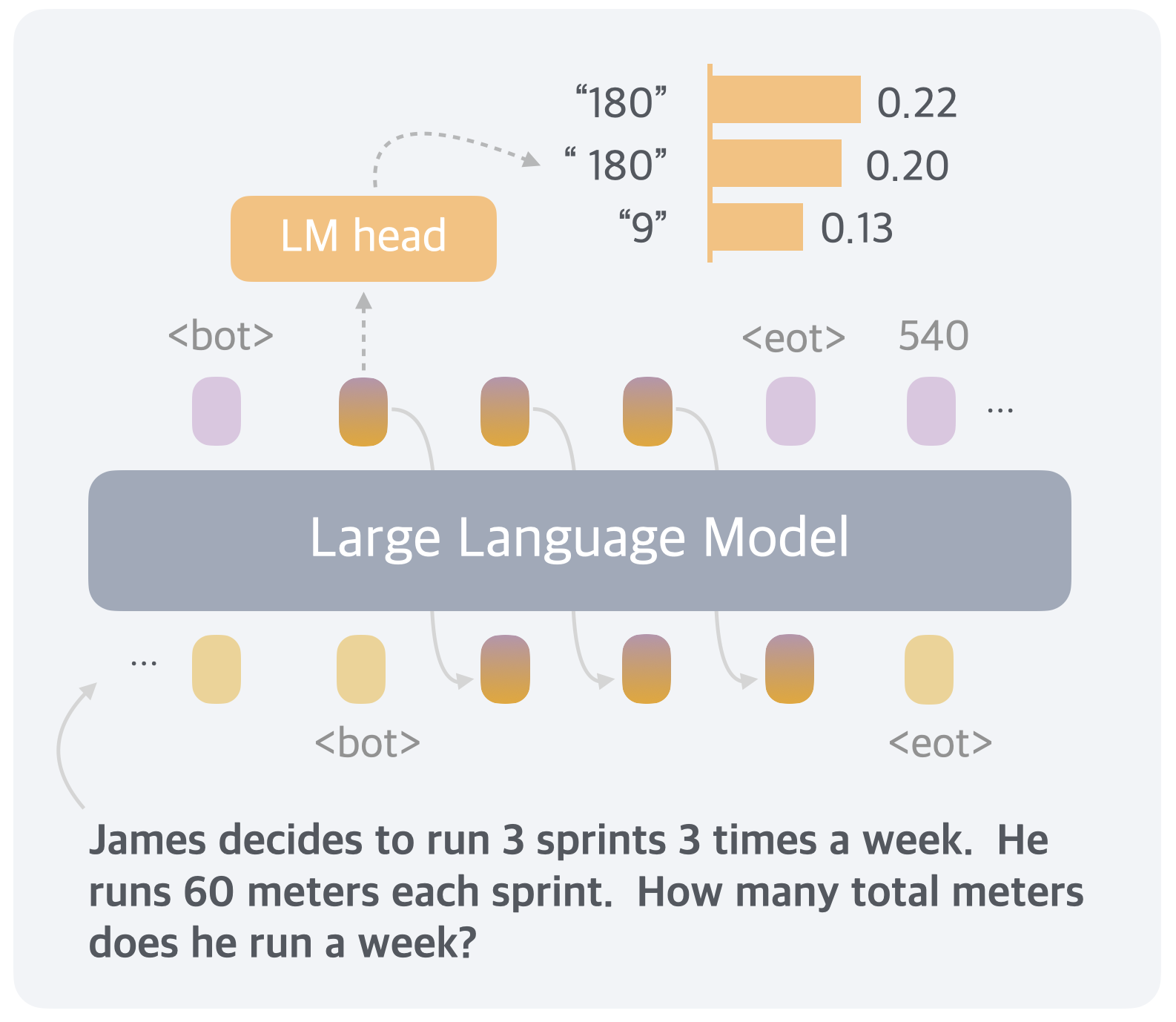

The image is a technical diagram illustrating the process of a Large Language Model (LLM) generating a response to a specific math word problem. It visualizes the flow from input text, through the model's internal processing, to the final output token selection via a Language Model (LM) head, which assigns probabilities to candidate answer tokens.

### Components/Axes

The diagram is structured in three main horizontal layers, with a clear flow from bottom to top.

1. **Input Layer (Bottom):**

* **Text:** A word problem is presented as the input prompt: "James decides to run 3 sprints 3 times a week. He runs 60 meters each sprint. How many total meters does he run a week?"

* **Token Sequence:** Above the text, a sequence of tokens is shown, represented by rounded rectangles. The sequence begins with an ellipsis (`...`), followed by a yellow token, a `<bot>` (beginning of turn) token, three gradient-colored (yellow to purple) tokens, and an `<eot>` (end of turn) token. A curved arrow points from the start of the text to the beginning of this token sequence, indicating the text is tokenized for input.

2. **Model Core (Center):**

* A large, grey, rounded rectangle labeled **"Large Language Model"** spans the width of the diagram. This represents the main computational body of the LLM.

* **Internal Flow:** Faint, curved arrows connect the input token sequence (below) to the output token sequence (above), passing through the "Large Language Model" block. This illustrates the transformation of input representations into output representations.

3. **Output & Prediction Layer (Top):**

* **Output Token Sequence:** A sequence of tokens mirrors the input structure above the model block. It starts with a `<bot>` token, followed by three gradient-colored tokens, an `<eot>` token, the number `540`, and an ellipsis (`...`).

* **LM Head:** An orange, rounded rectangle labeled **"LM head"** is positioned above the second output token (the first gradient token after `<bot>`). A dashed arrow points from this token up to the LM head, indicating that the model's output at this position is what the LM head decodes into next-token probabilities.

* **Prediction Bar Chart:** To the right of the LM head, a small horizontal bar chart displays the model's top predictions for the next token. The chart has three entries:

* `"180"` with a probability of `0.22` (longest bar).

* `" 180"` (note the leading space) with a probability of `0.20`.

* `"9"` with a probability of `0.13`.

* A dashed, curved arrow connects the LM head to this prediction chart.

### Detailed Analysis

* **Tokenization:** The input problem is broken into discrete tokens. The diagram uses symbolic tokens (`<bot>`, `<eot>`) and colored shapes to represent this process. The three gradient tokens between `<bot>` and `<eot>` in the input likely correspond to the core of the problem statement.

* **Model Processing:** The "Large Language Model" block processes the input token sequence. The internal arrows suggest a sequential or transformer-based processing flow where information from earlier tokens influences later ones.

* **Output Generation:** The model generates an output sequence. The diagram shows the model has already produced the tokens `<bot>`, three intermediate tokens, `<eot>`, and the number `540`. The ellipsis (`...`) indicates the sequence may continue.

* **Next-Token Prediction:** The focus is on predicting the token that should follow the first gradient token after the initial `<bot>` in the output sequence. The LM head evaluates the model's internal state at that point.

* **Candidate Answers & Probabilities:** The LM head's output is a probability distribution over potential next tokens. The top three candidates are all numeric tokens, and none of them is the final answer, 540:

1. `"180"` (Probability: ~0.22)

2. `" 180"` (Probability: ~0.20) - This is a distinct token, likely representing the number with a leading space.

3. `"9"` (Probability: ~0.13)

* **Spatial Grounding:** The LM head and its prediction chart are located in the **top-center** of the diagram, directly above the output token sequence it is analyzing. The prediction chart is to the **right** of the LM head label.
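
The next-token prediction step described above can be sketched in a few lines. This is a minimal illustration, not the model in the diagram: the five-entry vocabulary, the three-dimensional hidden state, and every weight value are made up so that the ranking matches the order shown in the bar chart. The resulting probabilities are not meant to reproduce the chart's values.

```python
import math

def lm_head(hidden, weights, bias):
    """Project a hidden-state vector to one logit per vocabulary entry."""
    return [sum(h * w for h, w in zip(hidden, row)) + b
            for row, b in zip(weights, bias)]

def softmax(logits):
    """Turn logits into probabilities (max is subtracted for numerical stability)."""
    peak = max(logits)
    exps = [math.exp(x - peak) for x in logits]
    total = sum(exps)
    return [e / total for e in exps]

def top_k(probs, vocab, k):
    """Return the k most probable (token, probability) pairs, highest first."""
    ranked = sorted(zip(vocab, probs), key=lambda pair: pair[1], reverse=True)
    return ranked[:k]

# Hypothetical vocabulary, hidden state, and weights (assumptions for illustration).
vocab = ["180", " 180", "9", "540", "the"]
hidden = [0.5, -1.0, 2.0]
weights = [
    [1.0, 0.0, 0.9],    # "180"
    [0.8, 0.1, 0.9],    # " 180"
    [0.2, -0.5, 0.5],   # "9"
    [0.0, 0.3, 0.4],    # "540"
    [-1.0, 0.5, 0.0],   # "the"
]
bias = [0.0] * len(vocab)

probs = softmax(lm_head(hidden, weights, bias))
top3 = top_k(probs, vocab, 3)

for token, prob in top3:
    print(repr(token), round(prob, 3))
```

Printing with `repr` keeps the leading space in `" 180"` visible, which is the same distinction the diagram draws between its first two bars.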

### Key Observations

1. **Two Token Forms of the Same Number:** The two most likely candidates, `"180"` and `" 180"`, are distinct tokens that render as the same number. Together they account for approximately 0.42 of the probability mass. Note that 180 is an intermediate quantity (3 sprints * 60 meters), not the final answer.

2. **Presence of an Incorrect Candidate:** The number `"9"` is also a top candidate, though with lower probability. This may represent a partial calculation (e.g., 3 sprints * 3 times = 9) or a common error mode.

3. **Output Sequence Anomaly:** The output sequence shown (`<bot> ... <eot> 540 ...`) is unusual if `<eot>` is read as the end of the model's response, because the number `540` appears *after* it. Possible explanations are that the diagram illustrates a specific intermediate state, that generation continues past a logical endpoint, or that `<eot>` here closes an intermediate span (the gradient tokens) rather than the whole response, in which case `540` is simply the answer that follows.

4. **Visual Coding:** The diagram uses color and shape consistently: yellow for general tokens, gradient for problem-specific tokens, orange for the prediction component (LM head and its bars), and purple for special control tokens (`<bot>`, `<eot>`).
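
The combined probability noted above can be checked by merging candidates that differ only in surrounding whitespace. The sketch below uses the three values read from the bar chart; the helper name `merge_whitespace_variants` is hypothetical, and the rest of the distribution is not shown in the diagram, so only the leftover mass can be computed.

```python
from collections import defaultdict

# Top-3 entries as read from the diagram's bar chart.
top_predictions = [("180", 0.22), (" 180", 0.20), ("9", 0.13)]

def merge_whitespace_variants(predictions):
    """Sum probabilities of tokens that are identical after stripping whitespace."""
    merged = defaultdict(float)
    for token, prob in predictions:
        merged[token.strip()] += prob
    return dict(merged)

merged = merge_whitespace_variants(top_predictions)

# Probability mass covered by the three bars, and what is left for all other tokens.
shown_mass = sum(prob for _, prob in top_predictions)
remaining_mass = 1.0 - shown_mass

print({token: round(prob, 2) for token, prob in merged.items()})
print(round(shown_mass, 2), round(remaining_mass, 2))
```

Under these numbers the three bars cover only a little over half of the distribution, so a substantial share of probability sits on tokens the chart does not display.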

### Interpretation

This diagram offers a view into the "black box" of an LLM solving a reasoning task. It suggests that the model does not simply emit the answer (540) in one step. Instead, it operates in a **token-by-token, probabilistic** fashion.

* **What the data suggests:** The model's internal reasoning leads it to consider the intermediate answer "180" (which is 3 sprints * 60 meters) as a highly probable next step, even before arriving at the final correct answer of 540 (180 meters per session * 3 sessions per week). This suggests the model may be performing a **stepwise calculation** mirroring human problem-solving.

* **How elements relate:** The flow from input text to tokenized sequence, through the model core, to the LM head's probability distribution, visually maps the **inference pipeline**. The LM head acts as a translator from the model's high-dimensional internal state to a human-interpretable choice over discrete tokens.

* **Notable patterns/anomalies:** The high probability for `" 180"` (with a space) highlights how **tokenization artifacts** can split probability mass across tokens that mean the same thing. The presence of `540` after `<eot>` is the most notable anomaly; it may imply that the generation process is not aligned with the usual structure of a conversation turn, or it may be a deliberate choice by the diagram's creator to show a specific point in the generation timeline. Overall, the diagram frames LLM "reasoning" as a **probabilistic selection of the next token** rather than the direct execution of a mathematical algorithm.
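
The arithmetic behind the three numbers discussed above (9, 180, and 540) can be written out directly from the word problem. The variable names are chosen here for illustration; only the quantities come from the prompt.

```python
# Quantities stated in the word problem.
sprints_per_session = 3
sessions_per_week = 3
meters_per_sprint = 60

# Intermediate value matching the "180" candidates.
meters_per_session = sprints_per_session * meters_per_sprint

# Final answer shown in the output sequence.
meters_per_week = meters_per_session * sessions_per_week

# Alternative intermediate value matching the "9" candidate.
sprints_per_week = sprints_per_session * sessions_per_week

print(meters_per_session, sprints_per_week, meters_per_week)  # 180 9 540
```

Both intermediate values lead to the same total: 180 * 3 and 9 * 60 each give 540, which is consistent with either candidate being a plausible first step.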