## Diagram: Large Language Model with LM Head

### Overview

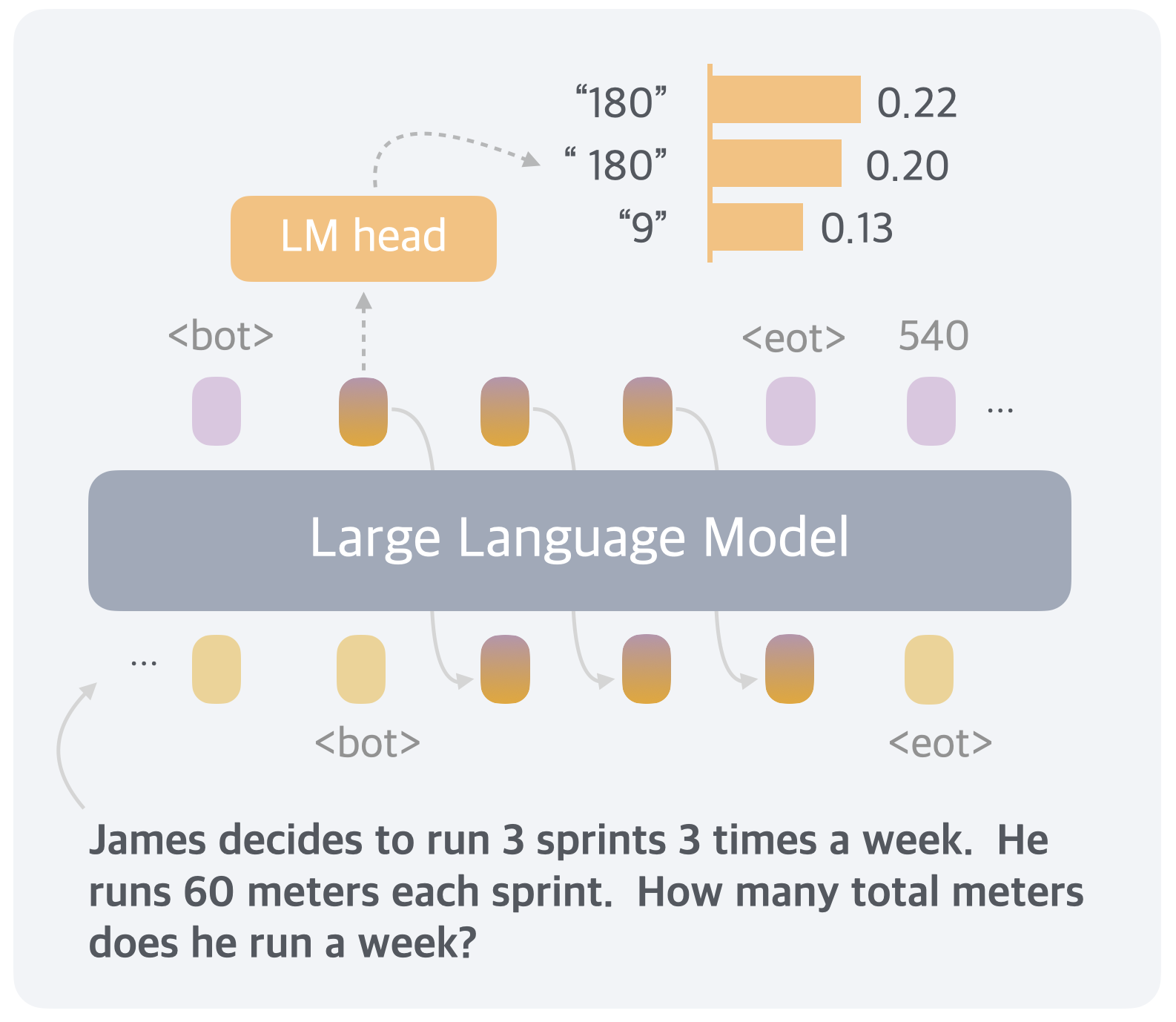

The image depicts a diagram of a large language model (LLM) with an LM head, showing the flow of information and some associated numerical values. The diagram includes input tokens, the LLM itself, the LM head, and output probabilities. It also includes a word problem.

### Components/Axes

* **LM head:** A rectangular box at the top-left, filled with an orange color.

* **Large Language Model:** A large, rounded rectangular box in the center, filled with a grey color.

* **Input Tokens:** Represented by rounded rectangles at the bottom of the LLM box. Some are labeled with `<bot>` and `<eot>`. The colors of the input tokens vary from light yellow to a gradient of yellow to purple.

* **Output Tokens:** Represented by rounded rectangles at the top of the LLM box. Some are labeled with `<bot>` and `<eot>`. The colors of the output tokens vary from light purple to a gradient of yellow to purple.

* **Probabilities:** A bar chart on the top-right, showing probabilities associated with the LM head's output. The bars are orange.

* **Text:** A word problem at the bottom of the diagram.

### Detailed Analysis

* **Input Tokens (Bottom):**

* The first token is represented by three dots.

* The second token is light yellow.

* The third token is light yellow and labeled `<bot>`.

* The fourth token is a gradient of yellow to purple.

* The fifth token is a gradient of yellow to purple.

* The sixth token is a gradient of yellow to purple.

* The seventh token is light yellow.

* The eighth token is labeled `<eot>`.

* **Output Tokens (Top):**

* The first token is light purple and labeled `<bot>`.

* The second token is a gradient of yellow to purple.

* The third token is a gradient of yellow to purple.

* The fourth token is a gradient of yellow to purple.

* The fifth token is light purple and labeled `<eot>`.

* The sixth token is light purple.

* The seventh token is represented by three dots.

* **Probabilities (Top-Right):**

* "180": 0.22

* "180": 0.20

* "9": 0.13

* **Text (Bottom):**

* "James decides to run 3 sprints 3 times a week. He runs 60 meters each sprint. How many total meters does he run a week?"

### Key Observations

* The diagram illustrates the flow of information through a large language model, from input tokens to the LM head, which then generates output probabilities.

* The input tokens are fed into the Large Language Model.

* The LM head predicts the next token based on the input.

* The probabilities associated with the LM head's output are shown in the bar chart.

* The word problem at the bottom provides a context for the model's task.

### Interpretation

The diagram provides a simplified view of how a large language model works. The input tokens represent the initial information provided to the model, which then processes this information and generates output tokens. The LM head is responsible for predicting the next token in the sequence, and the probabilities associated with its output reflect the model's confidence in its predictions. The word problem at the bottom suggests that the model is being used to solve a simple arithmetic problem. The model is likely trained to predict the answer to the question, given the context provided in the problem. The "180" and "9" are likely intermediate calculations or the final answer. The model predicts "180" with a probability of 0.22 and 0.20, and "9" with a probability of 0.13. The correct answer to the word problem is 540, which is also present in the diagram.