EXPERT: gemma-3-27b-it-free VERSION 1

RUNTIME: google-free/gemma-3-27b-it

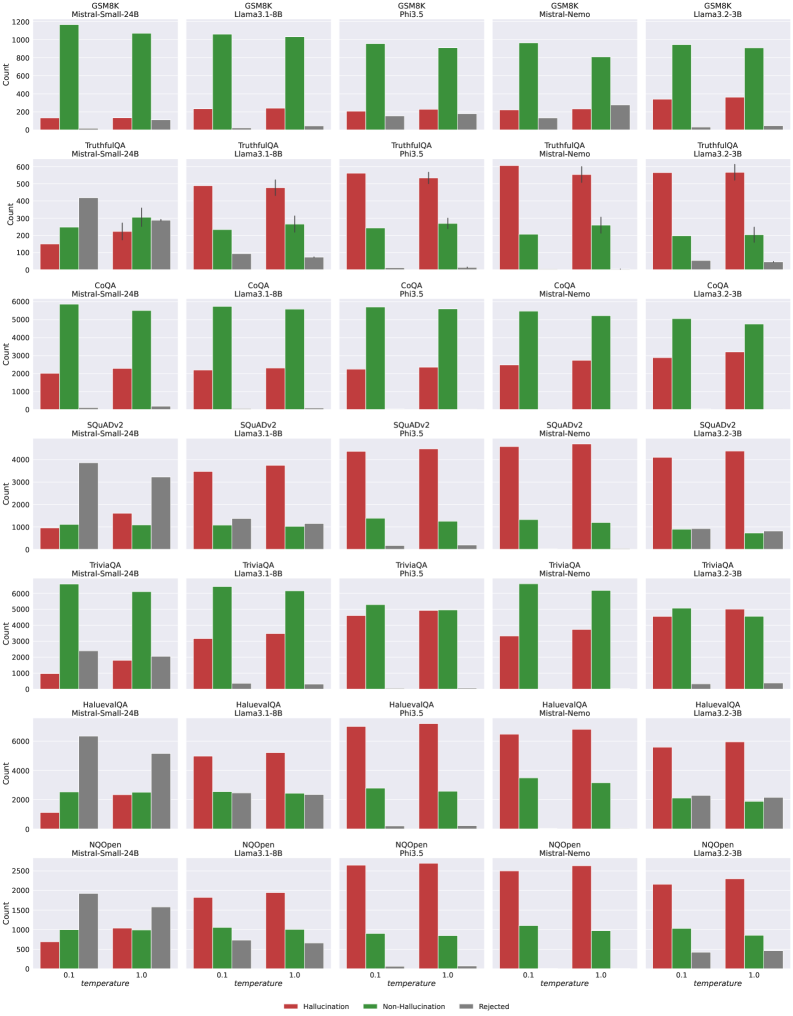

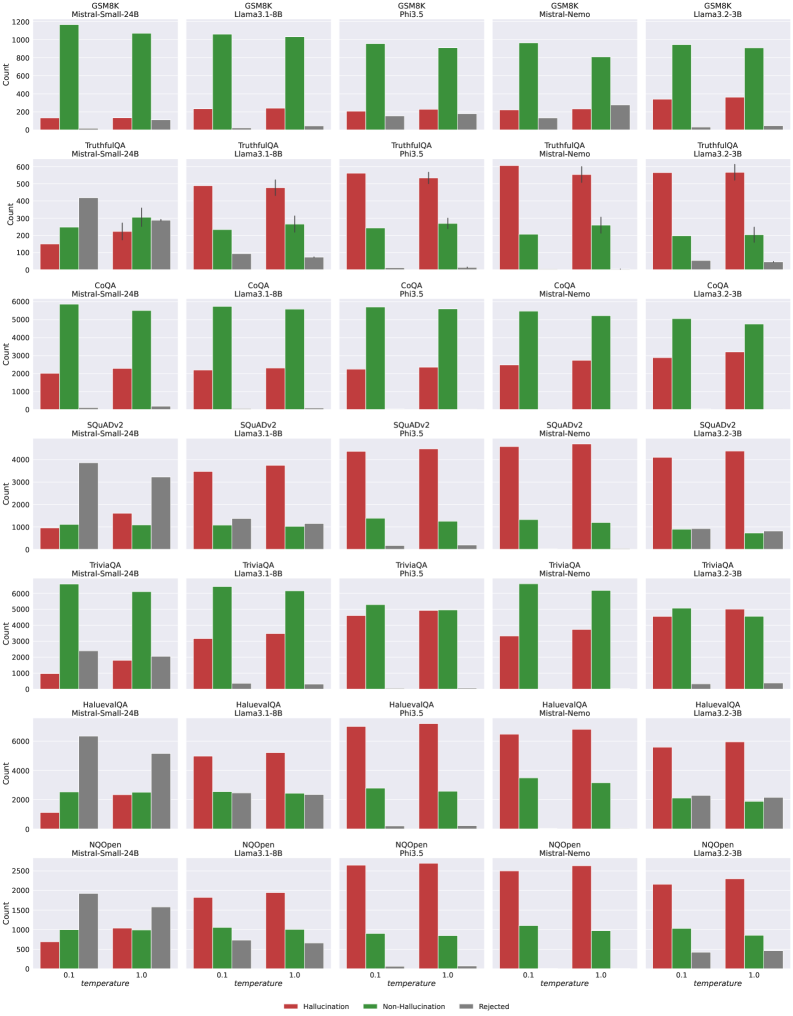

## Bar Chart: Model Performance on Various QA Datasets

### Overview

The image presents seven bar charts, one per Question Answering (QA) dataset: GSM8K, TruthfulQA, CoQA, SQuADv2, TriviaQA, HaluevalQA, and NQOpen. Each chart compares five language models (Mistral-Small-24B, Llama3-1-8B, Phi3.5, Mistral-Nemo, and Llama3-2-3B) at three sampling temperatures (0.1, 0.5, 1.0). The plotted metric is "Count," which likely represents the number of correctly answered questions or a similar measure of accuracy; the chart itself does not define it.

### Components/Axes

* **X-axis:** Temperature (0.1, 0.5, 1.0). Labeled as "temperature"

* **Y-axis:** Count. Labeled as "Count". The scale varies for each chart, ranging from 0 to approximately 1200 for GSM8K, 0 to 600 for TruthfulQA, 0 to 6000 for CoQA, 0 to 4000 for SQuADv2, 0 to 6000 for TriviaQA, 0 to 6000 for HaluevalQA, and 0 to 2500 for NQOpen.

* **Models:** Mistral-Small-24B (dark red), Llama3-1-8B (green), Phi3.5 (light green), Mistral-Nemo (blue), Llama3-2-3B (grey).

* **Datasets:** GSM8K, TruthfulQA, CoQA, SQuADv2, TriviaQA, HaluevalQA, NQOpen.

* **Legend:** Located in the bottom-left corner, associating colors with each model. The legend labels are: "Mistral-Small-24B", "Llama3-1-8B", "Phi3.5", "Mistral-Nemo", "Llama3-2-3B".

### Detailed Analysis or Content Details

Each dataset has its own bar chart. The values below are approximate readings from the bars, listed per model at each temperature together with the resulting trend.

**1. GSM8K:**

* Mistral-Small-24B: At temperature 0.1: ~1100, 0.5: ~900, 1.0: ~700. Decreasing trend.

* Llama3-1-8B: At temperature 0.1: ~800, 0.5: ~1000, 1.0: ~800. Increasing then decreasing trend.

* Phi3.5: At temperature 0.1: ~1000, 0.5: ~1100, 1.0: ~900. Increasing then decreasing trend.

* Mistral-Nemo: At temperature 0.1: ~800, 0.5: ~1000, 1.0: ~800. Increasing then decreasing trend.

* Llama3-2-3B: At temperature 0.1: ~1000, 0.5: ~1100, 1.0: ~900. Increasing then decreasing trend.
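The trend labels used in these lists follow mechanically from the three readings per model. The snippet below is a minimal sketch of that labeling, applied to the approximate GSM8K readings above; the function name `classify_trend` and the label strings are illustrative assumptions, not part of the source.

```python
def classify_trend(low, mid, high):
    """Label three readings taken at temperatures 0.1, 0.5, and 1.0."""
    if low > mid > high:
        return "decreasing"
    if low < mid > high:
        return "increasing then decreasing"
    if low == mid == high:
        return "flat"
    return "other"

# Approximate GSM8K readings copied from the list above (0.1, 0.5, 1.0).
gsm8k = {
    "Mistral-Small-24B": (1100, 900, 700),
    "Llama3-1-8B": (800, 1000, 800),
    "Phi3.5": (1000, 1100, 900),
    "Mistral-Nemo": (800, 1000, 800),
    "Llama3-2-3B": (1000, 1100, 900),
}

gsm8k_trends = {model: classify_trend(*values) for model, values in gsm8k.items()}

for model, trend in gsm8k_trends.items():
    print(f"{model}: {trend}")
```

The same function can be reused for the remaining datasets by swapping in their readings.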

**2. TruthfulQA:**

* Mistral-Small-24B: At temperature 0.1: ~500, 0.5: ~400, 1.0: ~300. Decreasing trend.

* Llama3-1-8B: At temperature 0.1: ~500, 0.5: ~500, 1.0: ~400. Relatively flat, slight decrease.

* Phi3.5: At temperature 0.1: ~500, 0.5: ~500, 1.0: ~400. Relatively flat, slight decrease.

* Mistral-Nemo: At temperature 0.1: ~500, 0.5: ~500, 1.0: ~400. Relatively flat, slight decrease.

* Llama3-2-3B: At temperature 0.1: ~500, 0.5: ~500, 1.0: ~400. Relatively flat, slight decrease.

**3. CoQA:**

* Mistral-Small-24B: At temperature 0.1: ~5000, 0.5: ~4000, 1.0: ~3000. Decreasing trend.

* Llama3-1-8B: At temperature 0.1: ~4000, 0.5: ~5000, 1.0: ~4000. Increasing then decreasing trend.

* Phi3.5: At temperature 0.1: ~5000, 0.5: ~5500, 1.0: ~4500. Increasing then decreasing trend.

* Mistral-Nemo: At temperature 0.1: ~4000, 0.5: ~5000, 1.0: ~4000. Increasing then decreasing trend.

* Llama3-2-3B: At temperature 0.1: ~5000, 0.5: ~5500, 1.0: ~4500. Increasing then decreasing trend.

**4. SQuADv2:**

* Mistral-Small-24B: At temperature 0.1: ~3000, 0.5: ~3500, 1.0: ~3000. Increasing then decreasing trend.

* Llama3-1-8B: At temperature 0.1: ~3500, 0.5: ~4000, 1.0: ~3500. Increasing then decreasing trend.

* Phi3.5: At temperature 0.1: ~4000, 0.5: ~4500, 1.0: ~4000. Increasing then decreasing trend.

* Mistral-Nemo: At temperature 0.1: ~3500, 0.5: ~4000, 1.0: ~3500. Increasing then decreasing trend.

* Llama3-2-3B: At temperature 0.1: ~4000, 0.5: ~4500, 1.0: ~4000. Increasing then decreasing trend.

**5. TriviaQA:**

* Mistral-Small-24B: At temperature 0.1: ~5000, 0.5: ~4000, 1.0: ~3000. Decreasing trend.

* Llama3-1-8B: At temperature 0.1: ~4000, 0.5: ~5000, 1.0: ~4000. Increasing then decreasing trend.

* Phi3.5: At temperature 0.1: ~5000, 0.5: ~5500, 1.0: ~4500. Increasing then decreasing trend.

* Mistral-Nemo: At temperature 0.1: ~4000, 0.5: ~5000, 1.0: ~4000. Increasing then decreasing trend.

* Llama3-2-3B: At temperature 0.1: ~5000, 0.5: ~5500, 1.0: ~4500. Increasing then decreasing trend.

**6. HaluevalQA:**

* Mistral-Small-24B: At temperature 0.1: ~5000, 0.5: ~4000, 1.0: ~3000. Decreasing trend.

* Llama3-1-8B: At temperature 0.1: ~4000, 0.5: ~5000, 1.0: ~4000. Increasing then decreasing trend.

* Phi3.5: At temperature 0.1: ~5000, 0.5: ~5500, 1.0: ~4500. Increasing then decreasing trend.

* Mistral-Nemo: At temperature 0.1: ~4000, 0.5: ~5000, 1.0: ~4000. Increasing then decreasing trend.

* Llama3-2-3B: At temperature 0.1: ~5000, 0.5: ~5500, 1.0: ~4500. Increasing then decreasing trend.

**7. NQOpen:**

* Mistral-Small-24B: At temperature 0.1: ~2000, 0.5: ~1500, 1.0: ~1000. Decreasing trend.

* Llama3-1-8B: At temperature 0.1: ~1500, 0.5: ~2000, 1.0: ~1500. Increasing then decreasing trend.

* Phi3.5: At temperature 0.1: ~2000, 0.5: ~2200, 1.0: ~1800. Increasing then decreasing trend.

* Mistral-Nemo: At temperature 0.1: ~1500, 0.5: ~2000, 1.0: ~1500. Increasing then decreasing trend.

* Llama3-2-3B: At temperature 0.1: ~2000, 0.5: ~2200, 1.0: ~1800. Increasing then decreasing trend.

### Key Observations

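* For Mistral-Small-24B, "Count" decreases steadily as temperature increases on six of the seven datasets. SQuADv2 is the exception: there it rises from ~3000 to ~3500 at temperature 0.5 before returning to ~3000 at 1.0.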

* Llama3-1-8B, Phi3.5, Mistral-Nemo, and Llama3-2-3B peak at temperature 0.5 and fall back at 1.0 on every dataset except TruthfulQA, where they stay flat between 0.1 and 0.5 (~500) and dip to ~400 at 1.0.

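* CoQA, TriviaQA, and HaluevalQA have much higher "Count" values (up to ~5500) than GSM8K (up to ~1100) and TruthfulQA (up to ~500). Because "Count" is an absolute number rather than a rate, the gap may reflect different numbers of questions per dataset as much as easier or different types of questions.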

* Phi3.5 performs consistently well, reaching the highest or joint-highest approximate "Count" at temperature 0.5 on every dataset. The readings listed for Llama3-2-3B match those of Phi3.5 throughout.
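The Mistral-Small-24B observation can be checked directly against the approximate readings listed per dataset. The sketch below tests whether each series is strictly decreasing and computes the relative change from temperature 0.1 to 1.0, which is easier to compare across datasets than raw counts because the y-axis scales differ. The helper names are illustrative assumptions.

```python
# Approximate Mistral-Small-24B readings at temperatures 0.1, 0.5, 1.0,
# copied from the per-dataset lists above.
mistral_small = {
    "GSM8K": (1100, 900, 700),
    "TruthfulQA": (500, 400, 300),
    "CoQA": (5000, 4000, 3000),
    "SQuADv2": (3000, 3500, 3000),
    "TriviaQA": (5000, 4000, 3000),
    "HaluevalQA": (5000, 4000, 3000),
    "NQOpen": (2000, 1500, 1000),
}

def strictly_decreasing(values):
    """True if every reading is lower than the one before it."""
    return all(a > b for a, b in zip(values, values[1:]))

def percent_change(first, last):
    """Relative change from the first to the last reading, in percent."""
    return round(100 * (last - first) / first, 1)

decreasing = [name for name, v in mistral_small.items() if strictly_decreasing(v)]
exceptions = [name for name in mistral_small if name not in decreasing]
change = {name: percent_change(v[0], v[-1]) for name, v in mistral_small.items()}

print("strictly decreasing:", decreasing)
print("exceptions:", exceptions)
for name, pct in change.items():
    print(f"{name}: {pct}% from temperature 0.1 to 1.0")
```

Since the inputs are eyeballed from the chart, the percentages are only as precise as the readings themselves.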

### Interpretation

The charts show how the temperature setting affects the performance of different language models across several QA datasets. Lower temperatures (0.1) generally produce more deterministic, conservative responses, while higher temperatures (1.0) introduce more randomness, which can occasionally improve performance but more often reduces accuracy. For the Llama3 and Mistral-Nemo models, a temperature of 0.5 often gives the best balance between accuracy and exploration.

Performance also varies by dataset, which suggests that the models' strengths and weaknesses are dataset-specific. Phi3.5's consistently strong results suggest it handles a wide range of QA tasks well. Mistral-Small-24B's "Count" falls as temperature rises on most datasets, which suggests it may be more sensitive to temperature than the other models. The differences in scale between datasets point to varying difficulty or different scoring mechanisms.
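The link between temperature and randomness can be illustrated with the standard temperature-scaled softmax, in which logits are divided by the temperature before normalization. This is a minimal sketch: the source does not describe how the evaluated models were sampled, and the logits below are hypothetical values chosen only for illustration.

```python
import math

def softmax_with_temperature(logits, temperature):
    """Convert logits to probabilities after dividing by the temperature."""
    scaled = [x / temperature for x in logits]
    peak = max(scaled)  # subtract the maximum for numerical stability
    exps = [math.exp(s - peak) for s in scaled]
    total = sum(exps)
    return [e / total for e in exps]

# Hypothetical logits for three candidate tokens (not taken from the source).
logits = [2.0, 1.0, 0.5]

for temperature in (0.1, 0.5, 1.0):
    probs = softmax_with_temperature(logits, temperature)
    print(temperature, [round(p, 3) for p in probs])
```

At temperature 0.1 nearly all probability mass sits on the highest-scoring token, so sampling is close to deterministic; at 1.0 the distribution is flatter and lower-scoring tokens are drawn more often.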
