## Diagram: Deep Q-Network Architecture

### Overview

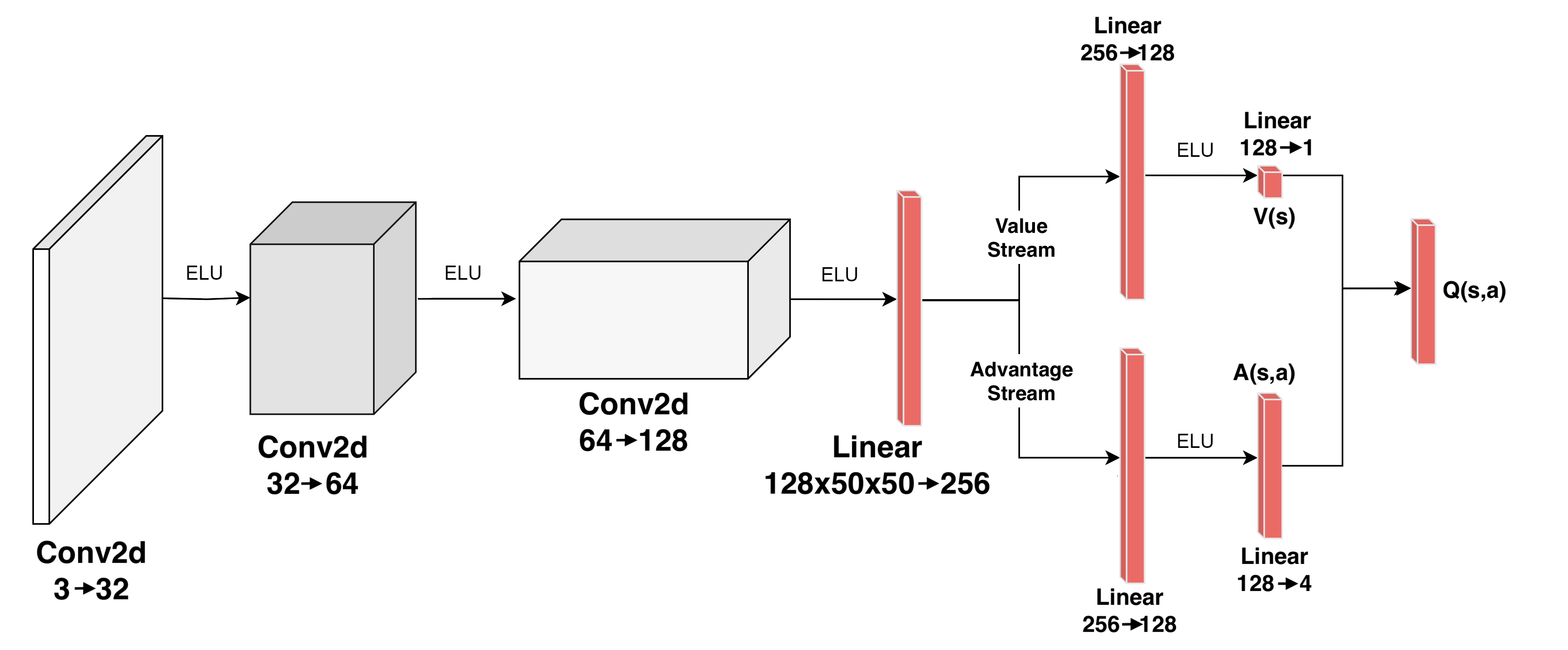

The diagram illustrates the architecture of a Deep Q-Network (DQN) with separate value and advantage streams, i.e. the dueling variant. Data flows through three convolutional layers and a shared linear layer, splits into a value stream and an advantage stream, and is recombined to estimate Q-values.

### Components

* **Layers:** Convolutional (Conv2d) layers with channel sizes 3->32, 32->64, and 64->128, followed by linear layers: one shared layer, then two per stream.

* **Activation Function:** ELU (Exponential Linear Unit) is used as the activation function between layers.

* **Streams:** The network splits into two streams: Value Stream and Advantage Stream.

* **Inputs/Outputs:** The first convolution takes a 3-channel input, and the network outputs Q(s,a). The advantage stream ends in 4 units, which suggests 4 possible actions.

### Detailed Analysis

The diagram can be broken down into the following stages:

1. **Input Layer:**

* A rectangular block on the left represents the input.

* Label: Conv2d 3->32 (the first convolution, taking 3 input channels to 32)

2. **First Convolutional Layer:**

* A cube-shaped block follows the input.

* Label: Conv2d 32->64

* Activation: ELU

3. **Second Convolutional Layer:**

* Another cube-shaped block.

* Label: Conv2d 64->128

* Activation: ELU

4. **Linear Layer (Shared):**

* A red rectangular block.

* Label: Linear 128x50x50->256

* Activation: ELU

5. **Value Stream:**

* Label: Value Stream

* Linear Layer: Red rectangular block labeled Linear 256->128

* Activation: ELU

* Linear Layer: Red rectangular block labeled Linear 128->1

* Output: V(s)

6. **Advantage Stream:**

* Label: Advantage Stream

* Linear Layer: Red rectangular block labeled Linear 256->128

* Linear Layer: Red rectangular block labeled Linear 128->4

* Activation: ELU

* Output: A(s,a)

7. **Output Layer:**

* The Value and Advantage streams are combined.

* Red rectangular block labeled Q(s,a)
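
The stages above can be sketched in PyTorch. This is a minimal illustration, not the diagram's actual implementation, and it rests on several assumptions the diagram does not state: 3x3 kernels with `padding=1` (so a 50x50 input keeps its spatial size and matches the `128x50x50` label), an ELU after every convolution, an ELU between the two linear layers of each stream, and the mean-subtracted combination commonly used in dueling networks. The class name `DuelingDQN` and the `spatial` parameter are hypothetical.

```python
import torch
import torch.nn as nn


class DuelingDQN(nn.Module):
    """Sketch of the diagrammed network; kernel size, padding and input size are assumed."""

    def __init__(self, in_channels=3, n_actions=4, spatial=50):
        super().__init__()
        # Assumption: 3x3 kernels with padding=1 preserve the spatial size,
        # so a (spatial x spatial) input gives a 128 x spatial x spatial feature map.
        self.features = nn.Sequential(
            nn.Conv2d(in_channels, 32, kernel_size=3, padding=1), nn.ELU(),
            nn.Conv2d(32, 64, kernel_size=3, padding=1), nn.ELU(),
            nn.Conv2d(64, 128, kernel_size=3, padding=1), nn.ELU(),
            nn.Flatten(),
        )
        # Shared linear layer: 128 x spatial x spatial -> 256
        self.shared = nn.Sequential(
            nn.Linear(128 * spatial * spatial, 256), nn.ELU()
        )
        # Value stream: 256 -> 128 -> 1
        self.value = nn.Sequential(
            nn.Linear(256, 128), nn.ELU(), nn.Linear(128, 1)
        )
        # Advantage stream: 256 -> 128 -> n_actions
        self.advantage = nn.Sequential(
            nn.Linear(256, 128), nn.ELU(), nn.Linear(128, n_actions)
        )

    def forward(self, x):
        h = self.shared(self.features(x))
        v = self.value(h)      # shape (batch, 1)
        a = self.advantage(h)  # shape (batch, n_actions)
        # Assumption: mean-subtracted aggregation; the diagram only says "combined".
        return v + a - a.mean(dim=1, keepdim=True)
```

With the default `spatial=50`, the shared linear layer alone holds roughly 82 million parameters, so a smaller value such as `DuelingDQN(spatial=8)(torch.zeros(2, 3, 8, 8))` is more convenient for a quick shape check; it returns a tensor of shape `(2, 4)`.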

### Key Observations

* Three convolutional layers feed a shared linear layer, after which the network splits into value and advantage streams.

* ELU activation functions are used throughout the network.

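* Channel counts grow through the convolutions (3 -> 32 -> 64 -> 128). The flattened 128x50x50 feature map is reduced to 256 units, and each stream narrows to 128 units and then to 1 (value) or 4 (advantage) outputs.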
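
To make the layer sizes concrete, the following sketch counts the parameters of the linear layers from the labels in the diagram. It assumes each linear layer has a bias term (the PyTorch default); the helper `linear_params` is hypothetical. The convolutional layers are left out because the diagram does not give their kernel sizes.

```python
def linear_params(n_in, n_out):
    """Weights plus biases of a fully connected layer (bias assumed present)."""
    return n_in * n_out + n_out


flat = 128 * 50 * 50  # flattened feature map, from the label 128x50x50
shared = linear_params(flat, 256)
value_stream = linear_params(256, 128) + linear_params(128, 1)
advantage_stream = linear_params(256, 128) + linear_params(128, 4)
total_linear = shared + value_stream + advantage_stream

print(flat)          # 320000
print(shared)        # 81920256
print(total_linear)  # 81986693
```

Under these assumptions, almost all of the linear parameters sit in the shared `128x50x50->256` layer, while the two streams together add only about 66 thousand.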

### Interpretation

The diagram shows a dueling Deep Q-Network, an architecture used in reinforcement learning. The convolutional layers extract features from the input. The value stream estimates V(s), how good the state is regardless of the action taken, while the advantage stream estimates A(s,a), how much better or worse each action is relative to the others in that state. Estimating the two separately can improve the stability and performance of learning. Combining the streams yields Q(s,a), the expected cumulative reward for taking action a in state s.
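
The diagram does not say how the two streams are combined. A common choice in dueling networks, assumed here, is to subtract the mean advantage: Q(s,a) = V(s) + A(s,a) - mean over a' of A(s,a'). The sketch below works through that rule with made-up numbers for one state and 4 actions; the function name `combine` is hypothetical.

```python
def combine(value, advantages):
    """Mean-centered aggregation: Q(s,a) = V(s) + A(s,a) - mean_a' A(s,a')."""
    mean_adv = sum(advantages) / len(advantages)
    return [value + a - mean_adv for a in advantages]


# Hypothetical stream outputs for one state with 4 actions.
q = combine(2.0, [1.0, 0.0, -1.0, 0.0])
print(q)  # [3.0, 2.0, 1.0, 2.0]

# Adding the same constant to every advantage leaves Q unchanged,
# so Q depends only on the relative advantages.
q_shifted = combine(2.0, [6.0, 5.0, 4.0, 5.0])
print(q_shifted == q)  # True
```

Centering the advantages also means the mean of the Q-values over actions equals V(s), which keeps the roles of the two streams distinct.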