## System Diagram: Knowledge Graph Enhanced Reasoning

### Overview

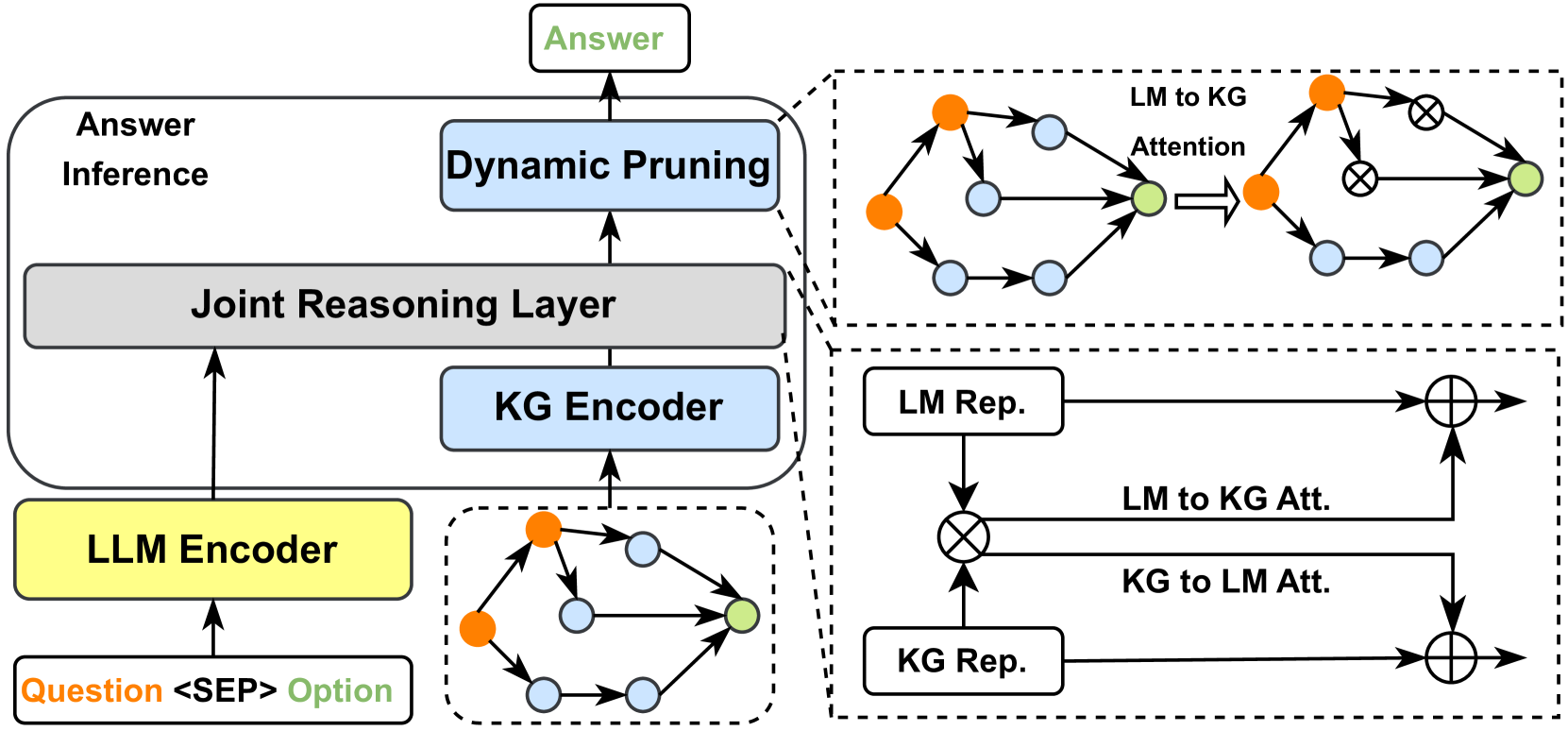

The diagram illustrates a knowledge graph enhanced reasoning process. Information flows from the input (Question and Option) through an LLM Encoder, a KG Encoder, a Joint Reasoning Layer, and a Dynamic Pruning module, and ends in an Answer. Two detailed views show the attention mechanisms between the Language Model (LM) and Knowledge Graph (KG) representations.
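
The flow described above can be sketched as a wiring of stages. This is only an illustration of the order in which the boxes connect: the function names, the argument types, and the spaces placed around the separator are assumptions, and every stage is passed in as a placeholder callable because the diagram does not show how any of them is implemented.

```python
from typing import Any, Callable

SEP = "<SEP>"  # separator label as it appears in the diagram


def build_input(question: str, option: str) -> str:
    """Join the question and one option with the separator token.

    The surrounding spaces are an assumption made for readability.
    """
    return f"{question} {SEP} {option}"


def run_pipeline(
    question: str,
    option: str,
    graph: Any,
    llm_encoder: Callable[[str], Any],
    kg_encoder: Callable[[Any], Any],
    joint_reasoning: Callable[[Any, Any], Any],
    dynamic_pruning: Callable[[Any], Any],
) -> Any:
    """Call the (hypothetical) stages in the order the diagram describes."""
    lm_rep = llm_encoder(build_input(question, option))  # Input -> LLM Encoder
    kg_rep = kg_encoder(graph)                           # KG -> KG Encoder
    joint = joint_reasoning(lm_rep, kg_rep)              # both -> Joint Reasoning Layer
    return dynamic_pruning(joint)                        # Dynamic Pruning -> Answer
```

Passing the stages as callables keeps the sketch neutral about the actual models; any encoder or reasoning module with a matching call shape could be substituted.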

### Components

* **Overall Structure:** The diagram is structured hierarchically, showing the flow of information from bottom to top.

* **Input:** "Question Option" (yellow box)

* **LLM Encoder:** (yellow box)

* **KG Encoder:** (light blue box)

* **Joint Reasoning Layer:** (gray box)

* **Dynamic Pruning:** (light blue box)

* **Output:** "Answer" (green box)

* **Answer Inference:** (gray box encompassing the KG Encoder, Joint Reasoning Layer, and Dynamic Pruning)

* **Attention Mechanisms:** Two detailed views enclosed in dashed boxes illustrate the attention mechanisms between LM and KG.

    * **Top-Right:** "LM to KG Attention"

    * **Bottom-Right:** LM and KG Representation interaction

### Detailed Analysis

1. **Input Layer:**

* The input consists of a "Question" and an "Option", separated by `<SEP>`.

* This input feeds into the "LLM Encoder" (yellow box).

2. **Encoding Layers:**

* The "LLM Encoder" (yellow) processes the input and passes its output to the "Joint Reasoning Layer" (gray).

* A separate "KG Encoder" (light blue) also feeds into the "Joint Reasoning Layer" (gray).

* The "KG Encoder" receives input from a knowledge graph structure, represented by interconnected nodes (orange, light blue, and green).

3. **Joint Reasoning and Pruning:**

* The "Joint Reasoning Layer" (gray) combines the outputs from the "LLM Encoder" and the "KG Encoder".

* The output of the "Joint Reasoning Layer" is then fed into the "Dynamic Pruning" (light blue) module.

4. **Output Layer:**

* The "Dynamic Pruning" module produces the final "Answer" (green).

5. **Attention Mechanism Details (Top-Right):**

* Label: "LM to KG Attention"

* Shows one network of nodes (orange, light blue, and green) transformed by an attention mechanism (represented by crossed circles) into a second network of nodes in the same colors.

* An arrow indicates the direction of the attention flow.

6. **Attention Mechanism Details (Bottom-Right):**

* "LM Rep." (Language Model Representation) feeds into a multiplication operation (represented by a circled cross).

* "KG Rep." (Knowledge Graph Representation) also feeds into the same multiplication operation.

* The output of this multiplication, labeled "LM to KG Att.", feeds into an addition operation (represented by a circled plus).

* "KG to LM Att." also feeds into the same addition operation.
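
The bottom-right detail (item 6) can be read as a multiply-then-add combination. The sketch below assumes the circled cross is an element-wise product and the circled plus an element-wise sum over vectors of equal length; the diagram confirms neither, and it does not show how "KG to LM Att." is computed, so that value is taken as an input. The function and argument names are hypothetical.

```python
from typing import List, Sequence


def fuse_attention(
    lm_rep: Sequence[float],
    kg_rep: Sequence[float],
    kg_to_lm_att: Sequence[float],
) -> List[float]:
    """Combine the three signals as the bottom-right detail describes.

    Assumption: both operations are element-wise on same-length vectors.
    """
    if not (len(lm_rep) == len(kg_rep) == len(kg_to_lm_att)):
        raise ValueError("all inputs must have the same length")

    # Circled cross: LM Rep. combined with KG Rep. gives "LM to KG Att."
    lm_to_kg_att = [l * k for l, k in zip(lm_rep, kg_rep)]

    # Circled plus: "LM to KG Att." combined with "KG to LM Att."
    return [a + b for a, b in zip(lm_to_kg_att, kg_to_lm_att)]
```

If the circled cross instead denotes a matrix product inside a standard attention layer, the shapes and the normalization step would differ; the sketch only captures the order of the two operations.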

### Key Observations

* The diagram highlights the integration of a Knowledge Graph (KG) with a Language Model (LM) for enhanced reasoning.

* The attention mechanisms run in both directions (LM to KG and KG to LM), so each representation can weight the relevant parts of the other.

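* The "Dynamic Pruning" module sits between the Joint Reasoning Layer and the Answer, which suggests that it filters or refines the jointly reasoned information before the final answer is produced; the diagram does not show the pruning criterion.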
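
One plausible reading of the pruning step is to keep only the highest-scoring graph nodes and drop every edge that touches a removed node. The sketch below illustrates that idea under stated assumptions: the top-k rule, the per-node relevance scores, and all names are hypothetical, and the diagram does not confirm that the module works this way.

```python
from typing import Dict, List, Set, Tuple

Edge = Tuple[str, str]


def prune_graph(
    node_scores: Dict[str, float],
    edges: List[Edge],
    keep_top_k: int,
) -> Tuple[Set[str], List[Edge]]:
    """Keep the k highest-scoring nodes and the edges between them.

    Assumption: each node already has a relevance score, for example
    derived from attention weights; the scoring itself is not shown here.
    """
    if keep_top_k < 0:
        raise ValueError("keep_top_k must be non-negative")

    # Rank nodes from most to least relevant and keep the first k.
    ranked = sorted(node_scores, key=node_scores.get, reverse=True)
    kept = set(ranked[:keep_top_k])

    # An edge survives only if both of its endpoints survive.
    kept_edges = [(u, v) for u, v in edges if u in kept and v in kept]
    return kept, kept_edges
```

A threshold on the score would be an equally reasonable criterion; top-k is used here only because it keeps the pruned graph at a fixed size.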

### Interpretation

The diagram illustrates a system that combines linguistic knowledge (from the LLM Encoder) with structured knowledge (from the KG Encoder) for answer inference. The attention mechanisms let the system focus on the parts of the KG most relevant to the language input, and vice versa. The "Dynamic Pruning" step likely removes irrelevant or noisy information before the answer is produced, although the diagram does not specify how. Overall, the architecture grounds question answering in structured knowledge rather than in the language model alone.