
## Diagram: Knowledge-Augmented Language Model Architecture

### Overview

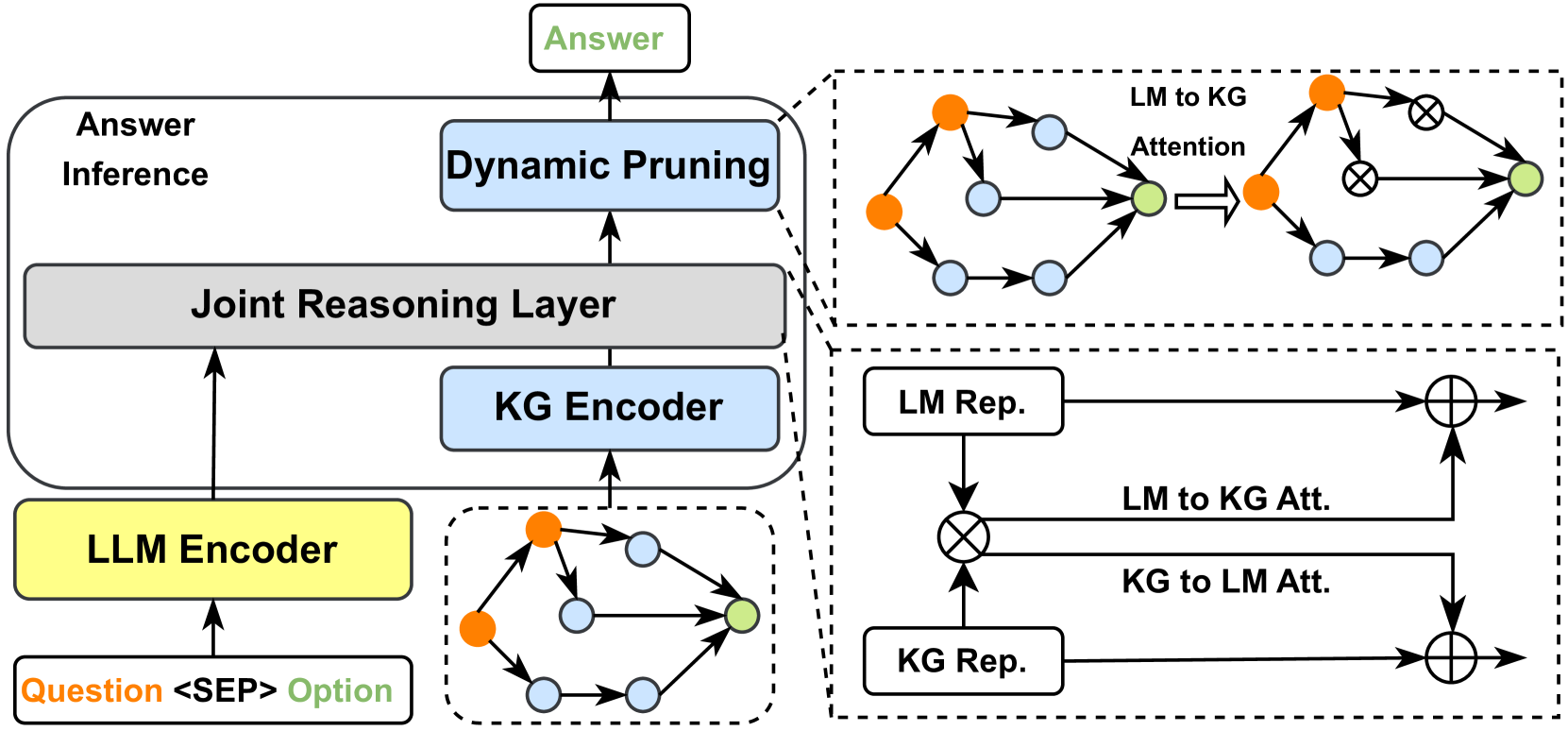

The diagram shows a knowledge-augmented language model architecture. Information flows from a question input through an LLM Encoder and a KG Encoder, into a Joint Reasoning Layer, through Dynamic Pruning and Answer Inference, and finally to an Answer. The right side of the diagram details the attention mechanisms and representations within the Joint Reasoning Layer.

### Components

The diagram consists of the following components, arranged vertically:

* **Question <SEP> Option:** Input to the LLM Encoder.

* **LLM Encoder:** Processes the question input.

* **KG Encoder:** Encodes knowledge graph information.

* **Joint Reasoning Layer:** Combines information from the LLM and KG Encoders.

* **Dynamic Pruning:** Filters the output of the Joint Reasoning Layer.

* **Answer Inference:** Generates the final answer.

* **Answer:** The output of the system.

The right side of the diagram shows the internal workings of the Joint Reasoning Layer, including:

* **LM Rep.:** Language Model Representation.

* **KG Rep.:** Knowledge Graph Representation.

* **LM to KG Att.:** Language Model to Knowledge Graph Attention.

* **KG to LM Att.:** Knowledge Graph to Language Model Attention.

* Attention mechanisms represented by circles with arrows.

* Mathematical operations represented by circled 'x' symbols (multiplication) and circled '+' symbols (addition).

### Detailed Analysis

The diagram shows a flow of information from bottom to top.

1. **LLM Encoder:** Receives "Question <SEP> Option" as input.

2. **KG Encoder:** Processes knowledge graph data.

3. **Joint Reasoning Layer:**

   * The KG Rep. is multiplied by the LM Rep.

   * LM to KG Attention is applied.

   * KG to LM Attention is applied.

   * The results of the attention mechanisms are added to the original representations.

4. **Dynamic Pruning:** Filters the output of the Joint Reasoning Layer.

5. **Answer Inference:** Generates the final answer.

The attention mechanisms within the Joint Reasoning Layer are visually represented as follows:

* **LM to KG Attention:** A series of nodes connected by arrows, with an arrow pointing from a node to a multiplication symbol.

* **KG to LM Attention:** A similar structure to LM to KG Attention, but with the flow reversed.
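The Joint Reasoning Layer steps listed above (multiply the two representations, apply attention in both directions, add the results back to the originals) can be sketched in NumPy. This is a minimal illustration under stated assumptions, not the implementation behind the diagram: the function name `joint_reasoning_step`, the scaled dot-product scoring, the shared hidden size `d`, and the reading of "LM to KG" as LM tokens attending over KG nodes are all assumptions, since the diagram does not specify them.

```python
import numpy as np

def softmax(x, axis=-1):
    # Numerically stable softmax along the given axis.
    x = x - x.max(axis=axis, keepdims=True)
    e = np.exp(x)
    return e / e.sum(axis=axis, keepdims=True)

def joint_reasoning_step(lm_rep, kg_rep):
    """Hypothetical bidirectional attention between the two representations.

    lm_rep: (n_tokens, d) language model representation
    kg_rep: (n_nodes, d)  knowledge graph representation
    """
    d = lm_rep.shape[-1]
    # "The KG Rep. is multiplied by the LM Rep.": pairwise similarity scores.
    scores = lm_rep @ kg_rep.T / np.sqrt(d)        # (n_tokens, n_nodes)
    # Assumed direction: each LM token attends over all KG nodes.
    lm_to_kg_att = softmax(scores, axis=1)         # (n_tokens, n_nodes)
    # Assumed direction: each KG node attends over all LM tokens.
    kg_to_lm_att = softmax(scores.T, axis=1)       # (n_nodes, n_tokens)
    # Attention results are added to the original representations.
    lm_out = lm_rep + lm_to_kg_att @ kg_rep        # (n_tokens, d)
    kg_out = kg_rep + kg_to_lm_att @ lm_rep        # (n_nodes, d)
    return lm_out, kg_out, lm_to_kg_att, kg_to_lm_att

# Toy inputs: 5 tokens, 3 graph nodes, hidden size 8 (all arbitrary).
rng = np.random.default_rng(0)
lm_rep = rng.normal(size=(5, 8))
kg_rep = rng.normal(size=(3, 8))
lm_out, kg_out, lm_to_kg_att, kg_to_lm_att = joint_reasoning_step(lm_rep, kg_rep)
```

Each row of the two attention matrices sums to 1, so every token (or node) receives a weighted average of the other modality's vectors on top of its own representation.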

The nodes in the knowledge graph representations are colored orange, light blue, or white, and the connections between nodes are represented by arrows.

### Key Observations

The diagram highlights the integration of knowledge graphs with language models. The attention mechanisms allow the model to selectively focus on relevant information from both sources. The dynamic pruning step suggests a mechanism for reducing noise or irrelevant information. The diagram is a high-level overview and does not provide specific details about the implementation of the individual components.
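Because the diagram gives no detail on how Dynamic Pruning decides what to drop, the following is only one plausible sketch: keep the graph nodes that receive the most attention from the language model tokens. The function name `prune_nodes`, the `keep_ratio` parameter, and the mean-attention relevance score are all hypothetical choices made for illustration.

```python
import numpy as np

def prune_nodes(kg_rep, lm_to_kg_att, keep_ratio=0.5):
    """Hypothetical pruning: keep the nodes with the highest average attention.

    kg_rep:       (n_nodes, d) knowledge graph representation
    lm_to_kg_att: (n_tokens, n_nodes) attention weights from tokens to nodes
    keep_ratio:   fraction of nodes to keep (assumed hyperparameter)
    """
    # Relevance of a node = mean attention it receives across all tokens.
    relevance = lm_to_kg_att.mean(axis=0)                      # (n_nodes,)
    n_keep = max(1, int(np.ceil(keep_ratio * kg_rep.shape[0])))
    # Indices of the n_keep most relevant nodes, restored to original order.
    keep_idx = np.sort(np.argsort(relevance)[::-1][:n_keep])
    return kg_rep[keep_idx], keep_idx

# Toy example: 2 tokens attending over 4 nodes.
att = np.array([[0.7, 0.2, 0.1, 0.0],
                [0.5, 0.1, 0.3, 0.1]])
nodes = np.arange(8, dtype=float).reshape(4, 2)
pruned, kept = prune_nodes(nodes, att, keep_ratio=0.5)
# Mean attention per node is [0.6, 0.15, 0.2, 0.05], so nodes 0 and 2 are kept.
```

A fixed ratio is the simplest policy; a threshold on the relevance score would be an equally reasonable alternative when the number of useful nodes varies from question to question.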

### Interpretation

This diagram illustrates an approach to question answering that combines language models with knowledge graphs. The LLM Encoder provides contextual understanding of the question, while the KG Encoder provides access to structured knowledge. The Joint Reasoning Layer lets the model reason over both sources, and its two attention mechanisms let each representation weight the most relevant parts of the other. The dynamic pruning step likely improves efficiency and accuracy by filtering out irrelevant information. The architecture suggests a system capable of answering complex questions that require both linguistic understanding and factual knowledge. The diagram is conceptual and provides no quantitative data or performance metrics.