## Line Graphs: Llama Model Performance on Question Answering

### Overview

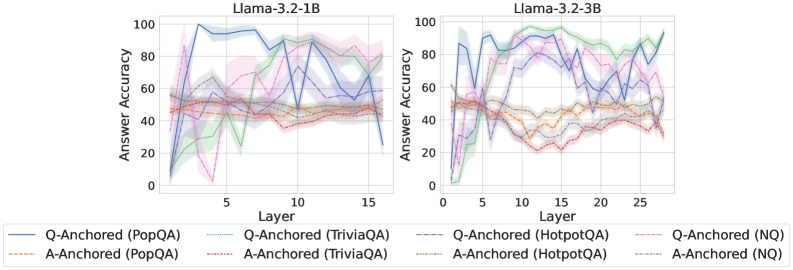

The image presents two line graphs comparing Llama-3.2-1B and Llama-3.2-3B on four question-answering datasets. Each graph plots "Answer Accuracy" against "Layer" for every combination of dataset (PopQA, TriviaQA, HotpotQA, NQ) and anchoring method (Q-Anchored, A-Anchored).

### Components/Axes

* **Titles:**
    * Left Graph: "Llama-3.2-1B"
    * Right Graph: "Llama-3.2-3B"

* **Y-axis (Answer Accuracy):**
    * Label: "Answer Accuracy"
    * Scale: 0 to 100, with tick marks at 0, 20, 40, 60, 80, and 100.

* **X-axis (Layer):**
    * Label: "Layer"
    * Left Graph Scale: 0 to 15, with tick marks at 0, 5, 10, and 15.
    * Right Graph Scale: 0 to 25, with tick marks at 0, 5, 10, 15, 20, and 25.

* **Legend (bottom):**
    * Q-Anchored (PopQA): Solid Blue Line
    * A-Anchored (PopQA): Dashed Brown Line
    * Q-Anchored (TriviaQA): Solid Green Line
    * A-Anchored (TriviaQA): Dashed Red Line
    * Q-Anchored (HotpotQA): Solid Gray Line
    * A-Anchored (HotpotQA): Dashed Orange Line
    * Q-Anchored (NQ): Dashed-dotted Pink Line
    * A-Anchored (NQ): Dotted Black Line
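
For anyone redrawing the figure, the legend above can be written down as a small lookup table. The following is a minimal sketch, not the original plotting code: the names `SERIES_STYLES` and `legend_label` are hypothetical, and the color and line-style strings are chosen to be the kind of values a plotting library such as matplotlib accepts.

```python
# Legend entries from the figure description, keyed by (anchoring method, dataset).
# The style strings are assumptions: "-" solid, "--" dashed, "-." dash-dot, ":" dotted.
SERIES_STYLES = {
    ("Q-Anchored", "PopQA"): {"color": "blue", "linestyle": "-"},
    ("A-Anchored", "PopQA"): {"color": "brown", "linestyle": "--"},
    ("Q-Anchored", "TriviaQA"): {"color": "green", "linestyle": "-"},
    ("A-Anchored", "TriviaQA"): {"color": "red", "linestyle": "--"},
    ("Q-Anchored", "HotpotQA"): {"color": "gray", "linestyle": "-"},
    ("A-Anchored", "HotpotQA"): {"color": "orange", "linestyle": "--"},
    ("Q-Anchored", "NQ"): {"color": "pink", "linestyle": "-."},
    ("A-Anchored", "NQ"): {"color": "black", "linestyle": ":"},
}


def legend_label(anchor: str, dataset: str) -> str:
    """Build the legend text in the same form the figure uses."""
    return f"{anchor} ({dataset})"


if __name__ == "__main__":
    for (anchor, dataset), style in SERIES_STYLES.items():
        print(legend_label(anchor, dataset), style)
```

Keying on the (anchoring method, dataset) pair keeps the two dimensions of the legend separate, which makes it easy to select, say, all Q-Anchored series at once.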

### Detailed Analysis

**Left Graph (Llama-3.2-1B):**

* **Q-Anchored (PopQA) - Solid Blue:** Starts at approximately 5% accuracy at layer 1, rises sharply to around 95% by layer 4, and then fluctuates between 80% and 100% for the remaining layers.

* **A-Anchored (PopQA) - Dashed Brown:** Remains relatively stable between 45% and 55% across all layers.

* **Q-Anchored (TriviaQA) - Solid Green:** Starts near 0% at layer 1, increases to approximately 60% by layer 7, and then fluctuates between 40% and 70% for the remaining layers.

* **A-Anchored (TriviaQA) - Dashed Red:** Starts around 50% at layer 1, dips to 20% at layer 4, and then fluctuates between 40% and 50% for the remaining layers.

* **Q-Anchored (HotpotQA) - Solid Gray:** Starts around 55% at layer 1 and fluctuates between 50% and 70% across all layers.

* **A-Anchored (HotpotQA) - Dashed Orange:** Starts around 50% at layer 1 and fluctuates between 40% and 50% across all layers.

* **Q-Anchored (NQ) - Dashed-dotted Pink:** Starts near 0% at layer 1, increases to approximately 70% by layer 7, and then fluctuates between 20% and 70% for the remaining layers.

* **A-Anchored (NQ) - Dotted Black:** Remains relatively stable between 50% and 60% across all layers.

**Right Graph (Llama-3.2-3B):**

* **Q-Anchored (PopQA) - Solid Blue:** Starts at approximately 5% accuracy at layer 1, rises sharply to around 95% by layer 4, and then fluctuates between 60% and 100% for the remaining layers.

* **A-Anchored (PopQA) - Dashed Brown:** Starts around 50% at layer 1, dips to 20% at layer 14, and then fluctuates between 30% and 50% for the remaining layers.

* **Q-Anchored (TriviaQA) - Solid Green:** Starts near 0% at layer 1, increases to approximately 90%, and then fluctuates between 70% and 90% for the remaining layers.

* **A-Anchored (TriviaQA) - Dashed Red:** Starts around 50% at layer 1, dips to 20% at layer 14, and then fluctuates between 20% and 40% for the remaining layers.

* **Q-Anchored (HotpotQA) - Solid Gray:** Starts around 55% at layer 1 and fluctuates between 50% and 70% across all layers.

* **A-Anchored (HotpotQA) - Dashed Orange:** Starts around 50% at layer 1, dips to 20% at layer 14, and then fluctuates between 20% and 40% for the remaining layers.

* **Q-Anchored (NQ) - Dashed-dotted Pink:** Starts near 0% at layer 1, increases to approximately 90% by layer 14, and then fluctuates between 60% and 90% for the remaining layers.

* **A-Anchored (NQ) - Dotted Black:** Remains relatively stable between 50% and 60% across all layers.
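
To compare the two anchoring methods at a glance, the later-layer ranges quoted in the bullets above can be reduced to midpoints. This is a rough sketch under stated assumptions: the numbers are the approximate ranges read from this description (not measured data), and `DESCRIBED_RANGES`, `midpoint`, and `q_minus_a_gaps` are hypothetical names introduced only for illustration.

```python
# Approximate later-layer accuracy ranges (percent) as quoted in the bullets above.
# Layout: (model, dataset) -> {"Q": (low, high), "A": (low, high)}
DESCRIBED_RANGES = {
    ("Llama-3.2-1B", "PopQA"): {"Q": (80, 100), "A": (45, 55)},
    ("Llama-3.2-1B", "TriviaQA"): {"Q": (40, 70), "A": (40, 50)},
    ("Llama-3.2-1B", "HotpotQA"): {"Q": (50, 70), "A": (40, 50)},
    ("Llama-3.2-1B", "NQ"): {"Q": (20, 70), "A": (50, 60)},
    ("Llama-3.2-3B", "PopQA"): {"Q": (60, 100), "A": (30, 50)},
    ("Llama-3.2-3B", "TriviaQA"): {"Q": (70, 90), "A": (20, 40)},
    ("Llama-3.2-3B", "HotpotQA"): {"Q": (50, 70), "A": (20, 40)},
    ("Llama-3.2-3B", "NQ"): {"Q": (60, 90), "A": (50, 60)},
}


def midpoint(value_range):
    """Midpoint of a (low, high) range."""
    low, high = value_range
    return (low + high) / 2


def q_minus_a_gaps(ranges):
    """Midpoint of the Q-Anchored range minus midpoint of the A-Anchored range."""
    return {
        key: midpoint(entry["Q"]) - midpoint(entry["A"])
        for key, entry in ranges.items()
    }


if __name__ == "__main__":
    for (model, dataset), gap in q_minus_a_gaps(DESCRIBED_RANGES).items():
        print(f"{model:13s} {dataset:9s} Q - A midpoint gap: {gap:+.1f}")
```

A positive gap means the Q-Anchored range sits above the A-Anchored one. Midpoints of eyeballed ranges are a coarse summary, so the output is only a sanity check on the description, not a result.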

### Key Observations

* **Q-Anchored (PopQA)** shows a rapid increase in accuracy in the initial layers for both models.

* **A-Anchored (PopQA)**, **A-Anchored (TriviaQA)**, and **A-Anchored (HotpotQA)** generally exhibit lower and more stable accuracy compared to their Q-Anchored counterparts.

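* The 3B graph spans more layers (x-axis up to 25) than the 1B graph (x-axis up to 15), consistent with the 3B model being deeper. The axis limits are tick positions and do not necessarily equal the exact layer counts.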

* The shaded regions around each line most likely indicate the variance or uncertainty in the accuracy measurements; the figure itself does not label them.

### Interpretation

The graphs illustrate the performance of Llama models on different question-answering tasks, highlighting the impact of anchoring methods (Q-Anchored vs. A-Anchored) and the specific dataset used (PopQA, TriviaQA, HotpotQA, NQ).

The Q-Anchored (PopQA) results suggest that, for PopQA, high answer accuracy is already reached within the first few layers of the model. The A-Anchored methods generally result in lower accuracy, indicating that anchoring on the answer might not be as effective as anchoring on the question for these tasks.

The difference in depth between Llama-3.2-1B and Llama-3.2-3B (x-axes extending to about layer 15 and layer 25, respectively) could contribute to the observed performance variations, especially for TriviaQA and NQ, where the 3B model appears to achieve higher Q-Anchored accuracy in later layers.

The shaded regions provide insight into the stability and reliability of the accuracy measurements. Wider shaded regions indicate greater variability in performance across different runs or samples.
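
One common way to produce such a shaded band is to plot the mean accuracy per layer with one standard deviation on either side. The sketch below assumes that construction; the figure does not state how its bands were actually computed, and `accuracy_band` and the toy input are hypothetical.

```python
from statistics import fmean, pstdev


def accuracy_band(runs):
    """Return (lower, mean, upper) per layer from several runs.

    `runs` is a list of runs, each a list of accuracies with one value per
    layer. The band is mean +/- one population standard deviation, which is
    an assumed convention, not something the figure specifies.
    """
    if not runs:
        raise ValueError("at least one run is required")
    n_layers = len(runs[0])
    if any(len(run) != n_layers for run in runs):
        raise ValueError("all runs must cover the same number of layers")

    band = []
    for layer_values in zip(*runs):  # values for one layer across all runs
        mean = fmean(layer_values)
        spread = pstdev(layer_values)
        band.append((mean - spread, mean, mean + spread))
    return band


if __name__ == "__main__":
    # Toy input for illustration only: two runs, two layers.
    toy_runs = [[50.0, 60.0], [70.0, 80.0]]
    for layer, (low, mean, high) in enumerate(accuracy_band(toy_runs)):
        print(f"layer {layer}: {low:.1f} .. {mean:.1f} .. {high:.1f}")
```

With this construction, a wider band at a given layer means the runs disagree more at that layer, which matches the reading of the shaded regions given above.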