
## Technical Document: Instructions for Evaluating GPT4's Pattern Inference

### Overview

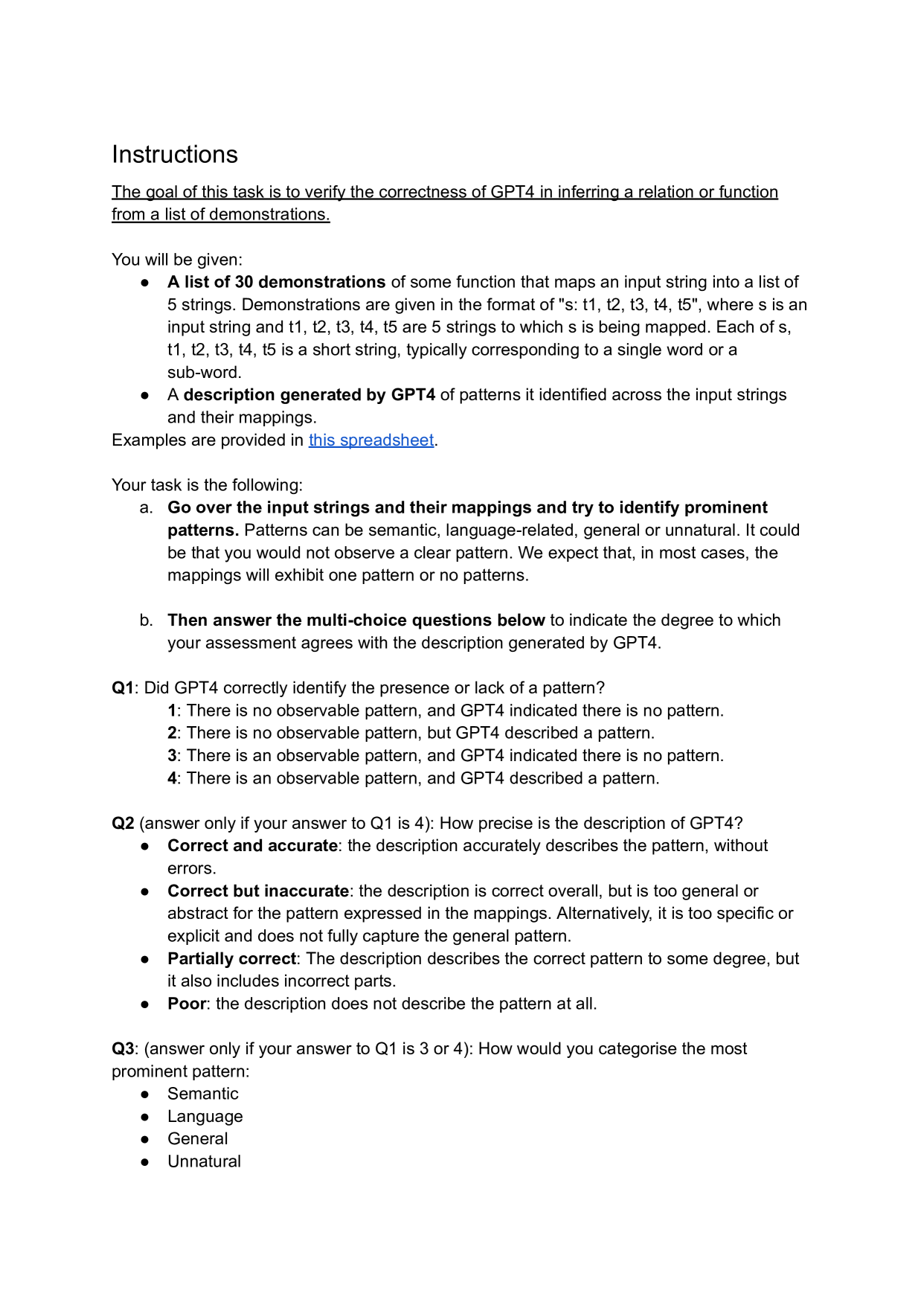

This image displays a set of instructions for a human evaluation task. The task's goal is to verify the correctness of GPT4 in inferring a relation or function from a list of demonstrations. The evaluator is provided with input-output mappings and a description generated by GPT4, and must assess the accuracy of GPT4's analysis.

### Components/Axes

The document is structured as follows:

* **Title:** "Instructions"

* **Goal Statement:** A single, underlined sentence defining the task's objective.

* **"You will be given:" Section:** A bulleted list describing the two materials provided to the evaluator.

* **"Your task is the following:" Section:** A two-step process (labeled a. and b.) for the evaluator to follow.

* **Question Block:** Three multi-choice questions (Q1, Q2, Q3). Q1 is always answered; Q2 and Q3 are answered only for certain Q1 answers.

* **Hyperlink:** The text "this spreadsheet" is a blue, underlined hyperlink.

### Detailed Analysis

The document contains the following precise text:

**Instructions**

<u>The goal of this task is to verify the correctness of GPT4 in inferring a relation or function from a list of demonstrations.</u>

You will be given:

* **A list of 30 demonstrations** of some function that maps an input string into a list of 5 strings. Demonstrations are given in the format of "s: t1, t2, t3, t4, t5", where s is an input string and t1, t2, t3, t4, t5 are 5 strings to which s is being mapped. Each of s, t1, t2, t3, t4, t5 is a short string, typically corresponding to a single word or a sub-word.

* **A description generated by GPT4** of patterns it identified across the input strings and their mappings.

Examples are provided in [this spreadsheet](https://docs.google.com/spreadsheets/).

Your task is the following:

a. **Go over the input strings and their mappings and try to identify prominent patterns.** Patterns can be semantic, language-related, general or unnatural. It could be that you would not observe a clear pattern. We expect that, in most cases, the mappings will exhibit one pattern or no patterns.

b. **Then answer the multi-choice questions below** to indicate the degree to which your assessment agrees with the description generated by GPT4.

**Q1: Did GPT4 correctly identify the presence or lack of a pattern?**

1: There is no observable pattern, and GPT4 indicated there is no pattern.

2: There is no observable pattern, but GPT4 described a pattern.

3: There is an observable pattern, and GPT4 indicated there is no pattern.

4: There is an observable pattern, and GPT4 described a pattern.

**Q2 (answer only if your answer to Q1 is 4): How precise is the description of GPT4?**

* **Correct and accurate:** the description accurately describes the pattern, without errors.

* **Correct but inaccurate:** the description is correct overall, but is too general or abstract for the pattern expressed in the mappings. Alternatively, it is too specific or explicit and does not fully capture the general pattern.

* **Partially correct:** The description describes the correct pattern to some degree, but it also includes incorrect parts.

* **Poor:** the description does not describe the pattern at all.

**Q3: (answer only if your answer to Q1 is 3 or 4): How would you categorise the most prominent pattern:**

* Semantic

* Language

* General

* Unnatural

### Key Observations

1. **Conditional Logic:** The questionnaire has built-in dependencies. Q2 is only to be answered if the evaluator selects option 4 for Q1. Q3 is only to be answered if the evaluator selects option 3 or 4 for Q1.

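2. **Pattern Taxonomy:** The instructions name four pattern categories in step a (semantic, language-related, general, and unnatural), and these reappear as the Q3 options Semantic, Language, General, and Unnatural. The text does not define any of the categories.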

3. **Task Structure:** The evaluator's job is two-fold: first, to perform an independent analysis of the raw data (30 demonstrations), and second, to compare their findings against GPT4's generated description.

4. **Data Format:** The demonstrations are strictly formatted as "s: t1, t2, t3, t4, t5", where each element is a short string (word or sub-word).

5. **Reference Material:** A hyperlink to a spreadsheet is provided for examples, though the link's destination is not visible in the image.
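
Observations 1 and 4 can be sketched in code. The following Python is a minimal illustration and is not part of the original instructions: the names `parse_demonstration` and `validate_response` are hypothetical, and splitting on the first colon and on commas is an assumption about how the "s: t1, t2, t3, t4, t5" format would be serialized.

```python
from typing import List, Optional, Tuple

NUM_TARGETS = 5  # each input string maps to 5 strings, per the instructions


def parse_demonstration(line: str) -> Tuple[str, List[str]]:
    """Parse one 's: t1, t2, t3, t4, t5' line into (s, [t1, ..., t5]).

    Assumption: the first colon separates s from the targets and the
    targets are comma-separated, so strings that themselves contain
    ':' or ',' would need a different encoding.
    """
    source, sep, rest = line.partition(":")
    if not sep:
        raise ValueError(f"missing ':' in demonstration: {line!r}")
    targets = [t.strip() for t in rest.split(",")]
    if len(targets) != NUM_TARGETS:
        raise ValueError(f"expected {NUM_TARGETS} targets, got {len(targets)}")
    return source.strip(), targets


def validate_response(q1: int, q2: Optional[str], q3: Optional[str]) -> bool:
    """Check the questionnaire's conditional logic.

    Q2 is answered only when Q1 is 4; Q3 only when Q1 is 3 or 4.
    A question that was skipped is represented as None.
    """
    if q1 not in (1, 2, 3, 4):
        return False
    q2_expected = q1 == 4
    q3_expected = q1 in (3, 4)
    return (q2 is not None) == q2_expected and (q3 is not None) == q3_expected
```

For example, `parse_demonstration("s: t1, t2, t3, t4, t5")` returns `("s", ["t1", "t2", "t3", "t4", "t5"])`, and `validate_response(2, "Poor", None)` returns `False` because Q2 must be left unanswered when Q1 is not 4.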

### Interpretation

This document outlines a protocol for human evaluation of the pattern descriptions produced by a large language model (GPT4). The task is designed to measure two aspects of the model's performance:

1. **Detection Accuracy:** Can the model correctly discern whether a pattern exists in a given dataset (Q1)?

2. **Description Fidelity:** When a pattern is detected, how accurately and precisely can the model describe it (Q2)?
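
The instructions do not say how answers are aggregated. One possible reading, shown here as a sketch under stated assumptions, treats the evaluator's judgment as the reference and "pattern present" as the positive class, so that the four Q1 options correspond to the cells of a confusion matrix. The function name `detection_summary` and the choice of metrics are hypothetical.

```python
from collections import Counter
from typing import Dict, Iterable

# Q1 option -> confusion-matrix cell (assumed mapping):
#   1: no pattern, GPT4 said none      -> true negative
#   2: no pattern, GPT4 described one  -> false positive
#   3: pattern, GPT4 said none         -> false negative
#   4: pattern, GPT4 described one     -> true positive
Q1_CELL = {1: "tn", 2: "fp", 3: "fn", 4: "tp"}


def detection_summary(q1_answers: Iterable[int]) -> Dict[str, float]:
    """Summarize Q1 answers as detection accuracy and error rates."""
    counts = Counter(Q1_CELL[a] for a in q1_answers)
    total = sum(counts.values())
    if total == 0:
        raise ValueError("no Q1 answers given")
    tp, tn, fp, fn = (counts[k] for k in ("tp", "tn", "fp", "fn"))
    return {
        "accuracy": (tp + tn) / total,
        # Rates are undefined (NaN) when their denominator is zero.
        "false_positive_rate": fp / (fp + tn) if fp + tn else float("nan"),
        "false_negative_rate": fn / (fn + tp) if fn + tp else float("nan"),
    }
```

Note that option 4 only records that GPT4 described some pattern; whether the description is right is captured separately by Q2, so a summary like this covers detection and not description fidelity.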

The inclusion of the "Unnatural" pattern category is noteworthy. It suggests that some mappings may follow rules that do not align with human semantic or linguistic intuition, possibly because they are synthetic or adversarial, although the instructions do not say how the mappings were produced. If so, the task tests rule induction over unfamiliar mappings and not only recall of familiar word associations.

The conditional structure ensures that Q2 and Q3 are answered only when they are meaningful: description precision is rated only when both the evaluator and GPT4 found a pattern, and the pattern category is recorded only when the evaluator observed one. Q2's four graded options go beyond a right/wrong binary by separating descriptions that are too general or too specific ("correct but inaccurate") from those that contain incorrect parts ("partially correct"), while Q3 records the evaluator's independent judgment of the pattern type. Together, the answers characterize the *nature* of the model's errors as well as their number. The likely aim is to identify failure modes in the model's pattern inference.