## Training Data Flow Diagram

### Overview

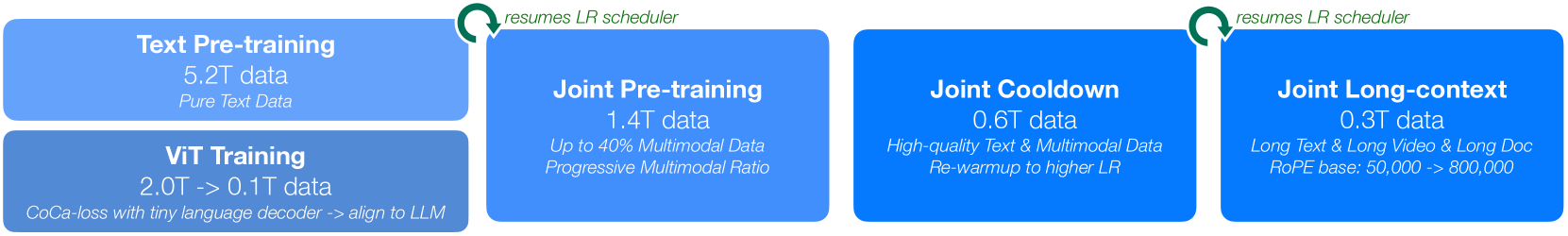

The image is a diagram of the data flow and stages of a training process, likely for a large multimodal model built on a large language model (LLM). It outlines five phases: Text Pre-training, ViT Training, Joint Pre-training, Joint Cooldown, and Joint Long-context. Each phase is shown as a blue rounded rectangle describing the data it uses, and the diagram also marks where the learning-rate (LR) scheduler resumes between phases.

### Components/Axes

* **Blue Rounded Rectangles:** Represent the different training phases.

* **Text within Rectangles:** Gives the phase name, the data budget (written as "T", most plausibly trillions of tokens rather than terabytes), and further details about the data or training process.

* **Green Arrows:** Indicate the flow of the training process and the resumption of the LR scheduler.
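
Because the arrows mark where the LR scheduler *resumes*, it may help to see what resuming means in code. The Python below is a minimal sketch, assuming a plain cosine schedule; the diagram gives no LR values or step counts, so `PEAK_LR`, `MIN_LR`, `TOTAL_STEPS`, `cosine_lr`, and the `ResumableScheduler` class are all hypothetical. Resuming restores the saved step so the next phase continues along the same curve; restarting would jump back to the peak LR.

```python
import math

# ASSUMPTION: hypothetical cosine schedule; the diagram gives no LR values or step counts.
PEAK_LR = 3e-4
MIN_LR = 3e-5
TOTAL_STEPS = 1000


def cosine_lr(step: int) -> float:
    """Cosine decay from PEAK_LR to MIN_LR over TOTAL_STEPS (no warmup)."""
    progress = min(step, TOTAL_STEPS) / TOTAL_STEPS
    return MIN_LR + 0.5 * (PEAK_LR - MIN_LR) * (1 + math.cos(math.pi * progress))


class ResumableScheduler:
    """Hypothetical helper that tracks the global step so it can be saved and restored."""

    def __init__(self, start_step: int = 0) -> None:
        self.step = start_step

    def next_lr(self) -> float:
        """Return the LR for the current step, then advance one step."""
        lr = cosine_lr(self.step)
        self.step += 1
        return lr

    def state_dict(self) -> dict:
        return {"step": self.step}

    @classmethod
    def from_state(cls, state: dict) -> "ResumableScheduler":
        return cls(start_step=state["step"])


# Phase A runs 400 steps and saves the scheduler state at the phase boundary.
phase_a = ResumableScheduler()
for _ in range(400):
    phase_a.next_lr()
saved = phase_a.state_dict()

# Resuming continues the same curve from step 400 ...
resumed = ResumableScheduler.from_state(saved)
# ... whereas restarting would jump back to PEAK_LR.
restarted = ResumableScheduler()

print(f"resumed LR:   {resumed.next_lr():.2e}")
print(f"restarted LR: {restarted.next_lr():.2e}")
```

Real training frameworks usually checkpoint scheduler state together with optimizer state; this toy class stores only the step counter.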

### Content Details

**1. Text Pre-training:**

* Data: 5.2T data

* Data Type: Pure Text Data

**2. ViT Training:**

* Data: 2.0T -> 0.1T data

* Details: CoCa-loss with tiny language decoder -> align to LLM
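
CoCa (Contrastive Captioners) trains an image encoder with two objectives at once: a contrastive image-text loss and a captioning loss produced by a text decoder, which here is the "tiny language decoder". The Python below is a minimal, dependency-free sketch of that combined objective, not the training code behind this diagram; the toy embeddings and logits, the temperature of 0.07, and the loss weights (1.0 contrastive, 2.0 captioning) are illustrative assumptions.

```python
import math
from typing import List, Sequence

Vector = Sequence[float]


def _normalize(v: Vector) -> List[float]:
    norm = math.sqrt(sum(x * x for x in v)) or 1.0  # guard against zero vectors
    return [x / norm for x in v]


def _log_softmax(logits: Sequence[float]) -> List[float]:
    m = max(logits)  # subtract the max for numerical stability
    log_z = m + math.log(sum(math.exp(x - m) for x in logits))
    return [x - log_z for x in logits]


def contrastive_loss(img: List[Vector], txt: List[Vector], temperature: float = 0.07) -> float:
    """Symmetric InfoNCE: the i-th image should match the i-th text in the batch."""
    img_n = [_normalize(v) for v in img]
    txt_n = [_normalize(v) for v in txt]
    n = len(img_n)
    sim = [[sum(a * b for a, b in zip(i, t)) / temperature for t in txt_n] for i in img_n]
    i2t = -sum(_log_softmax(sim[r])[r] for r in range(n)) / n  # image -> text rows
    t2i = -sum(_log_softmax([sim[r][c] for r in range(n)])[c] for c in range(n)) / n  # text -> image columns
    return 0.5 * (i2t + t2i)


def captioning_loss(token_logits: List[Vector], targets: List[int]) -> float:
    """Mean next-token cross-entropy of the (tiny) text decoder."""
    return -sum(_log_softmax(row)[t] for row, t in zip(token_logits, targets)) / len(targets)


def coca_loss(img, txt, token_logits, targets, w_con: float = 1.0, w_cap: float = 2.0) -> float:
    """Weighted sum of the two objectives (weights are assumptions)."""
    return w_con * contrastive_loss(img, txt) + w_cap * captioning_loss(token_logits, targets)


# Toy batch of two image/text pairs and a two-token caption over a 3-word vocabulary.
img = [[1.0, 0.0], [0.0, 1.0]]
txt = [[0.9, 0.1], [0.1, 0.9]]
logits = [[2.0, 0.5, 0.1], [0.2, 1.5, 0.3]]
targets = [0, 1]
print(round(coca_loss(img, txt, logits, targets), 4))
```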

**3. Joint Pre-training:**

* Data: 1.4T data

* Details: Up to 40% Multimodal Data, Progressive Multimodal Ratio

* Arrow: A green arrow indicates that the LR scheduler resumes after this phase.
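
The diagram says only that the multimodal ratio is progressive and reaches at most 40%; it does not say how it grows. The sketch below assumes a simple linear ramp from 0% to that 40% cap over the phase; `multimodal_ratio`, `batch_split`, the batch size of 256, and the 1,000-step phase length are hypothetical.

```python
from typing import Tuple


def multimodal_ratio(step: int, total_steps: int, start: float = 0.0, cap: float = 0.40) -> float:
    """ASSUMPTION: linear ramp of the multimodal share of each batch, capped at `cap`."""
    if total_steps <= 0:
        raise ValueError("total_steps must be positive")
    progress = min(max(step / total_steps, 0.0), 1.0)
    return start + (cap - start) * progress


def batch_split(batch_size: int, ratio: float) -> Tuple[int, int]:
    """Split a batch into (multimodal, text-only) sample counts."""
    n_mm = round(batch_size * ratio)
    return n_mm, batch_size - n_mm


for step in (0, 250, 500, 1000):
    r = multimodal_ratio(step, 1000)
    print(step, f"{r:.0%}", batch_split(256, r))
```

A linear ramp is only the simplest choice; a stepwise or cosine ramp would fit the word "progressive" equally well.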

**4. Joint Cooldown:**

* Data: 0.6T data

* Details: High-quality Text & Multimodal Data, Re-warmup to higher LR
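
The Joint Cooldown entry mentions a re-warmup to a higher LR. A minimal sketch of that shape, assuming a linear ramp from the previous stage's final LR up to a new peak followed by cosine decay, is below; `rewarmup_then_decay` and every LR value and step count are hypothetical, since the diagram gives none.

```python
import math


def rewarmup_then_decay(step: int, start_lr: float, peak_lr: float, final_lr: float,
                        warmup_steps: int, total_steps: int) -> float:
    """Ramp linearly from start_lr up to peak_lr, then cosine-decay to final_lr."""
    if step < warmup_steps:
        return start_lr + (peak_lr - start_lr) * step / warmup_steps
    decay_steps = max(total_steps - warmup_steps, 1)
    progress = min((step - warmup_steps) / decay_steps, 1.0)
    return final_lr + 0.5 * (peak_lr - final_lr) * (1 + math.cos(math.pi * progress))


# ASSUMPTION: the previous stage ended at 2e-5; this stage re-warms to 1e-4 over 100 steps.
schedule = [
    rewarmup_then_decay(s, 2e-5, 1e-4, 1e-5, warmup_steps=100, total_steps=1000)
    for s in range(1000)
]
print(f"start {schedule[0]:.1e}, peak {max(schedule):.1e}, end {schedule[-1]:.1e}")
```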

**5. Joint Long-context:**

* Data: 0.3T data

* Details: Long Text & Long Video & Long Doc, RoPE base: 50,000 -> 800,000

* Arrow: A green arrow indicates that the LR scheduler resumes after this phase.
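
RoPE rotates each pair of query/key dimensions at a rate of base^(-2i/d), so raising the base slows the slowest rotations and roughly lengthens the span over which positions remain distinguishable. The sketch below computes the longest rotation wavelength for both bases in the diagram; the head dimension of 128 is an assumption, since the diagram does not give one.

```python
import math
from typing import List


def rope_inv_freq(base: float, head_dim: int) -> List[float]:
    """Standard RoPE rotation rates: theta_i = base ** (-2i / head_dim), i in [0, head_dim / 2)."""
    if head_dim % 2:
        raise ValueError("head_dim must be even")
    return [base ** (-2 * i / head_dim) for i in range(head_dim // 2)]


def longest_wavelength(base: float, head_dim: int) -> float:
    """Positions needed for the slowest-rotating pair to complete one full turn."""
    return 2 * math.pi / min(rope_inv_freq(base, head_dim))


HEAD_DIM = 128  # ASSUMPTION: the diagram does not state the head dimension
for base in (50_000, 800_000):
    print(f"base={base:>7,}: longest wavelength ~ {longest_wavelength(base, HEAD_DIM):,.0f} positions")
```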

### Key Observations

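* Apart from the ViT Training phase, the data budget shrinks at every step, from 5.2T in Text Pre-training to 0.3T in Joint Long-context.

* The data shifts from pure text to a mix of text and multimodal data, with multimodal data making up at most 40% during Joint Pre-training.

* The ViT Training entry reads 2.0T -> 0.1T, which most plausibly pairs with its two listed steps: 2.0T for CoCa-loss training with a tiny language decoder, then 0.1T for aligning the ViT to the LLM.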

* The LR scheduler is resumed after the Joint Pre-training and Joint Long-context phases.
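
The shrinking budgets can be checked with a few lines of Python over the numbers printed in the diagram. Splitting ViT Training's "2.0T -> 0.1T" into two sub-steps is an assumption, and the "T" unit is carried over as written.

```python
from typing import List, Sequence, Tuple

# Budgets as printed in the diagram, in its "T" unit.
# ASSUMPTION: ViT Training's "2.0T -> 0.1T" is treated as two sub-steps.
STAGES: List[Tuple[str, float]] = [
    ("Text Pre-training", 5.2),
    ("ViT Training: CoCa", 2.0),
    ("ViT Training: align to LLM", 0.1),
    ("Joint Pre-training", 1.4),
    ("Joint Cooldown", 0.6),
    ("Joint Long-context", 0.3),
]


def total(stages: Sequence[Tuple[str, float]]) -> float:
    """Sum of the listed budgets."""
    return sum(budget for _, budget in stages)


def is_non_increasing(values: Sequence[float]) -> bool:
    """True if each value is no larger than the one before it."""
    return all(a >= b for a, b in zip(values, values[1:]))


# Stages other than the separate ViT phase.
llm_path = [s for s in STAGES if not s[0].startswith("ViT")]

print(f"all listed stages: {total(STAGES):.1f}T")
print(f"without ViT: {total(llm_path):.1f}T, shrinking: {is_non_increasing([b for _, b in llm_path])}")
print(f"with ViT sub-steps, shrinking: {is_non_increasing([b for _, b in STAGES])}")
```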

### Interpretation

The diagram describes a multi-stage training process for what is likely a large multimodal model. It begins with a large pure-text pre-training phase, trains the vision encoder in its own phase, and then moves to joint phases that mix in multimodal data and extend the model to long inputs (long text, long video, and long documents).

The techniques named in the boxes each serve a specific stage rather than a general efficiency goal: the CoCa loss with a tiny language decoder pre-trains the ViT before it is aligned to the LLM, and raising the RoPE base from 50,000 to 800,000 lowers the positional rotation frequencies so the encoding stretches over longer sequences. The shrinking data budgets suggest that later phases refine an already-trained model rather than train from scratch. Resuming the LR scheduler, as opposed to restarting it, keeps the learning-rate schedule continuous across phase boundaries, while the re-warmup noted for Joint Cooldown deliberately raises the LR again. Overall, the progression from pure text to mixed text and multimodal data aims at a model that can process and understand diverse types of input.