
## Diagram: Text-to-Knowledge Alignment with LLMs

### Overview

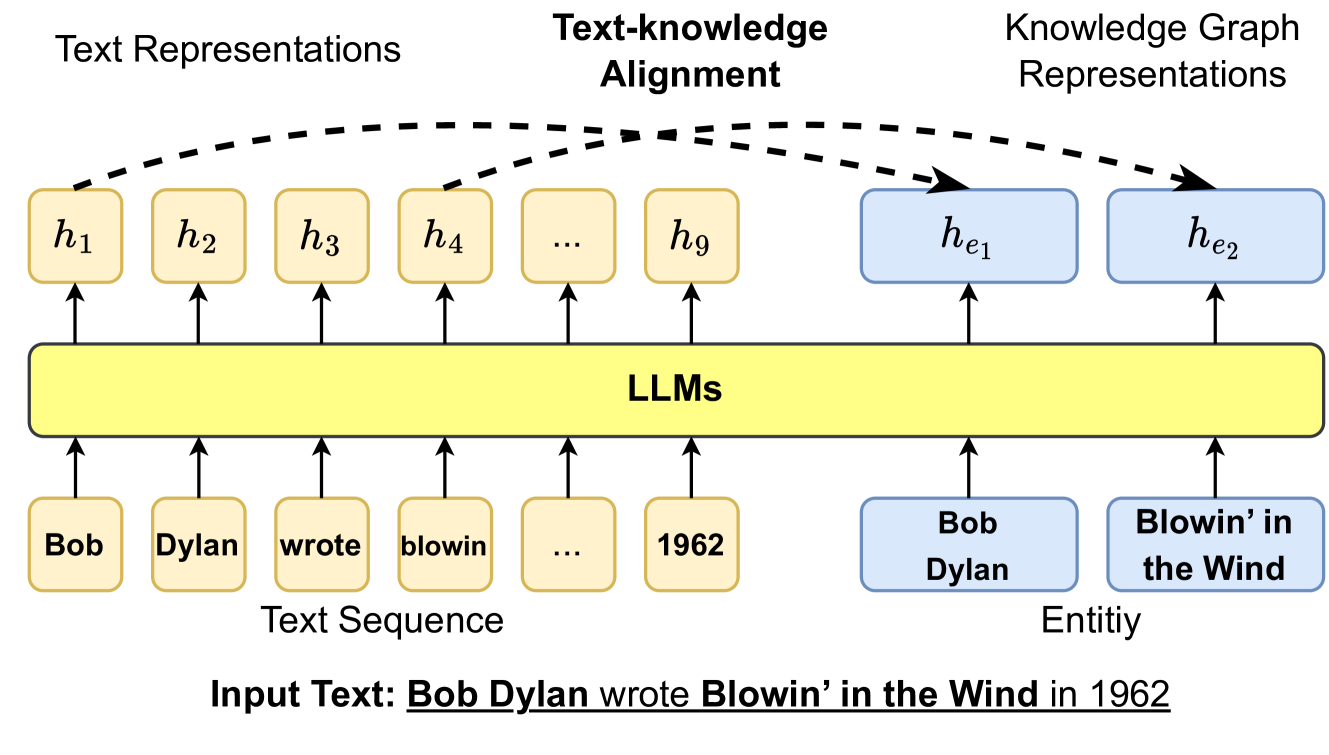

This diagram illustrates the process of aligning text representations with knowledge graph representations using Large Language Models (LLMs). It depicts how an input text sequence is processed by LLMs to generate text representations, which are then aligned with corresponding entities in a knowledge graph.

### Components/Axes

The diagram consists of three main horizontal sections: "Text Representations", "Text-knowledge Alignment", and "Knowledge Graph Representations". Below these are two further sections, "Text Sequence" and "Entity". The central component is a yellow rectangle labeled "LLMs".

* **Text Representations:** Contains a series of labeled nodes: h1, h2, h3, h4, ..., h9.

* **Text-knowledge Alignment:** A dashed line connecting the "Text Representations" and "Knowledge Graph Representations" sections, labeled "Text-knowledge Alignment".

* **Knowledge Graph Representations:** Contains a series of labeled nodes: he1, he2.

* **Text Sequence:** Contains the following words in yellow rounded rectangles: "Bob", "Dylan", "wrote", "blowin'", "...", "1962".

* **Entity:** Contains the following entities in green rounded rectangles: "Bob Dylan", "Blowin' in the Wind".

* **LLMs:** A large yellow rectangle in the center, acting as the processing unit.

* **Input Text:** "Bob Dylan wrote Blowin' in the Wind in 1962"

### Detailed Analysis or Content Details

The diagram shows the following relationships:

* "Bob" in the Text Sequence is connected to h1 in Text Representations via an upward arrow.

* "Dylan" in the Text Sequence is connected to h2 in Text Representations via an upward arrow.

* "wrote" in the Text Sequence is connected to h3 in Text Representations via an upward arrow.

* "blowin'" in the Text Sequence is connected to h4 in Text Representations via an upward arrow.

* "..." in the Text Sequence is connected to an unspecified number of h nodes in Text Representations.

* "1962" in the Text Sequence is connected to h9 in Text Representations via an upward arrow.

* "Bob Dylan" in the Entity section is connected to he1 in Knowledge Graph Representations via an upward arrow.

* "Blowin' in the Wind" in the Entity section is connected to he2 in Knowledge Graph Representations via an upward arrow.

* The LLMs rectangle receives input from both the Text Sequence and the Entity section, consistent with the upward arrows listed above.

* The dashed line "Text-knowledge Alignment" indicates a connection between the Text Representations and Knowledge Graph Representations.
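The connections listed above can be written down as a small lookup structure. This is only a sketch for reading the diagram: the dictionary names and the `describe` helper are illustrative and do not come from the diagram, and the `"..."` entry is kept as-is because the diagram does not show the elided tokens or their node labels.

```python
# Edges as described in the list above. Names here are illustrative only.
# The "..." entry is kept verbatim: the diagram elides those tokens.
token_to_hidden = {
    "Bob": "h1",
    "Dylan": "h2",
    "wrote": "h3",
    "blowin'": "h4",
    "...": "...",
    "1962": "h9",
}

entity_to_hidden = {
    "Bob Dylan": "he1",
    "Blowin' in the Wind": "he2",
}


def describe(mapping):
    """Render each source -> representation edge as a readable string."""
    return [f"{src} -> {dst}" for src, dst in mapping.items()]


for line in describe(token_to_hidden) + describe(entity_to_hidden):
    print(line)
```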

### Key Observations

The diagram highlights the transformation of the text sequence and the entities into vector representations (h1 to h9 for tokens, he1 and he2 for entities) by the LLM. The "..." elides the intermediate tokens and suggests that the text sequence can be of variable length. The alignment process aims to link textual information with the corresponding entities in a knowledge graph.

### Interpretation

This diagram illustrates a core concept in modern Natural Language Processing (NLP): grounding language in structured knowledge. The LLM acts as a bridge, converting text into a format suitable for reasoning and knowledge retrieval. The alignment process matters for tasks such as question answering, information extraction, and semantic search. The diagram suggests that the LLM learns to represent both the text and the entities in a shared embedding space, which is what makes the alignment possible. The "h" and "he" notation likely denotes hidden states or embeddings for text tokens and for knowledge graph entities, respectively. The diagram gives no data or numerical values; it is a conceptual overview of the process.
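To make the idea of a shared embedding space concrete, here is a minimal sketch of one possible way to score alignment. The diagram does not specify an alignment objective, so everything here is an assumption: the three-dimensional vectors are hand-picked toy values rather than real model outputs, mean pooling over a mention's tokens and cosine similarity are illustrative choices, and `mean_pool`, `cosine`, and `align` are hypothetical helper names.

```python
import math


def mean_pool(vectors):
    """Average a list of equal-length vectors element-wise."""
    n = len(vectors)
    return [sum(col) / n for col in zip(*vectors)]


def cosine(u, v):
    """Cosine similarity between two non-zero vectors."""
    dot = sum(a * b for a, b in zip(u, v))
    norm_u = math.sqrt(sum(a * a for a in u))
    norm_v = math.sqrt(sum(b * b for b in v))
    return dot / (norm_u * norm_v)


# Toy, hand-picked vectors standing in for LLM outputs (assumption, not data).
text_reps = {
    "h1": [0.9, 0.1, 0.0],  # "Bob"
    "h2": [0.8, 0.2, 0.1],  # "Dylan"
    "h4": [0.1, 0.9, 0.2],  # "blowin'"
}
entity_reps = {
    "he1": [1.0, 0.0, 0.0],  # "Bob Dylan"
    "he2": [0.0, 1.0, 0.0],  # "Blowin' in the Wind"
}


def align(span, text_reps, entity_reps):
    """Return the entity id most similar to the mean-pooled token span."""
    pooled = mean_pool([text_reps[name] for name in span])
    return max(entity_reps, key=lambda e: cosine(pooled, entity_reps[e]))


print(align(["h1", "h2"], text_reps, entity_reps))  # span for "Bob Dylan"
print(align(["h4"], text_reps, entity_reps))        # span for "blowin'"
```

With these toy values the span `["h1", "h2"]` scores highest against `he1` and `["h4"]` against `he2`. A trained system would learn the vectors so that matching text and entity pairs end up close together, rather than having them set by hand.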