## Diagram: Echo State Network / Reservoir Computing Architecture

### Overview

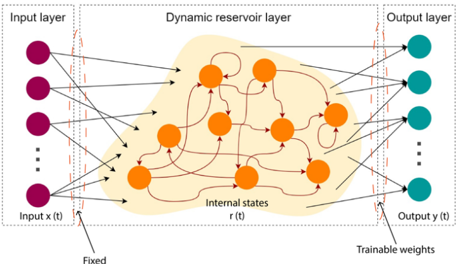

The image is a schematic of a reservoir computing model, specifically an Echo State Network (ESN). It shows information flowing from an input layer, through a recurrently connected "dynamic reservoir" layer, to an output layer, and it distinguishes the network's fixed components from its trainable ones.

### Components

The diagram is organized into three primary vertical sections, demarcated by dashed gray boxes:

1. **Input layer (Left Section):**

    * **Visual Elements:** A vertical column of four solid purple circles, with a vertical ellipsis (`...`) below the third circle, indicating additional inputs.

    * **Labels:**

        * Top label: `Input layer`

        * Bottom label: `Input x(t)`

        * Annotation below the input column: `Fixed` (with an arrow pointing to the connections leaving the input layer).

2. **Dynamic reservoir layer (Center Section):**

    * **Visual Elements:** A large, irregularly shaped, light-orange cloud containing eight solid orange circles (neurons). These neurons are interconnected by a complex web of red arrows, indicating recurrent connections. Some arrows loop back to the same neuron.

    * **Labels:**

        * Top label: `Dynamic reservoir layer`

        * Label inside the cloud, near the bottom: `Internal states r(t)`

3. **Output layer (Right Section):**

    * **Visual Elements:** A vertical column of four solid teal circles, with a vertical ellipsis (`...`) below the third circle, indicating additional outputs.

    * **Labels:**

        * Top label: `Output layer`

        * Bottom label: `Output y(t)`

        * Annotation below the output column: `Trainable weights` (with an arrow pointing to the connections entering the output layer).

**Connections and Flow:**

* **Input to Reservoir:** Black arrows originate from each purple input neuron and point to multiple orange neurons within the reservoir. The `Fixed` label indicates these input-to-reservoir connection weights are not adjusted during training.

* **Reservoir Recurrence:** Red arrows form a dense, recurrent network within the reservoir cloud, connecting orange neurons to each other.

* **Reservoir to Output:** Black arrows originate from various orange neurons within the reservoir and point to the teal output neurons. The `Trainable weights` label indicates these reservoir-to-output connection weights are adjusted during training.
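
The three groups of connections described above can be sketched as three weight matrices. This is a minimal illustration rather than the diagram's own specification: the names `W_in`, `W`, and `W_out`, the `tanh` activation, the uniform initialization range, and the helper functions are all assumptions. The sizes follow what the diagram draws (four inputs, eight reservoir neurons, four outputs), although the ellipses indicate the real input and output counts may be larger.

```python
import numpy as np

rng = np.random.default_rng(0)

# Sizes as drawn in the diagram (assumed; the ellipses allow more inputs/outputs).
n_in, n_res, n_out = 4, 8, 4

# Input-to-reservoir weights (black arrows on the left): fixed, never trained.
W_in = rng.uniform(-0.5, 0.5, size=(n_res, n_in))

# Recurrent reservoir weights (red arrows); the diagonal holds the self-loops.
W = rng.uniform(-0.5, 0.5, size=(n_res, n_res))

# Reservoir-to-output weights (black arrows on the right): the trainable part.
# Initialized to zero here as a placeholder until training sets them.
W_out = np.zeros((n_out, n_res))


def reservoir_step(r, x):
    """Compute the next internal state from the previous state r and input x."""
    return np.tanh(W @ r + W_in @ x)


def readout(r):
    """Map the internal state r(t) to the output y(t)."""
    return W_out @ r


r = np.zeros(n_res)              # initial internal state
x = rng.standard_normal(n_in)    # one input sample x(t)
r = reservoir_step(r, x)         # updated internal state r(t)
y = readout(r)                   # output y(t)
```

Because `W_out` starts at zero in this sketch, `y` is all zeros until the readout is trained; only the shapes and the direction of data flow are meaningful here.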

### Detailed Analysis

* **Spatial Grounding:** The `Fixed` label is positioned at the bottom-left of the diagram, directly associated with the input-to-reservoir connections. The `Trainable weights` label is at the bottom-right, associated with the reservoir-to-output connections. The `Internal states r(t)` label sits inside the reservoir cloud, horizontally centered near its bottom.

* **Component Isolation:**

    * **Header Region:** Contains the three main layer titles.

    * **Main Diagram Region:** Contains the visual representation of neurons (circles) and connections (arrows) across the three layers.

    * **Footer Region:** Contains the key annotations `Fixed` and `Trainable weights`.

* **Trend/Flow Verification:** Between layers, information flows in one direction, from left to right: `Input x(t)` → `Dynamic reservoir layer (Internal states r(t))` → `Output y(t)`. No arrows run from the output back to the reservoir or from the reservoir back to the input; all recurrence is confined to the connections inside the central reservoir.

### Key Observations

1. **Architectural Dichotomy:** The core design principle highlighted is the separation of the network into a fixed, complex, nonlinear dynamical system (the reservoir) and a simple, trainable linear readout (the output layer).

2. **Recurrent Complexity:** The reservoir is drawn as a dense web of recurrent connections (red arrows), including self-loops, rather than as an ordered, layered structure. These recurrent dynamics give the model a memory of past inputs, which is what lets it process temporal sequences.

3. **State Representation:** The label `Internal states r(t)` explicitly identifies the reservoir's activity vector as the dynamic state that is used by the output layer.

4. **Scalability Hint:** The vertical ellipses (`...`) in both the input and output layers indicate that the diagram is a simplified representation and the actual number of input and output dimensions can be larger.

### Interpretation

This diagram is a canonical representation of the Echo State Network paradigm within reservoir computing. It visually encodes the core hypothesis of this approach: that a fixed, randomly connected, and nonlinear recurrent neural network (the reservoir) can project input signals into a high-dimensional dynamical space. The temporal patterns within this space (`r(t)`) are then linearly combined by the trainable output weights to produce the desired output `y(t)`.
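
In symbols, one common way to write this is given below. The matrix names $W_{\text{in}}$, $W$, and $W_{\text{out}}$ and the choice of $\tanh$ as the nonlinearity are assumptions for illustration; the diagram itself labels only `x(t)`, `r(t)`, and `y(t)`.

$$
\mathbf{r}(t) = \tanh\big(W\,\mathbf{r}(t-1) + W_{\text{in}}\,\mathbf{x}(t)\big),
\qquad
\mathbf{y}(t) = W_{\text{out}}\,\mathbf{r}(t)
$$

Here $W_{\text{in}}$ and $W$ stay fixed after random initialization, and only $W_{\text{out}}$ is fitted to data.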

The key takeaway is **efficiency through constraint**. Fixing most of the network's parameters (the input and internal reservoir connections) and training only the output layer avoids the costly and often unstable procedures, such as backpropagation through time, required for fully trainable recurrent networks. The useful computation happens in the rich dynamics of the reservoir; the output layer only learns to read them out. The contrast between the `Fixed` and `Trainable weights` annotations is therefore the most important information in the diagram, because it defines how this architecture learns.
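
To make the "train only the readout" idea concrete, here is a minimal sketch that fits the output weights with closed-form ridge regression, a common choice for ESN readouts. Everything specific in it is an assumption for illustration and not taken from the diagram: the toy sine-wave task, the reservoir size, the spectral-radius rescaling to 0.9, the washout length, and the ridge strength.

```python
import numpy as np

rng = np.random.default_rng(42)
n_in, n_res, n_out, n_steps = 1, 50, 1, 500

# Fixed weights: drawn once and never updated during training.
W_in = rng.uniform(-0.5, 0.5, size=(n_res, n_in))
W = rng.uniform(-0.5, 0.5, size=(n_res, n_res))
# Rescale so the largest eigenvalue magnitude is 0.9, a common heuristic
# (assumed here) for keeping the reservoir dynamics stable.
W *= 0.9 / np.max(np.abs(np.linalg.eigvals(W)))

# Toy task (assumed): predict the next sample of a sine wave.
signal = np.sin(0.1 * np.arange(n_steps + 1))
X = signal[:-1].reshape(-1, n_in)    # inputs x(t)
Y = signal[1:].reshape(-1, n_out)    # targets for y(t)

# Drive the reservoir with the input and record every internal state r(t).
R = np.zeros((n_steps, n_res))
r = np.zeros(n_res)
for t in range(n_steps):
    r = np.tanh(W @ r + W_in @ X[t])
    R[t] = r

# Discard the initial transient, then fit only the readout weights
# by solving the regularized normal equations (ridge regression).
washout, ridge = 50, 1e-6
R_fit, Y_fit = R[washout:], Y[washout:]
W_out = np.linalg.solve(
    R_fit.T @ R_fit + ridge * np.eye(n_res),
    R_fit.T @ Y_fit,
).T                                   # shape (n_out, n_res)

Y_pred = R_fit @ W_out.T
mse = np.mean((Y_pred - Y_fit) ** 2)  # training error of the linear readout
```

No gradients flow through `W` or `W_in` at any point: training reduces to a single linear solve over the recorded states, which is the efficiency the `Fixed` and `Trainable weights` labels point to.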