
## Diagram: RAG Bot vs. Generic Bot Prompt Comparison

### Overview

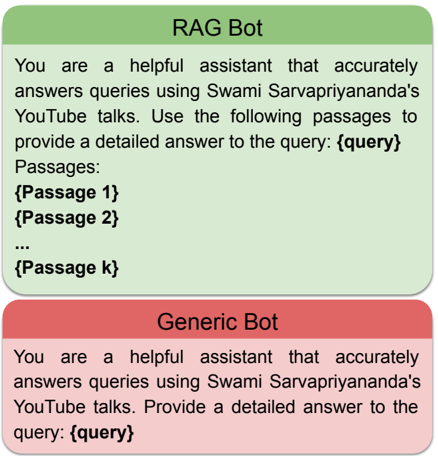

The image presents a comparison between the prompts used for a Retrieval-Augmented Generation (RAG) Bot and a Generic Bot. The comparison is visually represented as two rectangular blocks, one green for the RAG Bot and one red for the Generic Bot, stacked vertically. Each block contains the text of the prompt used for the respective bot.

### Components

The diagram consists of two main components:

* **RAG Bot Block:** A green rectangle at the top.

* **Generic Bot Block:** A red rectangle at the bottom.

Each block contains text defining the bot's role and instructions. The variable `{query}` appears in both prompts.

### Content Details

**RAG Bot Prompt (Green Block):**

"You are a helpful assistant that accurately answers queries using Swami Sarvapriyananda's YouTube talks. Use the following passages to provide a detailed answer to the query: {query}

Passages:

{Passage 1}

{Passage 2}

...

{Passage k}"

**Generic Bot Prompt (Red Block):**

"You are a helpful assistant that accurately answers queries using Swami Sarvapriyananda's YouTube talks. Provide a detailed answer to the query: {query}"

### Key Observations

The two prompts share the same role statement and the same `{query}` placeholder. The RAG Bot prompt differs in two ways: it adds the instruction "Use the following passages to" before "provide a detailed answer", and it appends a "Passages:" section with placeholders `{Passage 1}`, `{Passage 2}`, ..., `{Passage k}`. The RAG Bot is therefore given retrieved passages to draw on when formulating its response, while the Generic Bot receives no passages in its prompt.
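The difference in prompt structure can be sketched in a few lines of Python. This is a minimal illustration, not the implementation behind the diagram: the template wording is copied from the two blocks, while the function names, the `{passages}` slot, and the blank-line separator between passages are assumptions made for the example.

```python
from typing import Sequence

# Template text copied from the diagram; the {passages} slot is an
# assumption standing in for {Passage 1} ... {Passage k}.
RAG_TEMPLATE = (
    "You are a helpful assistant that accurately answers queries using "
    "Swami Sarvapriyananda's YouTube talks. Use the following passages to "
    "provide a detailed answer to the query: {query}\n\n"
    "Passages:\n\n{passages}"
)

GENERIC_TEMPLATE = (
    "You are a helpful assistant that accurately answers queries using "
    "Swami Sarvapriyananda's YouTube talks. Provide a detailed answer to "
    "the query: {query}"
)


def build_generic_prompt(query: str) -> str:
    """Fill the generic template; no retrieved text is included."""
    return GENERIC_TEMPLATE.format(query=query)


def build_rag_prompt(query: str, passages: Sequence[str]) -> str:
    """Fill the RAG template with the query and k retrieved passages.

    Joining passages with a blank line is an assumed layout that mirrors
    the spacing shown in the diagram.
    """
    return RAG_TEMPLATE.format(query=query, passages="\n\n".join(passages))
```

Calling `build_rag_prompt(query, passages)` with a list of any length covers the variable passage count that the diagram indicates with "..." and `{Passage k}`. How the passages are retrieved is outside the scope of the diagram and of this sketch.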

### Interpretation

The diagram illustrates the core distinction between a standard Large Language Model (LLM) and a RAG-enhanced LLM. The Generic Bot prompt names Swami Sarvapriyananda's YouTube talks as the source but supplies none of their content, so the model must rely solely on its pre-trained knowledge to answer. The RAG Bot is instead augmented with external knowledge retrieved from that source and provided as context within the prompt. This is intended to make its answers more accurate and contextually relevant, especially for queries that require information not readily available in the LLM's training data.

The `{query}` placeholder in both prompts shows that both bots respond to the same user input; only the RAG Bot's response is informed by the provided passages. The "..." and the index k in the RAG Bot prompt indicate that the number of passages can vary. This is a conceptual diagram of prompt structure and presents no data.