
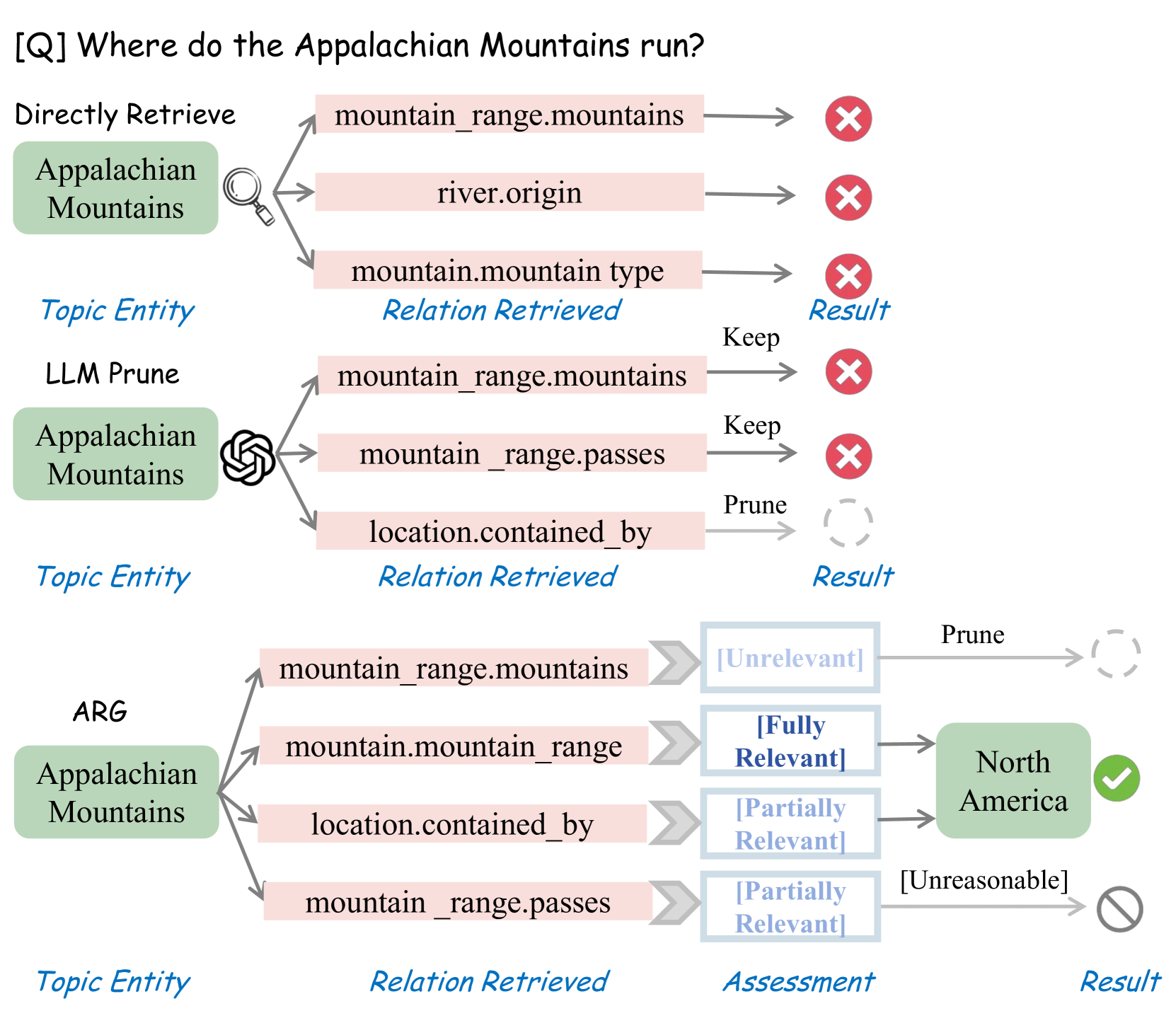

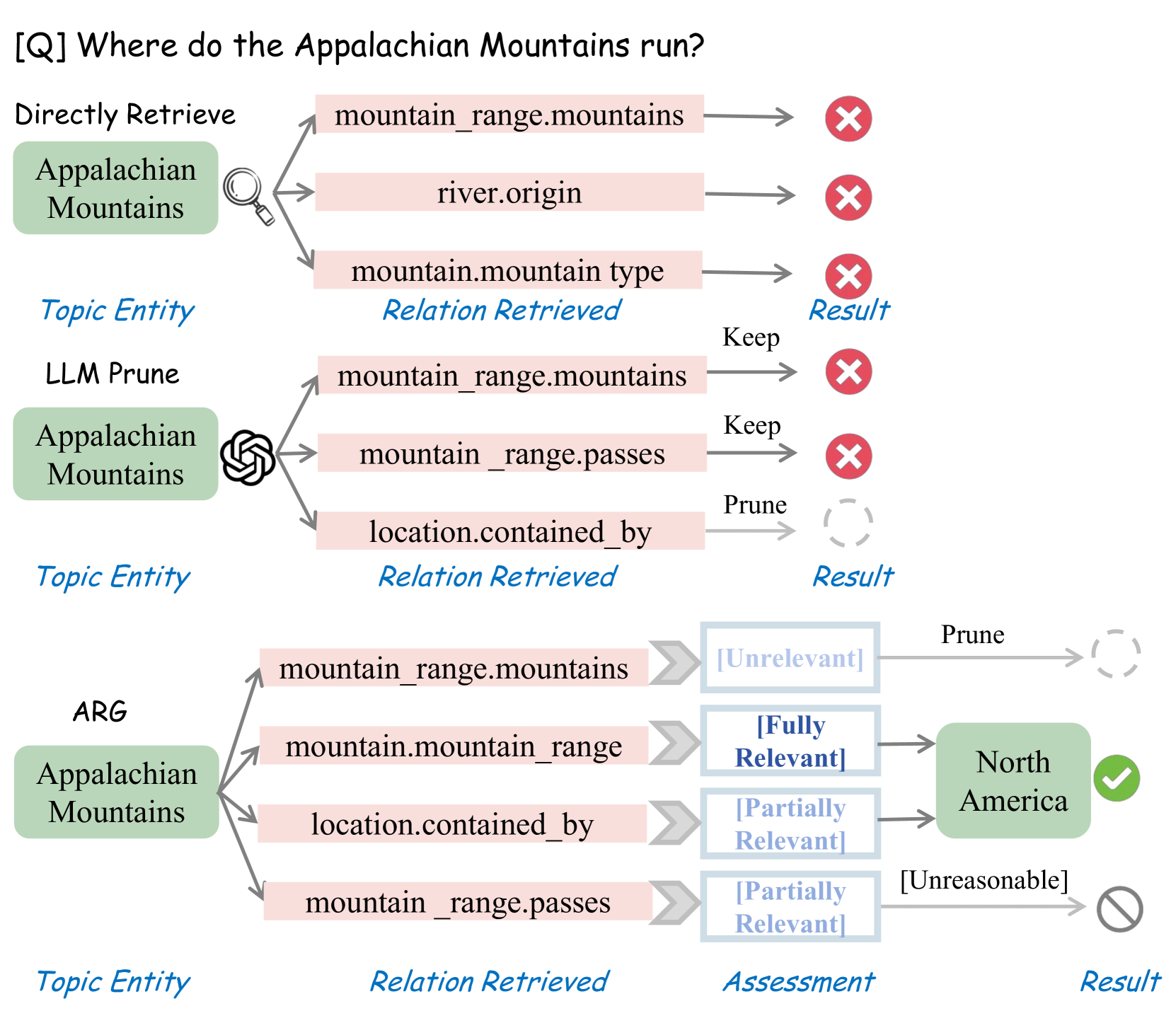

## Diagram: Comparison of Information Retrieval Methods for a Knowledge Graph Query

### Overview

The image is a technical diagram comparing three methods for answering the factual question **"[Q] Where do the Appalachian Mountains run?"**: "Directly Retrieve", "LLM Prune", and "ARG" (the acronym is not expanded in the diagram; it is likely Augmented Retrieval/Generation). The diagram is divided into three horizontal sections, one per method, each showing the flow from a topic entity through retrieved relations to a final result.

### Components/Axes

The diagram is organized into three main horizontal lanes, each with a consistent column structure defined by labels at the bottom:

1. **Topic Entity** (Leftmost column): The subject of the query, "Appalachian Mountains," presented in a light green rounded rectangle.

2. **Relation Retrieved** (Middle column): A list of potential knowledge graph relations connected to the topic entity, shown in light pink rectangles.

3. **Assessment** (Present only in the ARG section): A column evaluating the relevance of each retrieved relation.

4. **Result** (Rightmost column): The outcome of the process for each relation or the final answer, indicated by icons (red X, green checkmark, grey prohibition sign, or a dashed circle for pruned items).

**Legend/Key:** The column labels ("Topic Entity", "Relation Retrieved", "Assessment", "Result") are written in blue italic text at the bottom of the diagram, serving as a key for the columns above.

### Detailed Analysis

#### **Section 1: Directly Retrieve**

* **Process:** The topic entity "Appalachian Mountains" is used to directly retrieve relations from a knowledge base.

* **Retrieved Relations & Results:**

1. `mountain_range.mountains` -> Result: Red X (Incorrect/Irrelevant).

2. `river.origin` -> Result: Red X (Incorrect/Irrelevant).

3. `mountain.mountain type` -> Result: Red X (Incorrect/Irrelevant).

* **Outcome:** All three directly retrieved relations lead to incorrect or irrelevant results for answering the question.
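The behavior described for "Directly Retrieve" can be illustrated with a minimal sketch. The relation names are copied verbatim from the list above; the `RELATION_INDEX` lookup table and the `directly_retrieve` function are hypothetical stand-ins for a knowledge base, not something shown in the diagram.

```python
from __future__ import annotations

# Hypothetical toy index: topic entity -> relations attached to it.
# Relation names are copied verbatim from the diagram description.
RELATION_INDEX: dict[str, list[str]] = {
    "Appalachian Mountains": [
        "mountain_range.mountains",
        "river.origin",
        "mountain.mountain type",
    ],
}


def directly_retrieve(topic_entity: str, question: str) -> list[str]:
    """Return every relation attached to the entity.

    The question is accepted but never consulted, which is the point of
    the sketch: nothing filters relations by relevance to the query.
    """
    return list(RELATION_INDEX.get(topic_entity, []))


relations = directly_retrieve(
    "Appalachian Mountains", "Where do the Appalachian Mountains run?"
)
print(relations)
```

Because the question plays no role in the lookup, every relation attached to the entity comes back, whether or not it helps answer the question.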

#### **Section 2: LLM Prune**

* **Process:** The topic entity "Appalachian Mountains" is processed by an LLM (indicated by a brain-like icon) to prune retrieved relations.

* **Retrieved Relations, Actions, & Results:**

1. `mountain_range.mountains` -> Action: "Keep" -> Result: Red X (Incorrect/Irrelevant).

2. `mountain_range.passes` -> Action: "Keep" -> Result: Red X (Incorrect/Irrelevant).

3. `location.contained_by` -> Action: "Prune" -> Result: Dashed circle (Relation discarded).

* **Outcome:** The LLM-based pruning keeps two relations that are still incorrect and prunes a potentially relevant one (`location.contained_by`).
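A keep/prune filter of this kind might look like the sketch below. The real LLM call is not described in the diagram, so `replay_decision` is a hypothetical stand-in that simply replays the three decisions listed above; `llm_prune` and `DIAGRAM_DECISIONS` are likewise illustrative names.

```python
from __future__ import annotations

from typing import Callable

# Decisions exactly as listed in the diagram description.
DIAGRAM_DECISIONS: dict[str, str] = {
    "mountain_range.mountains": "Keep",
    "mountain_range.passes": "Keep",
    "location.contained_by": "Prune",
}


def replay_decision(question: str, relation: str) -> str:
    """Stand-in for an LLM call: returns the decision shown in the diagram."""
    return DIAGRAM_DECISIONS[relation]


def llm_prune(
    question: str,
    relations: list[str],
    decide: Callable[[str, str], str],
) -> tuple[list[str], list[str]]:
    """Split relations into (kept, pruned) using a binary keep/prune decision."""
    kept: list[str] = []
    pruned: list[str] = []
    for relation in relations:
        if decide(question, relation) == "Keep":
            kept.append(relation)
        else:
            pruned.append(relation)
    return kept, pruned


kept, pruned = llm_prune(
    "Where do the Appalachian Mountains run?",
    list(DIAGRAM_DECISIONS),
    replay_decision,
)
print(kept, pruned)
```

The decision is binary, so a relation that is only partly useful has no middle category to fall into: it is either kept or lost.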

#### **Section 3: ARG**

* **Process:** The topic entity "Appalachian Mountains" undergoes a more sophisticated retrieval and assessment process.

* **Retrieved Relations, Assessments, & Results:**

1. `mountain_range.mountains` -> Assessment: `[Unrelevant]` -> Action: "Prune" -> Result: Dashed circle.

2. `mountain.mountain_range` -> Assessment: `[Fully Relevant]` -> Result: **"North America"** in a green box with a green checkmark (Correct Answer).

3. `location.contained_by` -> Assessment: `[Partially Relevant]` -> Result: **"North America"** (Contributes to the correct answer).

4. `mountain_range.passes` -> Assessment: `[Partially Relevant]` -> Reasonableness check: `[Unreasonable]` -> Result: Grey prohibition sign (Discarded as unreasonable).

* **Outcome:** The ARG method successfully identifies the correct answer, "North America," by assessing the relevance of different relations. It uses the `[Fully Relevant]` relation `mountain.mountain_range` and the `[Partially Relevant]` relation `location.contained_by` to arrive at the answer, while pruning irrelevant or unreasonable relations.
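The two-stage filtering described for ARG (a relevance label followed by a reasonableness check) can be sketched as follows. This is an illustration under stated assumptions, not the method's actual implementation: the `AssessedRelation` type and `arg_select` function are hypothetical, the labels are hard-coded from the diagram rather than produced by a model, and `reasonable=None` marks rows for which the description reports no reasonableness label.

```python
from __future__ import annotations

from dataclasses import dataclass
from typing import Optional


@dataclass(frozen=True)
class AssessedRelation:
    relation: str
    relevance: str                     # label as printed in the diagram
    reasonable: Optional[bool] = None  # None: no reasonableness label reported
    result: Optional[str] = None       # text shown in the Result column, if any


def arg_select(rows: list[AssessedRelation]):
    """Split rows into (used, pruned, discarded).

    Stage 1 prunes relations labeled [Unrelevant].
    Stage 2 discards remaining relations flagged as unreasonable.
    """
    used: list[AssessedRelation] = []
    pruned: list[str] = []
    discarded: list[str] = []
    for row in rows:
        if row.relevance == "[Unrelevant]":
            pruned.append(row.relation)
        elif row.reasonable is False:
            discarded.append(row.relation)
        else:
            used.append(row)
    return used, pruned, discarded


# Rows transcribed from the diagram description.
DIAGRAM_ROWS = [
    AssessedRelation("mountain_range.mountains", "[Unrelevant]"),
    AssessedRelation("mountain.mountain_range", "[Fully Relevant]", result="North America"),
    AssessedRelation("location.contained_by", "[Partially Relevant]", result="North America"),
    AssessedRelation("mountain_range.passes", "[Partially Relevant]", reasonable=False),
]

used, pruned, discarded = arg_select(DIAGRAM_ROWS)
answers = {row.result for row in used if row.result is not None}
print(answers)  # {'North America'}
```

Unlike the binary keep/prune split, the graded labels let a `[Partially Relevant]` relation survive the first stage and still be rejected later if it fails the reasonableness check.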

### Key Observations

1. **Methodological Progression:** The diagram shows a progression from a naive retrieval method (Directly Retrieve) to a simple filtering method (LLM Prune) to a more advanced, assessment-based method (ARG).

2. **Critical Relation:** The relation `location.contained_by` is pivotal. It is incorrectly pruned by the LLM Prune method but is correctly identified as `[Partially Relevant]` by the ARG method, contributing to the correct answer.

3. **Assessment Labels:** The ARG method introduces explicit relevance assessments (`[Unrelevant]`, `[Fully Relevant]`, `[Partially Relevant]`) and a reasonableness check (`[Unreasonable]`), which are absent in the other methods.

4. **Visual Coding:** Results are consistently coded: Red X for failure, green checkmark for success, dashed circle for pruned items, and a grey prohibition sign for discarded unreasonable items.
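The observations above can be checked mechanically by encoding the diagram's results as a small lookup table. The sketch below is illustrative only: the `Outcome` enum, the `RESULTS` table, and both helper functions are hypothetical names, and one entry is an assumption, flagged in a comment, because the description gives no icon for that row.

```python
from __future__ import annotations

from enum import Enum


class Outcome(Enum):
    FAILURE = "red X"
    SUCCESS = "green checkmark"
    PRUNED = "dashed circle"
    DISCARDED = "grey prohibition sign"


# Per-method results transcribed from the diagram description.
RESULTS: dict[str, dict[str, Outcome]] = {
    "Directly Retrieve": {
        "mountain_range.mountains": Outcome.FAILURE,
        "river.origin": Outcome.FAILURE,
        "mountain.mountain type": Outcome.FAILURE,
    },
    "LLM Prune": {
        "mountain_range.mountains": Outcome.FAILURE,
        "mountain_range.passes": Outcome.FAILURE,
        "location.contained_by": Outcome.PRUNED,
    },
    "ARG": {
        "mountain_range.mountains": Outcome.PRUNED,
        "mountain.mountain_range": Outcome.SUCCESS,
        # Assumption: no icon is described for this row; it is recorded as
        # SUCCESS because its result is the correct answer "North America".
        "location.contained_by": Outcome.SUCCESS,
        "mountain_range.passes": Outcome.DISCARDED,
    },
}


def fate(relation: str) -> dict[str, Outcome]:
    """Outcome of one relation in every method that retrieved it."""
    return {m: rows[relation] for m, rows in RESULTS.items() if relation in rows}


def methods_with_success() -> list[str]:
    """Methods in which at least one relation led to a correct answer."""
    return [m for m, rows in RESULTS.items() if Outcome.SUCCESS in rows.values()]


print(fate("location.contained_by"))
print(methods_with_success())
```

Querying `location.contained_by` shows the contrast from the second observation directly: the same relation is pruned under LLM Prune and contributes to the answer under ARG.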

### Interpretation

This diagram illustrates a core challenge in knowledge graph question answering: retrieving the correct relational path to answer a natural language query. It argues for the superiority of an ARG-style approach over simpler retrieval or LLM-based pruning.

* **What it demonstrates:** The "Directly Retrieve" method fails because it retrieves relations that are structurally connected but semantically irrelevant to the *question's intent* (e.g., knowing the mountains within a range doesn't tell you where the range runs). The "LLM Prune" method shows a limitation of using an LLM to filter relations without an explicit relevance assessment: it keeps two irrelevant relations and, crucially, prunes a potentially useful one (`location.contained_by`).

* **Why ARG succeeds:** The ARG method's success hinges on its **assessment layer**. It doesn't just retrieve or prune; it evaluates the *relevance* of each relation to the specific question. The relation `mountain.mountain_range` (which likely links individual mountains to their parent range) is assessed as `[Fully Relevant]`, providing a direct path. The relation `location.contained_by` is `[Partially Relevant]`, offering supporting geographical context. By combining these assessed insights, ARG converges on the correct answer, "North America."

* **Underlying Message:** The diagram advocates for AI systems that can perform **semantic reasoning over structural knowledge**. It suggests that effective question answering requires not just accessing data (relations) but understanding their contextual relevance to the query, a task where structured assessment outperforms simple retrieval or generic LLM pruning.
