
## Pie Charts: Comparative Error Analysis of AI Models on a "Search and Read" Task

### Overview

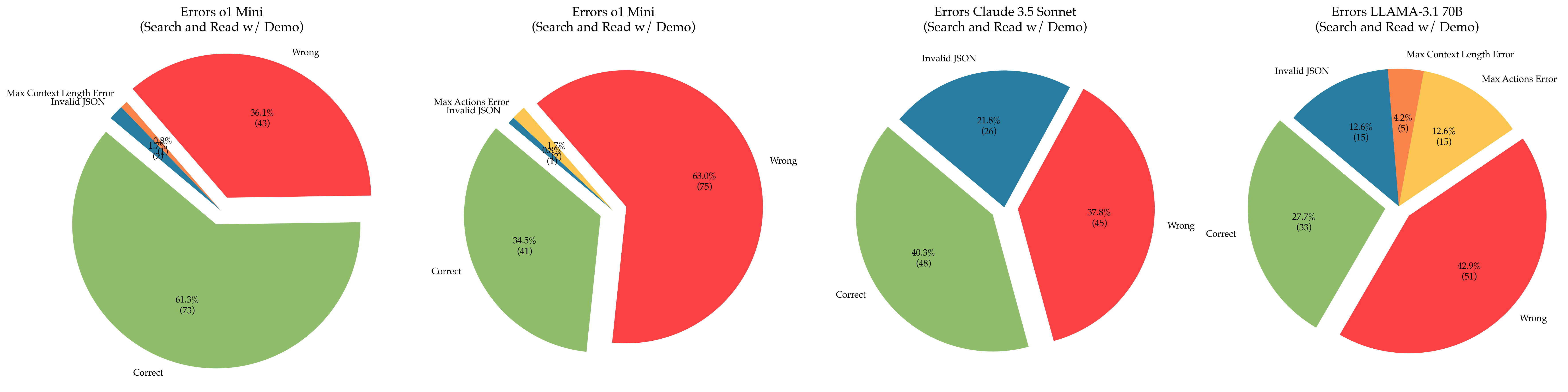

The image displays four pie charts arranged horizontally, each illustrating the distribution of outcomes (correct answers and various error types) for a specific AI model performing a "Search and Read w/ Demo" task. The charts compare the performance of two instances of "o1 Mini", "Claude 3.5 Sonnet", and "LLAMA-3.1 70B". Each chart is titled with the model name and task.

### Components/Axes

* **Chart Titles (Top-Center of each pie):**

1. `Errors o1 Mini (Search and Read w/ Demo)`

2. `Errors o1 Mini (Search and Read w/ Demo)`

3. `Errors Claude 3.5 Sonnet (Search and Read w/ Demo)`

4. `Errors LLAMA-3.1 70B (Search and Read w/ Demo)`

* **Data Categories (Legend/Labels within slices):** The same five categories are used across all charts, color-coded as follows:

* **Correct** (Green slice)

* **Wrong** (Red slice)

* **Invalid JSON** (Blue slice)

* **Max Context Length Error** (Orange slice)

* **Max Actions Error** (Yellow slice)

* **Data Presentation:** Each slice is labeled with its category name, a percentage, and a raw count in parentheses (e.g., `61.3% (73)`). Slices are slightly separated ("exploded") for clarity.

### Detailed Analysis

**Chart 1: Errors o1 Mini (First Instance)**

* **Correct (Green, bottom-left):** 61.3% (73). This is the largest segment.

* **Wrong (Red, top-right):** 36.1% (43). The second-largest segment.

* **Invalid JSON (Blue, thin slice top-left):** 1.7% (2).

* **Max Context Length Error (Orange, very thin slice top-left):** 0.8% (1).

* **Max Actions Error (Yellow, not visibly present):** 0.0% (0). This category is listed in the legend but has no corresponding slice, indicating zero occurrences.

**Chart 2: Errors o1 Mini (Second Instance)**

* **Wrong (Red, right):** 63.0% (75). This is the dominant segment.

* **Correct (Green, left):** 34.5% (41). The second-largest segment.

* **Invalid JSON (Blue, thin slice top-left):** 1.7% (2).

* **Max Actions Error (Yellow, thin slice top-left):** 0.8% (1).

* **Max Context Length Error (Orange, not visibly present):** 0.0% (0). This category is listed but has no slice.

**Chart 3: Errors Claude 3.5 Sonnet**

* **Correct (Green, bottom-left):** 40.3% (48). The largest segment.

* **Wrong (Red, right):** 37.8% (45). Slightly smaller than the "Correct" segment.

* **Invalid JSON (Blue, top):** 21.8% (26). A substantial segment.

* **Max Context Length Error (Orange, not visibly present):** 0.0% (0).

* **Max Actions Error (Yellow, not visibly present):** 0.0% (0).

**Chart 4: Errors LLAMA-3.1 70B**

* **Wrong (Red, bottom-right):** 42.9% (51). The largest segment.

* **Correct (Green, bottom-left):** 27.7% (33). The second-largest segment.

* **Invalid JSON (Blue, top-left):** 12.6% (15).

* **Max Actions Error (Yellow, top-right):** 12.6% (15). Equal in size to the "Invalid JSON" segment.

* **Max Context Length Error (Orange, top-center):** 4.2% (5).
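The percentages above can be cross-checked against the raw counts. The following is a minimal sketch, assuming the counts transcribed from the slice labels are accurate; the run labels `o1 Mini (1)` and `o1 Mini (2)` and the helper name `percentages` are hypothetical, introduced only to tell the two identically titled charts apart.

```python
# Outcome categories in a fixed order, as listed in the chart description.
CATEGORIES = [
    "Correct",
    "Wrong",
    "Invalid JSON",
    "Max Context Length Error",
    "Max Actions Error",
]

# Raw counts transcribed from the slice labels (same order as CATEGORIES).
# The "(1)" / "(2)" suffixes are hypothetical labels for the two o1 Mini charts.
COUNTS = {
    "o1 Mini (1)": [73, 43, 2, 1, 0],
    "o1 Mini (2)": [41, 75, 2, 0, 1],
    "Claude 3.5 Sonnet": [48, 45, 26, 0, 0],
    "LLAMA-3.1 70B": [33, 51, 15, 5, 15],
}


def percentages(counts):
    """Convert raw counts to percentages rounded to one decimal place."""
    total = sum(counts)
    return [round(100 * c / total, 1) for c in counts]


if __name__ == "__main__":
    for model, counts in COUNTS.items():
        print(f"{model} (n={sum(counts)})")
        for name, count, pct in zip(CATEGORIES, counts, percentages(counts)):
            print(f"  {name}: {pct}% ({count})")
```

Under these assumptions every chart sums to the same number of tasks, and the recomputed percentages match the labels reported above.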

### Key Observations

1. **High Variability in o1 Mini:** The two charts for "o1 Mini" show sharply different results. The first instance has a majority "Correct" rate (61.3%), while the second has a majority "Wrong" rate (63.0%) and a "Correct" rate of only 34.5%, a gap of roughly 27 percentage points. The identical titles do not reveal what differs between the two runs, so this may reflect inconsistency in the model's performance or different test conditions.

2. **Model-Specific Error Profiles:**

* **Claude 3.5 Sonnet** has a near-even split between "Correct" (40.3%) and "Wrong" (37.8%) and is notable for the highest rate of "Invalid JSON" errors of any chart (21.8%). Wrong answers remain its most frequent failure, but invalid JSON is its most distinctive one.

* **LLAMA-3.1 70B** has the lowest "Correct" rate (27.7%). Its "Wrong" rate (42.9%) is exceeded only by the second o1 Mini chart (63.0%). It is the only model with a non-zero count in all five outcome categories, including a significant "Max Actions Error" rate (12.6%).

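3. **Error Type Prevalence:** "Invalid JSON" appears in all four charts, but it is marginal for o1 Mini (1.7% in each run) and substantial only for Claude (21.8%) and LLAMA (12.6%). "Max Context Length Error" and "Max Actions Error" are rare overall: each occurs once across the two o1 Mini charts and never for Claude, and both are most prominent in the LLAMA model (4.2% and 12.6%).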

### Interpretation

These charts provide a comparative diagnostic view of how different large language models fail on a specific, likely tool-augmented, task ("Search and Read w/ Demo"). The data suggests:

* **Task Suitability & Reliability:** The stark contrast between the two o1 Mini runs indicates potential reliability issues or high sensitivity to prompt/task variations. Claude 3.5 Sonnet is represented by a single chart, so its run-to-run consistency cannot be judged here; its "Correct" rate (40.3%) falls between the two o1 Mini results, and it shows a clear weakness in output formatting (JSON).

* **Error Nature as a Model Fingerprint:** The distribution of error types acts as a fingerprint for each model's limitations. Apart from wrong answers, Claude's failures are exclusively syntactic ("Invalid JSON"), while LLAMA's failures are more diverse, including both syntactic and resource-limit errors ("Max Actions", "Max Context Length"). This could inform debugging or prompt engineering strategies specific to each model.

* **Performance Benchmarking:** For this specific task, no model achieves a "Correct" rate above ~61%. The highest "Wrong" rate is 63%, indicating the task is challenging for all evaluated models. The presence of system-level errors (Max Context/Actions) in LLAMA suggests it may be less optimized for multi-step, agentic workflows compared to the others.
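The "fingerprint" idea can be made concrete by looking only at the failed tasks and asking what share of them falls into each error type. The sketch below assumes the counts from the slice labels; the function name `failure_shares` and the `(1)` / `(2)` run labels are hypothetical and not taken from the image.

```python
# Failure counts per chart, transcribed from the slice labels.
# "Correct" is included so each dict mirrors one full pie chart.
OUTCOMES = {
    "o1 Mini (1)": {"Correct": 73, "Wrong": 43, "Invalid JSON": 2,
                    "Max Context Length Error": 1, "Max Actions Error": 0},
    "o1 Mini (2)": {"Correct": 41, "Wrong": 75, "Invalid JSON": 2,
                    "Max Context Length Error": 0, "Max Actions Error": 1},
    "Claude 3.5 Sonnet": {"Correct": 48, "Wrong": 45, "Invalid JSON": 26,
                          "Max Context Length Error": 0, "Max Actions Error": 0},
    "LLAMA-3.1 70B": {"Correct": 33, "Wrong": 51, "Invalid JSON": 15,
                      "Max Context Length Error": 5, "Max Actions Error": 15},
}


def failure_shares(outcomes):
    """Return each non-zero error type as a percentage of all failed tasks."""
    failures = {k: v for k, v in outcomes.items() if k != "Correct" and v > 0}
    total = sum(failures.values())
    if total == 0:
        return {}
    return {k: round(100 * v / total, 1) for k, v in failures.items()}


if __name__ == "__main__":
    for model, outcomes in OUTCOMES.items():
        print(model, failure_shares(outcomes))
```

Viewed this way, wrong answers account for the majority of failures in every chart; what distinguishes Claude and LLAMA is how the remaining failures are distributed across formatting and resource-limit errors.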

**Note on Language:** All text in the image is in English.