## Bar Chart: Model Performance Comparison

### Overview

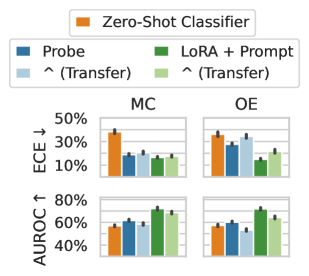

The image is a 2×2 grid of bar charts comparing four methods on two datasets, MC and OE: Zero-Shot Classifier, Probe, Transfer (marked with a caret symbol "^"), and LoRA + Prompt. Performance is reported with two metrics: Expected Calibration Error (ECE) and Area Under the Receiver Operating Characteristic curve (AUROC). Every bar carries an error bar showing the variability of the result.

### Components/Axes

* **X-axis:** Represents the different methods being compared: Zero-Shot Classifier (orange), Probe (blue), Transfer (light blue), and LoRA + Prompt (green).

* **Y-axis (Top Charts):** Expected Calibration Error (ECE) measured in percentage, ranging from 0% to 50%. The arrow indicates that lower values are better.

* **Y-axis (Bottom Charts):** Area Under the Receiver Operating Characteristic curve (AUROC) measured in percentage, ranging from 40% to 80%. The arrow indicates that higher values are better.

* **Datasets:** Two datasets are used for comparison: MC (left column) and OE (right column).

* **Legend:** Located at the top-left of the image, it maps colors to the different methods.

* **Error Bars:** Present on each bar. They indicate variability, but it is not specified whether they show a standard deviation or a confidence interval.
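For readers unfamiliar with the two metrics, the following is a minimal sketch of how ECE and AUROC are commonly computed. It is not taken from the chart's source: the equal-width binning with 10 bins, the function names, and the toy inputs are all assumptions made for illustration, and the values are fractions in [0, 1] that would be multiplied by 100 to match the chart's percentage axes.

```python
from typing import Sequence


def expected_calibration_error(confidences: Sequence[float],
                               correct: Sequence[int],
                               n_bins: int = 10) -> float:
    """ECE with equal-width bins: the size-weighted mean of
    |accuracy - mean confidence| over all non-empty bins."""
    n = len(confidences)
    bins = [[] for _ in range(n_bins)]
    for conf, hit in zip(confidences, correct):
        # A confidence of exactly 1.0 goes into the last bin.
        idx = min(int(conf * n_bins), n_bins - 1)
        bins[idx].append((conf, hit))
    ece = 0.0
    for members in bins:
        if not members:
            continue
        mean_conf = sum(c for c, _ in members) / len(members)
        accuracy = sum(h for _, h in members) / len(members)
        ece += (len(members) / n) * abs(accuracy - mean_conf)
    return ece


def auroc(scores: Sequence[float], labels: Sequence[int]) -> float:
    """Probability that a randomly chosen positive example receives a
    higher score than a randomly chosen negative one (ties count 0.5)."""
    pos = [s for s, y in zip(scores, labels) if y == 1]
    neg = [s for s, y in zip(scores, labels) if y == 0]
    if not pos or not neg:
        raise ValueError("AUROC needs at least one positive and one negative")
    wins = 0.0
    for p in pos:
        for q in neg:
            if p > q:
                wins += 1.0
            elif p == q:
                wins += 0.5
    return wins / (len(pos) * len(neg))


# Hypothetical toy data: four predictions with their confidence and
# whether each prediction turned out to be correct (1) or not (0).
confs = [0.95, 0.85, 0.75, 0.65]
hits = [1, 1, 0, 1]
print(expected_calibration_error(confs, hits), auroc(confs, hits))

# Squashing the confidences keeps their order, so AUROC is unchanged,
# while ECE moves because the probabilities themselves changed.
squashed = [0.5 + 0.1 * c for c in confs]
print(expected_calibration_error(squashed, hits), auroc(squashed, hits))
```

The second call illustrates why the chart reports both metrics: AUROC depends only on how the scores are ranked, whereas ECE depends on their actual values, so a method can improve one without improving the other.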

### Detailed Analysis

**MC Dataset (Left Column)**

* **ECE (Top-Left Chart):**

    * Zero-Shot Classifier: Approximately 36% ± 4%

    * Probe: Approximately 18% ± 3%

    * Transfer: Approximately 15% ± 2%

    * LoRA + Prompt: Approximately 12% ± 2%

    * Trend: The Zero-Shot Classifier has the highest ECE, while LoRA + Prompt has the lowest.

* **AUROC (Bottom-Left Chart):**

    * Zero-Shot Classifier: Approximately 55% ± 3%

    * Probe: Approximately 58% ± 3%

    * Transfer: Approximately 65% ± 3%

    * LoRA + Prompt: Approximately 70% ± 3%

    * Trend: The Zero-Shot Classifier has the lowest AUROC, while LoRA + Prompt has the highest.

**OE Dataset (Right Column)**

* **ECE (Top-Right Chart):**

    * Zero-Shot Classifier: Approximately 33% ± 3%

    * Probe: Approximately 28% ± 3%

    * Transfer: Approximately 22% ± 2%

    * LoRA + Prompt: Approximately 18% ± 2%

    * Trend: As on the MC dataset, the Zero-Shot Classifier has the highest ECE and LoRA + Prompt has the lowest.

* **AUROC (Bottom-Right Chart):**

    * Zero-Shot Classifier: Approximately 53% ± 3%

    * Probe: Approximately 56% ± 3%

    * Transfer: Approximately 64% ± 3%

    * LoRA + Prompt: Approximately 72% ± 3%

    * Trend: Again, the Zero-Shot Classifier has the lowest AUROC and LoRA + Prompt has the highest.

### Key Observations

* LoRA + Prompt consistently outperforms all other methods in both datasets for both ECE and AUROC.

* The Zero-Shot Classifier consistently performs the worst across all metrics and datasets.

* Transfer improves on both the Zero-Shot Classifier and Probe in every panel.

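* The gap between Probe and Transfer is smaller than the gap between the Zero-Shot Classifier and Probe only for ECE on MC (about 3 vs. 18 points). In the other three panels the Probe-to-Transfer gap is the larger one: about 7 vs. 3 points for AUROC on MC, 8 vs. 3 points for AUROC on OE, and 6 vs. 5 points for ECE on OE.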

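* The error bars of the Zero-Shot Classifier and LoRA + Prompt do not overlap in any panel, so the gap between the worst and best methods is clear. Several adjacent pairs do overlap (for example, Zero-Shot Classifier vs. Probe on AUROC in both datasets), and because the meaning of the error bars is not specified, the chart alone does not establish statistical significance.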
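The overlap between error bars can be checked mechanically from the approximate readings listed in the detailed analysis. The sketch below treats each reading as a (mean, half-width) pair in percentage points; the dictionary layout and function names are hypothetical, the numbers are visual estimates rather than exact data, and overlap of error bars is only a rough heuristic, not a formal significance test.

```python
from itertools import combinations

# Approximate (mean, half-width) readings from the chart, in percent.
READINGS = {
    ("MC", "ECE"): {
        "Zero-Shot Classifier": (36, 4), "Probe": (18, 3),
        "Transfer": (15, 2), "LoRA + Prompt": (12, 2),
    },
    ("MC", "AUROC"): {
        "Zero-Shot Classifier": (55, 3), "Probe": (58, 3),
        "Transfer": (65, 3), "LoRA + Prompt": (70, 3),
    },
    ("OE", "ECE"): {
        "Zero-Shot Classifier": (33, 3), "Probe": (28, 3),
        "Transfer": (22, 2), "LoRA + Prompt": (18, 2),
    },
    ("OE", "AUROC"): {
        "Zero-Shot Classifier": (53, 3), "Probe": (56, 3),
        "Transfer": (64, 3), "LoRA + Prompt": (72, 3),
    },
}


def intervals_overlap(a, b):
    """True if mean_a ± half_a and mean_b ± half_b share any point."""
    (mean_a, half_a), (mean_b, half_b) = a, b
    return abs(mean_a - mean_b) <= half_a + half_b


def overlapping_pairs(readings):
    """Map each (dataset, metric) panel to the method pairs whose
    error bars overlap, in the order the methods are listed."""
    result = {}
    for panel, methods in readings.items():
        result[panel] = [
            (m1, m2)
            for m1, m2 in combinations(methods, 2)
            if intervals_overlap(methods[m1], methods[m2])
        ]
    return result


for panel, pairs in overlapping_pairs(READINGS).items():
    print(panel, pairs)
```

With these readings, every overlapping pair consists of methods that are adjacent in the chart's ordering, and the Zero-Shot Classifier never overlaps with LoRA + Prompt.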

### Interpretation

The readings suggest that LoRA + Prompt is the most effective of the four methods at improving both calibration and discrimination on the MC and OE datasets. The Zero-Shot Classifier performs worst in every panel, which points to a need for task-specific adaptation. Transfer improves on both the Zero-Shot Classifier and Probe, consistent with a benefit from reusing previously learned knowledge. That LoRA + Prompt ranks first on both datasets suggests some robustness, although two datasets are a limited basis for claims about generalizability.

The error bars are small relative to the gap between the best and worst methods, so that gap is unlikely to be due to random chance; the same cannot be said for adjacent methods whose error bars overlap. Lower ECE means that predicted probabilities track observed accuracy more closely, and higher AUROC means that the scores separate the two classes better. LoRA + Prompt leads on both, which makes it a promising approach, but in absolute terms an ECE of roughly 12% to 18% and an AUROC of roughly 70% to 72% still leave room for improvement.