
## Grouped Bar Chart: CogGRAG Model Performance Comparison

### Overview

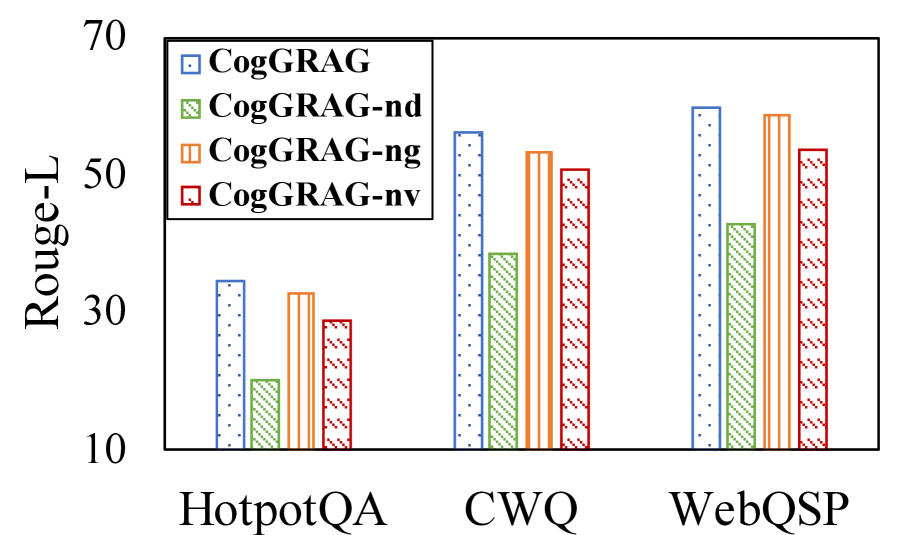

The image is a grouped bar chart comparing the performance of four CogGRAG model variants on three question-answering datasets. The metric is Rouge-L, a common evaluation metric for text generation that scores a generated text against a reference by the length of their longest common subsequence (LCS), combining LCS-based precision and recall into an F-measure. The chart shows the effect of removing specific components (dense retrieval, graph, verification) from the full CogGRAG model; the legend gives only the variant labels, so these expansions of the suffixes are inferred from the names.
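The Rouge-L computation described above can be sketched as follows. This is a minimal illustration, assuming lowercase whitespace tokenization and the F-measure with β = 1 (the usual F1 form); reference implementations such as the `rouge-score` package use their own tokenizer and optional stemming, so their numbers can differ slightly. The chart's values appear to be this score scaled by 100.

```python
def lcs_length(a: list[str], b: list[str]) -> int:
    """Length of the longest common subsequence of two token lists."""
    # Dynamic programming over a (len(a)+1) x (len(b)+1) table, one row at a time.
    prev = [0] * (len(b) + 1)
    for x in a:
        curr = [0] * (len(b) + 1)
        for j, y in enumerate(b, start=1):
            if x == y:
                curr[j] = prev[j - 1] + 1  # extend the LCS of the shorter prefixes
            else:
                curr[j] = max(prev[j], curr[j - 1])  # skip a token on one side
        prev = curr
    return prev[-1]


def rouge_l(candidate: str, reference: str, beta: float = 1.0) -> float:
    """Sentence-level Rouge-L F-measure (assumes lowercase whitespace tokens)."""
    cand = candidate.lower().split()
    ref = reference.lower().split()
    lcs = lcs_length(cand, ref)
    if lcs == 0:
        return 0.0
    precision = lcs / len(cand)  # share of the candidate covered by the LCS
    recall = lcs / len(ref)      # share of the reference covered by the LCS
    return (1 + beta**2) * precision * recall / (recall + beta**2 * precision)


score = rouge_l("the cat sat on the mat", "the cat was on the mat")
print(round(100 * score, 2))  # → 83.33 (LCS of 5 tokens out of 6 on each side)
```

Setting `beta` above 1 weights recall more heavily, which some evaluation setups prefer for answer generation.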

### Components/Axes

* **Chart Type:** Grouped bar chart.
* **Y-Axis:**
    * **Label:** "Rouge-L" (written vertically).
    * **Scale:** Linear, from 10 to 70.
    * **Major Tick Marks:** 10, 30, 50, 70.
* **X-Axis:**
    * **Label:** None explicit; the categories are the dataset names.
    * **Categories (from left to right):** "HotpotQA", "CWQ", "WebQSP".
* **Legend:**
    * **Position:** Top-left corner of the chart area.
    * **Items (with color and fill pattern):**
        1. **CogGRAG:** Blue bar with a dotted pattern.
        2. **CogGRAG-nd:** Green bar with a cross-hatch (X) pattern.
        3. **CogGRAG-ng:** Orange bar with a vertical-line pattern.
        4. **CogGRAG-nv:** Red bar with a diagonal-line pattern (top-left to bottom-right).

### Detailed Analysis

The chart presents the Rouge-L score for each model variant on each dataset. Values are approximate, estimated visually against the y-axis ticks.
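Reading a bar height against the y-axis amounts to linear interpolation between two labelled ticks. A minimal sketch, assuming pixel coordinates have been measured for two tick marks and for the top of a bar; the pixel rows below are hypothetical, chosen only to illustrate the arithmetic, and were not measured from this image.

```python
def pixel_to_value(pixel_y: float,
                   tick_a: tuple[float, float],
                   tick_b: tuple[float, float]) -> float:
    """Convert a vertical pixel position to a value on a linear axis.

    tick_a and tick_b are (pixel_y, axis_value) pairs for two labelled ticks,
    e.g. the "10" and "70" marks on the Rouge-L axis.
    """
    (pa, va), (pb, vb) = tick_a, tick_b
    # Straight-line interpolation; works whether image rows grow up or down.
    return va + (pixel_y - pa) * (vb - va) / (pb - pa)


# Hypothetical calibration: tick "10" at pixel row 400, tick "70" at row 100
# (image rows grow downward). A bar top at row 280 then reads as:
print(pixel_to_value(280, (400, 10), (100, 70)))  # → 34.0
```

Using the two ticks farthest apart (here 10 and 70) keeps small pixel-measurement errors from being magnified.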

**1. HotpotQA Dataset (Leftmost Group):**

* **CogGRAG (Blue, dotted):** ~34

* **CogGRAG-nd (Green, cross-hatch):** ~20

* **CogGRAG-ng (Orange, vertical lines):** ~32

* **CogGRAG-nv (Red, diagonal lines):** ~28

* **Trend within group:** CogGRAG performs best, followed closely by CogGRAG-ng. CogGRAG-nv is somewhat lower, and CogGRAG-nd drops sharply (about 14 points below the full model).

**2. CWQ Dataset (Middle Group):**

* **CogGRAG (Blue, dotted):** ~56

* **CogGRAG-nd (Green, cross-hatch):** ~38

* **CogGRAG-ng (Orange, vertical lines):** ~53

* **CogGRAG-nv (Red, diagonal lines):** ~50

* **Trend within group:** The same performance order is maintained: CogGRAG > CogGRAG-ng > CogGRAG-nv > CogGRAG-nd. All scores are higher than on HotpotQA.

**3. WebQSP Dataset (Rightmost Group):**

* **CogGRAG (Blue, dotted):** ~60

* **CogGRAG-nd (Green, cross-hatch):** ~43

* **CogGRAG-ng (Orange, vertical lines):** ~58

* **CogGRAG-nv (Red, diagonal lines):** ~53

* **Trend within group:** The same pattern holds. CogGRAG and CogGRAG-ng are close at the top, followed by CogGRAG-nv and then CogGRAG-nd. This dataset yields the highest scores overall.

**Overall Trend Across Datasets:**

* Performance (Rouge-L score) increases for all models when moving from HotpotQA to CWQ to WebQSP.

* The relative ranking of the four models is consistent across all three datasets: **CogGRAG (full model) > CogGRAG-ng > CogGRAG-nv > CogGRAG-nd**.
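Both cross-dataset claims can be checked mechanically. A minimal sketch, assuming the approximate values read off the chart in this section (visual estimates, not published numbers); `SCORES` and the helper names are illustrative.

```python
# Approximate Rouge-L values estimated from the chart (not published numbers).
SCORES: dict[str, dict[str, int]] = {
    "HotpotQA": {"CogGRAG": 34, "CogGRAG-nd": 20, "CogGRAG-ng": 32, "CogGRAG-nv": 28},
    "CWQ":      {"CogGRAG": 56, "CogGRAG-nd": 38, "CogGRAG-ng": 53, "CogGRAG-nv": 50},
    "WebQSP":   {"CogGRAG": 60, "CogGRAG-nd": 43, "CogGRAG-ng": 58, "CogGRAG-nv": 53},
}
DATASETS = list(SCORES)  # left-to-right order on the x-axis


def ranking(dataset: str) -> list[str]:
    """Model names sorted from highest to lowest score on one dataset."""
    row = SCORES[dataset]
    return sorted(row, key=row.get, reverse=True)


def ranking_is_consistent() -> bool:
    """True if every dataset produces the same model ordering."""
    return len({tuple(ranking(d)) for d in DATASETS}) == 1


def increases_left_to_right(model: str) -> bool:
    """True if the model's score rises strictly from HotpotQA to CWQ to WebQSP."""
    values = [SCORES[d][model] for d in DATASETS]
    return all(a < b for a, b in zip(values, values[1:]))


print(ranking("CWQ"))  # → ['CogGRAG', 'CogGRAG-ng', 'CogGRAG-nv', 'CogGRAG-nd']
print(ranking_is_consistent())  # → True
print(all(increases_left_to_right(m) for m in SCORES["CWQ"]))  # → True
```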

### Key Observations

1. **Consistent Model Hierarchy:** The full CogGRAG model outperforms all of its ablated variants on every dataset. The variant without dense retrieval (`-nd`) is consistently the worst, trailing the full model by roughly 14 to 18 points.

2. **Impact of Components:** Removing the graph component (`-ng`) costs only about 2 to 3 points relative to the full model. Removing the verification component (`-nv`) causes a consistently larger drop of about 6 to 7 points.

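3. **Dataset Difficulty:** The models score lowest on HotpotQA and highest on WebQSP, suggesting that HotpotQA is the most challenging of the three for these models, or that WebQSP's questions suit them best. Rouge-L values are not strictly comparable across datasets, however, since answer length and style differ between benchmarks.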

4. **Visual Encoding:** The chart uses both distinct colors (blue, green, orange, red) and distinct fill patterns (dots, cross-hatch, vertical lines, diagonal lines) to differentiate the four model series, so the series remain distinguishable even without color, for example in grayscale print.
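The per-component costs in observations 1 and 2 are simple differences from the full model. A minimal sketch, assuming the visually estimated values from the Detailed Analysis (not published numbers); `drops_from_full` is an illustrative helper.

```python
# Approximate Rouge-L values estimated from the chart (not published numbers).
SCORES: dict[str, dict[str, int]] = {
    "HotpotQA": {"CogGRAG": 34, "CogGRAG-nd": 20, "CogGRAG-ng": 32, "CogGRAG-nv": 28},
    "CWQ":      {"CogGRAG": 56, "CogGRAG-nd": 38, "CogGRAG-ng": 53, "CogGRAG-nv": 50},
    "WebQSP":   {"CogGRAG": 60, "CogGRAG-nd": 43, "CogGRAG-ng": 58, "CogGRAG-nv": 53},
}


def drops_from_full(dataset: str) -> dict[str, int]:
    """Rouge-L points lost by each ablated variant relative to the full model."""
    row = SCORES[dataset]
    full = row["CogGRAG"]
    return {name: full - score for name, score in row.items() if name != "CogGRAG"}


for dataset in SCORES:
    print(dataset, drops_from_full(dataset))
# HotpotQA {'CogGRAG-nd': 14, 'CogGRAG-ng': 2, 'CogGRAG-nv': 6}
# CWQ {'CogGRAG-nd': 18, 'CogGRAG-ng': 3, 'CogGRAG-nv': 6}
# WebQSP {'CogGRAG-nd': 17, 'CogGRAG-ng': 2, 'CogGRAG-nv': 7}
```

With estimates read to the nearest point, differences of 1 to 2 points are within reading error; the larger gaps are the robust part of the comparison.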

### Interpretation

This chart presents an **ablation study** of the CogGRAG model. The data strongly suggest that each of the three ablated components contributes positively to performance on complex question-answering tasks: dense retrieval (`-nd`), graph integration (`-ng`), and verification (`-nv`).

* **The most critical component appears to be dense retrieval (`-nd`),** as its removal leads to the most substantial performance degradation across all datasets. This implies that the ability to retrieve relevant passages is foundational to the model's success.

* **Graph integration (`-ng`) and verification (`-nv`) provide secondary but meaningful improvements.** The graph component likely helps with reasoning over structured knowledge, while verification refines the generated answers. Because `-ng` scores higher than `-nv` on every dataset, removing verification hurts more than removing the graph, which suggests that the verification step contributes somewhat more than structured knowledge on these benchmarks.

* The consistent ordering of scores across datasets (HotpotQA < CWQ < WebQSP) may reflect differences in complexity or in the type of reasoning each dataset requires, with WebQSP apparently the most amenable to the CogGRAG architecture.
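The datasets start from different baselines (about 34 on HotpotQA versus about 60 on WebQSP), so the same point drop weighs more on HotpotQA. A minimal sketch that normalizes each drop by the full model's score and averages over the three datasets, assuming the visually estimated values from the Detailed Analysis; `mean_relative_drop` is an illustrative helper, not a metric from the source.

```python
from statistics import mean

# Approximate Rouge-L values estimated from the chart (not published numbers).
SCORES: dict[str, dict[str, int]] = {
    "HotpotQA": {"CogGRAG": 34, "CogGRAG-nd": 20, "CogGRAG-ng": 32, "CogGRAG-nv": 28},
    "CWQ":      {"CogGRAG": 56, "CogGRAG-nd": 38, "CogGRAG-ng": 53, "CogGRAG-nv": 50},
    "WebQSP":   {"CogGRAG": 60, "CogGRAG-nd": 43, "CogGRAG-ng": 58, "CogGRAG-nv": 53},
}


def mean_relative_drop(variant: str) -> float:
    """Average drop versus the full model, as a percentage of the full model's score."""
    return mean(
        100 * (row["CogGRAG"] - row[variant]) / row["CogGRAG"]
        for row in SCORES.values()
    )


variants = ["CogGRAG-nd", "CogGRAG-ng", "CogGRAG-nv"]
for name in sorted(variants, key=mean_relative_drop, reverse=True):
    print(name, round(mean_relative_drop(name), 1))
# CogGRAG-nd 33.9
# CogGRAG-nv 13.3
# CogGRAG-ng 4.9
```

The normalized ordering matches the absolute one, so the component ranking does not hinge on HotpotQA's lower baseline.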

In summary, the chart provides consistent empirical evidence, on the Rouge-L metric, that the full CogGRAG system (dense retrieval, graph-based reasoning, and verification combined) outperforms each of its ablated versions on the evaluated tasks. The ablation study supports the design choice of incorporating all three modules.